I’ve been watching the AI narrative on-chain long enough to separate noise from architecture. Most projects optimize for model performance, partnerships, or token velocity. Mira is doing something structurally different: it treats verification as a base primitive. In my view, that distinction is not cosmetic; it is foundational.

We are entering a phase where AI-generated outputs will influence financial decisions, autonomous systems, content authenticity, and machine-to-machine coordination. In that environment, the question is no longer “Can a model generate?” but “Can the output be proven?” That is where Mira positions itself: not as another inference layer, but as a verification rail for AI.

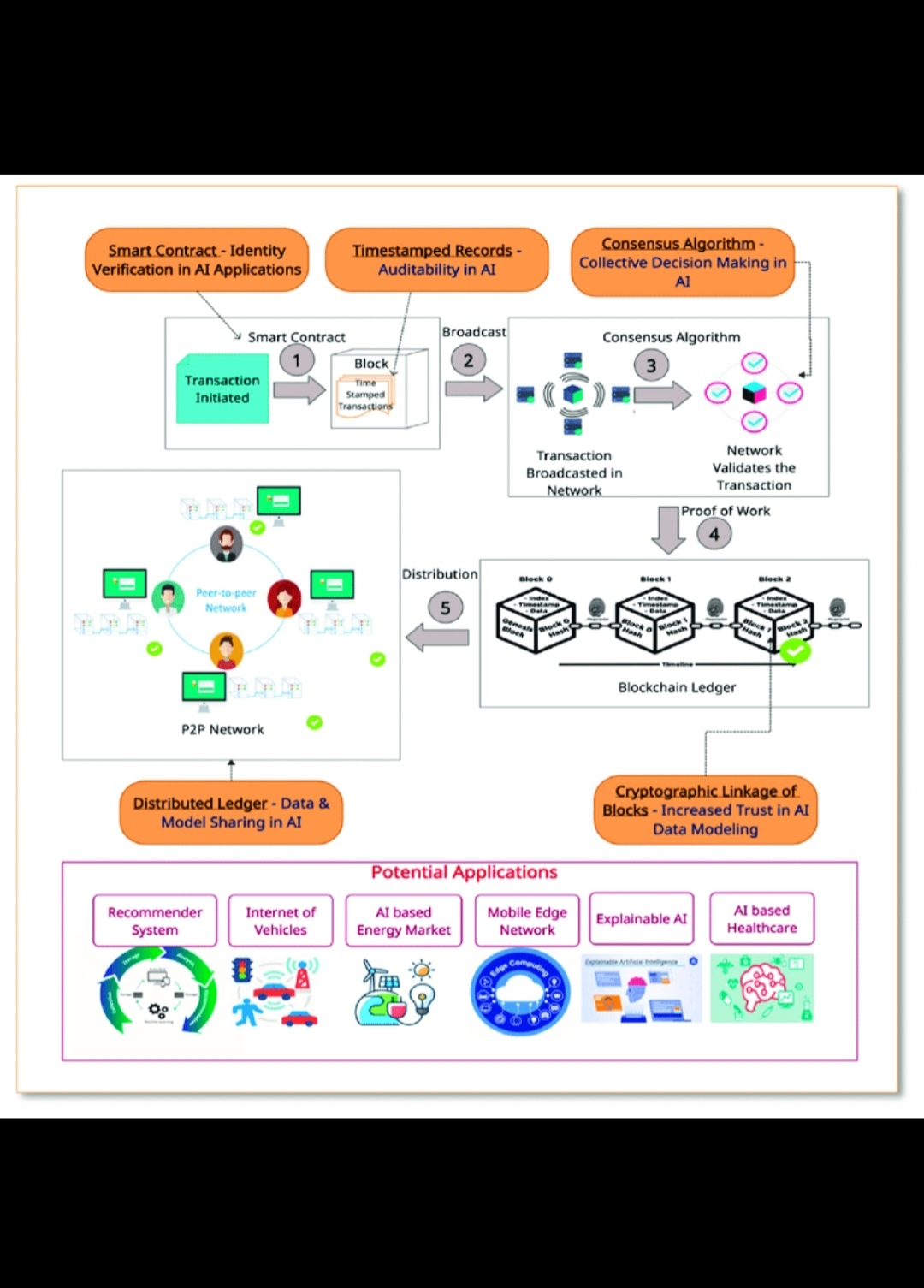

What stands out to me is the architectural discipline. Mira treats AI outputs as objects that must pass through a cryptographic validation layer before they are trusted. Instead of relying on centralized APIs or opaque black-box attestations, the system introduces validator-backed verification flows. This shifts the trust model from institutional reputation to cryptographic and network-based consensus.
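To make that idea concrete, here is a minimal sketch of what a validator-backed verification flow could look like. This is my own illustration, not Mira's actual protocol. The names `Attestation`, `attest`, and `is_verified` are hypothetical, the two-thirds quorum is an assumed threshold, and HMAC with per-validator keys stands in for the real digital signatures a production network would use.

```python
import hashlib
import hmac
from dataclasses import dataclass


@dataclass(frozen=True)
class Attestation:
    validator_id: str
    digest: str  # SHA-256 hex digest of the AI output being attested
    tag: str     # HMAC tag; an assumed stand-in for a real signature


def digest_output(output: str) -> str:
    """Hash the AI output so validators attest to a fixed-size object."""
    return hashlib.sha256(output.encode("utf-8")).hexdigest()


def attest(validator_id: str, key: bytes, output: str) -> Attestation:
    """A validator vouches for an output by tagging its digest."""
    digest = digest_output(output)
    tag = hmac.new(key, digest.encode("utf-8"), hashlib.sha256).hexdigest()
    return Attestation(validator_id, digest, tag)


def is_verified(output, attestations, keys, quorum_num=2, quorum_den=3):
    """Accept the output only if a quorum of known validators attested to it."""
    if not keys:
        return False
    expected = digest_output(output)
    approving = set()  # a set, so duplicate attestations count once
    for att in attestations:
        key = keys.get(att.validator_id)
        if key is None or att.digest != expected:
            continue  # unknown validator, or attestation for another output
        good = hmac.new(key, expected.encode("utf-8"), hashlib.sha256).hexdigest()
        if hmac.compare_digest(good, att.tag):
            approving.add(att.validator_id)
    # Integer comparison avoids floating-point rounding at the threshold.
    return len(approving) * quorum_den >= quorum_num * len(keys)


keys = {"v1": b"key-1", "v2": b"key-2", "v3": b"key-3"}
output = "Model answer: collateral ratio is 150%"
attestations = [attest("v1", keys["v1"], output), attest("v2", keys["v2"], output)]
print(is_verified(output, attestations, keys))
print(is_verified(output + " (edited)", attestations, keys))
```

The point of the sketch is the trust shift: the consumer of the output never asks who produced it, only whether enough independent validators vouched for exactly these bytes.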

From an infrastructure lens, this matters because once AI integrates with DeFi, governance automation, data indexing, and autonomous execution systems, incorrect or manipulated outputs become systemic risks. The cost of unverified AI is not theoretical; it is financial, reputational, and operational.

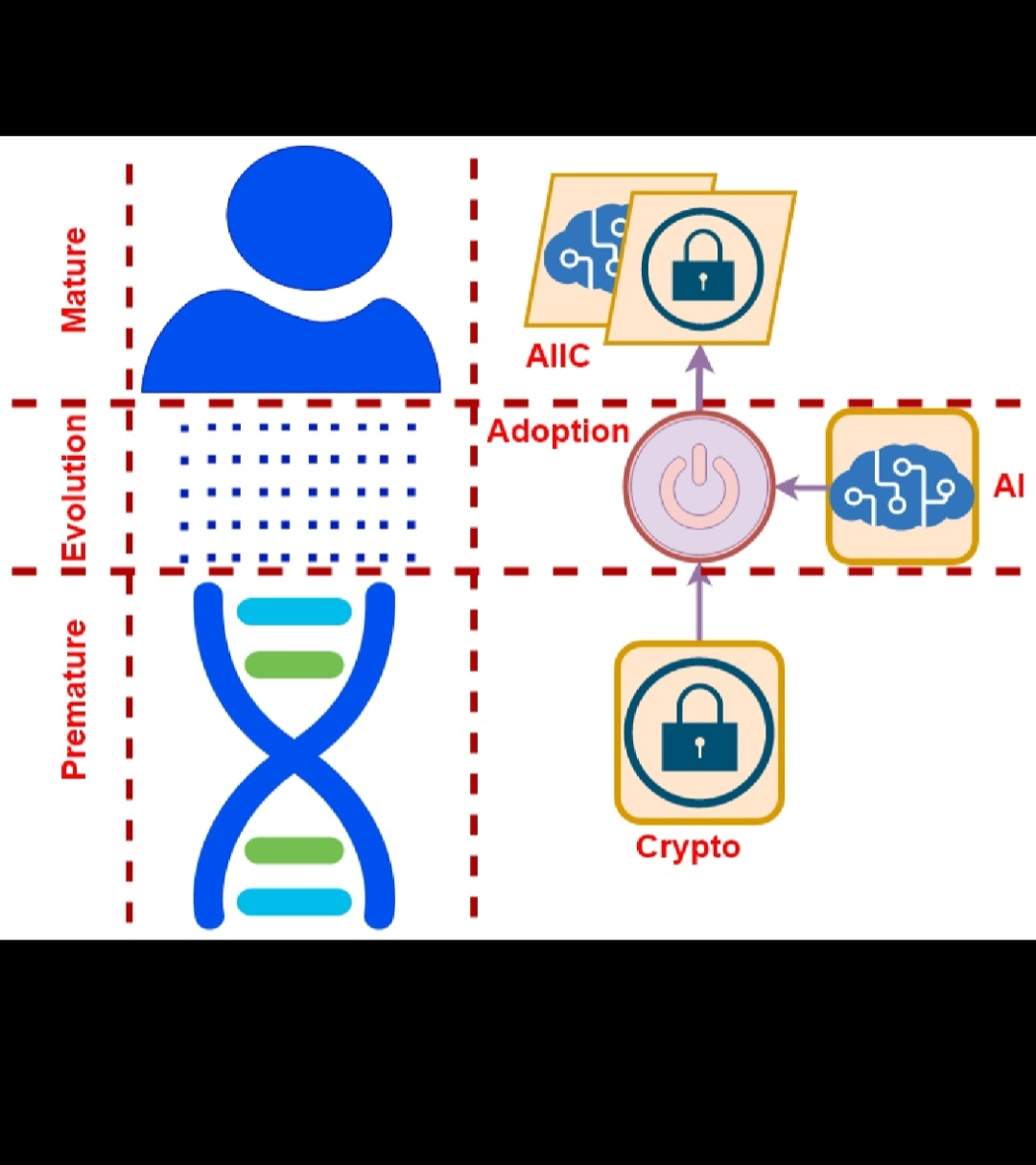

Mira’s approach reframes AI interaction. It doesn’t assume the model is the final authority. It assumes the output must be independently verifiable. That shift moves AI closer to how blockchains treat transactions: don’t trust, verify. To me, that convergence between AI generation and blockchain validation is the core thesis behind $MIRA.

Technically, the direction is clear. Strengthening validator coordination, refining attestation mechanisms, and improving output integrity pipelines are not marketing features — they are survivability features. In a saturated AI-token landscape, infrastructure depth becomes the differentiator. Projects that solve surface-level integrations may capture attention. Projects that solve trust at the protocol layer build staying power.

I also look at incentives. A verification network only works if validators are economically aligned to maintain integrity. That means staking design, slashing logic, and verification rewards must be carefully structured. If the economics are weak, the verification layer becomes decorative. If they are robust, the network becomes self-reinforcing. Mira appears to be building with that long-term equilibrium in mind rather than short-term token reflexivity.
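A toy model helps me reason about how staking, slashing, and rewards interact. The sketch below is purely illustrative and is not Mira's published tokenomics. The function `settle_round`, the stake-weighted majority rule, the 10% slash rate, and the reward pool size are all assumptions chosen for the example.

```python
def settle_round(stakes, votes, reward_pool, slash_bps):
    """Settle one verification round under assumed rules.

    stakes:      validator id -> staked amount (integer token units)
    votes:       validator id -> True (output valid) / False (invalid)
    reward_pool: integer amount split among validators on the winning side
    slash_bps:   penalty for the losing side, in basis points of stake
    """
    yes_stake = sum(stakes[v] for v, vote in votes.items() if vote)
    no_stake = sum(stakes[v] for v, vote in votes.items() if not vote)
    outcome = yes_stake > no_stake  # assumed rule: ties count as rejection

    winners = [v for v, vote in votes.items() if vote == outcome]
    winner_stake = sum(stakes[v] for v in winners)

    new_stakes = dict(stakes)  # validators who did not vote are unchanged
    for v, vote in votes.items():
        if vote == outcome:
            # Reward is pro rata to stake; integer division drops dust.
            if winner_stake > 0:
                new_stakes[v] += reward_pool * stakes[v] // winner_stake
        else:
            new_stakes[v] -= stakes[v] * slash_bps // 10_000
    return outcome, new_stakes


stakes = {"a": 100, "b": 100, "c": 50}
votes = {"a": True, "b": True, "c": False}
outcome, updated = settle_round(stakes, votes, reward_pool=20, slash_bps=1000)
print(outcome, updated)  # True {'a': 110, 'b': 110, 'c': 45}
```

Even in a model this small, the design tension is visible: if `slash_bps` is too low relative to what a validator could gain by approving a bad output, dishonest voting is cheap and the verification layer becomes decorative.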

Another factor I consider is composability. A true verification layer must integrate across ecosystems. It should not depend on a single model provider or a single chain. The more modular and chain-agnostic the verification framework becomes, the more indispensable it grows. From what I observe, Mira’s framing aligns with that multi-environment reality rather than siloed deployment.

The broader implication is simple: AI without verification cannot scale into critical infrastructure. Financial systems demand provability. Autonomous agents require deterministic validation. Enterprises need auditability. If Mira succeeds in embedding verification into the AI execution stack, it occupies a structural position — not a narrative one.

Personally, I’m less interested in short-term volatility and more interested in protocol design choices. Does the architecture reduce systemic trust assumptions? Does it create measurable accountability? Does it transform AI outputs from probabilistic suggestions into verifiable objects? These are the criteria I use. And this is why MIRA remains on my radar.

The market often misprices infrastructure in its early phases because infrastructure is quiet. It does not trend loudly. It integrates gradually. But when the ecosystem matures, infrastructure captures durable value because everything routes through it.

In the long run, AI on-chain will not be defined by which model generates the fastest response. It will be defined by which networks can prove the authenticity, integrity, and origin of those responses. If that becomes the industry standard, then Mira is not competing in the AI race — it is building the checkpoint every AI system must pass through.

That is the strategic difference I see. And that is why I view MIRA not as an AI narrative play, but as a verification infrastructure thesis unfolding in real time.