I first noticed calibration as a cost the week after an incident. Nothing was technically broken. Latency was fine. Throughput was fine. But every integration slowed down.

Not because the network lagged.

Because operators stopped believing “approved” meant the same thing it meant last week.

One team added a two-second hold before the next step could fire. Another added a second sign-off for borderline cases. The workflow still ran.

Autonomy just became cautious.

That’s the part of ROBO I keep coming back to. Not verification itself — the threshold line.

Calibration is where verification turns into permission.

When thresholds move, users don’t see a bug. They feel a rule change.

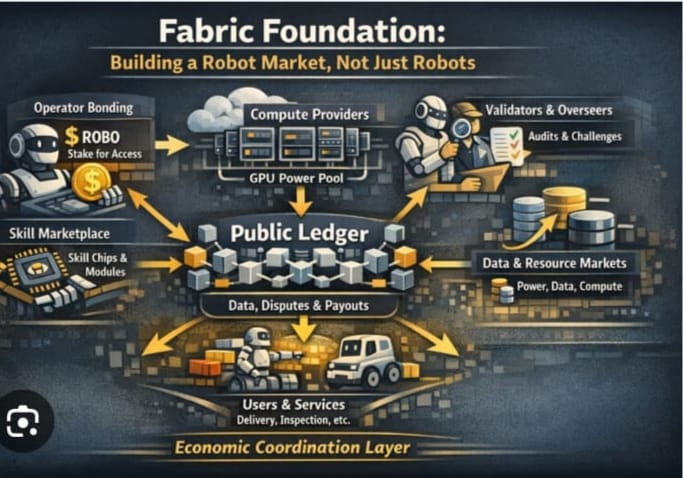

Fabric, supported by the non-profit Fabric Foundation, operates close to safety, policy, and real-world consequences. That makes calibration unavoidable. It also makes calibration a hidden governance surface. A small shift in the acceptance threshold changes who gets through, what gets approved, and how many humans it takes to keep the system usable.

A drifting threshold doesn’t reduce risk. It relocates it.

I look for calibration stress in three places: drift, bias, and posture.

Drift is the first leak. How often does the threshold move, and how legible is the move?

If the same input passes last week and fails this week without a clear changelog, teams start pinning to older versions. Freezing becomes safer than upgrading. That’s when drift turns into an ops tax.
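One way to make "how legible is the move" concrete: replay the same inputs against last week's threshold and this week's, and see which decisions flipped. What follows is a minimal Python sketch under stated assumptions. It assumes each input has a single score and is approved when `score >= threshold`. The `ThresholdVersion` record, its `changelog` field, and the helper names are hypothetical illustrations, not part of ROBO or Fabric.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ThresholdVersion:
    # Hypothetical record: a version tag, the acceptance cutoff, and a changelog note.
    tag: str
    threshold: float
    changelog: str = ""


def approve(score: float, version: ThresholdVersion) -> bool:
    # Assumed rule: an input is approved when its score meets the cutoff.
    return score >= version.threshold


def flipped_decisions(scores: dict[str, float],
                      old: ThresholdVersion,
                      new: ThresholdVersion) -> dict[str, tuple[bool, bool]]:
    # Replay identical inputs under both versions; keep only the decisions that changed.
    flips = {}
    for input_id, score in scores.items():
        before, after = approve(score, old), approve(score, new)
        if before != after:
            flips[input_id] = (before, after)
    return flips


def is_legible(new: ThresholdVersion, flips: dict[str, tuple[bool, bool]]) -> bool:
    # Treat a move as legible if it flips nothing, or if it ships with a changelog note.
    return not flips or bool(new.changelog.strip())


if __name__ == "__main__":
    last_week = ThresholdVersion("v1", 0.70)
    this_week = ThresholdVersion("v2", 0.75)  # moved, but no changelog note
    scores = {"req-a": 0.72, "req-b": 0.90, "req-c": 0.60}
    flips = flipped_decisions(scores, last_week, this_week)
    print(flips)
    print(is_legible(this_week, flips))
```

Here `req-a` passed under `v1` and fails under `v2`, and nothing explains why. That is exactly the situation where a team pins to `v1` instead of upgrading.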

Bias is the second leak. Every system chooses its preferred mistake. Tight thresholds increase false positives. Loose thresholds increase false negatives. What matters is consistency. If bias swings under load, nobody trusts the boundary. Everyone compensates.
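The preferred mistake can be counted rather than argued about. Below is a minimal sketch, assuming labeled cases of `(risk_score, actually_risky)` and a rule that flags a case when its score reaches a cutoff. Under that framing, a tight (lower) cutoff flags more safe cases and produces more false positives, while a loose (higher) cutoff lets more risky cases through and produces more false negatives. The helper names and the `0.1` tolerance are hypothetical. The consistency check simply compares what share of errors are false positives in a normal window versus a window under load.

```python
from typing import Iterable, Tuple

# Hypothetical labeled case: (risk_score, actually_risky).
Case = Tuple[float, bool]


def error_counts(cases: Iterable[Case], cutoff: float) -> Tuple[int, int]:
    # Flag a case when its risk score reaches the cutoff; count both kinds of mistake.
    fp = fn = 0
    for score, risky in cases:
        flagged = score >= cutoff
        if flagged and not risky:
            fp += 1  # safe case flagged
        elif not flagged and risky:
            fn += 1  # risky case let through
    return fp, fn


def fp_share(fp: int, fn: int) -> float:
    # The system's "preferred mistake": fraction of its errors that are false positives.
    total = fp + fn
    return fp / total if total else 0.0


def bias_is_stable(normal: Tuple[int, int],
                   under_load: Tuple[int, int],
                   tolerance: float = 0.1) -> bool:
    # Consistent bias: the error mix barely moves between normal and loaded windows.
    return abs(fp_share(*normal) - fp_share(*under_load)) <= tolerance


if __name__ == "__main__":
    cases = [(0.2, False), (0.4, False), (0.55, True),
             (0.6, False), (0.8, True), (0.9, True)]
    print(error_counts(cases, cutoff=0.3))  # tight
    print(error_counts(cases, cutoff=0.7))  # loose
```

On this toy data, the tight cutoff makes two false positives and no false negatives, and the loose cutoff makes the reverse trade. Neither is wrong on its own. What hurts trust is when the system swings between them depending on load.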

Posture is the third leak. You see it in behavior: rising manual review share, longer hold windows, dashboards watching what used to be automatic. Autonomy isn’t a model property. It’s the moment humans stop compensating.

The simplest test is brutal and boring: after an incident, do holds revert? Does manual review compress? Do thresholds stabilize with transparent versioning? Or do safeguards stick and supervision quietly expand?
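Those questions can be written down as a before/after comparison. This is a minimal sketch, assuming you can snapshot three numbers for a baseline period before the incident and for a period some weeks after it: the share of decisions routed to manual review, the hold window before the next step fires, and the count of threshold moves with no changelog entry. The `OpsSnapshot` fields, the function name, and the 5% `slack` are hypothetical choices for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class OpsSnapshot:
    # Hypothetical per-period metrics; names are illustrative, not from ROBO or Fabric.
    manual_review_share: float         # fraction of decisions routed to a human
    hold_seconds: float                # typical hold before the next step may fire
    undocumented_threshold_moves: int  # threshold changes with no changelog entry


def post_incident_check(before: OpsSnapshot,
                        after: OpsSnapshot,
                        slack: float = 0.05) -> dict[str, bool]:
    # Compare a pre-incident baseline with a later period; slack absorbs small noise.
    return {
        "holds_reverted": after.hold_seconds <= before.hold_seconds * (1 + slack),
        "review_compressed": after.manual_review_share
        <= before.manual_review_share * (1 + slack),
        "thresholds_legible": after.undocumented_threshold_moves == 0,
    }


if __name__ == "__main__":
    baseline = OpsSnapshot(manual_review_share=0.10, hold_seconds=0.0,
                           undocumented_threshold_moves=0)
    weeks_later = OpsSnapshot(manual_review_share=0.18, hold_seconds=2.0,
                              undocumented_threshold_moves=1)
    print(post_incident_check(baseline, weeks_later))
```

If every check stays `False` weeks after the incident, the safeguards stuck and supervision expanded, even though no single dashboard shows a failure.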

If operators have to relearn the line after every shock, autonomy never gets cheaper.

Calibration isn’t glamorous. But it’s where trust either compounds — or slowly hires more humans.