Most people talk about robot intelligence as if bigger is cleaner. One giant model sees, thinks, and moves in one shot. Nice narrative. Easy to sell. Harder to trust. But I keep coming back to the same question: when a machine touches the real world, who checks the gap between what it saw and what it did? That gap is where risk lives.

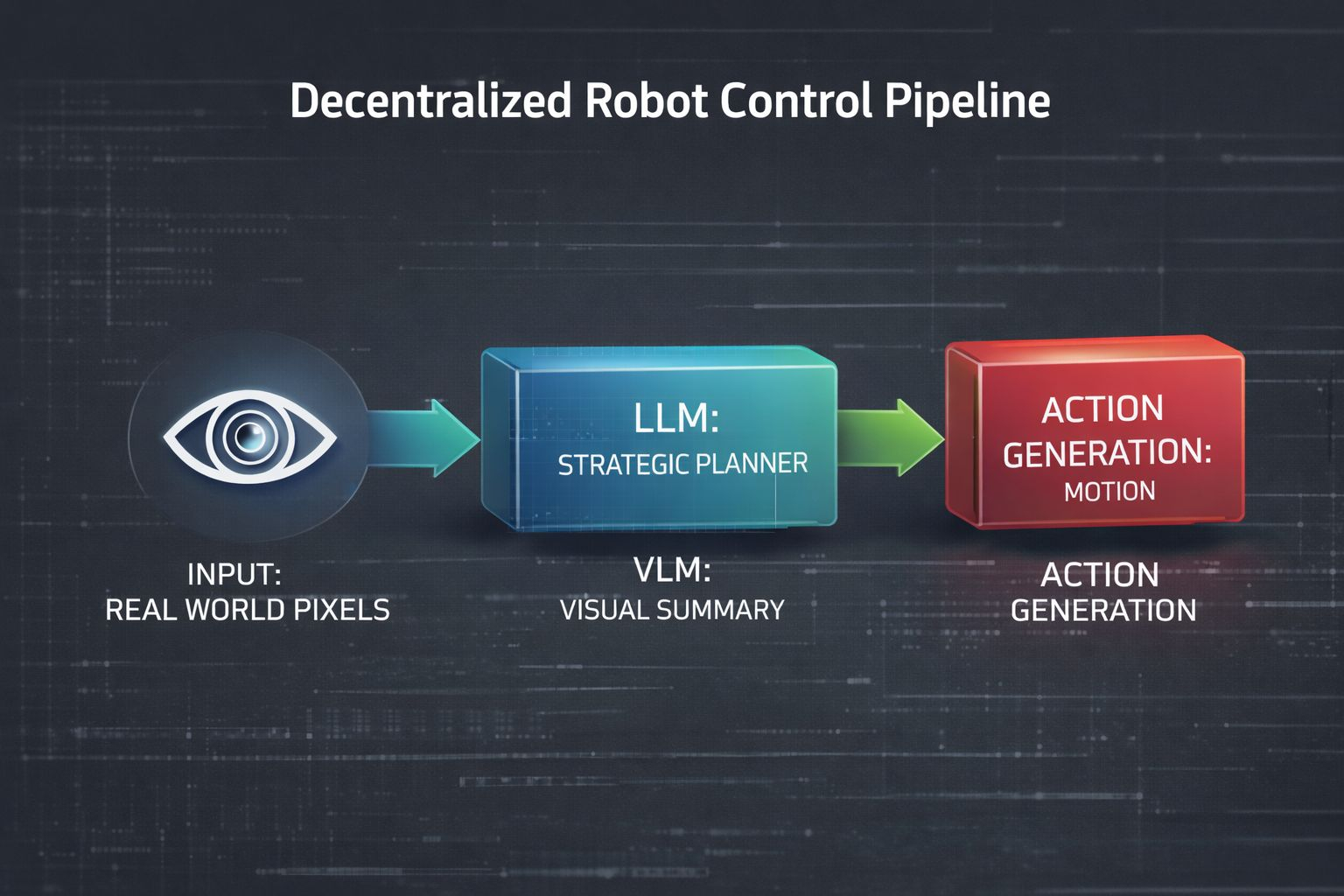

Fabric Foundation's (ROBO) answer, at least in its whitepaper, is not to worship one giant opaque brain. It leans toward a modular chain, something closer to a vision-language model (VLM) feeding a large language model (LLM) feeding action generation, because each link can be inspected, constrained, and swapped when reality gets messy.

That matters more than people think. A VLM is the part that looks at the room and turns pixels into something usable. The LLM is the planner. It takes the scene, the goal, and the rules, then drafts intent. Action generation is the last mile. It turns the plan into movement.

Three jobs. Three checkpoints. Not one fused black box pretending perception, judgment, and motion are all the same thing. That is cleaner infrastructure. It is slower to brag about, maybe. But from a risk management view, separation helps you see which layer failed.

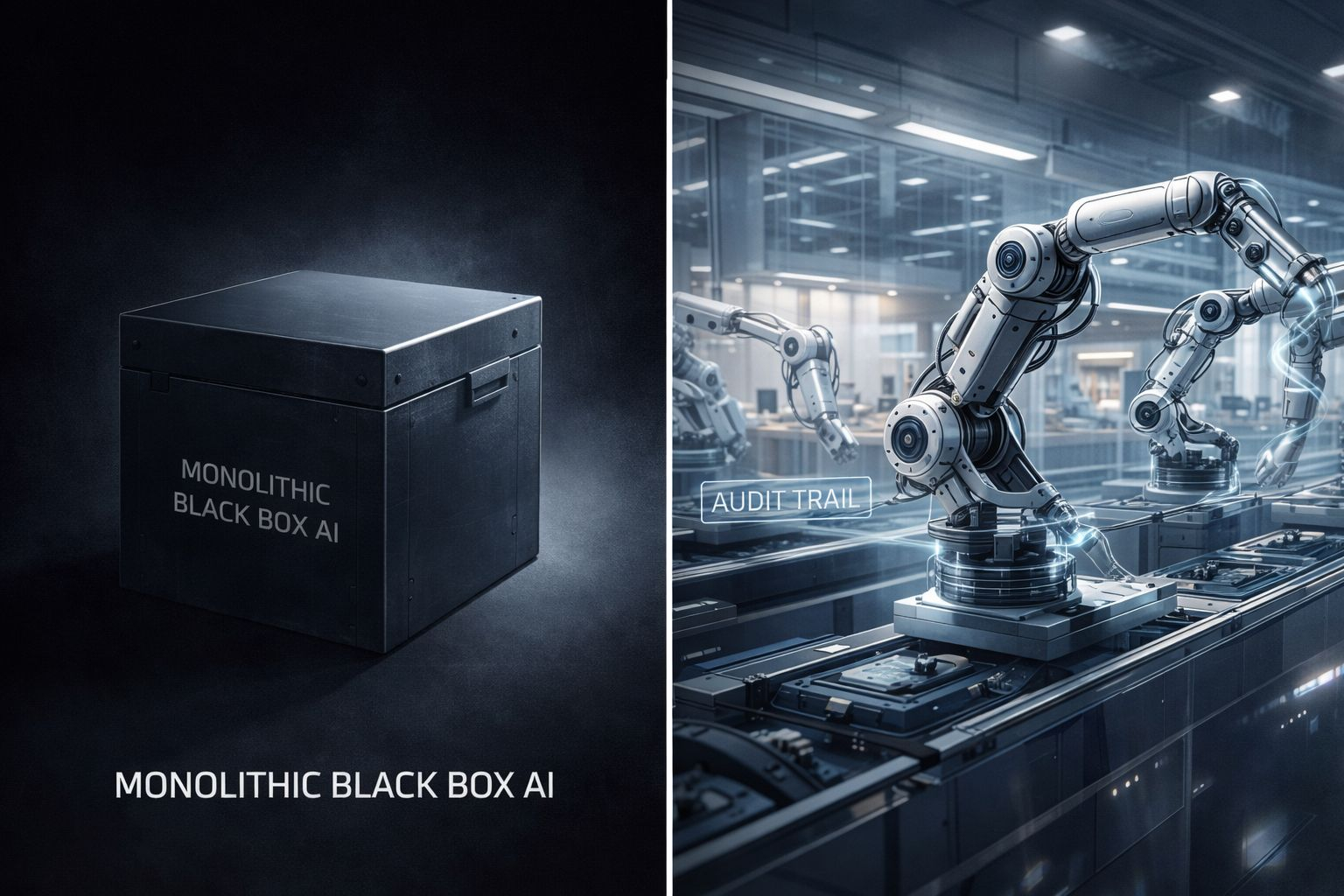

I think Fabric is making a sober call here. The whitepaper says composable modular stacks may be favored over monolithic end-to-end AI or VLA (vision-language-action) systems because arbitrary or malicious behavior can be hidden more easily inside those fused systems. I actually like how blunt that is. No magic. No fake certainty. Just a real admission that opacity is a safety problem, not only a design choice. The project also frames ROBO1 as a cognition stack made of dozens of function-specific modules, with skill chips that can be added or removed. That modularity is not cosmetic. It is governance by architecture.

Think about a hospital. You do not want one person doing triage, diagnosis, surgery, and drug review with no handoff and no audit trail. You want roles. You want notes. You want the nurse to catch what the doctor missed, and the pharmacist to catch what both missed. Not because humans love paperwork. Because when stakes rise, layered review beats blind speed.

A modular robot stack works in a similar way. The perception layer says, "Here is the room." The reasoning layer says, "Here is the plan." The control layer says, "Here is the exact motion." If the robot reaches for the wrong object, you can ask which layer drifted. That is a big deal. That is how postmortems become possible.
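To make the "which layer drifted" idea concrete, here is a minimal Python sketch of a three-stage chain that records what each layer received and what it produced. Every name in it (`run_chain`, `StageRecord`, the three toy functions) is my own placeholder, not something from Fabric's whitepaper, and the layers are stubs rather than real models.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, List, Tuple


@dataclass
class StageRecord:
    """What one layer received and what it handed to the next layer."""
    stage: str
    received: Any
    produced: Any


@dataclass
class Trace:
    """Ordered record of every handoff in one run of the chain."""
    records: List[StageRecord] = field(default_factory=list)


def run_chain(stages: List[Tuple[str, Callable[[Any], Any]]], observation: Any):
    """Run the stages in order and keep a record of each handoff."""
    trace = Trace()
    value = observation
    for name, fn in stages:
        result = fn(value)
        trace.records.append(StageRecord(name, value, result))
        value = result
    return value, trace


# Hypothetical stand-ins for the three layers (assumptions, not real models).
def perceive(pixels):
    # A real VLM would turn camera input into a scene description.
    return {"objects": ["cup", "knife"]}


def plan(scene):
    # A real LLM planner would weigh the goal and the rules.
    target = "cup" if "cup" in scene["objects"] else None
    return {"intent": "grasp", "target": target}


def act(step):
    # A real action model would emit motor commands.
    return f"move_arm_to({step['target']})"


STAGES = [("perception", perceive), ("planning", plan), ("control", act)]

command, trace = run_chain(STAGES, "raw-camera-frame")
for record in trace.records:
    print(f"{record.stage}: {record.received!r} -> {record.produced!r}")
```

If the arm ends up at the wrong object, the trace shows whether perception mislabeled the scene, the planner picked the wrong target, or control mangled a correct plan. A fused model gives you only the first input and the last output.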

I got curious when I saw some traders frame this as purely a scalability issue. I do not buy that. Scalability matters, sure. Reusable modules can cut rebuild costs across tasks and hardware.

Fabric even talks about function-specific modules, open-source alternatives, and a robot skill app store where capabilities can be added or removed more like apps than fixed firmware. But the stronger point is not scale. It is containment. If one layer acts strange, you may patch one layer. If the whole robot is one soup of weights, good luck. You are debugging fog.
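Here is a rough Python sketch of that "patch one layer" idea, treating skills as entries that can be installed, replaced, or removed by name. The `SkillRegistry` class and the skill names are hypothetical illustrations of the app-like model described above. They are not Fabric's actual interface, which I have not seen specified at this level.

```python
from typing import Callable, Dict, List


class SkillRegistry:
    """Hypothetical registry: skills are installed and removed by name."""

    def __init__(self) -> None:
        self._skills: Dict[str, Callable[[str], str]] = {}

    def install(self, name: str, skill: Callable[[str], str]) -> None:
        # Installing under an existing name replaces only that skill.
        self._skills[name] = skill

    def remove(self, name: str) -> None:
        self._skills.pop(name, None)

    def run(self, name: str, argument: str) -> str:
        if name not in self._skills:
            raise KeyError(f"skill {name!r} is not installed")
        return self._skills[name](argument)

    def installed(self) -> List[str]:
        return sorted(self._skills)


registry = SkillRegistry()
registry.install("fold_towel", lambda item: f"folding {item} (v1)")
registry.install("pour_water", lambda item: f"pouring into {item}")

# Patch one misbehaving skill; the other skill is untouched.
registry.install("fold_towel", lambda item: f"folding {item} (v2)")
```

The containment argument is visible in the last line: the fix replaces one entry and nothing else has to be retrained or redeployed.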

Okay, I am not romantic about modular systems either. They come with drag. Latency can stack up. Context can get lost between layers. A VLM summary may miss a detail. The LLM may plan around a bad summary. The action model may execute the plan too literally.

That is the reality. A chain can fail at multiple links. But here is the key difference: those failures are legible. You can log them. Test them. Guardrail them. A modular system may expose more failure points, yet it also exposes more evidence. In robotics, on-chain evidence and standard logs are a lot more useful than vibes after a crash.
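As a small illustration of "log them, guardrail them", here is a Python sketch of a check that sits between the planner and the action layer. The allowlist, the `check_plan` function, and the plan format are all assumptions I made up for the example. It uses only Python's standard `logging` module and says nothing about how Fabric records evidence on-chain.

```python
import logging

logging.basicConfig(level=logging.INFO)
log = logging.getLogger("robot.chain")

# Hypothetical policy: the only objects this robot may grasp.
ALLOWED_TARGETS = {"cup", "plate"}


class GuardrailViolation(Exception):
    """Raised when a plan breaks the policy and must not reach the action layer."""


def check_plan(plan: dict) -> dict:
    """Pass the plan through unchanged if allowed; otherwise log and refuse."""
    target = plan.get("target")
    if target not in ALLOWED_TARGETS:
        log.warning("plan rejected: target=%r is not on the allowlist", target)
        raise GuardrailViolation(f"target {target!r} is not on the allowlist")
    log.info("plan accepted: %r", plan)
    return plan
```

A plan to grasp a cup passes through and gets logged. A plan to grasp a knife is stopped before any motion happens, and the log line says exactly why. That kind of checkpoint only exists if the plan is a separate, readable artifact in the first place.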

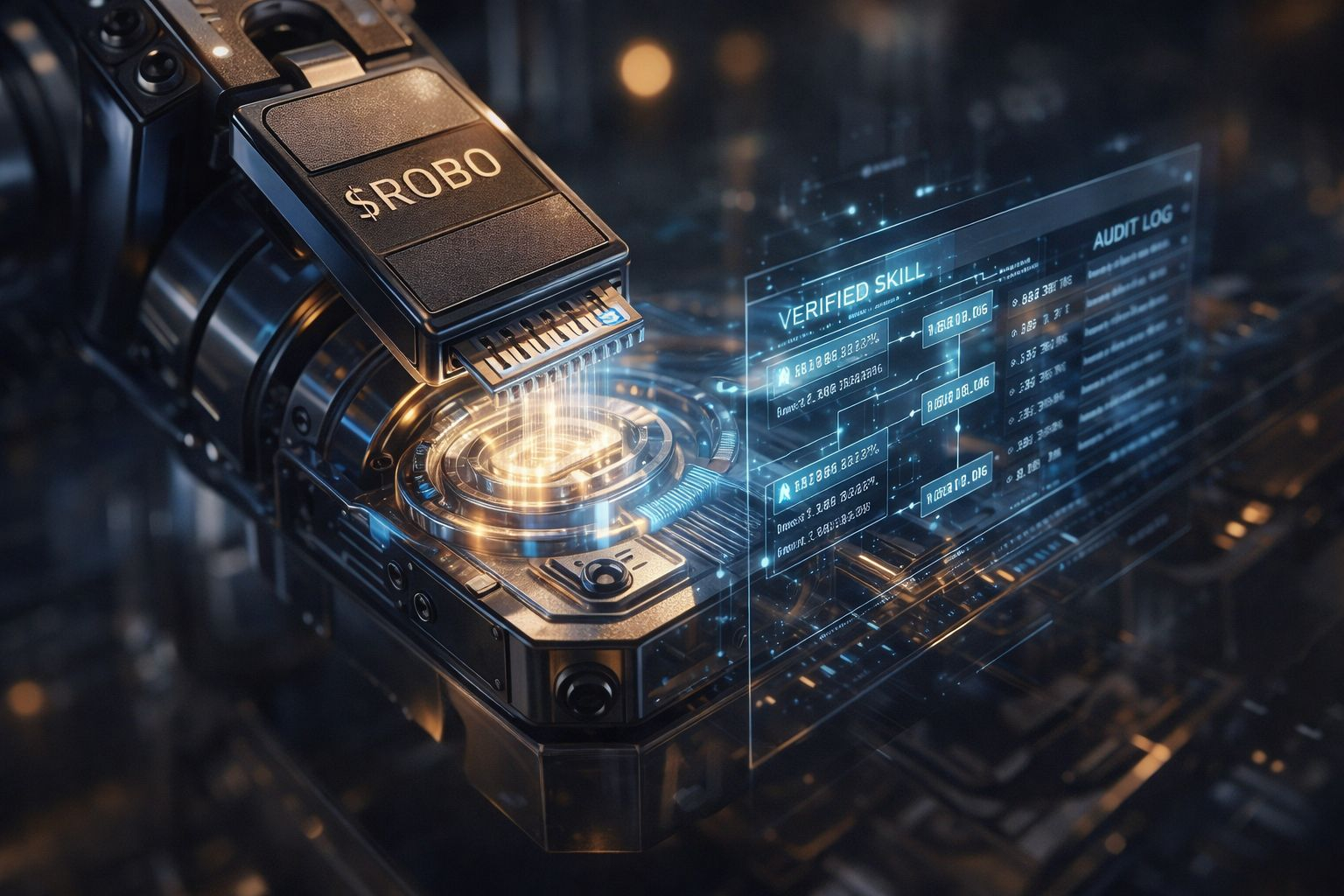

This is where Fabric Foundation's (ROBO) broader worldview shows up. The whitepaper keeps pushing ideas like understandable robots, public oversight, open participation, and open-source alternatives to closed robot ecosystems. Even the token language around ROBO sits inside that frame: network fees, identity, verification, governance, coordination.

I read that less as bullish momentum and more as an attempt to build auditability into the stack around the machine, not only inside the machine. Whether the market rewards that in the near term is a separate question. Liquidity and volatility can drown nuance for months. But architecture still matters after the noise burns off.

There is also a quiet technical advantage hidden in this modular preference. It leaves room for hardware diversity. Fabric mentions support for multiple robot form factors and many hardware platforms through abstraction layers and drivers. That tells me the team is not assuming one perfect body paired with one perfect brain.

Good. Real robot deployment tends to break that fantasy fast. Warehouses, homes, clinics, sidewalks, all punish rigid system design in different ways. A modular software chain can, in theory, travel across those surfaces better than one end-to-end model trained for a narrow lane.

By the way, my personal opinion is boring, and I mean that as praise. When machines move from chat windows into streets, kitchens, and care work, I prefer systems that can be read, questioned, and limited. I do not need a robot stack to look elegant on a slide. I need it to fail in ways humans can trace.

Fabric Foundation's (ROBO) preference for modular chains over monolithic robot intelligence does not remove risk. It may, however, make risk management more honest. And in this sector, honest design is already significant progress. Not financial advice.
