Most people think the dangerous part of robotics is the hardware.

They’re wrong.

The real risk lives in the decision layer. Who controls it. Who approves updates. Who gets blamed when something breaks.

That’s the part nobody wants to sit with.

I’ve watched enough systems under stress (markets, exchanges, validator networks) to know this: when things go wrong, it’s never about features. It’s about authority. It’s about who had the power to decide and who absorbs the fallout.

And robotics is about to scale into shared human space.

“When I am watching, I am paying attention to how the system decides, not just what it does.”

That’s the lens. Always.

Now let’s talk about Fabric Protocol: not as a product, not as a token story, but as infrastructure.

Fabric is building a global open network, backed by the non-profit Fabric Foundation, to coordinate general-purpose robots using verifiable computing and agent-native infrastructure. Translation: they’re trying to put robots on shared rails where behavior, updates, and rules run through a public ledger instead of a single company’s internal server.

That’s bold.

But here’s the real tension: decentralization versus operational discipline.

Robotics doesn’t forgive sloppiness. If a trading engine glitches, you lose money. If a robot glitches, someone might get hurt. That changes the game. You can’t just “iterate fast” in physical space and hope for the best.

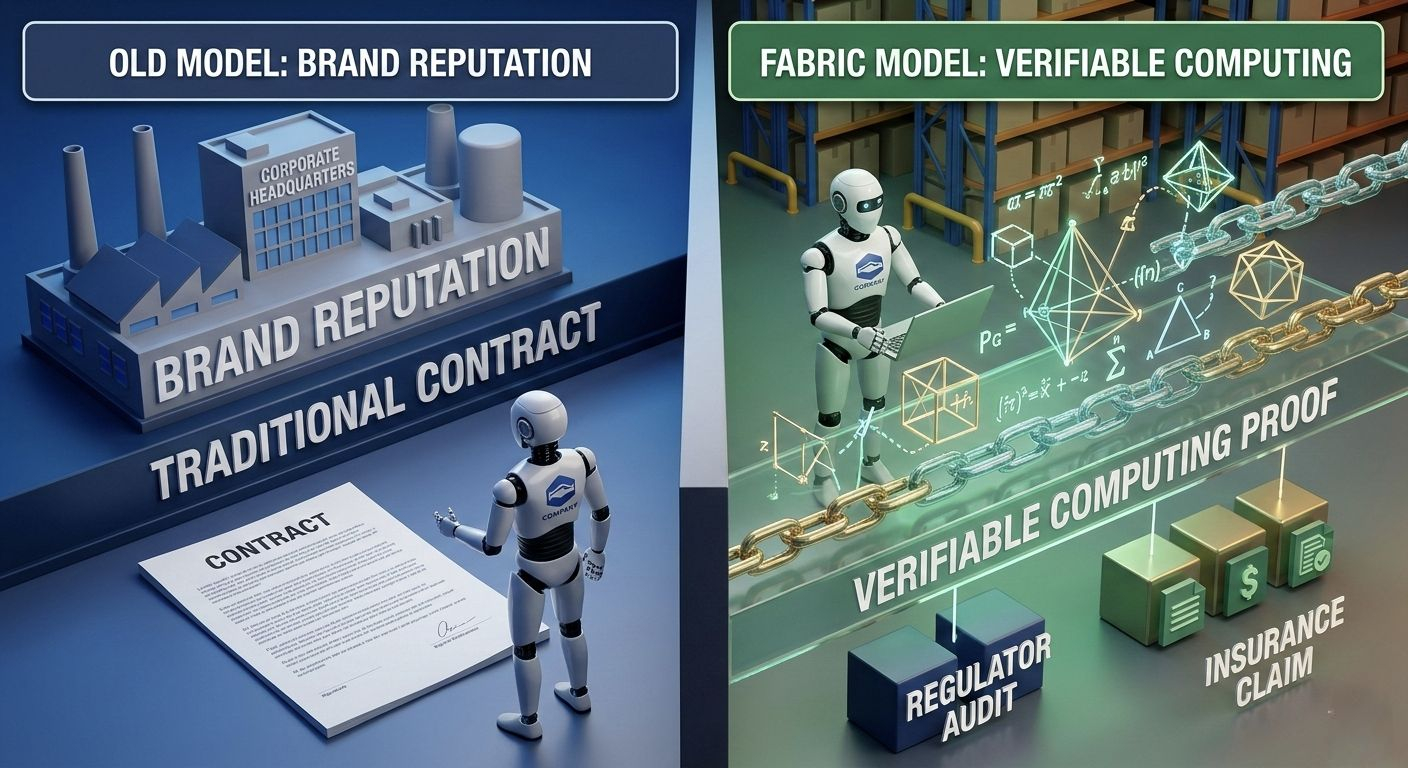

Fabric leans into verifiable computing as its backbone. Robots generate proofs that they executed certain computations correctly. Everything becomes auditable. Traceable. Anchored on-chain.

Sounds clean, right?

Here’s what that actually does. It shifts trust away from brand reputation and toward math. Instead of asking “Do we trust this manufacturer?” you ask “Can they prove what their machine did?” That’s powerful. Regulators love that. Insurers love that.

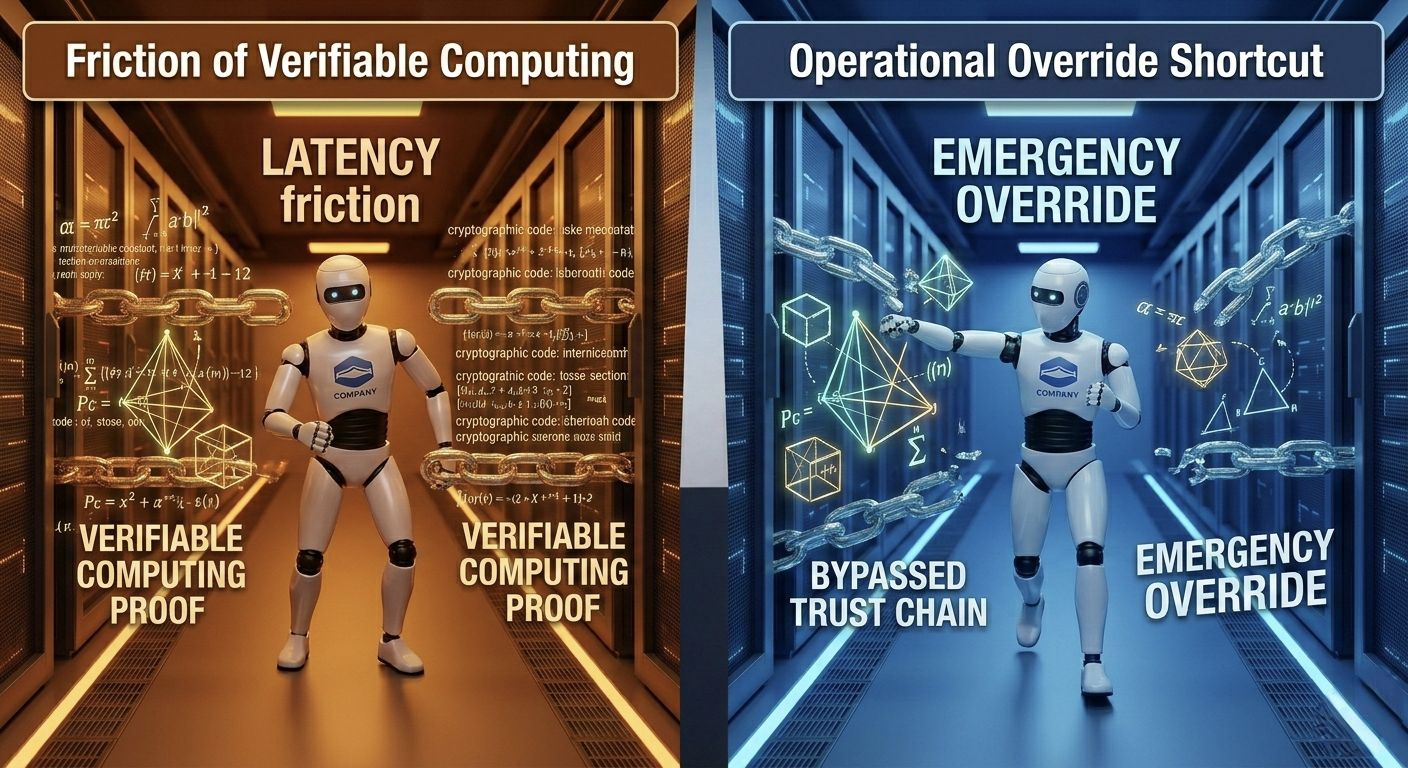

But it also slows things down.

Proofs cost compute. Compute costs time. And time, in robotics, is everything.

Imagine a warehouse robot that has milliseconds to decide whether to stop or swerve. Does it prioritize generating clean verification trails? Or does it move and reconcile later? People don’t talk about this enough. Verification layers add friction. Friction adds latency. Latency compounds.
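Here’s roughly what “move and reconcile later” could look like, as a minimal sketch. Every name in it (`decide`, `control_step`, `reconcile`, the 0.5 m threshold) is hypothetical, not Fabric’s API, and a plain SHA-256 digest stands in for a real proof of correct execution.

```python
import hashlib
import json
import queue
import time

# Hypothetical names throughout; this is an illustration, not Fabric's API.
audit_queue = queue.Queue()

def decide(distance_m, stop_threshold_m=0.5):
    """Safety-critical path: no hashing, no I/O, just the decision."""
    return "stop" if distance_m < stop_threshold_m else "continue"

def control_step(distance_m):
    """Act immediately, then queue a record for later reconciliation."""
    action = decide(distance_m)
    audit_queue.put({"t": time.time(), "distance_m": distance_m, "action": action})
    return action

def reconcile():
    """Off the hot path: turn queued records into hash commitments.
    A SHA-256 digest is a stand-in (assumption) for a real execution proof."""
    digests = []
    while not audit_queue.empty():
        record = audit_queue.get()
        payload = json.dumps(record, sort_keys=True).encode()
        digests.append(hashlib.sha256(payload).hexdigest())
    return digests

actions = [control_step(0.2), control_step(2.0)]
digests = reconcile()
```

The design choice is the whole argument in miniature: the decision never waits on the audit trail, which keeps latency low but means there is a window where the robot has acted and nothing is verified yet.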

Under calm conditions, nobody notices. Under stress? Operators start looking for shortcuts.

I’ve seen this before. When compliance overhead grows faster than performance gains, someone bypasses the system. Always.

So who benefits from verifiable computing? Auditors. Governance participants. Anyone who wants clean accountability. Who absorbs risk? The operators running machines in real environments where milliseconds matter. What fails under stress? Discipline. People optimize for survival.

That trade-off doesn’t disappear. You can’t engineer it away. You manage it.

And that’s just the first pressure point.

The second one gets even messier: governance.

Fabric coordinates data, computation, and regulatory logic through a public ledger. That ledger acts like an authority surface. Participants use the token as coordination infrastructure: staking, signaling, validating updates. Not as a meme coin. Not as hype. As a mechanism to decide who influences system rules.

That changes behavior fast.

People who stake and validate gain governance weight. They vote on updates. They approve modules. They shape how robots evolve collaboratively across the network.
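To make “governance weight” concrete, here’s a minimal sketch of a stake-weighted tally. The participant names, stake amounts, and the simple-majority threshold are all assumptions for illustration; the source doesn’t describe Fabric’s actual voting rules.

```python
def tally(votes, stakes, threshold=0.5):
    """Approve if the 'yes' share of participating stake exceeds the threshold.

    votes:  participant -> True (approve) / False (reject)
    stakes: participant -> staked amount (hypothetical units)
    """
    voting_stake = sum(stakes[p] for p in votes)
    if voting_stake == 0:
        return False
    yes_stake = sum(stakes[p] for p, choice in votes.items() if choice)
    return yes_stake / voting_stake > threshold

# Hypothetical participants: two validators and one operator with a small stake.
stakes = {"validator_a": 600, "validator_b": 300, "operator_c": 100}
votes = {"validator_a": True, "validator_b": False, "operator_c": False}
approved = tally(votes, stakes)
```

In this made-up example the update passes with 600 of 1,000 staked units, even though two of three participants voted against it. Weight follows stake, not headcount.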

But here’s the uncomfortable part.

Those voters usually don’t operate the robots.

If governance approves a new navigation module and that module fails in edge cases, the validator doesn’t stand in a warehouse explaining what happened. The operator does.

Decentralized decision-making spreads authority. Liability doesn’t spread so nicely.

You can’t decentralize a broken arm.

Let’s be real: this is where things get tricky. Token holders want growth. They want adoption. They want throughput and network expansion because that strengthens the coordination layer. Operators want stability. They want predictability. They want fewer surprises.

Those incentives don’t naturally align.

Imagine a critical bug shows up in a widely used control module. Governance needs to vote on a patch. The patch adds safety constraints but reduces performance. Validators might hesitate if they think reduced throughput weakens the network’s competitiveness. Operators? They’ll take the safety hit immediately because they’re the ones exposed.

That gap matters.

Under pressure, operators might fork. They might patch locally. They might step outside governance flow because they can’t wait. And when that happens, cohesion fractures.

Who benefits from open governance? Smaller builders who don’t want to submit to a single corporate authority. They get access to shared infrastructure and rule-setting. That’s real value.

Who absorbs risk? The people deploying physical machines in regulated environments.

What fails under stress? Coordination speed.

Now zoom out.

Fabric pushes modular infrastructure: separate components for data, compute, regulatory logic, and agent behavior. I like modularity. It reduces integration headaches. It allows specialized evolution. But modular systems introduce boundary risks. One module updates. Another doesn’t. Dependencies shift. Suddenly something subtle breaks.
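A boundary risk like that can be sketched as a simple version check. The manifest format and module names below are hypothetical; nothing here reflects how Fabric actually packages modules.

```python
# Hypothetical manifest: each module declares its own version and the
# exact versions of other modules it was built against.
modules = {
    "perception": {"version": 2, "requires": {}},
    "motion_planning": {"version": 1, "requires": {"perception": 1}},
}

def find_mismatches(modules):
    """Return (module, dependency, wanted, actual) for every broken pairing."""
    mismatches = []
    for name, spec in modules.items():
        for dep, wanted in spec["requires"].items():
            actual = modules.get(dep, {}).get("version")
            if actual != wanted:
                mismatches.append((name, dep, wanted, actual))
    return mismatches

mismatches = find_mismatches(modules)
```

Here perception moved to version 2 while motion planning still expects version 1, so the check flags the pair. A check like this only catches declared dependencies, though. The couplings nobody wrote down are the ones that bite.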

Markets do this all the time. Correlations spike. Hidden couplings surface. Nobody planned for that interaction.

Same here.

Change a perception model and you might affect motion planning in ways nobody modeled. Update regulatory constraints on-chain and operators discover new behavioral limits they didn’t expect. The ledger records the update cleanly. Transparency isn’t the problem.

Responsibility is.

Transparency shows you who voted. It doesn’t guarantee they understood second-order effects.

Here’s the question nobody wants to sit with: if decentralized governance approves a behavioral update that leads to systemic physical failure, who stands in court?

Seriously. Who?

The validator with staked tokens? The foundation? The operator? The manufacturer? Physical systems force clarity.

Fabric tries to narrow that gap by anchoring execution in verifiable computing and encoding rule changes transparently. That helps. It really does. You can trace decisions. You can attribute actions. That’s better than opaque black boxes.

But you still have a structural imbalance.

Authority distributes. Liability concentrates.

And that tension doesn’t go away.

It waits.
