Artificial intelligence is moving faster than almost any technology we have seen before. Every week new models appear, new AI agents launch, and new companies start integrating AI into their systems. From finance and healthcare to logistics and robotics, AI is beginning to influence real decisions that affect real people.

But behind all this progress there is a problem that almost everyone in the AI industry quietly admits.

AI can be incredibly powerful, but it is not always reliable.

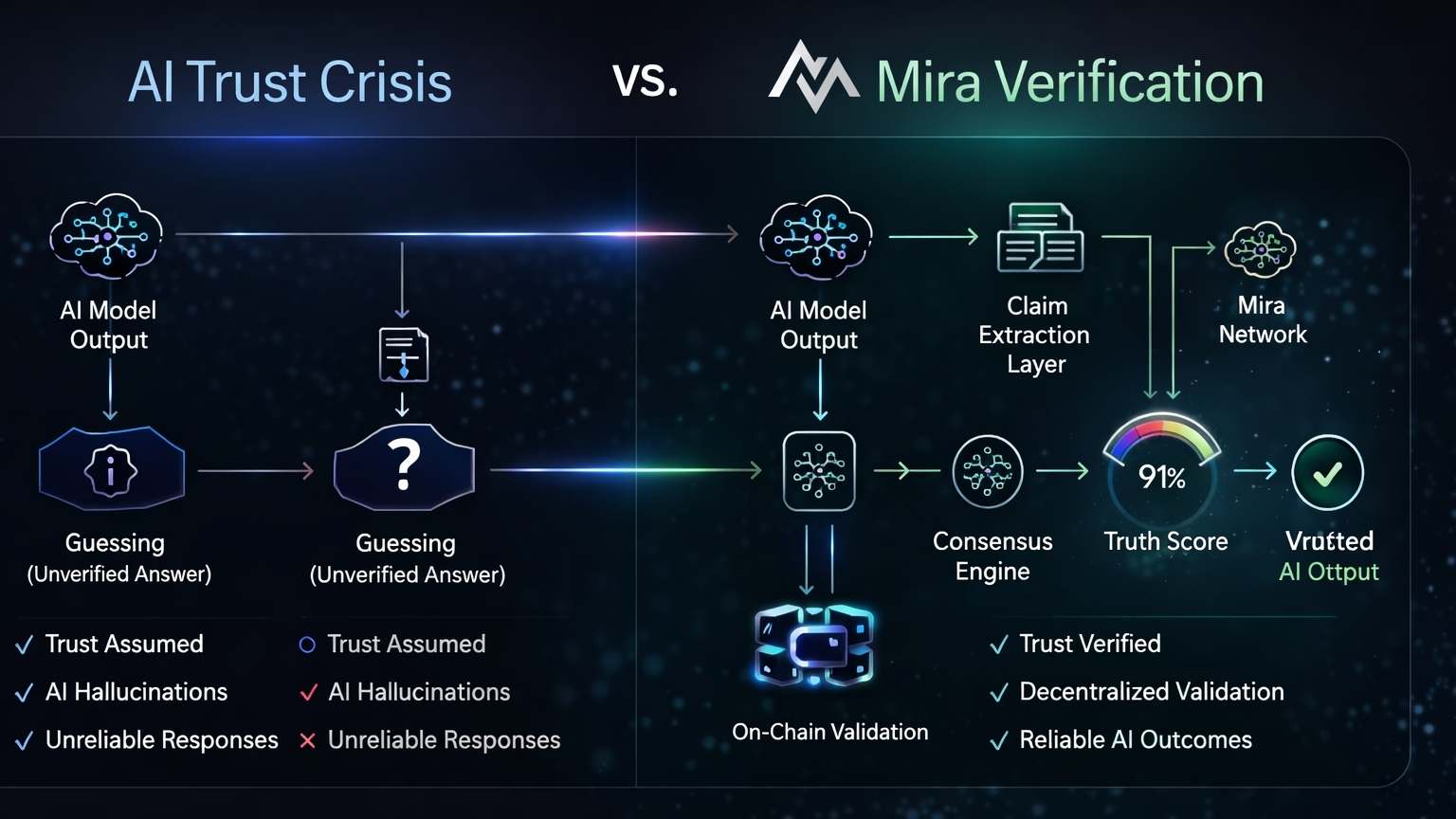

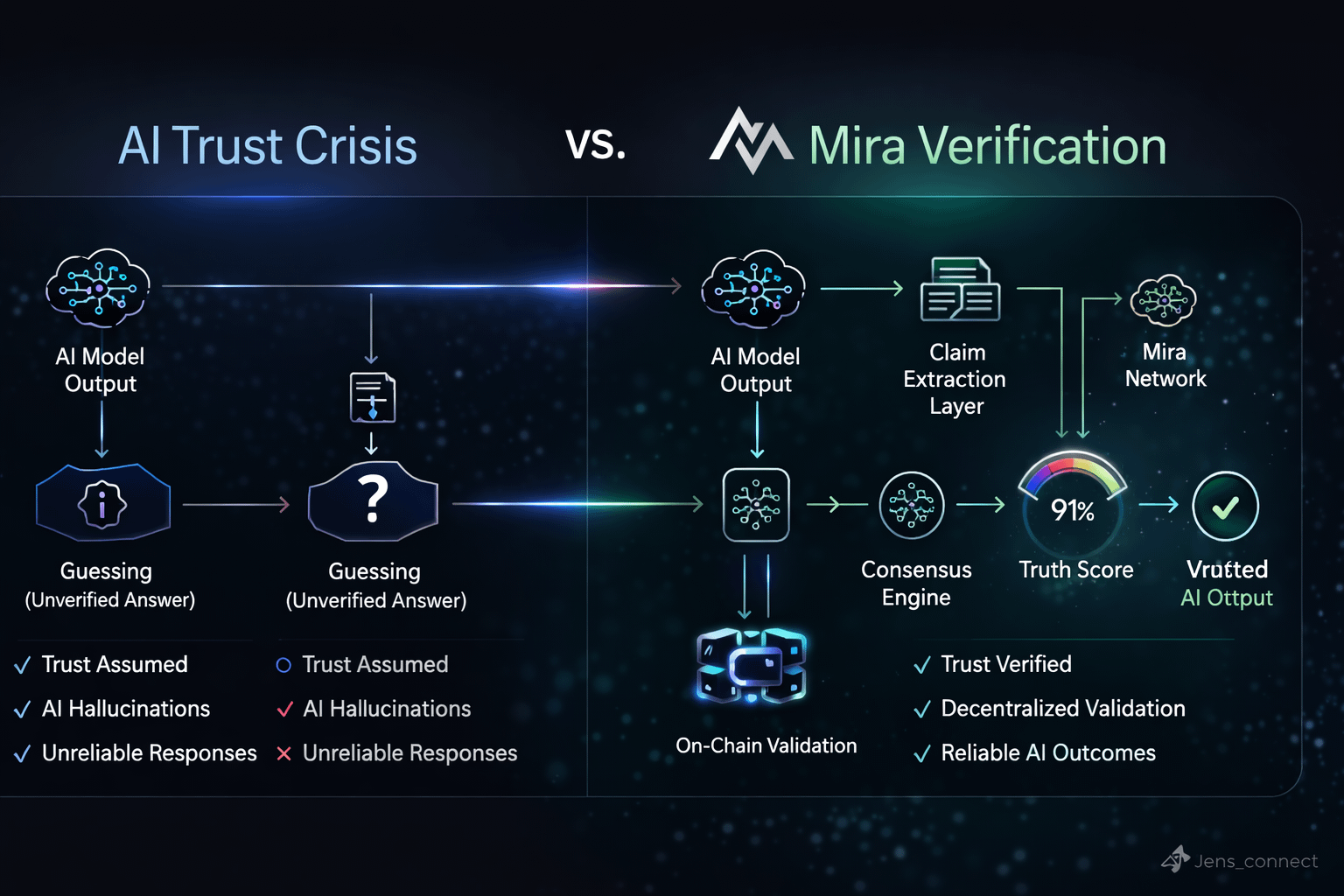

Sometimes it produces answers that look correct but are completely wrong. This phenomenon is often called AI hallucination. The model generates an output confidently even when the information is inaccurate or fabricated. For small tasks this may not matter much. But when AI starts making decisions in financial markets, autonomous systems, medical research or infrastructure, the consequences of wrong information can be serious.

This is the moment when a new idea begins to make sense.

What if AI systems did not have to be trusted blindly?

What if every AI output could actually be verified?

This is the core vision behind Mira Network.

Instead of asking people to simply trust artificial intelligence, Mira is building a system where AI results can be checked, verified and validated through a decentralized network. The goal is not to replace AI models. The goal is to make them accountable.

Think of Mira as a truth layer for artificial intelligence.

When an AI model generates an answer, that output can be extracted as a claim. Instead of assuming the claim is correct, Mira routes it through a verification process where multiple independent AI models analyze the same claim. Each model evaluates the output and compares it with its own reasoning.
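To make the claim-and-route step concrete, here is a minimal Python sketch. It assumes the simplest possible extraction, where the whole output becomes one claim, and it models each verifier as a plain function. `Claim`, `Verdict`, `extract_claim` and `route_to_verifiers` are hypothetical names, not part of any published Mira API. The toy lambdas stand in for independent AI models.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


# Hypothetical types for illustration; Mira's real claim format is not described here.
@dataclass(frozen=True)
class Claim:
    text: str


@dataclass(frozen=True)
class Verdict:
    verifier_id: str
    valid: bool


# A verifier is any function that judges a claim on its own (stand-in for an AI model).
Verifier = Callable[[Claim], bool]


def extract_claim(model_output: str) -> Claim:
    # Simplest possible extraction: treat the whole (trimmed) output as a single claim.
    return Claim(text=model_output.strip())


def route_to_verifiers(claim: Claim, verifiers: Dict[str, Verifier]) -> List[Verdict]:
    # Every verifier receives the same claim and answers independently.
    return [Verdict(vid, bool(judge(claim))) for vid, judge in verifiers.items()]


# Toy verifiers standing in for independent models.
verifiers: Dict[str, Verifier] = {
    "model_a": lambda c: "Paris" in c.text,
    "model_b": lambda c: c.text.endswith("Paris."),
    "model_c": lambda c: "Lyon" in c.text,
}

claim = extract_claim("  The capital of France is Paris.  ")
verdicts = route_to_verifiers(claim, verifiers)
print([(v.verifier_id, v.valid) for v in verdicts])
# [('model_a', True), ('model_b', True), ('model_c', False)]
```

A real system would likely split a long answer into several smaller claims. The single-claim extraction here only keeps the sketch short.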

The network then aggregates these responses and produces what Mira calls a truth score.
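The text does not give the formula behind the truth score. One plausible reading, sketched here purely as an assumption, is the weighted share of verifiers that judged the claim valid. The `truth_score` function and its optional `weights` (which could represent stake or reputation) are hypothetical.

```python
from typing import Dict, Optional


def truth_score(votes: Dict[str, bool], weights: Optional[Dict[str, float]] = None) -> float:
    """Weighted share of verifiers that judged the claim valid, from 0.0 to 1.0.

    Assumption: every verifier counts 1.0 unless a weight is supplied.
    """
    if not votes:
        raise ValueError("no verifier responses to aggregate")
    weights = weights or {}
    total = sum(weights.get(v, 1.0) for v in votes)
    if total <= 0:
        raise ValueError("total verifier weight must be positive")
    agree = sum(weights.get(v, 1.0) for v, ok in votes.items() if ok)
    return agree / total


votes = {"model_a": True, "model_b": True, "model_c": False, "model_d": True}
print(truth_score(votes))                    # 0.75 (3 of 4 agree)
print(truth_score(votes, {"model_c": 3.0}))  # 0.5 (the dissenter carries more weight)
```

The second call shows why weighting matters. The same votes can yield a very different score depending on how much each verifier is trusted.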

If enough independent models agree on the validity of the output, the claim is verified. If there are contradictions or inconsistencies, the system flags the response as unreliable. The final verification result can then be recorded on chain, creating a transparent and immutable record of whether an AI claim was validated or rejected.
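The verify-or-flag decision and the record that could be written on chain might look like the sketch below. The 0.75 threshold is a hypothetical value, because the text only says "enough" models must agree. A SHA-256 digest of a canonical JSON encoding is one common way to produce a compact, tamper-evident fingerprint that a chain could store. How Mira actually formats its on-chain records is not specified here, and `classify` and `record_digest` are illustrative names.

```python
import hashlib
import json
from dataclasses import asdict, dataclass

VERIFY_THRESHOLD = 0.75  # hypothetical cut-off for "enough" agreement


@dataclass(frozen=True)
class VerificationResult:
    claim: str
    truth_score: float
    status: str  # "verified" or "flagged"


def classify(claim: str, score: float, threshold: float = VERIFY_THRESHOLD) -> VerificationResult:
    # At or above the threshold the claim is verified; below it, flagged as unreliable.
    status = "verified" if score >= threshold else "flagged"
    return VerificationResult(claim=claim, truth_score=score, status=status)


def record_digest(result: VerificationResult) -> str:
    # Canonical JSON (sorted keys, no extra spaces) so identical results hash identically.
    payload = json.dumps(asdict(result), sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


ok = classify("Water boils at 100 degrees Celsius at sea level.", 0.9)
bad = classify("The Moon is made of cheese.", 0.2)
print(ok.status, bad.status)  # verified flagged
print(record_digest(ok))      # 64-character hex digest
```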

This process transforms AI outputs from simple responses into verifiable results.

It might sound simple, but the implications are massive.

The global AI market is expanding rapidly. Some industry estimates project that the AI sector could exceed two trillion dollars in value within the next decade. At the same time, organizations are becoming increasingly aware that trust is one of the biggest bottlenecks for AI adoption.

Businesses do not just want powerful AI models.

They want reliable AI systems.

And reliability requires verification.

This is exactly where Mira positions itself. Instead of competing with existing AI companies, the network acts as an infrastructure layer that sits on top of them. Models generate outputs, and Mira verifies them.
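The "sits on top" idea can be illustrated with a wrapper that leaves an existing model untouched and attaches a verification result to each output. `with_verification`, `CheckedOutput` and the arithmetic checker are hypothetical. A single toy checker stands in for the network of independent verifiers.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class CheckedOutput:
    text: str
    verified: bool


def with_verification(
    model: Callable[[str], str],
    verify: Callable[[str, str], bool],
) -> Callable[[str], CheckedOutput]:
    """Wrap an existing model: the model generates, the verification layer checks."""
    def wrapped(prompt: str) -> CheckedOutput:
        output = model(prompt)
        return CheckedOutput(text=output, verified=verify(prompt, output))
    return wrapped


# Toy model with one deliberately wrong answer.
ANSWERS = {"What is 2 + 2?": "4", "What is 3 + 3?": "7"}


def toy_model(prompt: str) -> str:
    return ANSWERS[prompt]


def toy_verifier(prompt: str, output: str) -> bool:
    # Recompute "a + b" from the prompt and compare with the model's answer.
    a, b = prompt[len("What is "):].rstrip("?").split(" + ")
    return int(a) + int(b) == int(output)


checked_model = with_verification(toy_model, toy_verifier)
print(checked_model("What is 2 + 2?"))  # CheckedOutput(text='4', verified=True)
print(checked_model("What is 3 + 3?"))  # CheckedOutput(text='7', verified=False)
```

Because the wrapper only needs a callable, the same layer could sit in front of any model. This mirrors the point that the verification layer does not compete with the models it checks.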

This approach introduces a new type of architecture for artificial intelligence.

Instead of a single model acting as the ultimate authority, multiple models participate in a decentralized verification system. The network effectively creates a consensus mechanism for AI outputs.

If that sounds familiar, it should.

Blockchain networks use consensus to verify transactions. Mira applies a similar philosophy to artificial intelligence. Instead of validating financial transactions, the network validates information and computation.
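Following the blockchain analogy, a minimal decision rule might require a quorum of verifiers plus a supermajority on one side. The quorum of three and the two-thirds threshold are assumptions inspired by the thresholds used in many Byzantine-fault-tolerant protocols, not published Mira parameters. `reach_consensus` and `Outcome` are illustrative names. Anything short of a supermajority is reported as inconclusive rather than forced into a verdict.

```python
from enum import Enum
from typing import Dict


class Outcome(Enum):
    VERIFIED = "verified"
    REJECTED = "rejected"
    INCONCLUSIVE = "inconclusive"


def reach_consensus(votes: Dict[str, bool], quorum: int = 3,
                    supermajority: float = 2 / 3) -> Outcome:
    """Turn independent verifier votes into a single outcome.

    Assumed rule: at least `quorum` votes are required, and one side must hold
    a share of at least `supermajority`; otherwise the result is inconclusive.
    """
    n = len(votes)
    if n < quorum:
        return Outcome.INCONCLUSIVE
    yes = sum(votes.values())  # True counts as 1, False as 0
    if yes / n >= supermajority:
        return Outcome.VERIFIED
    if (n - yes) / n >= supermajority:
        return Outcome.REJECTED
    return Outcome.INCONCLUSIVE


print(reach_consensus({"a": True, "b": True, "c": False}))             # Outcome.VERIFIED
print(reach_consensus({"a": True, "b": False, "c": True, "d": False}))  # Outcome.INCONCLUSIVE
```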

In a world where AI agents are beginning to automate tasks across the internet, this type of verification layer could become essential.

Imagine autonomous AI agents executing financial strategies, negotiating contracts, or managing supply chains. These agents will constantly generate decisions and predictions. Without a verification layer, users must trust each agent blindly.

But with a system like Mira, those decisions could be verified by an independent network before they are executed.
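A pre-execution check of this kind amounts to a gate in front of the agent's action. In this sketch, `verify` is a placeholder for whatever call would reach a verification network, and no real Mira endpoint is assumed. `execute_if_verified`, `UnverifiedActionError` and the rebalancing action are hypothetical examples.

```python
from typing import Callable, List


class UnverifiedActionError(Exception):
    """Raised when an agent tries to act on a claim that was not verified."""


def execute_if_verified(action: Callable[[], str], claim: str,
                        verify: Callable[[str], bool]) -> str:
    # Gate: run the agent's action only after the independent check passes.
    if not verify(claim):
        raise UnverifiedActionError(f"blocked: {claim!r} was not verified")
    return action()


executed: List[str] = []


def rebalance() -> str:
    executed.append("rebalance")
    return "order sent"


# Stand-in for the verification network: a fixed set of approved claims.
APPROVED = {"Portfolio drift exceeds 5%"}


def toy_verify(claim: str) -> bool:
    return claim in APPROVED


result = execute_if_verified(rebalance, "Portfolio drift exceeds 5%", toy_verify)

blocked = False
try:
    execute_if_verified(rebalance, "Token X will double tomorrow", toy_verify)
except UnverifiedActionError:
    blocked = True

print(result, executed, blocked)  # order sent ['rebalance'] True
```

The action runs only once. The unverified claim never reaches `rebalance`.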

This transforms AI from a black box into something more transparent.

And transparency builds trust.

Another interesting aspect of Mira’s architecture is how it aligns incentives across participants. Verifiers in the network contribute computational resources to analyze AI claims. In return, they are rewarded through the ecosystem’s token incentives.

This creates an economic layer around verification. Participants are incentivized to perform accurate evaluations because incorrect validation could damage their reputation and future rewards within the network.
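One way to picture this incentive loop is a settlement step after each round. Verifiers who voted with the final consensus gain reputation, and those who voted against it lose some. The `settle_round` function, the reward of 1.0 and the penalty of 2.0 are hypothetical illustrations, not Mira's actual token economics.

```python
from typing import Dict


def settle_round(votes: Dict[str, bool], consensus: bool,
                 reputation: Dict[str, float],
                 reward: float = 1.0, penalty: float = 2.0) -> Dict[str, float]:
    """Return updated reputation scores after one verification round.

    Hypothetical rule: verifiers who voted with the consensus gain `reward`;
    those who voted against it lose `penalty`, floored at zero.
    The input mapping is not modified.
    """
    updated = dict(reputation)
    for verifier, vote in votes.items():
        current = updated.get(verifier, 0.0)
        if vote == consensus:
            updated[verifier] = current + reward
        else:
            updated[verifier] = max(0.0, current - penalty)
    return updated


reputation = {"a": 5.0, "b": 5.0, "c": 1.0}
votes = {"a": True, "b": True, "c": False}
new_rep = settle_round(votes, consensus=True, reputation=reputation)
print(new_rep)  # {'a': 6.0, 'b': 6.0, 'c': 0.0}
```

Making the penalty larger than the reward means a verifier who guesses at random on yes/no claims loses reputation on average: 0.5 × 1.0 − 0.5 × 2.0 = −0.5 per round, ignoring the zero floor.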

Over time this could evolve into a marketplace for AI truth verification, where developers, enterprises and autonomous systems all rely on decentralized validators to confirm the reliability of machine intelligence.

The idea might sound futuristic, but the need for such infrastructure is becoming clearer every day.

AI is rapidly moving toward a world of autonomous agents. These agents will communicate with each other, make decisions and coordinate complex tasks. In that environment, verification becomes more important than raw intelligence.

A powerful AI that cannot be trusted is a liability.

A verified AI system becomes infrastructure.

This is the shift Mira is trying to accelerate.

By combining artificial intelligence with decentralized verification, the network introduces a model where trust is not assumed. It is measured.

Every AI output becomes a claim.

Every claim can be checked.

Every verified result becomes part of a transparent system that anyone can audit.
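The idea that "anyone can audit" can be illustrated with a hash-chained, append-only log. Each entry commits to the hash of the entry before it, so editing any past record is detected when the chain is recomputed. This is a plain-Python stand-in for on-chain storage, not a blockchain. `append_record` and `audit` are hypothetical helpers.

```python
import hashlib
import json
from typing import Any, Dict, List

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def entry_hash(prev_hash: str, record: Dict[str, Any]) -> str:
    # Each hash covers the record and the previous hash, linking the entries.
    payload = json.dumps({"prev": prev_hash, "record": record}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_record(log: List[Dict[str, Any]], record: Dict[str, Any]) -> None:
    prev = log[-1]["hash"] if log else GENESIS
    log.append({"record": record, "prev": prev, "hash": entry_hash(prev, record)})


def audit(log: List[Dict[str, Any]]) -> bool:
    """Recompute every link from the start; any edited or reordered entry fails."""
    prev = GENESIS
    for entry in log:
        if entry["prev"] != prev or entry["hash"] != entry_hash(prev, entry["record"]):
            return False
        prev = entry["hash"]
    return True


log: List[Dict[str, Any]] = []
append_record(log, {"claim": "Claim A", "status": "verified"})
append_record(log, {"claim": "Claim B", "status": "flagged"})
ok_before = audit(log)

log[0]["record"]["status"] = "flagged"  # simulate someone rewriting history
ok_after = audit(log)
print(ok_before, ok_after)  # True False
```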

In a way, Mira represents the moment when artificial intelligence stops being treated as an oracle and starts being treated as something that must prove its answers.

The AI revolution is not slowing down. If anything, it is just beginning. But as AI systems move deeper into real-world decision-making, the demand for reliability will grow even faster than the technology itself.

Infrastructure that verifies intelligence could become just as important as the intelligence being verified.

That is the space Mira is stepping into.

And if the vision works, the future of AI may not just be about smarter machines.

It may be about machines that can finally prove they are right.