It’s easy to talk about robots as if they were just tools: clever machines delivering packages, inspecting warehouses, assisting in hospitals. But the moment they begin making decisions, earning income, and coordinating through blockchain networks, they stop being machines in the background. They become participants in our shared world. And whenever new participants enter the economy, the oldest human question returns: who holds the power?

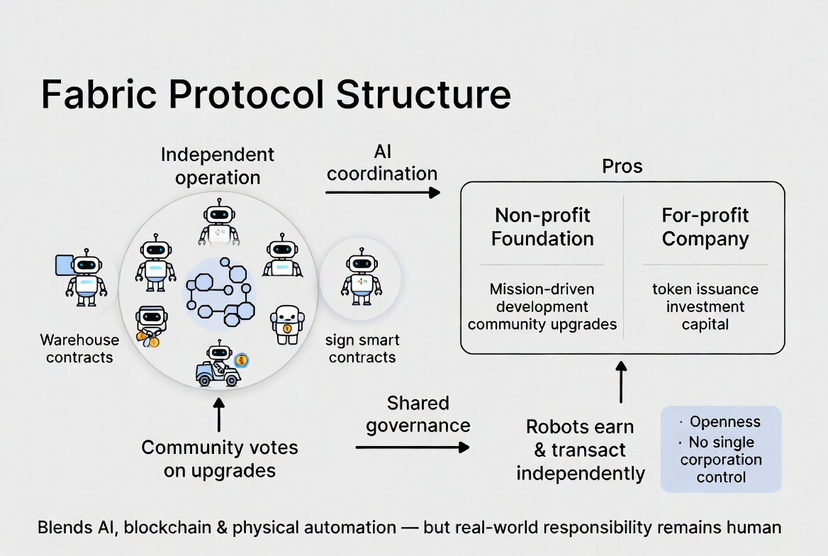

Fabric Protocol presents itself as a decentralized network where robots can operate, transact, and identify themselves independently. It blends AI, blockchain, and physical automation into a system that promises openness and shared governance. On paper, it sounds empowering — no single corporation controls the system, the community votes on upgrades, and a foundation oversees development. But behind every protocol is a structure, and behind every structure are incentives.

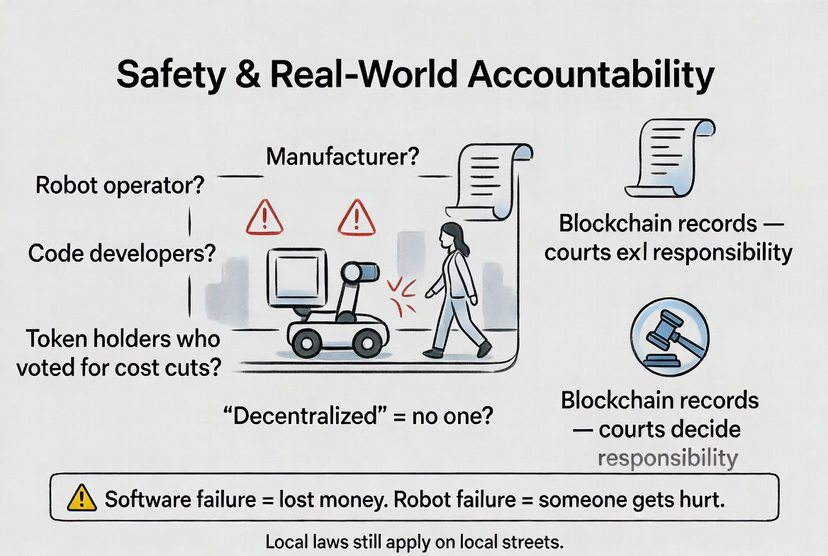

Fabric’s model splits responsibility between a non-profit foundation and a for-profit company that issues the token and raises investment capital. That arrangement isn’t unusual in crypto. It allows mission-driven development on one side and financial backing on the other. But robotics makes this balance far more delicate. When software fails in decentralized finance, money is lost. When robots fail, someone can get hurt.

If a delivery robot injures a pedestrian, responsibility cannot be shrugged off as “decentralized.” Is it the manufacturer’s fault? The operator’s? The developers who wrote the update? The token holders who voted to reduce costs? The blockchain may record what happened, but it does not answer who should stand in court. Real-world machines demand real-world accountability.

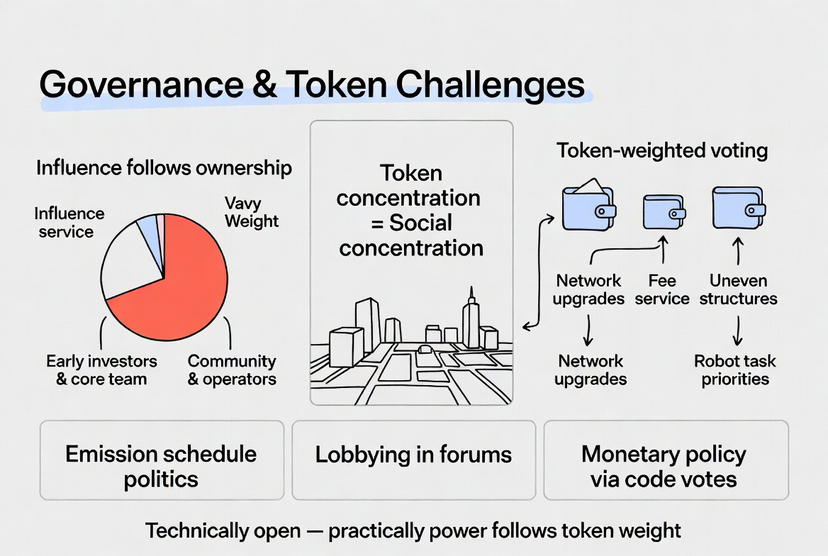

Then there’s the quieter issue of token distribution. In many blockchain systems, early investors and core teams hold a large share of governance tokens. That means their votes carry more weight. Technically, everyone can participate. Practically, influence follows ownership. If major decisions about network upgrades, fee structures, or robot task priorities depend on token-weighted voting, then those with the most tokens shape the direction of the robot economy.
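The gap between participation and influence can be made concrete with a small tally. This is a minimal sketch under stated assumptions: the holder names and balances are hypothetical and are not Fabric's actual distribution, and `yes_share` is an illustrative helper rather than any protocol's API.

```python
# Hypothetical token balances for nine voters (illustrative only,
# not Fabric's actual distribution).
holdings = {"early_investor": 400_000, "core_team": 250_000}
holdings.update({f"operator_{i}": 50_000 for i in range(1, 8)})


def yes_share(holdings, votes):
    """Fraction of the voting weight cast that supports the proposal."""
    cast = sum(holdings[name] for name in votes)
    yes = sum(holdings[name] for name, in_favor in votes.items() if in_favor)
    return yes / cast


# The two largest holders vote yes; all seven operators vote no.
votes = {name: name in ("early_investor", "core_team") for name in holdings}

share = yes_share(holdings, votes)
print(f"yes votes by head count: {sum(votes.values())} of {len(votes)}")
print(f"yes share by token weight: {share:.0%}")
```

With these assumed balances, two of nine voters carry the proposal with 65% of the weight, even though every holder was free to vote.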

That matters because robots don’t just exist online. They operate in neighborhoods, workplaces, and public spaces. If governance choices affect which areas get faster service, which tasks are rewarded, or which operators are penalized, those decisions ripple outward into society. Token concentration becomes social concentration.

Even the way new tokens are created — the emission schedule — carries political weight. Issue too many tokens, and value may drop, rewarding short-term behavior. Issue too few, and development slows. If these parameters can be changed by vote, then they become arenas for lobbying. Monetary policy, which in traditional economies is debated in parliaments and central banks, becomes a matter of code proposals and online forums. But unlike central banks, protocol governance doesn’t always operate under public oversight.

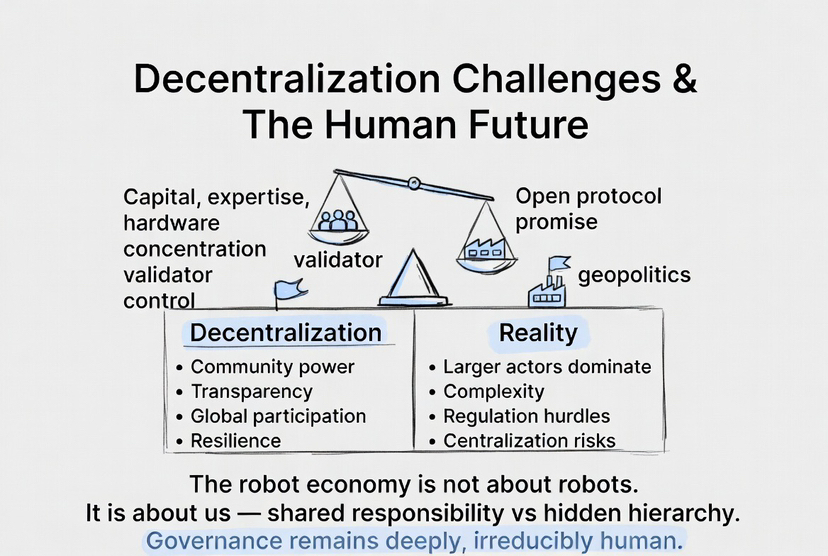

There’s also a kind of economic gravity at work. Decentralization is difficult to maintain. Hardware production requires capital. Running validator nodes requires expertise and infrastructure. AI systems demand vast data and computing power. Over time, these costs favor larger actors, so even systems designed to be open can slowly tilt toward centralization. And when robots are involved, that tilt has physical consequences.

Imagine if a small group of validators effectively determined which robots get priority tasks in a city. They could influence delivery routes, pricing structures, even which services thrive or struggle. At that point, they’re not just network participants — they resemble utility operators. And utilities are rarely left unregulated for long.

Safety adds another layer of complexity. Governments around the world are actively updating AI and robotics regulations. Frameworks such as the EU AI Act classify certain AI systems as high-risk and require documentation, transparency, and human oversight for them. A decentralized robot network cannot simply bypass those rules because it runs on-chain. Local laws still apply when machines operate on local streets. The romantic idea of borderless code meets the firm boundary of jurisdiction.

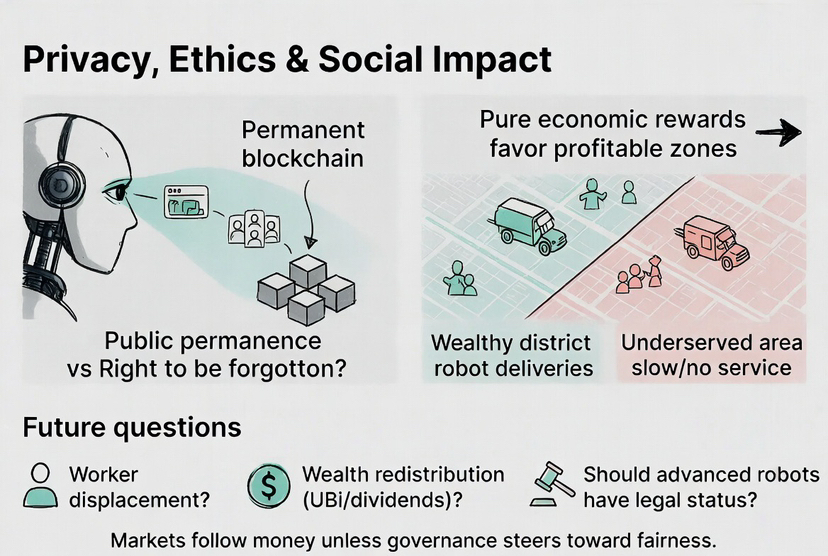

Privacy complicates matters further. Robots gather data constantly: images, sounds, spatial maps, behavioral patterns. When that information connects to blockchain systems, even indirectly, difficult questions arise. Who owns that data? The robot’s owner? The person being recorded? The network itself? Some jurisdictions, including the EU under the GDPR, grant individuals the right to have their personal data erased. Public blockchains are built on permanence. Engineers can design privacy layers and cryptographic protections, but those technical solutions don’t eliminate the underlying ethical tension.

And beyond safety and privacy lies a deeper ethical question: what kind of work will robots choose? If tasks are assigned based purely on economic reward, robots may favor profitable neighborhoods over underserved ones. Deliveries to wealthy districts might outcompete medicine runs to remote communities. Markets tend to follow money unless governance intentionally steers them otherwise. If a portion of network fees supported socially valuable but less profitable services, that would be a political choice — a recognition that efficiency alone does not define fairness.

The long-term implications stretch even further. As AI systems become more autonomous, some philosophers ask whether advanced machines might one day deserve a form of legal recognition. Most legal systems reject that idea for now. Still, robots capable of holding digital wallets, signing smart contracts, and generating revenue occupy new territory. At the same time, human workers face displacement pressures. Automation can increase productivity, but it can also widen inequality. If robot networks generate wealth, should a portion flow back to society? Through dividends? Through public funds? Through universal income experiments? These are no longer abstract debates.

Looking at other governance models helps clarify the stakes. Bitcoin relies on rough consensus rather than formal voting. Ethereum blends community discussion with validator coordination. Open-source communities often depend on reputation rather than token weight. Each model reflects different assumptions about trust and authority. Fabric’s token-based governance offers clarity, but it risks turning economic weight into political dominance unless safeguards are introduced — such as quadratic voting, stake caps, or multistakeholder councils that include operators and community representatives.
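To see what such safeguards would change, the sketch below compares one large holder's share of voting power under three rules. Everything here is an assumption made for illustration: the stakes are invented, the 100,000-token cap is arbitrary, and quadratic voting is simplified to "voting power equals the square root of stake" rather than a full scheme in which voters buy votes at quadratic cost.

```python
import math

# Hypothetical stakes: one large holder and nine small operators.
stakes = {"large_holder": 640_000}
stakes.update({f"operator_{i}": 40_000 for i in range(1, 10)})

STAKE_CAP = 100_000  # arbitrary cap chosen for illustration


def voting_share(stakes, name, rule):
    """Share of total voting power held by `name` under a weighting rule."""
    power = {holder: rule(stake) for holder, stake in stakes.items()}
    return power[name] / sum(power.values())


linear = voting_share(stakes, "large_holder", lambda s: s)
quadratic = voting_share(stakes, "large_holder", math.sqrt)
capped = voting_share(stakes, "large_holder", lambda s: min(s, STAKE_CAP))

print(f"token-weighted: {linear:.1%}")
print(f"square-root:    {quadratic:.1%}")
print(f"stake cap:      {capped:.1%}")
```

Under these assumed numbers the large holder's share falls from 64% to roughly 31% with square-root weighting and roughly 22% with the cap. Both rules only work if each holder maps to one identity, since a stake split across many wallets would sidestep them.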

Then there is geopolitics. Robotics is strategic infrastructure. Nations invest heavily in AI and automation to secure economic advantage. An open global robot protocol might be embraced in some regions and restricted in others. Governments may demand compliance layers or encourage domestic alternatives. International cooperation on safety and standards is possible, but political competition is equally likely.

What makes all of this human is not the code but the consequences. Robots will not exist in isolation. They will navigate sidewalks where children play, hospitals where nurses work, and warehouses where employees depend on wages. The governance decisions embedded in a protocol will shape daily life in subtle ways — determining who benefits, who bears risk, and who gets a voice.

Decentralization is not automatically democratic. It can distribute power, but it can also disguise concentration. A foundation can promote openness, yet investors may still dominate decision-making. A token can reward contributors, yet it can also amplify speculation. The outcome depends on design choices — and on whether those choices prioritize community resilience over short-term returns.

In the end, the robot economy is not really about robots. It is about us. It asks whether we can build systems that reflect shared responsibility rather than hidden hierarchy. It asks whether innovation can coexist with fairness. And it reminds us that even in a world of autonomous machines, governance remains deeply, irreducibly human.