Robotics is no longer confined to factory cages and research laboratories. Intelligent machines are entering hospitals, warehouses, farms, classrooms, and even private homes. They assist surgeons, deliver groceries, patrol facilities, and care for the elderly. As their capabilities expand, so does their autonomy. What was once mechanical automation is quickly becoming decision-making infrastructure embedded in everyday life. Yet while investment and innovation accelerate, governance remains fragmented and reactive. If robotics scales without clear rules, shared standards, and enforceable accountability, society risks building systems that are powerful but misaligned with human priorities.

The urgency of governance lies in the physical nature of robotics. Software errors can cause financial losses or data breaches, but robotic failures can cause bodily harm. A delivery drone that malfunctions, an industrial robot that misreads sensor input, or a care robot that misinterprets a patient’s needs can produce immediate, tangible consequences. As machines gain more autonomy, they begin making context-dependent judgments in dynamic environments. That transition from tool to agent requires stronger ethical foundations. Safety cannot be an afterthought layered onto finished products; it must shape design, deployment, and oversight from the beginning.

Safety frameworks developed in recent years provide a starting point. Organizations such as the IEEE have proposed standards for ethically aligned design, emphasizing transparency, accountability, and human oversight. Similarly, the European Commission has advanced regulatory approaches to high-risk systems, particularly those operating in sensitive environments like healthcare and public infrastructure. These initiatives recognize a crucial reality: as robotics becomes embedded in society, risk management must move beyond technical reliability toward social responsibility. It is not enough for a robot to function as intended; it must function within ethical and legal boundaries that reflect collective values.

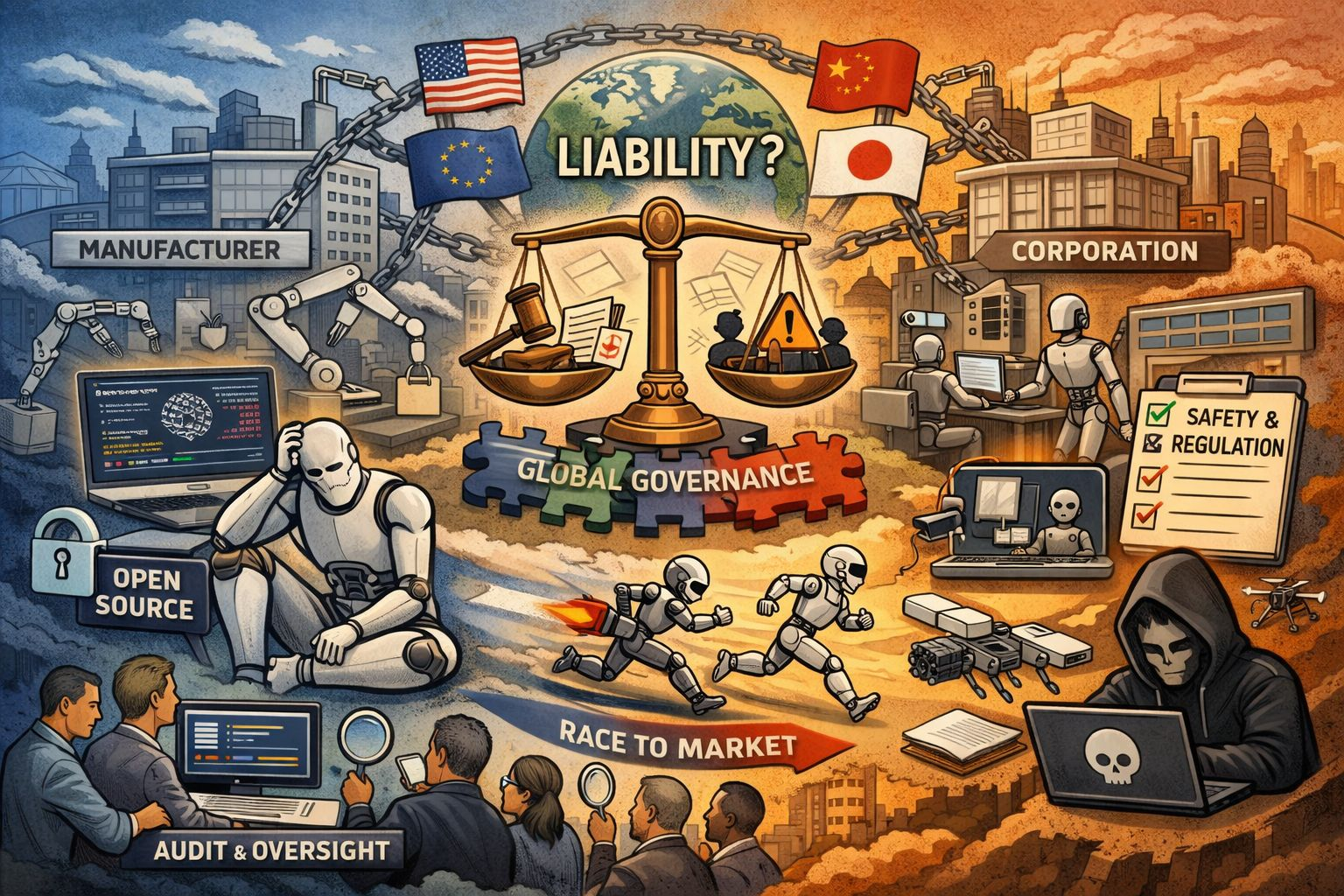

Governance also clarifies responsibility. When a robotic system makes a harmful decision, who answers for it? The manufacturer who built the hardware, the developer who trained the control model, the operator who deployed it, or the organization that purchased it? Without clearly defined liability structures, accountability diffuses into ambiguity. Companies may shift blame across supply chains, leaving victims without recourse and eroding public trust. Establishing governance before large-scale deployment ensures that liability frameworks evolve alongside technological capability rather than trailing behind it in crisis response.

Beyond accountability, governance shapes incentives. In competitive markets, speed often outruns caution. Startups racing for funding or market dominance may prioritize performance metrics over rigorous safety testing. Investors may reward rapid deployment rather than careful validation. In such an environment, responsible actors can be disadvantaged. Effective governance levels the playing field by setting minimum safety and transparency requirements. It prevents a race to the bottom where shortcuts become normalized. Rather than stifling innovation, well-designed rules can protect it by ensuring that public confidence grows with adoption.

Decentralization adds another layer of complexity. Robotics development is increasingly global and distributed. Open research communities share code, datasets, and hardware designs across borders. Decentralized manufacturing techniques allow components to be produced in multiple regions. This diffusion accelerates innovation but complicates oversight. No single authority controls the ecosystem. A robot designed in one country may be assembled in another and deployed in a third. Governance models must therefore be interoperable and internationally coordinated. Fragmented regulations risk creating loopholes that undermine safety standards.

At the same time, decentralization can strengthen governance if harnessed thoughtfully. Distributed oversight bodies, independent audit groups, and community review mechanisms can supplement formal regulation. Transparency across supply chains enables broader scrutiny. When development is visible rather than opaque, civil society, researchers, and regulators can identify risks earlier. Governance in this context becomes a networked process rather than a centralized command structure. The challenge is to design systems that preserve innovation’s openness while preventing accountability from dissolving across jurisdictions.

The debate between open and closed systems sits at the heart of this tension. Open-source robotics promotes transparency, collaborative improvement, and shared learning. Researchers can inspect code, identify vulnerabilities, and contribute fixes. Openness reduces the concentration of power in a handful of corporations and fosters a more democratic innovation landscape. However, it also raises concerns about misuse. Dual-use technologies can be adapted for harmful purposes, and malicious actors may exploit publicly available designs.

Closed systems, by contrast, offer tighter control over distribution and updates. Companies can restrict access, monitor usage, and enforce compliance within proprietary ecosystems. Yet opacity can conceal safety flaws and limit external auditing. When only internal teams can examine a system’s architecture, public trust depends entirely on corporate assurances. In critical domains such as healthcare or public infrastructure, that trust must be earned through demonstrable accountability, not assumed behind a veil of secrecy.

Governance does not demand choosing one model exclusively. Instead, it requires establishing norms that apply across both. Open projects can adopt licensing frameworks that restrict harmful applications. Closed systems can be required to undergo independent audits and publish safety reports. Hybrid approaches may combine transparent core standards with controlled deployment layers. The objective is not ideological purity but risk reduction and value alignment.

Ethics must anchor all of these discussions. Robotics will increasingly shape labor markets, surveillance practices, and social interactions. Decisions about deployment affect who benefits and who bears costs. Automated warehouses may improve efficiency but displace workers. Security robots may enhance safety while expanding monitoring. Care robots may fill staffing shortages but alter human relationships. Governance provides a forum to weigh these trade-offs collectively rather than leaving them to market forces alone. Ethical deliberation ensures that scaling serves broader social goals instead of narrow commercial interests.

History offers cautionary lessons. Technologies such as social media and large-scale data platforms expanded rapidly with minimal early oversight, only for societies to grapple later with misinformation, privacy erosion, and concentrated power. Retrofitting regulation proved politically contentious and technically challenging. Robotics operates in a more immediate domain: the physical world. The costs of delayed governance could be higher and more visible.

Preparing governance before scale does not mean halting progress. It means recognizing that power and responsibility must grow together. Clear standards, enforceable accountability, coordinated international frameworks, and thoughtful approaches to openness are not obstacles to advancement. They are the infrastructure that allows innovation to endure. As machines gain the capacity to move, decide, and act in shared spaces, society must decide how they are guided, supervised, and corrected.

Robotics promises productivity, safety, and expanded human capability. But promise alone cannot secure public trust. Governance establishes the boundaries within which that promise can unfold responsibly. If society waits until robots are ubiquitous before defining those boundaries, correction will be reactive and painful. If it acts now, governance can shape a future where intelligent machines amplify human potential without compromising human values.