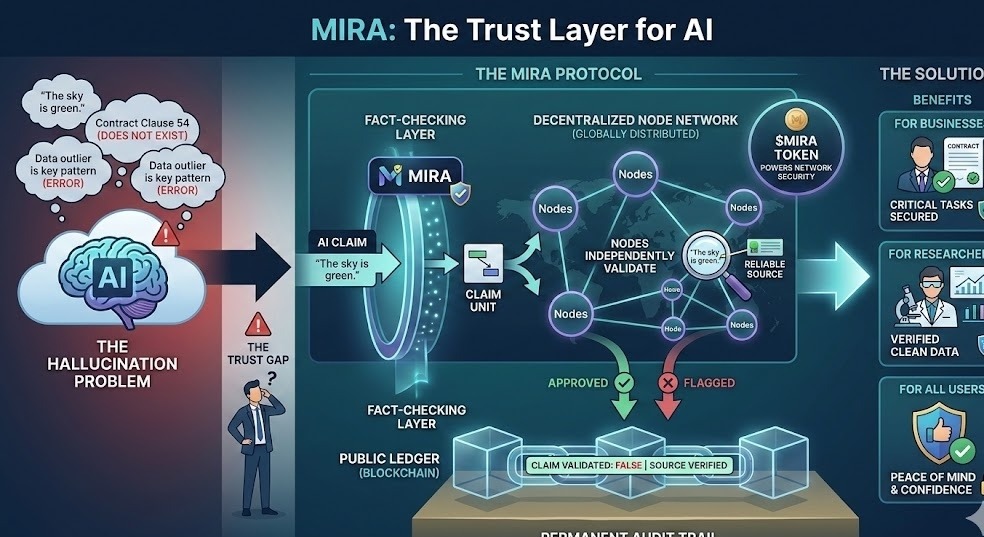

We have all seen the news. AI can write essays, code software, and answer complex questions. But AI also has a big problem: it makes things up. These mistakes are called "hallucinations." If a business uses AI to review a contract or a researcher uses it to analyze medical data, one small mistake could be a disaster.

This is where Mira comes in to fix the trust issue.

Think of Mira as a high-quality "fact-checker" for artificial intelligence. Instead of just hoping the AI is telling the truth, Mira breaks down every answer into small, simple claims. These pieces of information are then sent out to a network of independent computers (nodes) located all over the world.

These nodes don't guess. They validate each claim by checking it against reliable sources. If the AI says "The sky is green," the nodes flag that claim as wrong. If a claim is correct, they approve it.
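To make the idea concrete, here is a minimal sketch of the flow described above: split an answer into claims, ask several independent checkers, and accept a claim only if enough of them agree. This is not Mira's actual protocol. The sentence-based splitter, the `make_node` helper, the toy fact sets, and the two-thirds threshold are all assumptions chosen for illustration.

```python
from typing import Callable, Dict, List, Set

# Hypothetical: a "node" is any function that maps a claim to a verdict.
Node = Callable[[str], bool]


def split_into_claims(answer: str) -> List[str]:
    """Naive stand-in for claim extraction: one claim per sentence."""
    return [part.strip() for part in answer.split(".") if part.strip()]


def make_node(known_facts: Set[str]) -> Node:
    """Build a toy node that approves only claims found in its own source."""
    return lambda claim: claim in known_facts


def verify_claim(claim: str, nodes: List[Node]) -> bool:
    """Approve a claim when at least two thirds of the nodes approve it."""
    approvals = sum(1 for node in nodes if node(claim))
    return approvals * 3 >= 2 * len(nodes)


def verify_answer(answer: str, nodes: List[Node]) -> Dict[str, bool]:
    """Return a verdict for every claim in the answer."""
    return {claim: verify_claim(claim, nodes) for claim in split_into_claims(answer)}


# Three toy nodes with slightly different sources (assumed data).
nodes = [
    make_node({"The sky is blue", "Water is wet"}),
    make_node({"The sky is blue", "Water is wet"}),
    make_node({"The sky is blue"}),
]

verdicts = verify_answer("The sky is green. Water is wet.", nodes)
```

Here `verdicts` flags "The sky is green" as wrong because no node approves it, while "Water is wet" passes with two of three approvals. A real network would need a much smarter way to extract claims and to decide which nodes to trust.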

Once this process is finished, the result is recorded on a public ledger, or blockchain. Because the record is on-chain, it can't be quietly changed or deleted later. It creates a permanent audit trail.
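Why is an on-chain record so hard to alter? A rough way to see it is a hash chain, where every entry includes a fingerprint of the entry before it. The sketch below is a simplified, single-machine illustration using Python's standard `hashlib`. It is not Mira's ledger or any real blockchain, and the record fields and function names are made up for the example.

```python
import hashlib
import json
from typing import Dict, List

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def record_hash(record: Dict, prev_hash: str) -> str:
    """Fingerprint a record together with the hash of the entry before it."""
    payload = json.dumps({"record": record, "prev": prev_hash}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_record(ledger: List[Dict], record: Dict) -> None:
    """Add a record, linking it to the current last entry."""
    prev_hash = ledger[-1]["hash"] if ledger else GENESIS_HASH
    ledger.append(
        {"record": record, "prev": prev_hash, "hash": record_hash(record, prev_hash)}
    )


def is_intact(ledger: List[Dict]) -> bool:
    """Recompute every link; any edited or removed entry breaks the chain."""
    prev_hash = GENESIS_HASH
    for entry in ledger:
        if entry["prev"] != prev_hash:
            return False
        if entry["hash"] != record_hash(entry["record"], prev_hash):
            return False
        prev_hash = entry["hash"]
    return True


ledger: List[Dict] = []
append_record(ledger, {"claim": "The sky is green", "verified": False})
append_record(ledger, {"claim": "Water is wet", "verified": True})
```

If someone later flips `verified` on the first entry, `is_intact(ledger)` returns `False`, because the stored hash no longer matches the content. A public blockchain adds the missing piece: many independent copies of the chain, so a tamperer can't simply recompute the hashes on their own copy.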

Why does this matter for you?

For businesses, this "Trust Layer" means they can finally use AI for critical tasks without worrying about hidden errors. For researchers, it provides proof that their data is clean and verified.

With Mira, we no longer have to cross our fingers and hope the AI is right. We can check that it is. It's a new layer of security for the AI era, powered by the community and the $MIRA token. @Mira - Trust Layer of AI #mira $MIRA