I started distrusting “verified” systems not because they failed loudly, but because they succeeded too easily. Everything came back as clean agreement, like a room full of people nodding in sync. That’s when the thought hit me: if the cost of saying yes is near zero, consensus is not a guarantee of work. It’s a guarantee of coordination. Mira Network lives inside that uncomfortable gap, because its promise depends on something most people skip over. Not whether verifiers can agree, but whether they can be forced to actually compute.

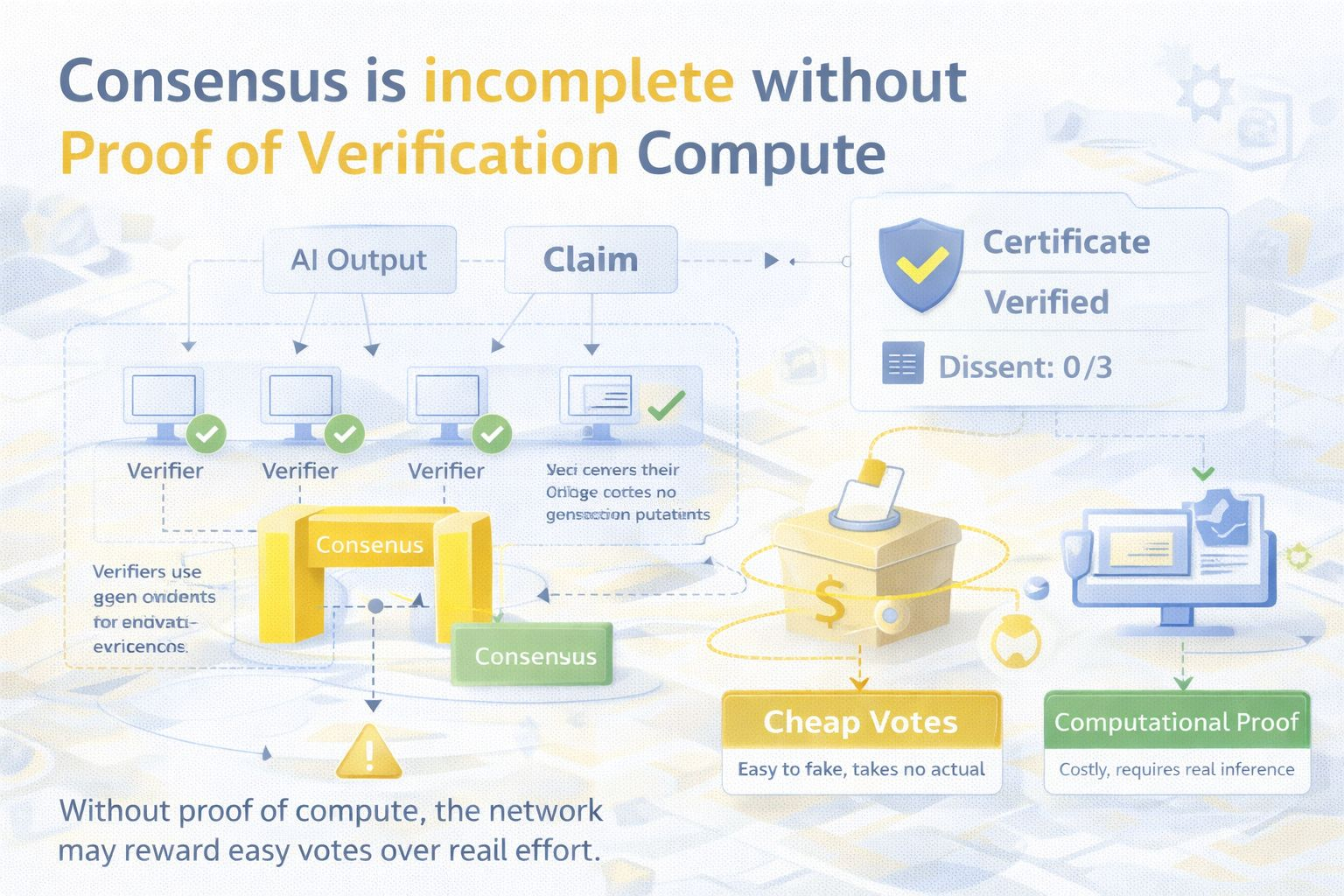

The market is pricing verification like it is a voting problem. Split an AI output into claims, ask multiple verifiers, take a quorum, stamp a certificate. It sounds like truth machinery. But inside that pipeline there’s a missing assumption: that each verifier did the verification work it claims it did. If the protocol can’t tell the difference between real inference and cheap signaling, it will end up paying for votes, not for computation, because agreement can be predicted faster than verification can be performed.

This is not an abstract fear. It’s the most practical attack surface in any incentive-driven verification network. Real verification has a cost. It consumes compute, memory, bandwidth, and time. Cheap participation has a different cost. It’s the cost of guessing what the majority will say. When rewards are tied to agreement, the rational strategy for a low-effort participant is to predict consensus and submit quickly, not to run the expensive check.
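
To put rough numbers on that, here is a minimal sketch of the payoff comparison. Every figure in it is a hypothetical I picked for illustration, not a Mira parameter: the reward size, the two match probabilities, and the two cost figures are all assumptions.

```python
def expected_profit(reward, p_match, cost):
    """Expected profit per claim when the reward is paid only for matching consensus."""
    return reward * p_match - cost


# Hypothetical numbers, chosen only to illustrate the incentive gap.
REWARD = 1.0  # payout for landing on the quorum answer

# Honest verifier: runs real inference, matches consensus often, pays for compute.
honest = expected_profit(REWARD, p_match=0.95, cost=0.60)

# Guesser: predicts the majority from priors, matches a bit less often, pays almost nothing.
guesser = expected_profit(REWARD, p_match=0.85, cost=0.02)

print(f"honest:  {honest:.2f}")
print(f"guesser: {guesser:.2f}")
```

Under these assumed numbers the guesser comes out ahead even though it matches consensus less often. The gap is driven by cost, not accuracy, which is the whole problem.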

I’ve watched this pattern play out any time incentives reward alignment. People learn to read the room. They stop doing the hard work and start doing the safe work. In Mira’s context, “reading the room” means leaning on model priors and shared biases, then aiming for the answer that is least likely to trigger disagreement and least likely to get punished. Agreement becomes a shortcut, and the system has no native way to know if that shortcut replaced compute.

Mira’s economic security is incomplete unless verifiers can be forced to prove they actually performed the verification compute. Without that, the protocol risks building a beautifully engineered consensus layer that certifies cheap voting behavior. The network would still produce certificates. It would still look consistent. It might even look more reliable than raw AI. But it would be certifying an illusion of work.

The reason this matters is that Mira isn’t just aggregating opinions. It is monetizing verification. Once money enters the loop, participants optimize. Verifiers search for the lowest-cost path to the highest expected reward. If a verifier can submit an answer that matches the crowd without paying the compute bill, and still capture rewards, that behavior will scale. If a verifier has to expose something that makes compute falsifiable, the equilibrium shifts toward real work.

Claim splitting makes the pressure sharper. When a large answer becomes many small claims, the workload scales up. Honest verification gets more expensive in total, even if each claim is small. If rewards don’t scale in a way that keeps honest compute sustainable, the protocol creates a wedge. Honest verifiers feel squeezed by cost. Low-effort verifiers feel empowered by throughput. The network starts rewarding speed and conformity instead of diligence.

This is where consensus becomes dangerous in a quiet way. Most people think the threat is disagreement. The real threat is smooth agreement produced by laziness. If many verifiers are not actually verifying, consensus can still be strong because they are all drawing from similar priors. They agree because it is easy to agree, not because the claim is well supported. A high quorum outcome starts to mean “the verifier set is correlated,” not “the verifier set did work.”

Slashing does not automatically solve this. If the system can only punish based on deviation from consensus, it incentivizes herding. A low-effort verifier can reduce its slashing risk by aligning with what it expects others will say. It becomes safer to be wrong with the crowd than right alone. In that world, stake can enforce conformity more reliably than it enforces truth.
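
The herding logic can be sketched as a two-line payoff function. This assumes a deliberately simplified rule where a verifier is rewarded for matching consensus and slashed for deviating from it; the probability, reward, and slash amount are hypothetical values, not anything taken from Mira's design.

```python
def payoff(p_match, reward, slash):
    """Expected payoff when matching consensus pays `reward` and deviating costs `slash`."""
    return p_match * reward - (1 - p_match) * slash


# Hypothetical scenario: the verifier's own check disagrees with what the crowd will likely say.
P_CROWD = 0.8             # assumed chance that consensus lands on the crowd's prior answer
REWARD, SLASH = 1.0, 2.0  # assumed payout and penalty

herd = payoff(P_CROWD, REWARD, SLASH)         # vote with the expected majority
dissent = payoff(1 - P_CROWD, REWARD, SLASH)  # vote the answer the verifier actually computed

print(f"herd:    {herd:.2f}")
print(f"dissent: {dissent:.2f}")
```

Notice what is missing from the function: whether the claim is true. With these assumed values, herding earns about 0.40 per claim and dissenting loses about 1.40, regardless of who is right.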

So the protocol needs a way to make computation legible through a crypto-native proof or attestation, not just an output that looks plausible. Work has to become measurable, even if only partially. If the only thing the network can observe is the final answer, then the cheapest strategy is to produce answers that look like the network, not answers that come from actual verification.
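
One way to picture “partially measurable” work is a spot check with hidden known-answer claims. To be clear, this is not a cryptographic proof of compute and I am not claiming Mira does this; it is a weaker, statistical stand-in I am using to show the idea. The `Response` type, the `canary_accuracy` helper, and the claim IDs are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Response:
    claim_id: str
    verdict: bool  # True = verifier says the claim holds


def canary_accuracy(responses, canaries):
    """Fraction of hidden known-answer claims answered correctly.

    `canaries` maps claim_id -> correct verdict. Returns None if the
    verifier saw no canary claims in this batch.
    """
    scored = [r for r in responses if r.claim_id in canaries]
    if not scored:
        return None
    hits = sum(r.verdict == canaries[r.claim_id] for r in scored)
    return hits / len(scored)


# Hypothetical batch: c2 and c4 are plausible-sounding claims that are known to be false.
canaries = {"c2": False, "c4": False}

# A low-effort verifier that approves whatever sounds plausible.
lazy = [Response(f"c{i}", True) for i in range(1, 6)]

# A verifier that actually checked each claim.
diligent = [
    Response("c1", True), Response("c2", False), Response("c3", True),
    Response("c4", False), Response("c5", True),
]

print(canary_accuracy(lazy, canaries))
print(canary_accuracy(diligent, canaries))
```

The point of the sketch is that canaries built to contradict common priors separate the two strategies, while plain agreement on ordinary claims does not. It still only samples the work; it does not prove it.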

This is where the word “proof” becomes uncomfortable. In crypto, proofs are valued because they are cheap to verify. But AI inference is expensive to run. A verification protocol is trying to wrap cheap verification around costly computation. That creates a brutal constraint: either compute becomes attestable, or the protocol is paying for unverifiable labor. And when labor is unverifiable, markets fill the gap with theater.

You can often spot the drift by watching for reward-to-compute mismatch. If the network can sustain a large verifier set that earns rewards while running minimal compute, it’s a warning sign that the system is paying for votes. If the reward structure forces verifiers to invest in real inference capacity to remain competitive, it’s a sign the protocol is paying for work.
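
A rough way to look for that mismatch is to compare each verifier's reward-per-compute ratio against the median. This sketch assumes some trustworthy compute measure exists per verifier (for example attested compute-seconds), which is itself the hard part. The `flag_mismatch` helper, the `factor` threshold, and the sample figures are hypothetical.

```python
from statistics import median


def flag_mismatch(stats, factor=3.0):
    """Return verifiers whose reward per unit of compute is far above the median.

    `stats` maps verifier id -> (rewards_earned, compute_units).
    Verifiers reporting no compute at all are always flagged.
    """
    ratios = {v: r / c for v, (r, c) in stats.items() if c > 0}
    if not ratios:
        return sorted(stats)  # nobody reported compute: everything is suspect
    baseline = median(ratios.values())
    flagged = [
        v for v, (r, c) in stats.items()
        if c <= 0 or ratios[v] > factor * baseline
    ]
    return sorted(flagged)


# Hypothetical telemetry: (rewards earned, compute units spent).
stats = {
    "a": (100, 50),
    "b": (90, 45),
    "c": (95, 50),
    "d": (98, 2),   # earns like the others while spending almost nothing
}

print(flag_mismatch(stats))
```

A flag here is a prompt to look closer, not a verdict. An efficient verifier can legitimately beat the median, which is why the threshold is a multiple rather than a hard cutoff.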

Another tell is performance on adversarial claims. Cheap voting does fine on generic, consensus-friendly statements. It breaks on edge cases, ambiguous language, and claims that require genuine reasoning or careful checking. If Mira’s certificates remain strong exactly where real-world claims get messy, compute attestation is probably doing its job. If certificates look strongest on trivial claims and weakest on the claims that matter, the network may be optimizing for agreement rather than verification.

None of this makes Mira “bad.” It makes it real. A verification protocol cannot be judged only by how clean its certificates look. It has to be judged by whether it makes cheating economically irrational in the place where cheating is most tempting: the verification work itself.

The trade-off is uncomfortable. Stronger proof of compute increases overhead in proof generation and verification, and it increases latency and complexity across the system. Weaker proof keeps the system fast and cheap, but invites vote markets. There isn’t a free solution. You either pay the cost upfront in protocol design, or you pay it later when certificates become a confidence product rather than a truth product.

A voting booth tells you who people chose. It does not tell you whether they read the policy. Mira’s challenge is to build a verification system that can tell the difference. If the network only measures agreement, it will optimize for agreement. If it can measure work, it can reward work. That difference is the line between a protocol that reduces hallucination risk and a protocol that industrializes plausible consensus.

If Mira can force verifiers to prove they computed, then consensus starts to mean something stronger. It becomes evidence of costly diligence, not just coordinated guesses. If the protocol cannot make compute legible, the economic equilibrium will drift toward cheap voting, and the network will produce certificates that look authoritative while quietly losing contact with the truth.

That is why I see compute proof as the real security layer. Mira’s success won’t be determined by how many verifiers it has or how many certificates it issues. It will be determined by whether “verification” in the system is an expensive action that can be forced, or a cheap gesture that can be faked.
