Over the past few months, I’ve been closely observing how the conversation around artificial intelligence is evolving inside the crypto ecosystem. Everyone seems focused on building bigger models, faster inference, and more advanced AI capabilities. But the more I look at the landscape, the more I realize something important is still missing: trust.

That’s exactly why Mira Network has caught my attention. Instead of joining the already crowded race to build AI models, Mira is approaching the problem from a different and, in my opinion, far more critical direction: verification. The network is positioning itself as infrastructure for verifiable AI, where outputs from AI systems can be checked, validated, and trusted rather than blindly accepted.

When I think about the future of AI, this problem feels fundamental. Today, most AI systems operate like black boxes. They generate answers, predictions, or content, but users often have no reliable way to confirm whether those outputs are correct. This might be acceptable for casual applications, but once AI begins influencing finance, research, governance, or automated systems, blind trust simply isn’t good enough.

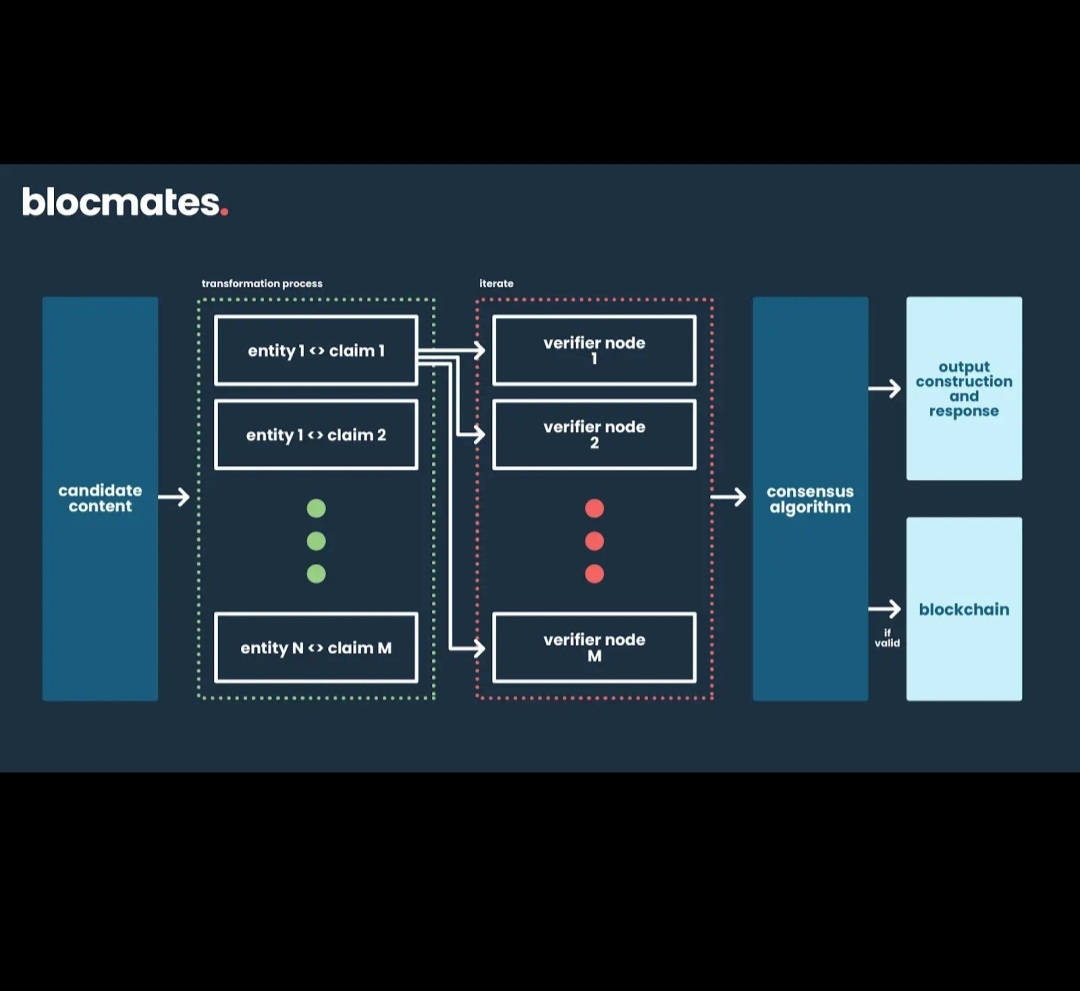

This is where Mira Network starts to make sense to me as a piece of infrastructure rather than just another project riding the AI narrative. The goal isn’t to replace existing AI systems. Instead, it’s to build a verification layer that sits alongside them, ensuring that outputs can be evaluated and validated through decentralized mechanisms.

What I find particularly interesting is how this aligns with the core philosophy of blockchain technology itself. Blockchains were created to solve the trust problem in digital systems. Instead of relying on centralized authorities, verification is distributed across networks of participants. Mira appears to be applying that same principle to artificial intelligence, turning AI verification into a decentralized process rather than a centralized one.

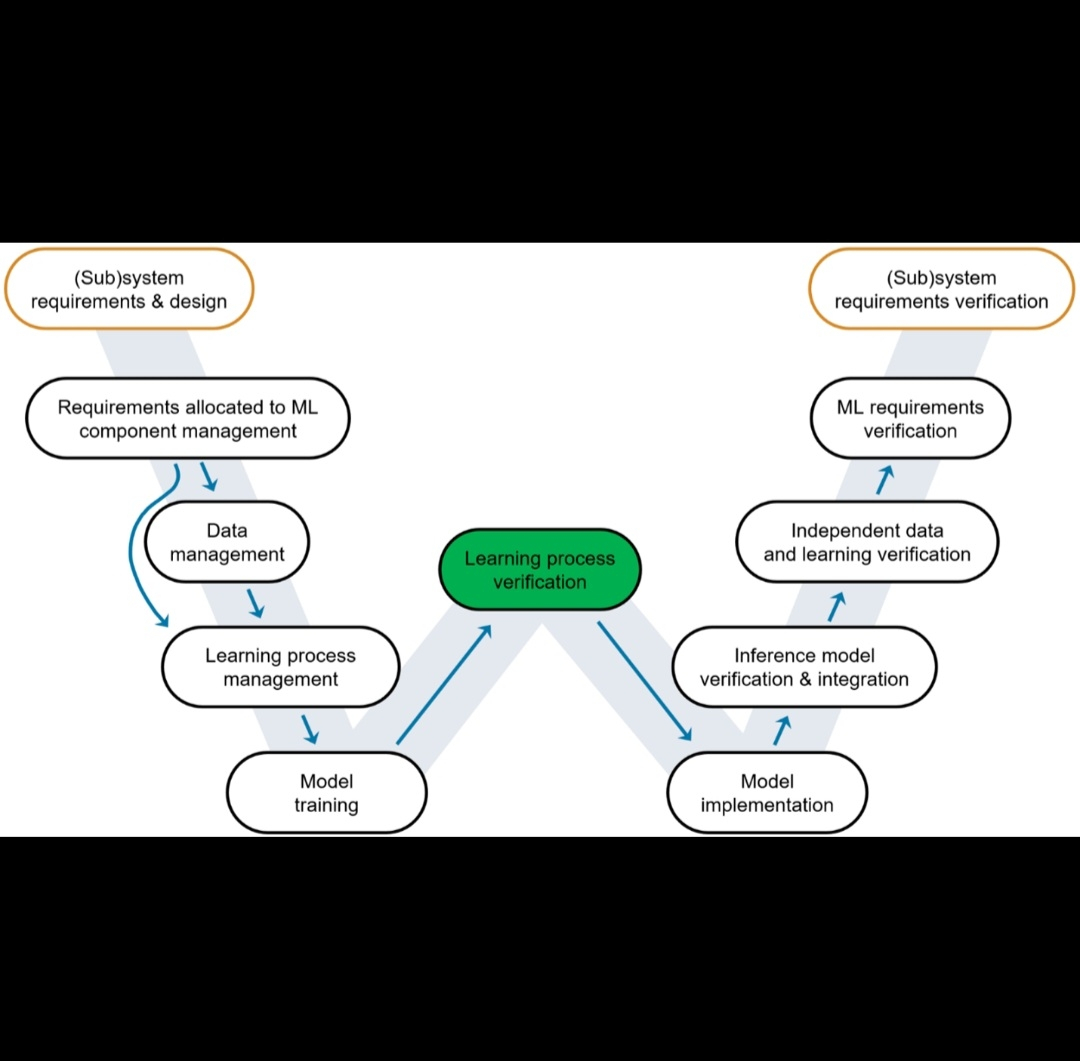

From my perspective, this approach fits perfectly into the broader evolution of crypto infrastructure. Early blockchain innovation focused on payments and digital assets. Then the ecosystem expanded into smart contracts, decentralized finance, and data availability layers. Now we’re starting to see the emergence of AI-focused infrastructure, and verification could become one of the most important components of that stack.

Another reason Mira stands out to me is the timing. AI adoption is accelerating rapidly across industries, but questions around reliability and accountability are growing just as fast. Governments, enterprises, and developers are all trying to figure out how to ensure that AI systems are producing accurate and trustworthy results. A decentralized verification network could offer a compelling solution to that challenge.

If AI outputs can be verified through cryptographic and decentralized systems, it could dramatically increase confidence in AI-driven applications. Developers could build systems that rely on AI decisions without worrying about opaque or unverifiable processes. Users could interact with AI services knowing that there are mechanisms ensuring integrity behind the scenes.

From an infrastructure perspective, this is the kind of problem that tends to become more valuable over time rather than less. As AI becomes more integrated into critical systems, the demand for verification, transparency, and reliability will only increase. In many ways, verification could become the backbone that allows AI to move from experimental technology into trusted global infrastructure.

That’s ultimately why Mira Network feels important to me. It’s not simply chasing the trend of combining AI and crypto. Instead, it’s focusing on a structural weakness in the current AI ecosystem: the lack of verifiability.

Of course, building something like this is not trivial. Designing decentralized systems that can efficiently verify complex AI outputs is a significant technical challenge. Adoption will also depend on whether developers and AI platforms actually integrate verification mechanisms into their workflows.

But despite those challenges, the core idea continues to stand out the more I think about it. In a world increasingly shaped by artificial intelligence, the question isn’t just what AI can produce, but whether we can trust what it produces.

And right now, Mira Network is one of the few projects trying to answer that question at the infrastructure level.