As industrial environments evolve toward smart factories and Industry 4.0, human-robot collaboration (HRC) is becoming increasingly common. Unlike traditional automation systems that isolate robots behind safety cages, collaborative robots (cobots) now work directly alongside human operators. This shift requires a socially adaptive cognitive architecture that enables robots not only to perform tasks efficiently but also to understand, predict, and respond to human behavior in dynamic industrial settings.

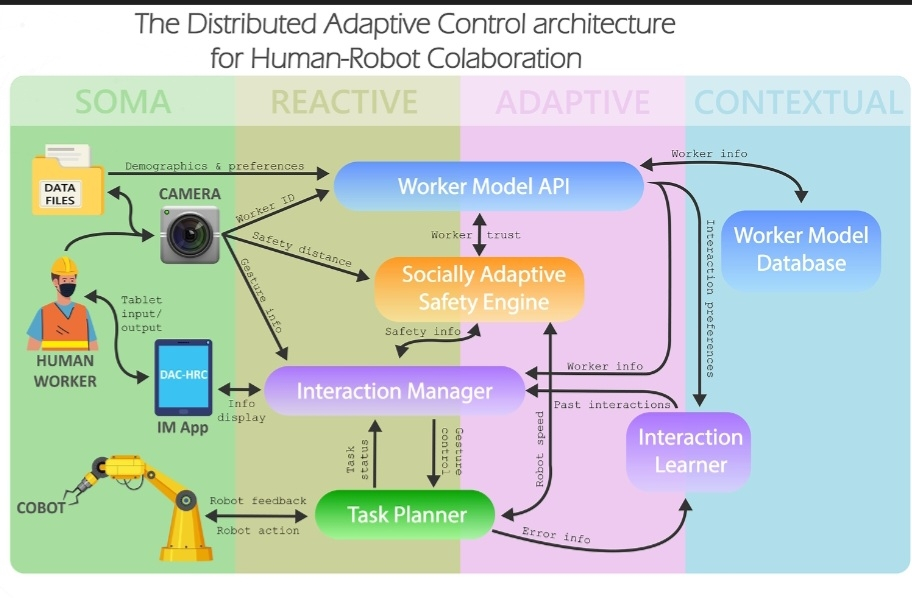

A socially adaptive cognitive architecture integrates perception, reasoning, learning, and action into a unified framework. At its foundation lies a multi-modal perception layer. This layer gathers real-time data from cameras, depth sensors, LiDAR, wearable devices, microphones, and force-torque sensors. Through sensor fusion, the system builds a contextual model of the workspace, identifying human positions, gestures, posture, facial expressions, and even stress indicators. By continuously monitoring environmental changes, the robot maintains situational awareness and detects potential hazards.
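To make the sensor fusion step concrete, here is a minimal sketch that combines two independent estimates of a worker's position along one axis using inverse-variance weighting. The `Estimate` type, the `fuse` function, and the noise figures are illustrative assumptions, not part of any specific perception stack; a production system would more likely use a Kalman or particle filter over full 3D poses.

```python
from dataclasses import dataclass


@dataclass
class Estimate:
    position: float  # metres along one axis (hypothetical frame)
    variance: float  # sensor noise variance in m^2 (assumed known)


def fuse(estimates):
    """Inverse-variance weighted fusion of independent estimates."""
    weights = [1.0 / e.variance for e in estimates]
    total = sum(weights)
    position = sum(w * e.position for w, e in zip(weights, estimates)) / total
    # The fused variance is never larger than the best single sensor's.
    return Estimate(position, 1.0 / total)


# Example: a noisy camera estimate and a more precise LiDAR estimate.
camera = Estimate(position=1.0, variance=0.04)
lidar = Estimate(position=1.2, variance=0.01)
fused = fuse([camera, lidar])
```

The fused position lands closer to the LiDAR reading because that sensor is assumed to be less noisy, which is the basic behavior one would want from any fusion scheme.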

Above perception is the cognitive reasoning layer. This module interprets sensory inputs and infers human intent using machine learning and probabilistic models. For example, if a worker reaches toward a shared tool, the system predicts the intended action and adjusts the robot's trajectory to avoid interference. Task planning algorithms dynamically allocate responsibilities between human and robot, balancing productivity against the cognitive load placed on workers. The architecture also includes a social reasoning component that accounts for proxemics, turn-taking, and implicit communication cues, allowing the robot to behave in a socially acceptable manner.
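One way to picture probabilistic intent inference is a Bayesian update over candidate reach targets. The sketch below is an assumption-laden illustration: the function name `update_intent`, the goal layout, and the rationality parameter `beta` are all hypothetical. It scores each goal by how much the hand moved toward it between two observations.

```python
import math


def update_intent(prior, goals, prev_hand, hand, beta=5.0):
    """Bayesian update of goal probabilities from one hand movement.

    The likelihood of a goal grows exponentially with the progress the
    hand made toward it (an assumed, simplified observation model).
    """
    posterior = {}
    for name, p in prior.items():
        progress = math.dist(prev_hand, goals[name]) - math.dist(hand, goals[name])
        posterior[name] = p * math.exp(beta * progress)
    total = sum(posterior.values())
    return {name: value / total for name, value in posterior.items()}


# Hypothetical workspace: a shared tool and a parts bin, in metres.
goals = {"tool": (1.0, 0.0), "bin": (0.0, 1.0)}
prior = {"tool": 0.5, "bin": 0.5}
belief = update_intent(prior, goals, prev_hand=(0.0, 0.0), hand=(0.2, 0.0))
```

After a single movement toward the tool, the belief shifts in its favor; the planner could then slow down or reroute once that probability crosses a chosen threshold.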

Learning is a critical aspect of social adaptability. The architecture incorporates reinforcement learning and continual learning mechanisms that allow the robot to adapt to individual worker preferences and skill levels over time. By analyzing historical interaction data, the system refines motion planning strategies and communication patterns. For example, if a worker prefers slower handovers, the robot adjusts its speed accordingly. This personalization improves trust and collaboration efficiency.
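The handover example can be sketched as a small epsilon-greedy bandit, one of the simplest reinforcement learning setups. The class name, the discrete speed options, and the reward signal (for instance, a worker rating or a comfort proxy) are assumptions made for illustration; the text does not specify which learning algorithm is used.

```python
import random


class HandoverSpeedBandit:
    """Epsilon-greedy selection over a few candidate handover speeds (m/s)."""

    def __init__(self, speeds, epsilon=0.1, seed=0):
        self.speeds = list(speeds)
        self.epsilon = epsilon
        self.rng = random.Random(seed)
        self.value = {s: 0.0 for s in self.speeds}  # running mean reward
        self.count = {s: 0 for s in self.speeds}

    def choose(self):
        # Explore occasionally, otherwise pick the best-rated speed so far.
        if self.rng.random() < self.epsilon:
            return self.rng.choice(self.speeds)
        return max(self.speeds, key=lambda s: self.value[s])

    def update(self, speed, reward):
        # Incremental mean keeps memory constant per speed option.
        self.count[speed] += 1
        self.value[speed] += (reward - self.value[speed]) / self.count[speed]
```

In use, the robot would call `choose()` before each handover and `update()` with the observed feedback afterward, so a worker who consistently rates slower handovers higher gradually receives them more often.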

Safety management is embedded throughout the architecture rather than treated as an isolated module. Real-time risk assessment algorithms evaluate collision probability and enforce speed-and-separation monitoring. Compliant actuators and torque-limited joints help keep contact forces within safe thresholds. If unexpected contact occurs, force sensors trigger immediate response protocols such as halting motion or retreating. The system also integrates explainable AI components that provide transparent feedback to human operators, enhancing accountability and trust.
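Speed-and-separation monitoring can be illustrated with a simplified protective distance check, loosely modeled on the protective separation distance idea in ISO/TS 15066. The constant-speed simplification, the function names, and every numeric value below are assumptions for illustration only; they are not taken from a standard, a datasheet, or a certified safety function.

```python
def protective_distance(v_human, v_robot, t_react, t_stop, stop_dist, margin):
    """Minimum separation (m) needed for the robot to stop before contact.

    v_human, v_robot: speeds toward each other in m/s
    t_react: system reaction time in s; t_stop: robot stopping time in s
    stop_dist: distance the robot travels while braking, in m
    margin: extra allowance for sensing and position uncertainty, in m
    """
    human_travel = v_human * (t_react + t_stop)
    robot_travel = v_robot * t_react + stop_dist
    return human_travel + robot_travel + margin


def ssm_command(separation, **params):
    """Return 'continue' if the measured separation is sufficient, else 'stop'."""
    return "continue" if separation >= protective_distance(**params) else "stop"


# Illustrative parameters only.
params = dict(v_human=1.6, v_robot=0.5, t_react=0.1, t_stop=0.3,
              stop_dist=0.1, margin=0.2)
command = ssm_command(1.5, **params)
```

A real controller would typically scale speed down gradually as the separation shrinks rather than switching directly between running and stopping.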

Communication plays a central role in socially adaptive collaboration. The architecture supports multimodal interaction, including speech recognition, visual signals, and haptic feedback. Natural language processing enables workers to issue verbal instructions, while visual displays or augmented reality interfaces provide task updates and warnings. This bidirectional communication fosters mutual understanding and reduces ambiguity in shared tasks.

At the execution layer, adaptive motion planning algorithms generate smooth and predictable trajectories. Predictability is essential for human comfort; sudden or erratic movements can increase anxiety and reduce trust. The architecture therefore incorporates human-aware path planning that respects personal space and anticipates human motion. By combining predictive modeling with real-time feedback control, the robot maintains both responsiveness and stability.
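Human-aware path planning is often expressed as a cost that trades path length against intrusion into a person's comfort zone. The following sketch assumes a circular comfort radius and a linear penalty, both hypothetical choices, and simply picks the cheaper of two hand-written candidate paths instead of running a full planner.

```python
import math


def path_cost(path, human, comfort_radius=1.0, weight=5.0):
    """Path length plus a penalty for waypoints inside the comfort radius."""
    length = sum(math.dist(a, b) for a, b in zip(path, path[1:]))
    intrusion = sum(max(0.0, comfort_radius - math.dist(p, human)) for p in path)
    return length + weight * intrusion


# Hypothetical 2D workspace in metres, with a worker standing at (1, 0).
human = (1.0, 0.0)
direct = [(0.0, 0.0), (1.0, 0.2), (2.0, 0.0)]
detour = [(0.0, 0.0), (1.0, 1.5), (2.0, 0.0)]
chosen = min([direct, detour], key=lambda p: path_cost(p, human))
```

With the penalty active, the longer detour wins because the direct path passes within 0.2 m of the worker; setting `weight=0.0` recovers plain shortest-path behavior.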

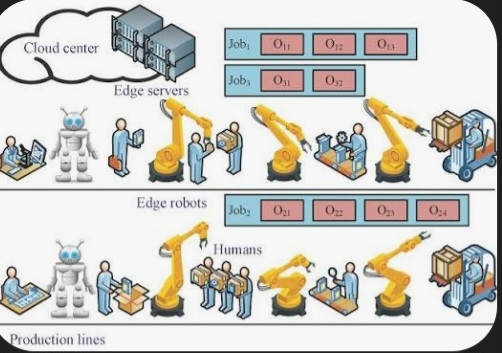

Integration with industrial control systems is another key feature. The architecture connects with Manufacturing Execution Systems (MES) and IoT platforms to coordinate workflows and share operational data. This connectivity ensures that collaborative tasks align with production schedules and quality standards. Data analytics modules monitor performance metrics, identifying bottlenecks and suggesting process improvements.
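As a small illustration of the analytics step, the sketch below flags the station with the highest average cycle time as the likely bottleneck. The station names and timings are invented for the example, and the input is assumed to be a plain dictionary already pulled from an MES or IoT platform; no particular vendor API is implied.

```python
from statistics import mean


def find_bottleneck(cycle_times):
    """Return the station with the highest mean cycle time, plus all means.

    cycle_times maps a station name to a list of cycle durations in seconds.
    """
    averages = {station: mean(times) for station, times in cycle_times.items()}
    return max(averages, key=averages.get), averages


# Hypothetical data for one collaborative cell.
samples = {
    "handover": [4.0, 5.0],
    "fastening": [9.0, 11.0],
    "inspection": [6.0, 6.0],
}
station, averages = find_bottleneck(samples)
```

A mean is the simplest possible metric here; queue lengths or waiting times per station would usually give a more reliable picture of where work actually stalls.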

Ethical considerations also influence the design of socially adaptive cognitive architectures. Privacy-preserving data handling mechanisms protect worker information collected through sensors. Transparent decision-making processes allow operators to understand how and why the robot acts in specific ways. Clear accountability structures support compliance with industrial safety regulations and international standards such as ISO 10218 and ISO/TS 15066.

In conclusion, a socially adaptive cognitive architecture for human-robot collaboration in industrial settings integrates perception, reasoning, learning, safety, communication, and execution into a cohesive system. By enabling robots to interpret human intent, adapt to social cues, and maintain high safety standards, this architecture supports efficient, trustworthy, and human-centered industrial automation. As technology advances, such systems will become fundamental to achieving resilient and intelligent manufacturing ecosystems.