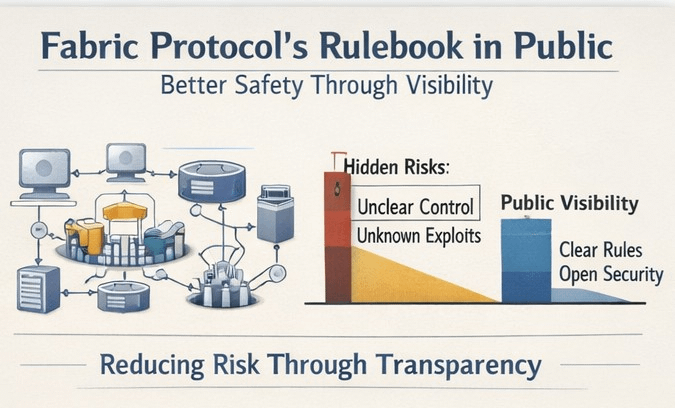

I’ve been rethinking what “safety” means when software is starting to coordinate work in the real world. My old instinct was that the safest systems were the quiet ones. Lately I’m leaning the other way: if the rules are going to govern machines and money, I want the rulebook out in the open where people can read it.

That’s the frame I use for Fabric Protocol and its ROBO token. Fabric describes a global, open network to build, govern, own, and evolve general-purpose robots, with coordination and oversight happening through public ledgers. The Fabric Foundation leans into the idea that machine behavior should be predictable and observable, and that key infrastructure—identity, task allocation, payments—should be built as a public good rather than as a private choke point. For me, that’s the core value of visibility: not that everything becomes safe, but that the important assumptions stop being hidden.

The whitepaper makes this feel less abstract. It describes the Fabric Protocol as a decentralized way to build and evolve “ROBO1,” a general-purpose robot, and it contrasts public-ledger coordination with closed datasets and opaque control. It also sketches a modular view of capability, where specific skills can be added or removed via “skill chips.” I don’t read that as a promise that robotics will neatly align with cryptography, but I do read it as an attempt to put accountability on rails that outsiders can inspect.

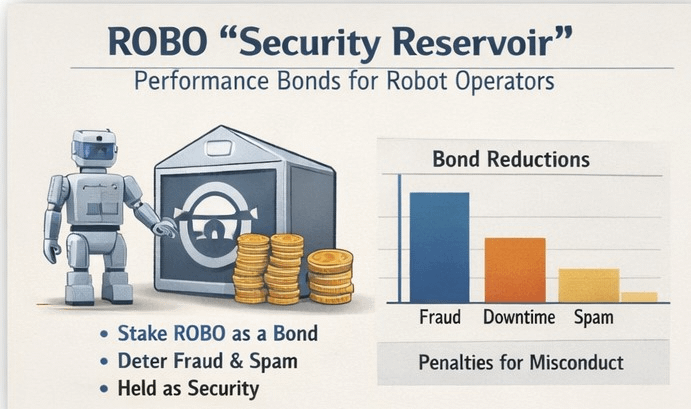

ROBO matters because it’s positioned as a tool for making operators legible to the network, not just a medium of exchange. The clearest example is the work-bond design. Fabric says registered robot operators post a refundable performance bond in ROBO to register hardware and provide services, and it calls this bond a “Security Reservoir.” The point is straightforward: make spam and fake identities expensive, and keep operators responsible if they misbehave. The document also tries to reduce practical friction by denominating the reservoir in a stable unit and settling in ROBO via an on-chain oracle, and it states plainly that bonds are risk-management deposits: they can be reduced for misconduct such as fraud, spam, or downtime, and they do not pay interest or passive returns. I find that explicitness reassuring, because it turns “trust us” into visible incentives and penalties.
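To make the bond mechanics concrete, here is a minimal Python sketch under stated assumptions. It is not Fabric’s implementation: the names (`required_robo`, `OperatorBond`, `SLASHABLE_REASONS`), the rounding rule, and proportional slashing are all hypothetical. It only illustrates the shape described above: a bond sized in a stable unit, converted to ROBO at an oracle price, reduced for listed misconduct, and never credited with interest.

```python
from dataclasses import dataclass
from decimal import Decimal, ROUND_UP

# Misconduct categories named in the post; treating them as a fixed set is an assumption.
SLASHABLE_REASONS = {"fraud", "spam", "downtime"}


def required_robo(bond_stable: Decimal, robo_price_stable: Decimal) -> Decimal:
    """Convert a bond sized in a stable unit into ROBO at a (hypothetical) oracle price."""
    if robo_price_stable <= 0:
        raise ValueError("oracle price must be positive")
    # Round up so the posted ROBO never falls short of the stable-unit target.
    return (bond_stable / robo_price_stable).quantize(Decimal("0.000001"), rounding=ROUND_UP)


@dataclass
class OperatorBond:
    operator_id: str
    balance_robo: Decimal
    refunded: bool = False

    def slash(self, reason: str, fraction: Decimal) -> Decimal:
        """Reduce the remaining bond by a fraction for a listed misconduct; return the penalty."""
        if self.refunded:
            raise RuntimeError("bond already refunded")
        if reason not in SLASHABLE_REASONS:
            raise ValueError(f"not a slashable reason: {reason!r}")
        if not Decimal("0") < fraction <= Decimal("1"):
            raise ValueError("fraction must be in (0, 1]")
        penalty = self.balance_robo * fraction
        self.balance_robo -= penalty
        return penalty

    def refund(self) -> Decimal:
        """Return whatever is left. There is deliberately no interest or yield accrual."""
        if self.refunded:
            raise RuntimeError("bond already refunded")
        amount, self.balance_robo, self.refunded = self.balance_robo, Decimal("0"), True
        return amount


if __name__ == "__main__":
    # Hypothetical numbers: a 1000-unit bond with ROBO quoted at 0.25 stable units.
    posted = required_robo(Decimal("1000"), Decimal("0.25"))
    bond = OperatorBond("operator-1", posted)
    bond.slash("downtime", Decimal("0.10"))
    print(posted, bond.refund())
```

Rounding up in the conversion is just one possible choice; a real deployment would also need rules for how often the oracle updates and what happens when the ROBO price moves after the bond is posted.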

Governance is the other place where ROBO becomes part of the safety story. Fabric proposes a vote-escrow model, veROBO, where holders lock ROBO to obtain on-chain voting and signaling rights. The text ties that signaling to operational questions, including protocol parameters and verification and slashing rules, and it limits the scope of those rights: they are procedural, and they confer neither control over any legal entity nor a claim on revenues or distributions. That boundary might get tested over time, but I’d rather have it stated than left implicit.
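The post doesn’t say how veROBO voting weight is calculated, so the sketch below borrows the linear-decay rule popularized by Curve’s veCRV (weight proportional to the amount locked times the remaining lock time) purely as an assumption. The four-year maximum lock, the topic names, and the functions are hypothetical. The part that maps to the text is the scope check: signals are accepted only for procedural topics such as protocol parameters, verification rules, and slashing rules.

```python
from dataclasses import dataclass

# Hypothetical maximum lock length (4 years, borrowed from veCRV); not from Fabric's text.
MAX_LOCK_SECONDS = 4 * 365 * 24 * 3600

# Procedural topics the post says veROBO signaling covers. Anything else,
# such as revenue distribution, is rejected as out of scope.
GOVERNABLE_TOPICS = {"protocol_parameters", "verification_rules", "slashing_rules"}


@dataclass(frozen=True)
class Lock:
    amount_robo: int  # ROBO locked (whole units, for simplicity)
    unlock_time: int  # Unix timestamp when the lock expires


def voting_weight(lock: Lock, now: int) -> float:
    """Weight decays linearly to zero as the unlock time approaches (assumed rule)."""
    remaining = min(max(0, lock.unlock_time - now), MAX_LOCK_SECONDS)
    return lock.amount_robo * remaining / MAX_LOCK_SECONDS


def cast_signal(lock: Lock, topic: str, now: int) -> float:
    """Return the weight applied to a signal, or raise if it is out of scope or expired."""
    if topic not in GOVERNABLE_TOPICS:
        raise PermissionError(f"{topic!r} is outside the procedural governance scope")
    weight = voting_weight(lock, now)
    if weight == 0:
        raise PermissionError("lock has expired; no voting weight remains")
    return weight


if __name__ == "__main__":
    now = 1_700_000_000
    lock = Lock(amount_robo=1000, unlock_time=now + MAX_LOCK_SECONDS // 2)
    print(cast_signal(lock, "slashing_rules", now))  # 500.0
```

Capping the remaining time at the maximum lock means locking for longer than the cap buys no extra weight; whether veROBO behaves this way is an open assumption.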

I think this “public rulebook” approach is getting more attention now because robots and autonomous agents are moving out of controlled settings and into places where mistakes can hurt people, and because AI makes it easier for machines to plan and act through software. In that world, the weak points are often governance and operations, not just a single bad line of code. Public visibility doesn’t solve those problems, but it does make it harder for hidden power to masquerade as safety.

I’m not blind to the tradeoffs. Publishing rules can teach attackers what to target, and public documents can drift away from what deployed code actually does. What helps me is to treat the rulebook as a starting point for verification: read it, test it, and watch how changes are proposed. If Fabric’s bet is that robots will become economic actors, then ROBO is where that bet becomes checkable—bonds that can be slashed, settlement that can be traced, governance scope that can be debated in public. It’s not perfection, but it’s a posture I can evaluate today.
