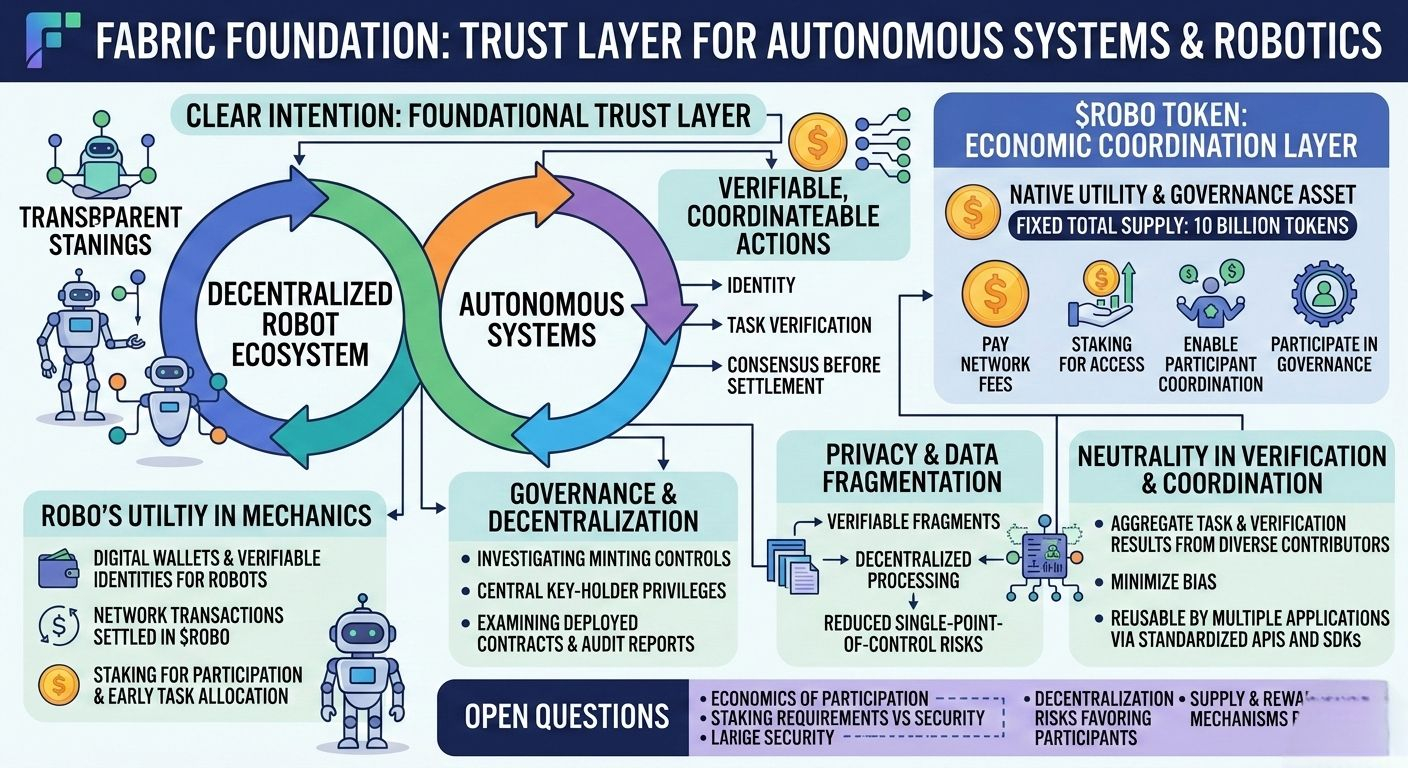

As I explored deeper into the world of Fabric Foundation, what stood out to me was not just the buzz around it, but the clear intention to build a foundational trust layer for autonomous systems and robotics. Rather than focusing purely on hype, the narrative centers on making robotic and AI-driven actions verifiable and coordinated in a decentralized way, with transparent standards for identity, task verification, and consensus before final settlement. This approach resonates strongly with both blockchain builders and advanced AI ecosystems, aiming for authenticated, auditable outcomes rather than opaque black-box outputs.

At the heart of this stack is the $ROBO token, the native utility and governance asset of the network. It has a fixed total supply of 10 billion tokens and practical roles within the infrastructure such as paying network fees, staking to access protocol features, enabling participant coordination, and participating in governance decisions about how the protocol evolves. Unlike meme tokens without functional purpose, $ROBO is explicitly designed as the economic coordination layer that aligns incentives between machines, developers, and human participants in a decentralized robot ecosystem.

$ROBO’s utility isn’t speculative in design; it’s woven into the mechanics of the protocol itself. Robots and autonomous agents need digital wallets and verifiable identities to exist in on-chain economies, since machines can’t open bank accounts or hold passports like humans do. On Fabric, all network transactions are settled in $ROBO, whether it’s registering a robot’s identity, validating a task, or paying for machine-to-machine services. Protocol participation and early task allocation privileges come from staking $ROBO, which helps bootstrap network activity while aligning stakeholder interest with successful deployment.

As with any ambitious decentralized system, governance and decentralization matter greatly. It is crucial to check whether the token contract contains features like minting controls or central key-holder privileges, because these could increase centralization risk. Documentation on such mechanisms is still emerging, so examining the deployed contracts and audit reports directly is essential to understand how flexible or rigid the governance model really is.
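To make that kind of contract review a bit more concrete, here is a minimal sketch of a first-pass check: scanning a contract's ABI for state-changing functions whose names hint at privileged controls. The ABI below is a made-up sample for illustration, not the actual $ROBO contract, and the keyword list is my own assumption. A name-based scan like this only flags candidates for closer reading; it does not replace reviewing the source code or an audit report.

```python
import json

# Hypothetical ABI fragment for illustration only; NOT the real $ROBO contract ABI.
SAMPLE_ABI = json.loads("""
[
  {"type": "function", "name": "transfer", "stateMutability": "nonpayable"},
  {"type": "function", "name": "balanceOf", "stateMutability": "view"},
  {"type": "function", "name": "mint", "stateMutability": "nonpayable"},
  {"type": "function", "name": "owner", "stateMutability": "view"},
  {"type": "function", "name": "transferOwnership", "stateMutability": "nonpayable"},
  {"type": "event", "name": "Transfer"}
]
""")

# Assumed keyword list: name fragments that often indicate privileged controls.
PRIVILEGED_HINTS = ("mint", "pause", "blacklist", "upgrade", "ownership")


def find_privileged_functions(abi):
    """Return sorted names of state-changing functions whose names match a hint."""
    flagged = []
    for entry in abi:
        if entry.get("type") != "function":
            continue  # skip events, constructors, etc.
        if entry.get("stateMutability") in ("view", "pure"):
            continue  # read-only functions cannot change supply or ownership
        name = entry.get("name", "")
        if any(hint in name.lower() for hint in PRIVILEGED_HINTS):
            flagged.append(name)
    return sorted(flagged)


if __name__ == "__main__":
    print(find_privileged_functions(SAMPLE_ABI))
```

Any function flagged this way deserves a follow-up question: who is allowed to call it, and can that permission be revoked or handed to governance?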

Privacy and data fragmentation are also parts of Fabric’s vision. Since robotic and AI actions are broken down into verifiable fragments and processed in a decentralized manner, no single entity holds the entirety of sensitive operational data. This reduces single-point-of-control risks and supports a more secure, distributed verification process, even as multiple AI agents enter the ecosystem.

Neutrality in verification and coordination is another pillar. Fabric aims to minimize bias by aggregating task and verification results from diverse contributors across the network. This aggregated result can then be reused by multiple applications via standardized APIs and SDKs, so developers don’t need to redo verification processes for every new use case.
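As a rough illustration of what aggregating results from diverse contributors could look like, here is a small sketch using a simple quorum-based majority vote. This is my own simplified stand-in, not Fabric's documented consensus mechanism; the function name, the verifier IDs, and the 60% quorum are all assumptions made for the example.

```python
from collections import Counter


def aggregate_verdicts(verdicts, quorum=0.6):
    """Combine per-verifier verdicts into one result.

    verdicts: dict mapping a (hypothetical) verifier ID to its verdict string.
    quorum:   assumed minimum share of verifiers that must agree.
    Returns the agreed verdict, or None when no verdict reaches the quorum.
    """
    if not verdicts:
        return None
    counts = Counter(verdicts.values())
    top_verdict, top_count = counts.most_common(1)[0]
    if top_count / len(verdicts) >= quorum:
        return top_verdict
    return None  # no sufficiently broad agreement


if __name__ == "__main__":
    reports = {"verifier-a": "pass", "verifier-b": "pass", "verifier-c": "fail"}
    print(aggregate_verdicts(reports))
```

The point of the sketch is the reuse: once a result like this is agreed, applications can read the single aggregated verdict instead of each re-running verification themselves.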

There are still open questions around the economics of participation: how low the staking requirements can go while remaining secure, how decentralization might inadvertently favor larger participants, and how supply and reward mechanisms will balance long-term participation incentives. These unanswered questions will ultimately be shaped by real-world network growth, usage patterns, and governance outcomes as Fabric’s ecosystem evolves.

@Fabric Foundation $ROBO #ROBO