When I hear people talk about regulation in robotics, the tone usually sounds defensive, as if rules were obstacles that innovation has to move around. My reaction is different: not excitement, but recognition. The real barrier to large-scale robotics adoption isn’t capability anymore; it’s coordination. Machines can move, see, calculate and learn. What they struggle with is operating inside systems that require accountability, and accountability doesn’t emerge automatically from better hardware.

Most robotics conversations still treat regulation as an external pressure: build the robot first, worry about compliance later. But the moment robots begin to interact with real economies (factories, logistics networks, public infrastructure), that approach breaks down. The question stops being “can the robot do the job?” and becomes “who is responsible when it does?”

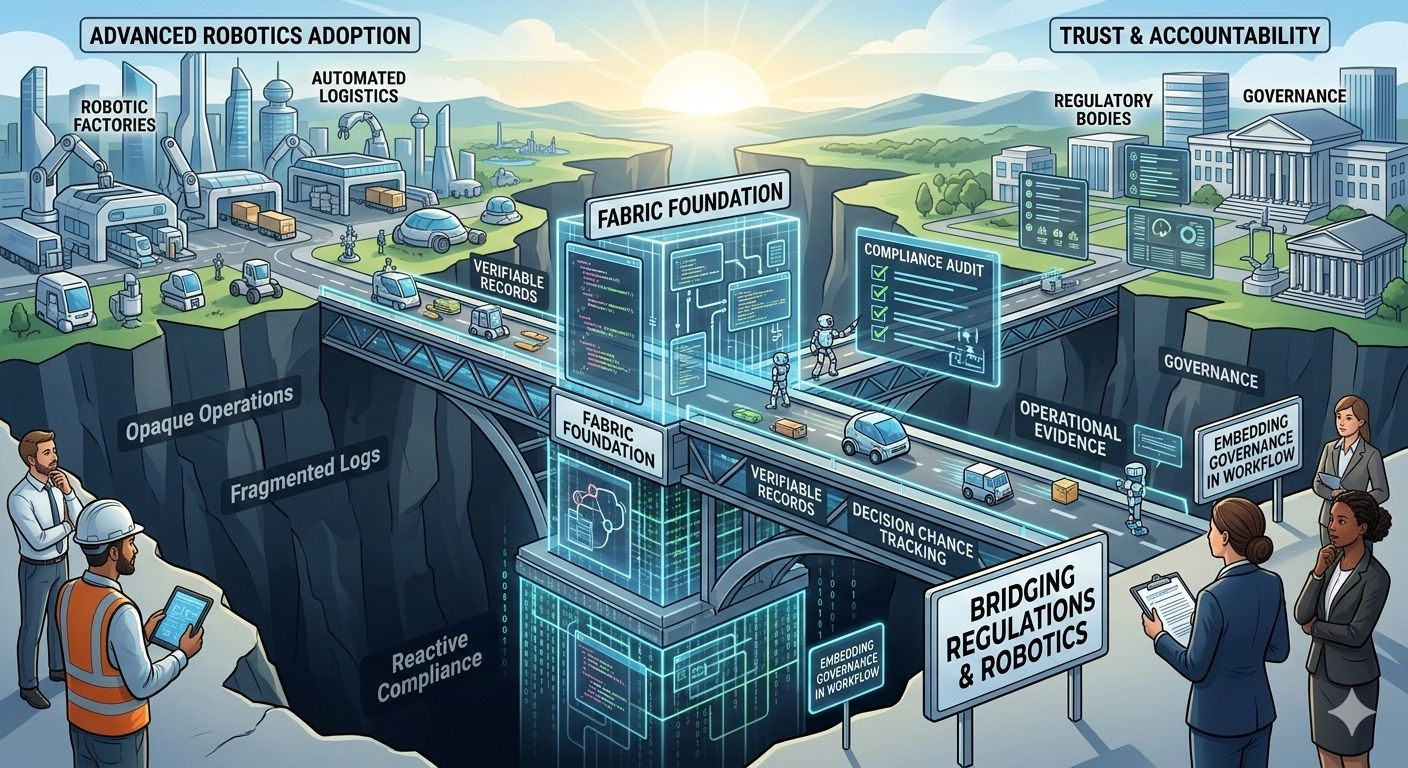

That’s where the design direction around the Fabric Foundation becomes interesting, not because it’s building robots itself, but because it’s trying to structure how robots, data and governance interact from the start.

In the traditional model, robots exist inside private silos. A company deploys machines, collects data and manages compliance internally. If something goes wrong, accountability traces back through corporate reporting systems, internal logs and whatever documentation happens to exist. That works in controlled environments, but it doesn’t scale well once machines start operating across organizations or jurisdictions.

The problem isn’t just technical; it’s structural. If a robot performs a task that involves multiple data sources, multiple operators and multiple AI models, verifying what actually happened becomes complicated very quickly. Who trained the model? Which dataset influenced the decision? Which software version executed the action? These questions matter not only for debugging systems but also for regulators trying to determine responsibility. Most infrastructure today doesn’t record that chain of events in a way that’s independently verifiable.

Fabric’s approach flips that assumption. Instead of treating governance as something added after deployment, the system attempts to embed verifiability directly into the workflow of machines. Computation, data inputs and outcomes can be recorded and coordinated through a shared infrastructure layer. The goal isn’t to control robots from a central authority, but to make their activity legible to everyone who needs to trust it.
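To make “independently verifiable” a bit more concrete, here is a minimal sketch of one common building block: an append-only, hash-chained log of task records. This is not Fabric’s actual design; `TaskRecord`, `ProvenanceLog` and every field name are hypothetical, chosen only to capture the questions above (which model, which dataset, which software version). Each entry commits to the hash of the one before it, so quietly editing an old record breaks verification for anyone holding a copy.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, field


@dataclass(frozen=True)
class TaskRecord:
    # Hypothetical fields answering "who, with what model/data/software, did what"
    robot_id: str
    operator: str
    model_id: str
    dataset_id: str
    software_version: str
    action: str
    outcome: str


GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _entry_hash(prev_hash: str, record: dict) -> str:
    # Canonical JSON (sorted keys) so every verifier hashes identical bytes
    payload = json.dumps(record, sort_keys=True)
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


@dataclass
class ProvenanceLog:
    """Append-only log where each entry commits to its predecessor's hash."""
    entries: list = field(default_factory=list)

    def append(self, record: TaskRecord) -> str:
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS
        rec = asdict(record)
        h = _entry_hash(prev_hash, rec)
        self.entries.append({"record": rec, "prev": prev_hash, "hash": h})
        return h

    def verify(self) -> bool:
        # Recompute every hash; any edited record or broken link fails the check
        prev_hash = GENESIS
        for entry in self.entries:
            if entry["prev"] != prev_hash:
                return False
            if entry["hash"] != _entry_hash(prev_hash, entry["record"]):
                return False
            prev_hash = entry["hash"]
        return True


log = ProvenanceLog()
log.append(TaskRecord("arm-07", "example-operator", "grasp-model-v4",
                      "warehouse-set-12", "2.3.1", "pick:SKU-881", "success"))
log.append(TaskRecord("arm-07", "example-operator", "grasp-model-v4",
                      "warehouse-set-12", "2.3.1", "place:bin-4", "success"))
print(log.verify())  # True

# Rewriting history (here, the software version) breaks the chain
log.entries[0]["record"]["software_version"] = "2.3.0"
print(log.verify())  # False
```

A hash chain on its own only makes tampering detectable. A shared layer of the kind described here would presumably also need signatures from each operator and copies held by multiple parties, so that no single keeper can rewrite the whole chain and recompute every hash.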

Once you do that, regulation starts looking less like an obstacle and more like a coordination layer, because regulators aren’t actually asking for control over machines. What they want is evidence: evidence that safety constraints were followed, evidence that decisions can be traced, evidence that systems behave within defined boundaries. When that evidence lives in fragmented internal logs, oversight becomes slow and adversarial. When it lives in verifiable records, oversight becomes procedural. That difference matters more than people realize.
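What “procedural” oversight could look like in practice: once a trustworthy record exists, checking whether a machine stayed within its declared boundaries becomes a query anyone can rerun, rather than an investigation. The sketch below is a hypothetical illustration, not part of any Fabric specification; `Boundary`, `Observation` and `audit` are invented names, and it assumes the observations were already pulled from a verified record.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Boundary:
    # Hypothetical declared operating limits for one robot or task
    max_speed_mps: float
    allowed_zones: frozenset


@dataclass(frozen=True)
class Observation:
    # Hypothetical telemetry entry, assumed to come from a verified record
    timestamp: float
    speed_mps: float
    zone: str


def audit(observations, boundary):
    """Return human-readable violations; an empty list means within bounds."""
    violations = []
    for obs in observations:
        if obs.speed_mps > boundary.max_speed_mps:
            violations.append(
                f"t={obs.timestamp}: speed {obs.speed_mps} m/s exceeds {boundary.max_speed_mps}"
            )
        if obs.zone not in boundary.allowed_zones:
            violations.append(f"t={obs.timestamp}: entered restricted zone {obs.zone!r}")
    return violations


limits = Boundary(max_speed_mps=1.5, allowed_zones=frozenset({"aisle-3", "dock-1"}))
trace = [
    Observation(0.0, 1.2, "aisle-3"),
    Observation(1.0, 1.8, "aisle-3"),
    Observation(2.0, 0.9, "office"),
]
for line in audit(trace, limits):
    print(line)
```

The point of the design is that the regulator and the operator run the same check over the same data, so disagreement shifts from “what happened?” to “were these the right limits?”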

In robotics today, compliance is often reactive. A machine fails, an incident happens, and investigators then reconstruct what occurred from incomplete information. The process is expensive, slow and sometimes inconclusive. Embedding verifiable computation into the infrastructure changes the sequence. Instead of reconstructing events after the fact, systems can demonstrate their behavior as it happens. But this also shifts responsibility in subtle ways.

Once machines operate within verifiable frameworks, operators can no longer rely on ambiguity. Every input, model execution and decision pathway becomes part of an observable record. That transparency strengthens trust, but it also raises the bar for everyone involved: developers, operators and the organizations deploying the robots. That’s where the real balancing act begins.

Too much rigidity can slow innovation just as easily as too little oversight can erode trust. Systems that enforce compliance mechanically may struggle to adapt to new types of machines or new regulatory environments. On the other hand, systems that leave everything flexible risk becoming opaque again. The challenge isn’t simply building infrastructure; it’s building infrastructure that can evolve alongside both robotics technology and regulatory expectations.

Another layer people often overlook is interoperability. Robots rarely operate alone anymore. They interact with AI services, supply chain platforms, industrial software and, increasingly, other autonomous agents. Each system carries its own policies, permissions and risk thresholds. Coordinating all of that requires more than communication protocols; it requires shared rules about how machine work is verified and governed. Without that shared layer, collaboration between autonomous systems becomes fragile.

This is why the regulatory conversation around robotics is slowly shifting away from individual devices and toward operational frameworks. Regulators care less about a specific robot model and more about whether the system surrounding that robot can reliably demonstrate compliance. In other words, governance is moving from hardware certification to process verification.

Infrastructure designed around verifiable computation fits naturally with that direction. Of course, none of this eliminates risk. A verifiable system can still suffer failures, misaligned incentives or flawed inputs. But it changes where trust lives. Instead of trusting individual organizations to report accurately, participants trust the infrastructure that records and coordinates machine activity.

That’s a subtle but important shift, because once trust moves into infrastructure, ecosystems begin to form around it. Developers build tools that assume verifiable execution. Companies deploy robots knowing that compliance evidence is recorded automatically. Regulators evaluate behavior through structured data rather than fragmented reports.

Over time, that alignment reduces friction between innovation and oversight. The interesting part is that this doesn’t look dramatic from the outside. There’s no single moment where robotics suddenly becomes “regulated correctly.” Instead, the shift happens quietly, as systems that embed accountability become easier to operate than systems that don’t. And when that happens, governance stops feeling like an external constraint and starts functioning as part of the operating environment.

So the real question isn’t whether robotics will face regulation. That outcome is inevitable once machines move into real-world economies. The more interesting question is which infrastructure layers make that regulation workable without slowing progress. Bridging robotics and regulation isn’t about writing stricter rules; it’s about designing systems where proving responsible behavior is easier than hiding irresponsible behavior.

And the long-term success of approaches like the one emerging around Fabric will likely depend on a simple test: when autonomous machines become common across industries, will their actions be transparent enough for societies to trust them without constantly intervening? That’s the bridge that matters.

@Fabric Foundation $ROBO #ROBO