So I’ve been staring at charts all night and somehow ended up reading about this decentralized verification idea for AI… and now my brain won’t shut up about it. It’s one of those concepts where at first you roll your eyes because crypto loves smashing buzzwords together. AI plus blockchain plus “trust layer” or whatever. You’ve seen it before.

But then you keep reading… and annoyingly it kind of makes sense.

The weird part is that the problem they’re pointing at is real. AI just makes stuff up sometimes. And not a little mistake either; full-on confident nonsense. I’ve seen it happen. Ask for sources and suddenly it’s inventing research papers like it’s writing fan fiction. And people still treat the answers like gospel.

That part actually bothers me more than I expected.

Because once these AI tools start getting plugged into serious things (finance tools, automated trading, legal stuff, whatever), you can’t just have a system confidently hallucinating data. That’s like letting a drunk accountant file your taxes. It might work sometimes, but eventually something explodes.

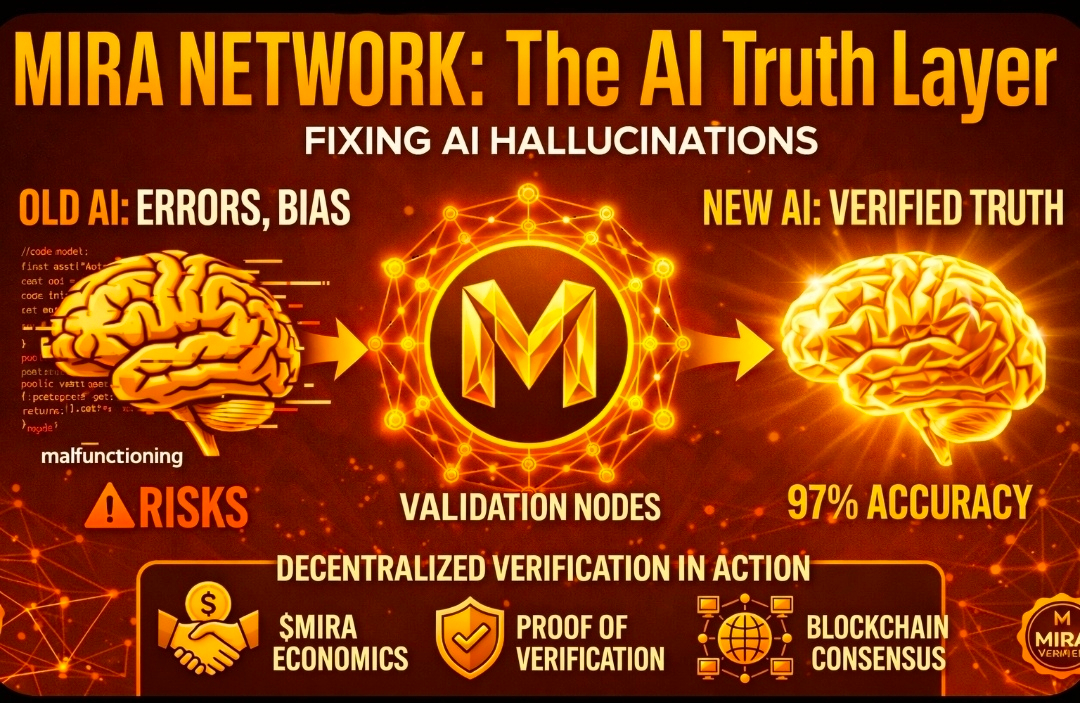

So the idea here is basically… don’t trust the AI by itself. Instead, you run the output through a decentralized network that checks it. Multiple validators: maybe other AIs, maybe humans, I don’t know exactly. If enough of them agree the answer isn’t garbage, it gets marked as verified.
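If I try to picture the “enough of them agree” part in code, it’s something like this toy Python sketch. To be clear, this is my own guess, not how Mira or anyone else actually does it: `verify_output`, the two-thirds threshold, and the toy validators are all made up for illustration.

```python
from dataclasses import dataclass
from fractions import Fraction
from typing import Callable, List

# Hypothetical: a "validator" is anything (another model, a human, a script)
# that looks at an AI output and returns True if it thinks the output is OK.
Validator = Callable[[str], bool]


@dataclass
class Verdict:
    approvals: int
    total: int
    verified: bool


def verify_output(output: str, validators: List[Validator],
                  threshold: Fraction = Fraction(2, 3)) -> Verdict:
    """Mark the output verified only if at least `threshold` of validators approve."""
    if not validators:
        raise ValueError("need at least one validator")
    approvals = sum(1 for check in validators if check(output))
    total = len(validators)
    # Fraction keeps the comparison exact (no float rounding at exactly 2/3).
    return Verdict(approvals, total, Fraction(approvals, total) >= threshold)


# Toy validators standing in for "other AIs, maybe humans".
def not_empty(text: str) -> bool:
    return bool(text.strip())


def short_enough(text: str) -> bool:
    return len(text) < 500


def no_future_paper(text: str) -> bool:
    return "(2031)" not in text  # crude check for an invented citation


verdict = verify_output("See Smith et al. (2031) for proof.",
                        [not_empty, short_enough, no_future_paper])
print(verdict)  # Verdict(approvals=2, total=3, verified=True)
```

Notice the fake 2031 citation still gets “verified,” because two dumb checks outvoted the one check that caught it. Which is kind of the whole worry: the network is only as good as its validators.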

Sounds nice on paper.

Also sounds like the kind of thing crypto whitepapers love to promise and never actually deliver.

Still though… I keep thinking about it.

Because crypto has been wandering around for years looking for something useful to do besides speculation. DeFi was cool for a while. NFTs… yeah, we saw how that went. Half the industry feels like a casino with nicer branding.

But verification? Recording truth across a network? That’s actually what blockchains were kind of designed for.

I mean, that’s basically what Bitcoin does. A bunch of independent machines agree that a transaction happened, and nobody gets to secretly change the record later. Applying that model to AI outputs isn’t totally crazy.

But then my brain immediately goes wait… hold on.

AI systems produce ridiculous amounts of output. Like constant streams of text, answers, code, images. If every single response had to go through some decentralized validator network, the whole thing would probably slow down to dial-up internet speed. Nobody’s waiting thirty seconds for their chatbot response just so the blockchain can approve it.

So the workaround people mention is selective verification: only checking the important outputs. Financial stuff, research stuff, anything high-stakes. That helps… maybe.
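Selective verification is basically a routing decision: cheap stuff takes the fast path, risky stuff waits for the slow network. Here’s a toy sketch of that idea. The keyword list, `route`, and `slow_network_check` are all hypothetical stand-ins I made up; figuring out what counts as “high stakes” is the genuinely hard part, and string matching is definitely not the answer.

```python
from typing import Callable

# Hypothetical keyword list. A real system would need a much smarter
# "is this high stakes?" classifier than string matching.
HIGH_STAKES_WORDS = ("trade", "invoice", "contract", "diagnosis", "citation")


def is_high_stakes(prompt: str) -> bool:
    lowered = prompt.lower()
    return any(word in lowered for word in HIGH_STAKES_WORDS)


def route(prompt: str, answer: str, verify: Callable[[str], bool]) -> str:
    """Only high-stakes answers pay the (slow) verification cost."""
    if not is_high_stakes(prompt):
        return "unchecked"  # fast path: no network round trip, no waiting
    return "verified" if verify(answer) else "rejected"


def slow_network_check(answer: str) -> bool:
    # Stand-in for the decentralized validator network.
    return "guaranteed profit" not in answer.lower()


print(route("Give me a fun fact about cats", "Cats sleep a lot.", slow_network_check))
# unchecked
print(route("Should I place this trade?", "Guaranteed profit, go all in!", slow_network_check))
# rejected
```

The messy part shows up right away: anything the classifier misses as “low stakes” never gets checked at all.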

Still feels messy though.

And of course there’s a token involved, because this is crypto and there’s always a token. Validators get paid for verifying outputs. If they cheat, they lose their stake. Incentives, blah blah.
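The stake-and-slash loop itself is simple enough to sketch. This is a toy model under my own assumptions (majority vote as “consensus,” a flat reward of 1, a 50% slash); `StakeLedger` and those numbers are made up, not any project’s real tokenomics.

```python
from dataclasses import dataclass, field
from typing import Dict


@dataclass
class StakeLedger:
    reward: int = 1           # hypothetical payout per vote that matches consensus
    slash_percent: int = 50   # hypothetical share of stake burned for voting against it
    stakes: Dict[str, int] = field(default_factory=dict)

    def deposit(self, validator: str, amount: int) -> None:
        self.stakes[validator] = self.stakes.get(validator, 0) + amount

    def settle(self, votes: Dict[str, bool]) -> bool:
        """Take the majority vote as consensus, pay the agreers, slash the rest."""
        approvals = sum(votes.values())
        consensus = approvals * 2 > len(votes)  # strict majority; a tie counts as "not verified"
        for validator, vote in votes.items():
            # A KeyError here means the validator never staked, which is the point.
            if vote == consensus:
                self.stakes[validator] += self.reward
            else:
                self.stakes[validator] -= self.stakes[validator] * self.slash_percent // 100
        return consensus


ledger = StakeLedger()
for name in ("alice", "bob", "carol"):
    ledger.deposit(name, 100)

print(ledger.settle({"alice": True, "bob": True, "carol": False}))  # True
print(ledger.stakes)  # {'alice': 101, 'bob': 101, 'carol': 50}
```

The catch: carol gets slashed for disagreeing, even if carol was the one who was right. Punishing “disagreed with the majority” isn’t the same as punishing “cheated,” which is part of why I don’t fully trust the incentives slide.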

Part of me thinks that’s clever. Another part of me has seen enough tokenomics charts to know how quickly those incentives turn into speculation mania.

You know how it goes. First it’s “we’re building infrastructure.” Then suddenly everyone’s talking about price predictions and liquidity pools instead of the actual product.

That’s the part that always ruins good ideas.

Another thing that keeps bugging me… AI might end up verifying itself anyway. Seriously. Researchers are already building models that argue with each other or double-check each other’s answers. Like little robot fact-checking debates happening in the background.

If that keeps improving, you might not even need some giant decentralized network for verification. The models themselves might just cross-examine their own outputs.

Or maybe the blockchain thing becomes the audit trail for those checks. I don’t know. My brain keeps flipping back and forth on it.
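The “audit trail” version is the easiest piece to picture: every check gets logged, and each log entry commits to the hash of the one before it, so quietly editing history breaks the chain. Toy sketch below; `append_record`, `chain_is_intact`, and the record layout are my own hypothetical names. Big caveat: nothing here is distributed. It’s one list on one machine, and the part that makes Bitcoin work (lots of independent machines agreeing on the same chain) is exactly what this leaves out.

```python
import hashlib
import json
from typing import Dict, List

GENESIS = "0" * 64  # placeholder "previous hash" for the very first record


def _digest(output: str, verdict: bool, prev: str) -> str:
    body = json.dumps({"output": output, "verdict": verdict, "prev": prev}, sort_keys=True)
    return hashlib.sha256(body.encode()).hexdigest()


def append_record(chain: List[Dict], output: str, verdict: bool) -> None:
    """Each record commits to the previous record's hash, so history can't be quietly edited."""
    prev = chain[-1]["hash"] if chain else GENESIS
    chain.append({"output": output, "verdict": verdict, "prev": prev,
                  "hash": _digest(output, verdict, prev)})


def chain_is_intact(chain: List[Dict]) -> bool:
    prev = GENESIS
    for record in chain:
        if record["prev"] != prev:
            return False
        if record["hash"] != _digest(record["output"], record["verdict"], record["prev"]):
            return False
        prev = record["hash"]
    return True


log: List[Dict] = []
append_record(log, "Cats sleep a lot.", True)
append_record(log, "Smith et al. (2031) proves it.", False)
print(chain_is_intact(log))  # True

log[1]["verdict"] = True  # someone quietly flips a rejection to "verified"
print(chain_is_intact(log))  # False
```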

Also the competition here is insane if you think about it. Big tech companies are pouring billions into making AI more reliable. Better training, retrieval systems, alignment research. They’re not sitting around waiting for some token network to solve the problem for them.

So yeah… I’m interested, but I’m also suspicious. Which is basically my default setting for any crypto project now.

The idea itself is cool though. I can’t deny that.

Because if AI keeps spreading everywhere, and it probably will, there’s going to be a moment where people start asking “how do we know this output is actually correct?”

And if someone already built a system that can verify that across a distributed network… that suddenly becomes very valuable.

Or it becomes another overhyped crypto experiment that fades away quietly.

Honestly both outcomes feel equally possible right now.

Anyway, it’s like 2am here and I’m probably overthinking it. My brain does this thing where one random concept sends me down a five-hour research spiral.

Crypto plus AI plus verification… it’s like catnip for nerds.

We’ll see what actually gets built tho.

Ugh. That’s the real test. Ideas are cheap. Shipping code is hard.

@Mira - Trust Layer of AI #MIRA #mira