One night, I sat reading how Mira describes Actionable Flows, and what held my attention was not the phrase autonomous agents. It was the familiar feeling of someone who has stayed in this market long enough to know how often people confuse what sounds intelligent with what can actually get work done. I have seen plenty of AI products with polished demos that fell apart the moment they entered real operations. Systems that can talk a lot are common. Systems that can reliably finish a concrete task are rare. That gap is the part worth examining.

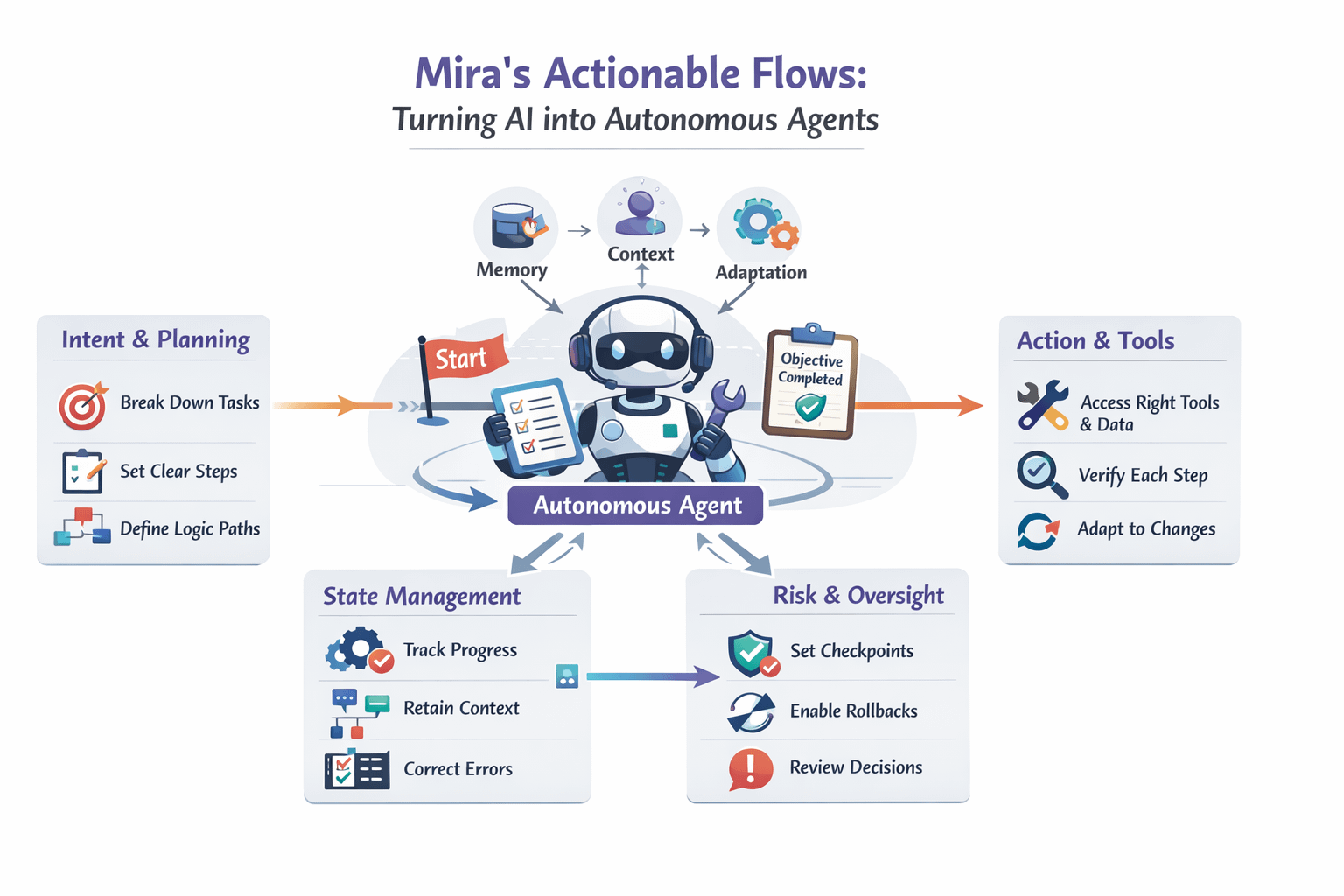

What is strong about Actionable Flows is that it does not treat a workflow as a static chain of commands. It treats a workflow as a process with a goal, with state, with context, and with stopping conditions. A chatbot simply responds. A good flow has to understand which step it is on, what data is missing, which tool should be called, and when control should be handed back to a human. Mira is addressing the hardest part of applied AI, which is turning intention into action that can be repeated in an environment full of constraints.

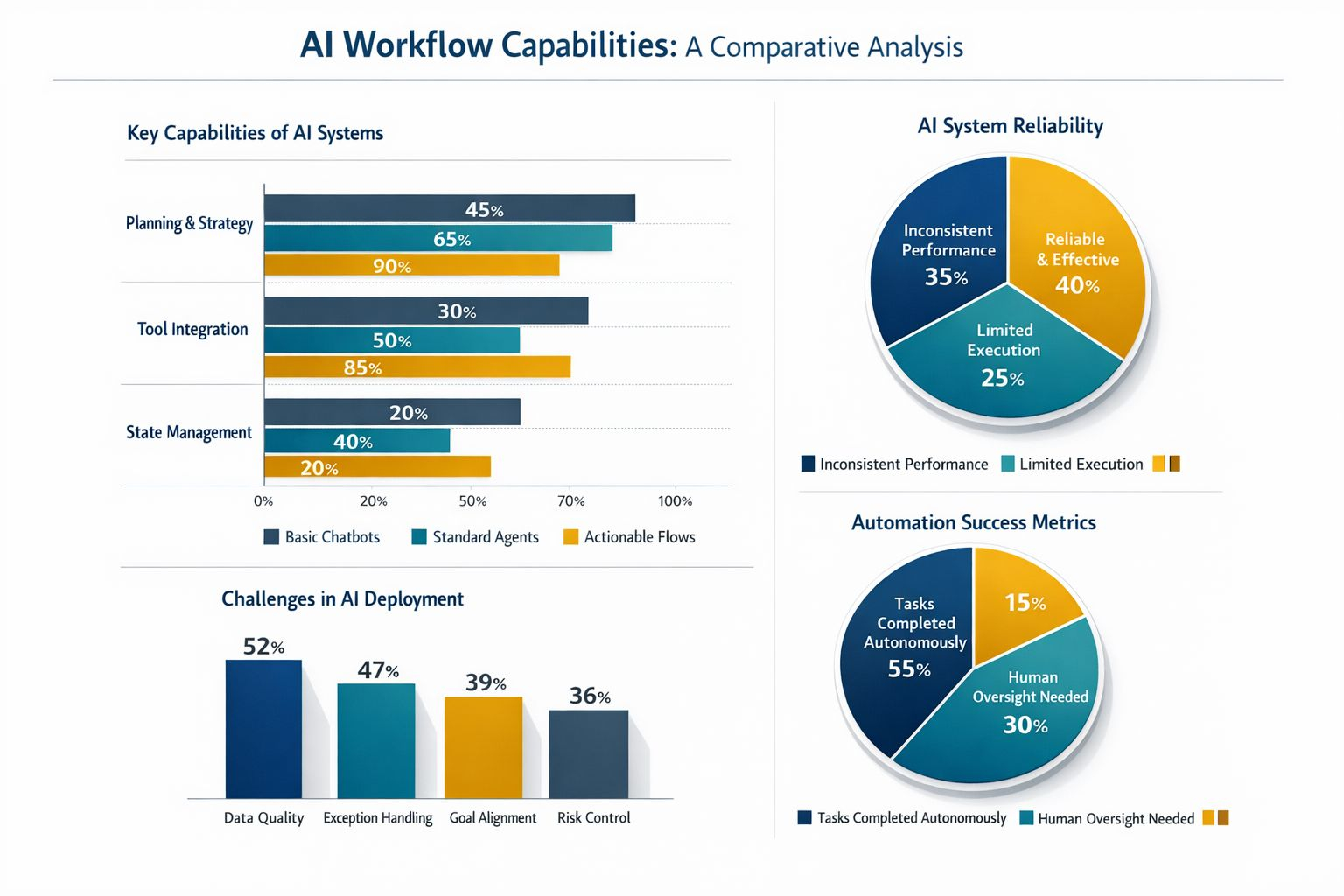

To be honest, the market has used the word agent far too casually. Add memory, add tool calling, add a few logic branches, and many teams are already willing to label something autonomous. I think that is too shallow. A real agent has to preserve its objective across many steps, tolerate incomplete data, handle exceptions, and know how to check itself before moving on. If it cannot do those things, then it is still just a more polished response layer. Mira is worth paying attention to because it places the emphasis on the ability to complete a task, not merely to respond.

Looking more closely, I think Actionable Flows demands three layers of capability. The first is breaking an intent down into a clear plan of action. The second is binding each step to the right tool, the right data, and the right verification criteria. The third is maintaining state throughout the process so the flow does not forget what it has done, what it has not done, and where it is stuck. A slight failure in that third layer is enough for the whole process to drift without the user even noticing. Mira is going directly after that fracture point.
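To make those three layers concrete for myself, I find it helps to sketch them in a few lines of Python. This is not Mira's implementation or API. `Step`, `FlowState`, `run_flow`, and the refund example are all hypothetical names I made up for illustration, and the "tools" are plain functions standing in for real integrations.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, List, Optional


@dataclass
class Step:
    """Layer 2: one planned step bound to a tool, its inputs, and a check."""
    name: str
    tool: Callable[[Dict[str, Any]], Any]   # hypothetical tool: takes the context
    requires: List[str]                     # context keys the step needs
    verify: Callable[[Any], bool]           # verification criterion for the result


@dataclass
class FlowState:
    """Layer 3: what has been done and where the flow is stuck."""
    context: Dict[str, Any]
    done: List[str] = field(default_factory=list)
    stuck_on: Optional[str] = None
    reason: Optional[str] = None


def run_flow(plan: List[Step], state: FlowState) -> FlowState:
    """Run the plan (layer 1) in order, resuming after completed steps."""
    state.stuck_on = None
    state.reason = None
    for step in plan:
        if step.name in state.done:
            continue  # already completed on an earlier run
        missing = [key for key in step.requires if key not in state.context]
        if missing:
            # Stop and report instead of guessing at missing data.
            state.stuck_on, state.reason = step.name, f"missing data: {missing}"
            return state
        result = step.tool(state.context)
        if not step.verify(result):
            state.stuck_on, state.reason = step.name, "verification failed"
            return state
        state.context[step.name] = result
        state.done.append(step.name)
    return state


# Hypothetical plan for the intent "refund this order".
plan = [
    Step(
        name="lookup_order",
        tool=lambda ctx: {"id": ctx["order_id"], "total": 40.0},
        requires=["order_id"],
        verify=lambda order: order["total"] > 0,
    ),
    Step(
        name="compute_refund",
        tool=lambda ctx: ctx["lookup_order"]["total"] * ctx["refund_rate"],
        requires=["lookup_order", "refund_rate"],
        verify=lambda amount: amount >= 0,
    ),
]

# First pass: the refund rate is missing, so the flow stops and says why.
state = run_flow(plan, FlowState(context={"order_id": "A-1"}))
first_pass = (list(state.done), state.stuck_on, state.reason)

# A human supplies the missing value and the flow resumes where it stopped.
state.context["refund_rate"] = 0.5
state = run_flow(plan, state)
```

The point of the sketch is the third layer. Because `done` and `stuck_on` are recorded explicitly, the flow can hand control back to a human with a reason and then resume, rather than silently drifting past a step it could not complete.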

Ironically, the deeper I go into AI, the more I feel the core problem looks a lot like software operations. Real world workflows are never as clean as the diagrams on a slide. They are full of conflicting data, approval steps, unexpected exceptions, and even goals that change halfway through. That is why a useful agent cannot just be good at generating language. It has to survive inside disorder. Mira seems to understand that. The focus is on coordination, validation, and execution under imperfect conditions.

But going after the hard part always comes with a cost. The more autonomy you give a system, the greater the need for control. A flow that can act sounds attractive, but if it calls the wrong tool, pulls the wrong data, or keeps running after its initial assumptions have already failed, the consequences are much bigger than a merely inaccurate answer. Because of that, I see Actionable Flows as a risk governance problem as much as a model problem. Mira is only convincing if it can build checkpoints, confirmations, and the ability to roll back.
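Here is roughly what I mean by checkpoints, confirmations, and rollback, as a minimal Python sketch. Again, this is my own illustration under simple assumptions, not how Mira does it. `run_with_guardrails`, the `confirm` callback, and the `invariant` check are hypothetical, and the rollback only restores an in-memory dictionary. Undoing real side effects, such as a payment that has already been sent, would need compensating actions that this sketch does not attempt.

```python
import copy
from typing import Any, Callable, Dict, List, Tuple

State = Dict[str, Any]
# (name, function that mutates the state, whether a human must confirm first)
Action = Tuple[str, Callable[[State], None], bool]


def run_with_guardrails(
    actions: List[Action],
    state: State,
    confirm: Callable[[str, State], bool],
    invariant: Callable[[State], bool],
) -> List[str]:
    """Apply actions in order, stopping at the first refusal or failure."""
    log: List[str] = []
    for name, apply, needs_confirmation in actions:
        # Confirmation: risky actions wait for an explicit yes.
        if needs_confirmation and not confirm(name, state):
            log.append(f"{name}: declined")
            break
        # Checkpoint: snapshot the state before acting.
        checkpoint = copy.deepcopy(state)
        try:
            apply(state)
            if not invariant(state):
                raise ValueError("invariant violated")
        except Exception as exc:
            # Rollback: restore the snapshot and stop the flow.
            state.clear()
            state.update(checkpoint)
            log.append(f"{name}: rolled back ({exc})")
            break
        log.append(f"{name}: ok")
    return log


def reserve(s: State) -> None:
    s["balance"] -= 30


def payout(s: State) -> None:
    s["balance"] -= 200  # larger than the remaining balance


state = {"balance": 100}
log = run_with_guardrails(
    actions=[("reserve", reserve, False), ("payout", payout, True)],
    state=state,
    confirm=lambda name, s: True,            # stand-in for a human approval step
    invariant=lambda s: s["balance"] >= 0,   # the assumption the flow must keep
)
```

In this run the reservation succeeds, the payout breaks the invariant, and the state goes back to what it was before the payout. The flow stops there instead of continuing on an assumption that has already failed, which is exactly the behavior I would want to see before trusting any system with more autonomy.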

Maybe the most interesting thing about this direction is that it forces people to redefine productivity. Many teams still measure AI by response speed, token counts, or the impression of intelligence in a first interaction. But in actual work, value lies in how many steps humans no longer need to touch, how many errors are stopped before they spread, and how many times a process can run reliably without creating extra burden. Mira is pulling the conversation away from what AI says and toward what AI does.

After many cycles, I no longer get excited easily by big promises. But the lesson from Mira is fairly clear. AI only starts to mature when it leaves the role of answerer and steps into the role of completing work within clear limits. Actionable Flows is worth watching because it forces the whole industry to face a harder question: how can a system be both proactive and verifiable in real operational settings? And when that happens, will we still measure AI by how polished its language is, or by how much responsibility it can actually carry inside each workflow?