@Fabric Foundation If you’ve ever watched a warehouse robot working late in the evening shift, it almost feels routine. The machine rolls down an aisle, lifts a container, drops it somewhere else, and the system quietly notes that the job is finished. A line appears in a database. Inventory adjusts. Nobody thinks much about it.

Inside a single company that record is usually enough.

The same organization owns the robot, the software running it, and the database that logs what happened. If a mistake appears later, engineers pull up the system history. They scroll through timestamps (simple markers showing when each action occurred) and try to reconstruct the sequence. The assumption is fairly straightforward: the record is trustworthy because the company controls the system that created it.

Things begin to wobble a little once robots leave those closed environments.

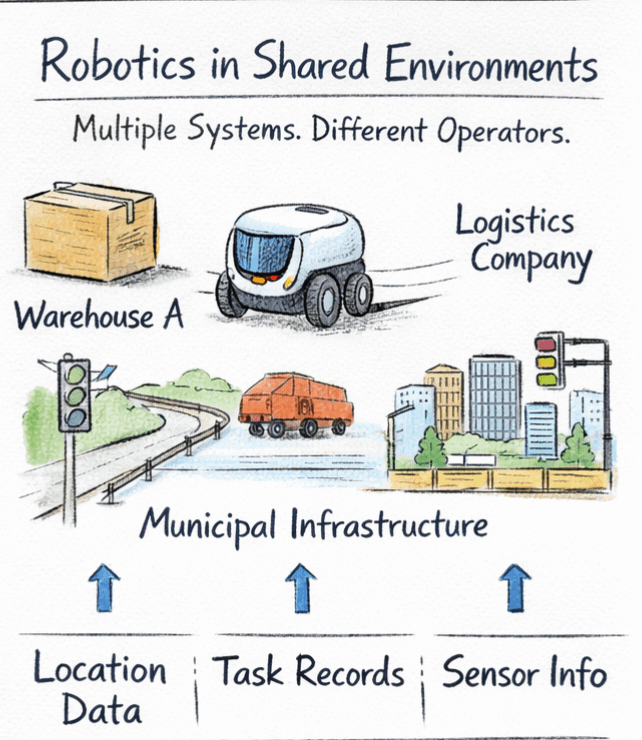

Picture a delivery machine picking up a shipment in one warehouse, transferring it into a logistics chain run by a different company, and eventually interacting with municipal infrastructure along the route. The robot is producing information the entire time. Location traces. Task confirmations. Sensor data describing obstacles or route changes.

But most of those records sit in private databases that belong to whoever operates the machine at that moment. Each participant keeps their own version of events.

At first that sounds manageable. Companies exchange reports all the time. Yet the friction appears the moment responsibility crosses a boundary. One system claims the robot completed a step. Another system depends on that claim before releasing payment or triggering the next action.

Suddenly the question becomes slightly awkward.

How does anyone verify what the machine actually did?

This is roughly where the thinking behind Fabric Protocol enters the conversation. Not as a robot builder, but more like infrastructure around robots once they start operating in shared environments.

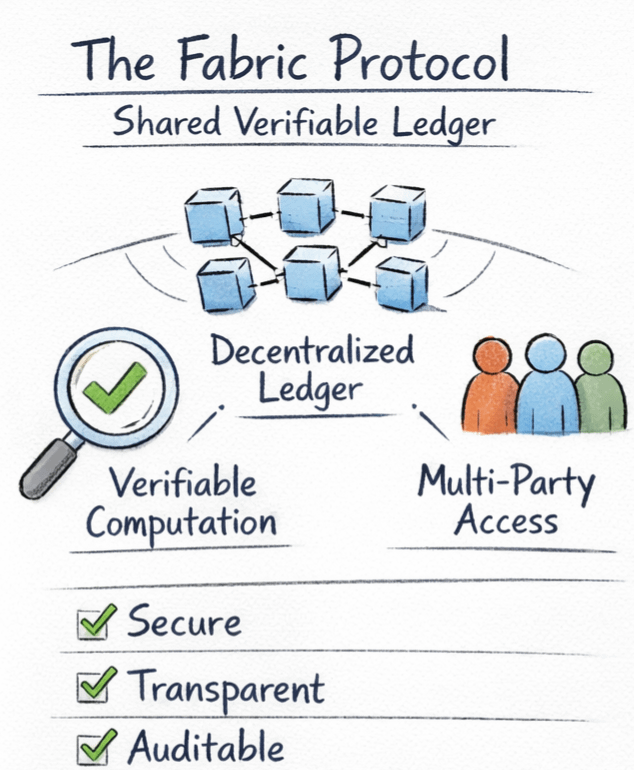

Fabric proposes using a public ledger to coordinate robotic actions. A ledger, in simple terms, is a shared record of events. In blockchain-based systems that record is distributed across many computers, and each entry is linked to the one before it through timestamps and cryptographic hashes, which makes past entries difficult to modify without everyone noticing.
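To make "difficult to modify without everyone noticing" a little more concrete, here is a minimal sketch of a hash-linked event log in Python. It is an illustration of the general idea only, not Fabric's actual data model: the field names (`robot_id`, `action`, `prev_hash`) and the helper functions are assumptions made up for this example, and a real network would add signatures, consensus, and replication across many machines.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def entry_hash(body: dict) -> str:
    """Hash an entry's fields deterministically (sorted keys, compact separators)."""
    encoded = json.dumps(body, sort_keys=True, separators=(",", ":")).encode("utf-8")
    return hashlib.sha256(encoded).hexdigest()


def append_event(ledger: list, robot_id: str, action: str, timestamp: int) -> dict:
    """Append an event whose hash commits to the previous entry's hash."""
    prev_hash = ledger[-1]["hash"] if ledger else GENESIS_HASH
    body = {
        "robot_id": robot_id,      # hypothetical field names, for illustration
        "action": action,
        "timestamp": timestamp,
        "prev_hash": prev_hash,
    }
    entry = dict(body, hash=entry_hash(body))
    ledger.append(entry)
    return entry


def verify_chain(ledger: list) -> bool:
    """Recompute every hash and check each link; an edit to a past entry fails."""
    prev_hash = GENESIS_HASH
    for entry in ledger:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if body["prev_hash"] != prev_hash or entry_hash(body) != entry["hash"]:
            return False
        prev_hash = entry["hash"]
    return True


ledger = []
append_event(ledger, "robot-7", "picked_up_container", 1700000000)
append_event(ledger, "robot-7", "handed_off_to_carrier", 1700000600)
print(verify_chain(ledger))  # True

# Quietly rewriting history breaks the stored hash of the edited entry.
ledger[0]["action"] = "never_picked_up"
print(verify_chain(ledger))  # False
```

Anyone holding a copy of the log can run the same check, which is the property that matters once the record is shared between organizations rather than kept in one operator's database.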

For robotics the implication is fairly practical. When a machine completes a task, the event can be written into a record that multiple parties can examine. Instead of trusting a single operator’s database, participants can reference a shared history of what happened.

Fabric often describes this through the idea of verifiable computing. The phrase sounds technical, though the meaning is fairly grounded. It refers to systems that can demonstrate that a computation or action actually occurred, not just report that it did.
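The gap between reporting and demonstrating can be sketched in a few lines of Python. The example below uses the simplest possible stand-in, checking a claim by re-running the computation, rather than the cryptographic proofs that verifiable computing schemes typically rely on so that a verifier does not have to repeat the work. The task (`count_over_threshold`), the claim format, and every name here are hypothetical and not taken from Fabric.

```python
import hashlib
import json


def digest(obj) -> str:
    """Deterministic SHA-256 digest of a JSON-serializable object."""
    encoded = json.dumps(obj, sort_keys=True, separators=(",", ":")).encode("utf-8")
    return hashlib.sha256(encoded).hexdigest()


def count_over_threshold(readings: list, threshold: int) -> int:
    """Hypothetical deterministic task: count sensor readings above a threshold."""
    return sum(1 for r in readings if r > threshold)


def make_claim(readings: list, threshold: int) -> dict:
    """What the robot's operator publishes: a commitment to the input plus the result."""
    return {
        "input_digest": digest({"readings": readings, "threshold": threshold}),
        "result": count_over_threshold(readings, threshold),
    }


def check_claim(claim: dict, readings: list, threshold: int) -> bool:
    """An independent party checks the input matches, then re-runs the task."""
    if digest({"readings": readings, "threshold": threshold}) != claim["input_digest"]:
        return False  # the data shown to the verifier is not what was committed to
    return count_over_threshold(readings, threshold) == claim["result"]


readings = [3, 12, 7, 15, 9]  # illustrative sensor values
claim = make_claim(readings, threshold=8)
print(check_claim(claim, readings, threshold=8))  # True

# A report that merely asserts a different result does not survive the check.
forged = dict(claim, result=0)
print(check_claim(forged, readings, threshold=8))  # False
```

Re-execution only works when the verifier can see the input and afford to repeat the computation. Proof-based approaches aim to remove both requirements, which is part of why they are considerably harder to build.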

That matters when machines interact with people or institutions that do not share the same internal systems.

The scale of the issue is no longer small, either. The International Federation of Robotics estimates that around 3.9 million industrial robots are currently active worldwide, mostly in manufacturing but increasingly in logistics and service environments. As these machines begin crossing organizational boundaries, the coordination layer around them becomes harder to ignore.

Fabric’s architecture tries to deal with that layer directly. Data from machines enters the network. Computation helps validate what occurred. Governance mechanisms allow different stakeholders (developers, operators, sometimes regulators) to interact with the same record of machine activity.

The ledger, in that sense, starts to resemble shared memory rather than financial infrastructure.

Imagine an inspection robot scanning sections of public infrastructure. Its reports might influence maintenance schedules or safety decisions. If those reports are tied to verifiable computation recorded on a ledger, the conversation changes slightly. Instead of relying entirely on whoever deployed the robot, others can review how the result was generated and when.

None of this removes complexity. Distributed verification slows things down compared with private systems. Coordination between many actors introduces its own failure modes. Even transparency creates design tensions about what information should remain visible and what should not.

Still, the underlying shift feels noticeable.

Robots are gradually moving into environments where many organizations and many people depend on the same machines. When that happens, intelligence alone doesn’t carry the whole burden anymore. The surrounding infrastructure for recording, checking, and understanding machine actions begins to matter just as much. #ROBO $ROBO
