I’ve been watching ROBO and the Fabric Foundation for a while now, and my view has become much more grounded over time. In the beginning, it was easy to focus on the scale of the idea. A network for general-purpose robots, built around verifiable computing, shared coordination, and agent-native infrastructure, naturally sounds ambitious. It carries the kind of language that makes people stop and imagine a much larger future. But the longer I have looked at it, the less I care about the size of the vision on paper. What matters more to me now is whether the structure underneath that vision is strong enough to survive contact with reality.

That is where Fabric becomes interesting. Not because it is talking about robots, but because it seems to understand that the real weakness in this space has never been intelligence alone. The weakness is in everything surrounding it. Machines may be getting more capable, but the systems we expect them to live inside still feel fragmented, improvised, and too dependent on private trust. So much still relies on human interpretation, isolated control, and decisions made inside closed systems. The machine may look autonomous, but the surrounding environment is often still held together by a small number of people manually carrying complexity that the infrastructure itself cannot yet absorb.

That gap matters more than most people admit. A robot can perform a task, respond to changing conditions, or generate value, but the moment that behavior needs to be governed across multiple actors, things get messy. Who verifies what happened? Who decides what the machine is allowed to do? Who updates rules when conditions change? Who is responsible when the machine behaves correctly by its own logic but still creates problems in a broader system? These are not glamorous questions, which is probably why they are often avoided. But they are exactly the questions that decide whether a project becomes real infrastructure or remains a compelling experiment.

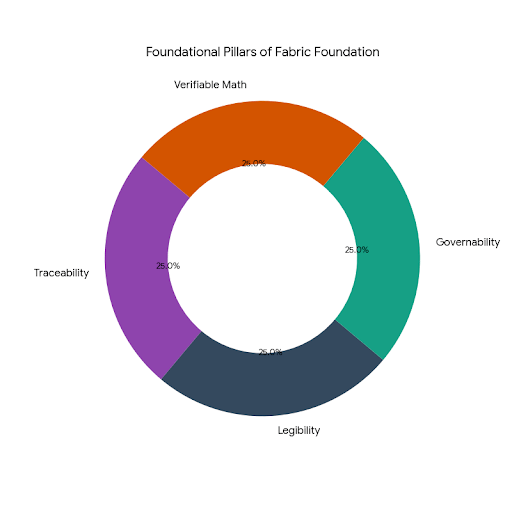

What I find serious about Fabric is that it appears to begin from that discomfort rather than from fantasy. It does not seem built on the assumption that better robots alone will solve the coordination problem. It seems built on the recognition that if machines are going to become meaningful participants in human systems, they need a framework that makes their activity traceable, legible, and governable beyond the circle of people who originally designed them. That is a far more difficult challenge than building impressive capability. It requires patience, institutional thinking, and a willingness to confront the dull parts of progress that most people would rather skip over.

The more I watch projects in this category, the more I think the deepest issue is not technological power but operational trust. A lot of systems today still work because a few people close to the project understand how to interpret them. They know where the rough edges are, which assumptions are safe, and how to step in when the system becomes unclear. But that is not durability. That is dependency disguised as functionality. A system becomes meaningful only when outsiders can rely on it without needing insider context to make sense of what is happening. That seems to be the real test Fabric is moving toward.

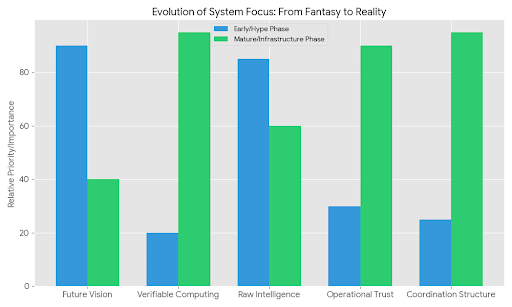

This is also why I think early users and later users reveal different truths. Early users are often generous. They believe in the direction, so they tolerate ambiguity. They will work around friction, forgive missing clarity, and help carry the weight of the system while it is still becoming itself. That phase can create a strong sense of momentum, but it can also create illusions. The real test comes later, when users arrive without emotional attachment. They are not there to admire the architecture. They are there to see whether the system reduces confusion, improves accountability, and makes coordination easier under pressure. A project only becomes infrastructure when it still makes sense to people who were never part of the original belief system.

That transition is difficult, and it usually demands more restraint than excitement. In a space like this, one of the clearest signs of maturity is not what gets launched quickly, but what gets delayed. What remains narrow. What stays controlled because the governance around it is not ready yet. That kind of restraint rarely gets celebrated, but it matters. Once machines begin operating inside shared environments, errors do not stay technical for very long. They become organizational, social, and sometimes even legal. A project that understands this has to think less about speed and more about survivability. It has to assume that edge cases are not rare exceptions but future pressure points.

I also think community trust around Fabric will be built in a quieter way than many people expect. Trust in systems like this does not come mainly from promises, incentives, or polished narratives. It comes from repeated observation. People watch how the project handles ambiguity. They notice whether hard trade-offs are explained honestly. They remember whether governance looks real when actual tension appears. In the long run, people trust what behaves consistently under strain. That matters more than any launch cycle or public story because infrastructure is not judged by how exciting it sounds. It is judged by whether people continue to rely on it when the excitement fades.

If ROBO has a meaningful long-term role here, I think it matters less as a symbol of attention and more as a signal of alignment. In a serious ecosystem, a token should reflect long-term commitment to the health of the system, not just short-term activity around it. Its value should come from helping connect governance, participation, and responsibility in a durable way. Otherwise it becomes noise layered on top of complexity. In a project trying to build real coordination infrastructure, that would be a distraction rather than a contribution.

So my view of Fabric Foundation now is simpler than it used to be. I do not see it mainly as a futuristic robotics story. I see it as a bet that the next stage of machine systems will depend less on raw capability and more on whether anyone can build the trust layer around that capability without cutting corners. That is a much less glamorous challenge, but probably a much more important one.

If the team keeps choosing structure over spectacle, clarity over speed, and long-term credibility over easy momentum, then Fabric could become something quietly important. Not because it promises the most dramatic future, but because it is trying to solve one of the most uncomfortable and necessary problems in this entire space: how to make increasingly capable machines fit into shared human systems without making those systems harder to trust.