There's a pattern I keep seeing.

Someone uses Binance AI Pro to run a backtest, likes the numbers, goes live. Two weeks later they're asking why the strategy stopped working. The AI always gives a reasonable explanation. But nobody asks the more important question: did the strategy ever actually work, or did it just look like it worked on data they already knew the answer to?

I did the same thing.

Binance AI Pro lets you backtest in plain language. "If I bought BTC every time it dropped 5% in 2024, what would my return be?" Results in seconds. No code, no setup, no configuration. This is the kind of speed that used to belong to professional trading desks, and it works exactly as advertised.

But here's where two things collide, in a way nobody states directly.

AI Pro returns accurate profit figures from historical data. Nothing wrong there. But when backtesting is fast enough to run many times, you naturally start testing variations. What if 4%? What if 6%? What if I add RSI below 35? Ten iterations. Twenty. Then you pick the one with the best numbers.

That selection process is the overfitting.

Not because AI Pro did something wrong. Not because the data is bad. But when you run enough variations on the same dataset and pick the best result, what you've chosen isn't a strategy with a real edge. It's the strategy that fits 2024 best. Those two things look identical in a backtest. They only separate when you go live.
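
Here's a minimal sketch of that selection effect. It has nothing to do with AI Pro's internals: every "variation" below is pure noise with zero edge, and the function name, the 1% daily volatility, and the 250-day window are all my own assumptions for illustration. The point is only that picking the best of several noise runs always gives you something that looks at least as good as the average run, while the same pick on fresh data has no reason to repeat.

```python
import random
import statistics


def simulate_selection(n_variations=20, n_days=250, seed=0):
    """Pick the best of several zero-edge strategies, then check it on fresh data.

    All parameters are illustrative assumptions, not real market figures.
    """
    rng = random.Random(seed)

    def total_return():
        # Sum of daily returns drawn with mean 0: a strategy with no real edge.
        return sum(rng.gauss(0.0, 0.01) for _ in range(n_days))

    # One result per variation on the data we "already know" ...
    in_sample = [total_return() for _ in range(n_variations)]
    # ... and one independent result per variation on unseen data.
    out_of_sample = [total_return() for _ in range(n_variations)]

    # The selection step: keep whichever variation looked best in the backtest.
    best = max(range(n_variations), key=lambda i: in_sample[i])

    return {
        "best_index": best,
        "best_in_sample": in_sample[best],
        "mean_in_sample": statistics.mean(in_sample),
        "best_out_of_sample": out_of_sample[best],
    }


if __name__ == "__main__":
    for name, value in simulate_selection().items():
        print(f"{name}: {value}")
```

Try different seeds and values of `n_variations`. The more variations you test, the better the winner tends to look in-sample, even though none of them has any edge at all.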

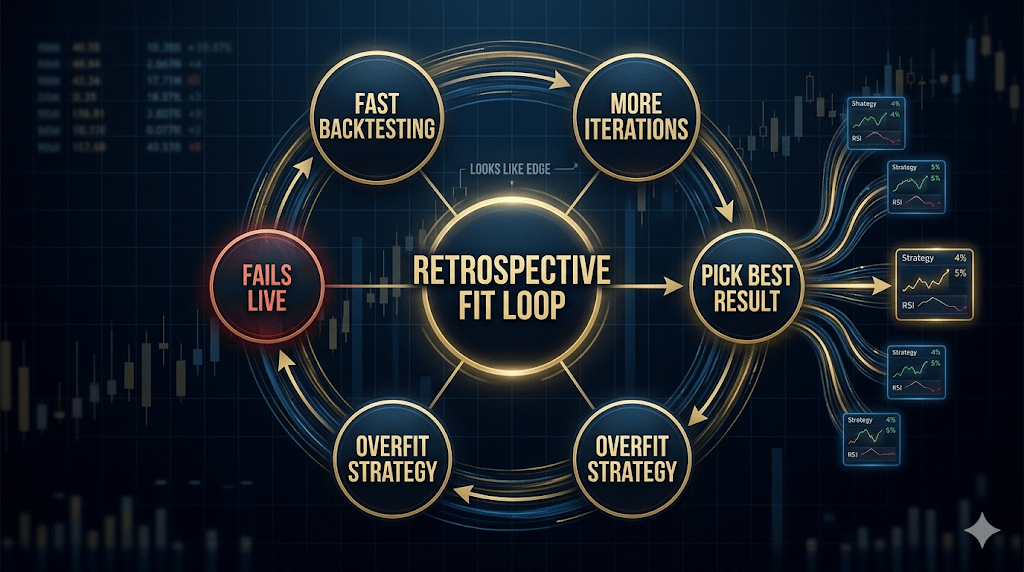

I call this the Retrospective Fit Loop: fast backtesting → more iterations → pick the best result → the selection itself creates the overfit → you can't tell real edge from retrospective fit until you're already in a live position.

The subtle part is that the loop is invisible while it's happening. After ten backtests, you don't feel like you're overfitting. You feel like you're doing serious research.

I ran straight into it with an $XAU setup. Seven variations in about twenty minutes, picked the one with the highest win rate over six months of data. Went live, and the strategy failed in the first week in ways the historical data gave no hint of. When I asked the AI to explain, the answer made sense. But that was a reconstruction after the loss, not the reasoning I used to pick the strategy in the first place.

The difference from before AI Pro: manual backtesting was slow enough that you'd only test a few strategies before stopping. That friction was an accidental filter. When AI Pro removed the friction, it removed the filter with it.

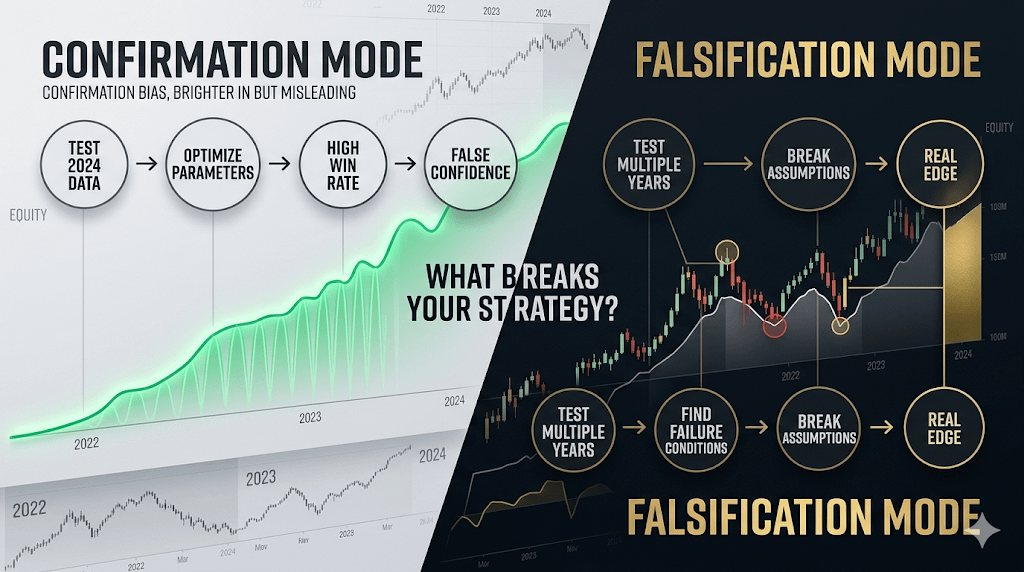

Overfitting isn't a calculation error. It happens when a strategy gets selected because it looks good on known data, not because it has logic that holds in unknown conditions. I changed how I use backtests after that: instead of asking "how much would this have made in 2024?", I ask "under what conditions does this fail?" and run it across 2022 and 2023 before trusting any number. A falsification question is harder to answer than a confirmation question. That's exactly why it matters more.
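
If you copy the per-year results out by hand, the falsification check can be sketched in a few lines. This is only one way to do it: the function names and the "must not lose money in any year it wasn't tuned on" rule are my own assumptions, and the returns in the usage note are made-up placeholders, not real backtest output.

```python
def failing_periods(returns_by_period, min_return=0.0):
    """Return the periods where the strategy's return fell below min_return."""
    return [
        period
        for period, ret in returns_by_period.items()
        if ret < min_return
    ]


def passes_falsification(returns_by_period, tuned_on, min_return=0.0):
    """Check the strategy only on periods it was NOT tuned on.

    `tuned_on` is the period used to pick the strategy (e.g. "2024").
    That period is excluded, because it is the one the strategy
    was selected to look good on.
    """
    held_out = {
        period: ret
        for period, ret in returns_by_period.items()
        if period != tuned_on
    }
    if not held_out:
        raise ValueError("need at least one period the strategy was not tuned on")
    return not failing_periods(held_out, min_return)
```

With placeholder numbers like `{"2022": -0.12, "2023": 0.03, "2024": 0.41}` and `tuned_on="2024"`, `failing_periods` flags 2022 and `passes_falsification` returns `False`: the 2024 figure is the best of the three, and it is the one that tells you the least.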

Trading always carries risk. AI-generated insights are not financial advice. Past performance does not reflect future results. Please check product availability in your region.

@Binance Vietnam #BinanceAIPro