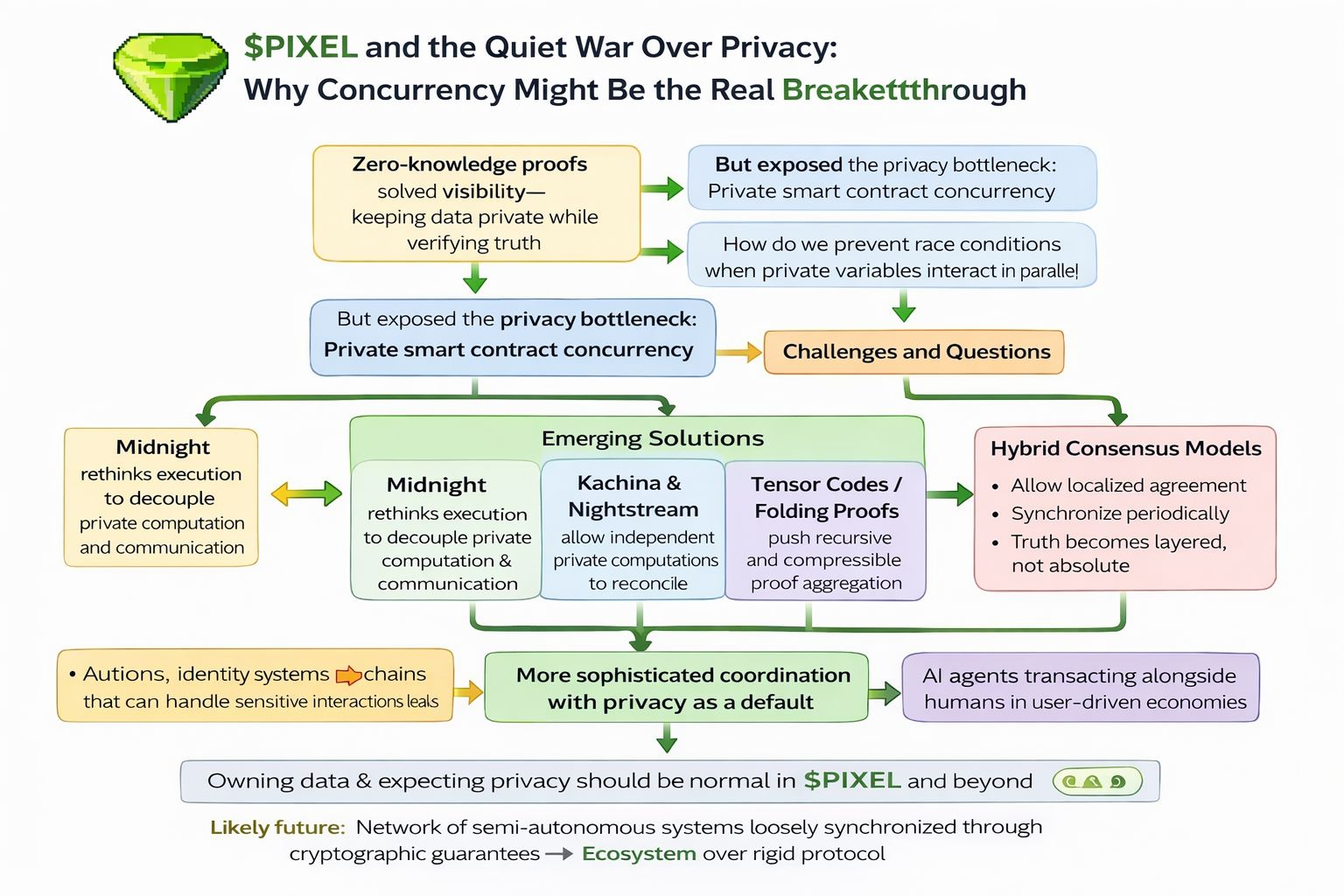

I keep circling back to a strange tension in blockchain design: we’ve gotten very good at proving things without revealing them, but we’re still terrible at letting those hidden things interact at scale. It’s almost ironic. Zero-knowledge proofs solved the visibility problem (how to keep data private while still verifying that it is true), but they quietly exposed something deeper. Privacy is not just about hiding state. It’s about coordinating hidden state across many actors, at the same time, without breaking everything.

That’s where the real bottleneck lives: private smart contract concurrency.

In public systems, concurrency is messy but manageable. Everyone sees everything, so conflicts can be resolved deterministically. But once you introduce privacy, coordination becomes almost philosophical. If two contracts depend on hidden variables, how do they safely execute in parallel? How do you prevent race conditions when no one can see the full picture? The system starts to feel less like a ledger and more like a room full of people whispering secrets while trying to agree on a shared outcome.
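Some public chains parallelize execution by having each transaction declare up front which pieces of state it touches, then running non-overlapping transactions side by side. Below is a minimal sketch of that idea carried over to hidden state. It assumes (hypothetically) that each private contract exposes only opaque slot labels rather than values, so the scheduler never sees a balance, only whether two access sets intersect. It is an illustration, not how any particular private chain schedules work, and it has a real cost: even opaque access sets leak which transactions touch the same state.

```python
import hashlib
from dataclasses import dataclass


def slot_id(name: str) -> str:
    # Hypothetical opaque label for a private state slot. Hashing a guessable
    # name does not really hide it; this only stands in for "some opaque id".
    return hashlib.sha256(name.encode()).hexdigest()[:16]


@dataclass(frozen=True)
class PrivateTx:
    tx_id: str
    reads: frozenset[str]   # opaque slot ids the contract reads
    writes: frozenset[str]  # opaque slot ids the contract writes


def conflicts(a: PrivateTx, b: PrivateTx) -> bool:
    # Any write that overlaps the other's reads or writes makes order matter.
    return bool(a.writes & b.writes or a.writes & b.reads or a.reads & b.writes)


def schedule(txs: list[PrivateTx]) -> list[list[str]]:
    """Pack transactions into sequential batches; members of a batch can run in parallel.

    A transaction is placed after the last batch containing anything it conflicts
    with, so conflicting transactions keep their submission order.
    """
    batches: list[list[PrivateTx]] = []
    for tx in txs:
        last_conflict = -1
        for i, batch in enumerate(batches):
            if any(conflicts(tx, other) for other in batch):
                last_conflict = i
        target = last_conflict + 1
        if target == len(batches):
            batches.append([tx])
        else:
            batches[target].append(tx)
    return [[t.tx_id for t in batch] for batch in batches]


alice, bob, pool = slot_id("alice_balance"), slot_id("bob_balance"), slot_id("pool_reserve")
t1 = PrivateTx("t1", frozenset({alice}), frozenset({alice, pool}))
t2 = PrivateTx("t2", frozenset({bob}), frozenset({bob}))
t3 = PrivateTx("t3", frozenset({pool}), frozenset({pool}))
print(schedule([t1, t2, t3]))  # [['t1', 't2'], ['t3']]
```

Here `t1` and `t2` touch disjoint slots and share a batch, while `t3` reads the pool slot that `t1` writes, so it is pushed to the next batch and still runs after `t1`.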

This is the layer most people underestimate, even in projects like PIXEL, where user ownership and digital economies are central. It’s easy to talk about empowering players, but if their economic interactions can’t remain private and composable, the system eventually leaks either value or trust. $PIXEL hints at this tension, even if indirectly, because player-driven economies demand both coordination and discretion.

Emerging architectures like Midnight are interesting not because they “add privacy,” but because they rethink execution itself. Concepts like Kachina and Nightstream suggest a model where computation and communication are decoupled in a more fluid way. Instead of forcing everything into a single sequential chain, they allow fragments of private computation to evolve independently and then reconcile. It’s closer to distributed systems theory than traditional blockchain thinking.
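The "evolve independently, then reconcile" pattern can be sketched with a nullifier set. This is a technique borrowed from Zcash-style note systems, not from Kachina or Nightstream, whose actual mechanics this does not attempt to reproduce. Each private fragment publishes only a delta: nullifiers for the notes it consumed and commitments for the notes it created. Reconciliation accepts a delta unless one of its nullifiers has already been seen, which catches double spends without revealing which note was spent. All names are hypothetical, and a real system would also require a zero-knowledge proof that each delta is valid, which is omitted here.

```python
import hashlib
from dataclasses import dataclass, field


def h(*parts: bytes) -> str:
    return hashlib.sha256(b"|".join(parts)).hexdigest()


def nullifier(owner_secret: bytes, note_id: bytes) -> str:
    # Deterministic per note, so a second spend yields the same value;
    # without the owner's secret it cannot be linked back to note_id.
    return h(b"nullifier", owner_secret, note_id)


@dataclass
class Delta:
    """Public output of one private fragment: notes consumed and notes created."""
    nullifiers: set[str] = field(default_factory=set)
    commitments: set[str] = field(default_factory=set)


@dataclass
class Ledger:
    spent: set[str] = field(default_factory=set)  # every nullifier seen so far
    notes: set[str] = field(default_factory=set)  # every note commitment created so far

    def reconcile(self, deltas: list[Delta]) -> list[bool]:
        """Apply deltas in order; reject any that reuses an already-seen nullifier."""
        accepted = []
        for d in deltas:
            if d.nullifiers & self.spent:
                accepted.append(False)  # double spend: some note was already consumed
                continue
            self.spent |= d.nullifiers
            self.notes |= d.commitments
            accepted.append(True)
        return accepted


# Three fragments that ran independently; the third re-spends alice's note-1.
frag1 = Delta({nullifier(b"alice-secret", b"note-1")}, {h(b"note-3", b"salt-x")})
frag2 = Delta({nullifier(b"bob-secret", b"note-2")}, {h(b"note-4", b"salt-y")})
frag3 = Delta({nullifier(b"alice-secret", b"note-1")}, {h(b"note-5", b"salt-z")})

ledger = Ledger()
print(ledger.reconcile([frag1, frag2, frag3]))  # [True, True, False]
```

The two honest fragments merge without either one seeing the other's private state; only the overlapping nullifier is needed to reject the third.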

Tensor Codes and folding proofs push this even further. Rather than being treated as isolated artifacts, proofs become compressible, aggregatable streams. This matters because concurrency at scale isn’t just about parallel execution; it’s about compressing the verification of that execution. If every private interaction requires heavy cryptographic overhead, the system collapses under its own weight. Folding changes that equation: instead of verifying each step separately, it repeatedly merges ("folds") new instances into a single running one that is checked once at the end. The result is cheap recursion, almost self-referential, which starts to resemble how neural networks compress information.
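To make folding less abstract, here is a toy of the folding step used in Nova-style schemes, over relaxed R1CS, a constraint format the paragraph above does not name and that is assumed here for illustration. Two satisfying instances (z1, u1, E1) and (z2, u2, E2) of (Az) ∘ (Bz) = u·(Cz) + E are combined with a random challenge r into one instance that is still satisfying; a cross term T absorbs the mixed products. There are no commitments, so this is neither zero-knowledge nor succinct; it only shows the algebra. The circuit (y = x³) and the prime modulus are arbitrary choices, and nothing here should be read as how Midnight or Nightstream implements folding.

```python
import random

P = 2**61 - 1  # prime field modulus (a Mersenne prime; arbitrary choice)

# R1CS for y = x^3 with z = (x, t, y):  x*x = t  and  t*x = y
A = [[1, 0, 0], [0, 1, 0]]
B = [[1, 0, 0], [1, 0, 0]]
C = [[0, 1, 0], [0, 0, 1]]


def matvec(M, z):
    return [sum(m * v for m, v in zip(row, z)) % P for row in M]


def hadamard(a, b):
    return [x * y % P for x, y in zip(a, b)]


def satisfies(z, u, E):
    """Relaxed R1CS: (Az) o (Bz) == u * (Cz) + E, entrywise mod P."""
    lhs = hadamard(matvec(A, z), matvec(B, z))
    rhs = [(u * c + e) % P for c, e in zip(matvec(C, z), E)]
    return lhs == rhs


def witness(x):
    """An ordinary R1CS witness, embedded as a relaxed instance with u = 1, E = 0."""
    t = x * x % P
    return [x % P, t, t * x % P], 1, [0, 0]


def fold(inst1, inst2, r):
    """Fold two relaxed instances into one using the random challenge r."""
    (z1, u1, E1), (z2, u2, E2) = inst1, inst2
    Az1, Bz1, Cz1 = matvec(A, z1), matvec(B, z1), matvec(C, z1)
    Az2, Bz2, Cz2 = matvec(A, z2), matvec(B, z2), matvec(C, z2)
    # Cross term: the mixed products that appear when expanding A(z1 + r z2) o B(z1 + r z2).
    T = [(a1 * b2 + a2 * b1 - u1 * c2 - u2 * c1) % P
         for a1, b1, c1, a2, b2, c2 in zip(Az1, Bz1, Cz1, Az2, Bz2, Cz2)]
    z = [(x1 + r * x2) % P for x1, x2 in zip(z1, z2)]
    u = (u1 + r * u2) % P
    E = [(e1 + r * t + r * r * e2) % P for e1, t, e2 in zip(E1, T, E2)]
    return z, u, E


acc = witness(3)
for x in (5, 7, 11):
    r = random.randrange(1, P)  # verifier's challenge (interactive or via Fiat-Shamir)
    acc = fold(acc, witness(x), r)
    assert satisfies(*acc)  # checked each step only for the demo; normally once at the end

bad = ([2, 4, 9], 1, [0, 0])  # claims 2^3 = 9
print(satisfies(*acc), satisfies(*fold(acc, bad, random.randrange(1, P))))  # True False
```

The accumulator stays one instance no matter how many steps are absorbed, and folding in the wrong witness leaves it unsatisfied, so the single final check still catches the error.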

And that’s where things get unexpectedly relevant to AI.

Imagine autonomous agents negotiating contracts, bidding in auctions, or managing supply chains. They need privacy not just for data, but for strategy. An AI participating in a financial agreement cannot expose its internal model or intent without losing its edge. Yet it must still coordinate with others. This is the same concurrency problem, just amplified. Systems like PIXEL, which revolve around dynamic user interaction, could evolve into environments where AI agents transact alongside humans if the underlying privacy infrastructure can handle that complexity.

Historically, privacy and usability have been at odds because privacy introduces friction. Every hidden variable is a coordination problem waiting to happen. But solving concurrency flips that narrative. If private interactions can compose as easily as public ones, privacy stops being a constraint and becomes a default.

Hybrid consensus models are starting to reflect this shift. Instead of enforcing a single global truth at all times, they allow localized agreement with periodic synchronization. It’s a subtle but important change. Truth becomes layered, not absolute. And in a system like PIXEL, where economies are emergent rather than predefined, that flexibility could be the difference between stagnation and genuine complexity.
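One way to picture localized agreement with periodic synchronization: each local domain agrees on its own private state and contributes only a commitment to it, and a periodic checkpoint publishes a single Merkle root over those commitments. This is a minimal sketch, assuming a plain SHA-256 Merkle tree and hypothetical domain names. It shows only the synchronization artifact, not how each domain reaches local agreement or how conflicting checkpoints would be resolved.

```python
import hashlib


def H(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def leaf(domain: str, state_commitment: bytes) -> bytes:
    # Domain-separated leaf hash (0x00 prefix) so leaves can't be confused with inner nodes.
    return H(b"\x00" + domain.encode() + b":" + state_commitment)


def node(left: bytes, right: bytes) -> bytes:
    return H(b"\x01" + left + right)


def build_levels(leaves: list[bytes]) -> list[list[bytes]]:
    """All tree levels, leaves first; an odd last node is paired with itself."""
    levels = [leaves]
    while len(levels[-1]) > 1:
        cur = levels[-1]
        padded = cur + [cur[-1]] if len(cur) % 2 else cur
        levels.append([node(padded[i], padded[i + 1]) for i in range(0, len(padded), 2)])
    return levels


def prove(levels: list[list[bytes]], index: int) -> list[tuple[bytes, bool]]:
    """Sibling path for leaf `index`; the bool says whether the sibling sits on the left."""
    path = []
    for level in levels[:-1]:
        sib = index ^ 1
        sibling = level[sib] if sib < len(level) else level[index]
        path.append((sibling, index % 2 == 1))
        index //= 2
    return path


def verify(root: bytes, leaf_hash: bytes, path: list[tuple[bytes, bool]]) -> bool:
    acc = leaf_hash
    for sibling, sibling_on_left in path:
        acc = node(sibling, acc) if sibling_on_left else node(acc, sibling)
    return acc == root


# Hypothetical local domains; each publishes only a salted commitment to its private state.
domains = {
    "market": H(b"market-state-v42|salt-1"),
    "crafting": H(b"crafting-state-v17|salt-2"),
    "guild-bank": H(b"guild-bank-state-v9|salt-3"),
}
names = sorted(domains)
leaves = [leaf(n, domains[n]) for n in names]
levels = build_levels(leaves)
root = levels[-1][0]  # the only value the periodic checkpoint needs to publish

path = prove(levels, names.index("crafting"))
print(verify(root, leaf("crafting", domains["crafting"]), path))  # True
print(verify(root, leaf("crafting", H(b"forged-state")), path))   # False
```

Anyone holding the root can later check that a domain's claimed commitment was part of that checkpoint, seeing only sibling hashes rather than the other domains' state.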

I think this is the part that doesn’t get enough attention: privacy isn’t the end goal. It’s the precondition for more sophisticated coordination. Auctions that don’t leak bids. Identity systems that prove attributes without exposing individuals. Supply chains that verify authenticity without revealing trade secrets. These are not edge cases; they’re foundational.
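The auction case is the easiest to sketch. Below is a classic commit-reveal sealed-bid auction, a simpler stand-in for the zero-knowledge auctions the paragraph has in mind: bids stay hidden while bidding is open, and a hash commitment stops anyone from changing a bid afterward. Unlike a ZK design, it does reveal every bid once the reveal phase starts, and it does not handle bidders who refuse to reveal. Class and method names are hypothetical.

```python
import hashlib
import secrets
from dataclasses import dataclass, field
from typing import Optional


def commit(bid: int, salt: bytes) -> str:
    # Hash commitment: the random salt hides the bid; opening it to a different
    # bid would require finding a SHA-256 collision.
    return hashlib.sha256(salt + bid.to_bytes(16, "big")).hexdigest()


@dataclass
class SealedBidAuction:
    commitments: dict[str, str] = field(default_factory=dict)
    revealed: dict[str, int] = field(default_factory=dict)
    bidding_open: bool = True

    def submit(self, bidder: str, commitment: str) -> None:
        if not self.bidding_open:
            raise RuntimeError("bidding phase is closed")
        self.commitments[bidder] = commitment

    def close_bidding(self) -> None:
        self.bidding_open = False

    def reveal(self, bidder: str, bid: int, salt: bytes) -> bool:
        if self.bidding_open:
            raise RuntimeError("reveal phase has not started")
        if self.commitments.get(bidder) != commit(bid, salt):
            return False  # opening doesn't match the sealed bid
        self.revealed[bidder] = bid
        return True

    def winner(self) -> Optional[tuple[str, int]]:
        if not self.revealed:
            return None
        # max() returns the first maximal entry, so ties go to whoever revealed first.
        best = max(self.revealed, key=self.revealed.get)
        return best, self.revealed[best]


bids = {"alice": 120, "bob": 150, "carol": 90}
salts = {name: secrets.token_bytes(16) for name in bids}

auction = SealedBidAuction()
for name, bid in bids.items():
    auction.submit(name, commit(bid, salts[name]))  # only hashes are visible while bidding
auction.close_bidding()

print(auction.reveal("bob", 999, salts["bob"]))  # False: a sealed bid can't be changed
for name, bid in bids.items():
    auction.reveal(name, bid, salts[name])
print(auction.winner())  # ('bob', 150)
```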

The future probably doesn’t look like one monolithic private chain. It looks like a network of semi-autonomous systems, each handling its own private state, loosely synchronized through cryptographic guarantees. Something closer to an ecosystem than a protocol.

And maybe that’s where PIXEL quietly fits in again: not just as a token or a game economy, but as a small glimpse into what happens when users expect both control and privacy by default. If that expectation spreads, the pressure on infrastructure will only increase.

Because once people get used to owning their data, they won’t tolerate systems that can’t handle it at scale.