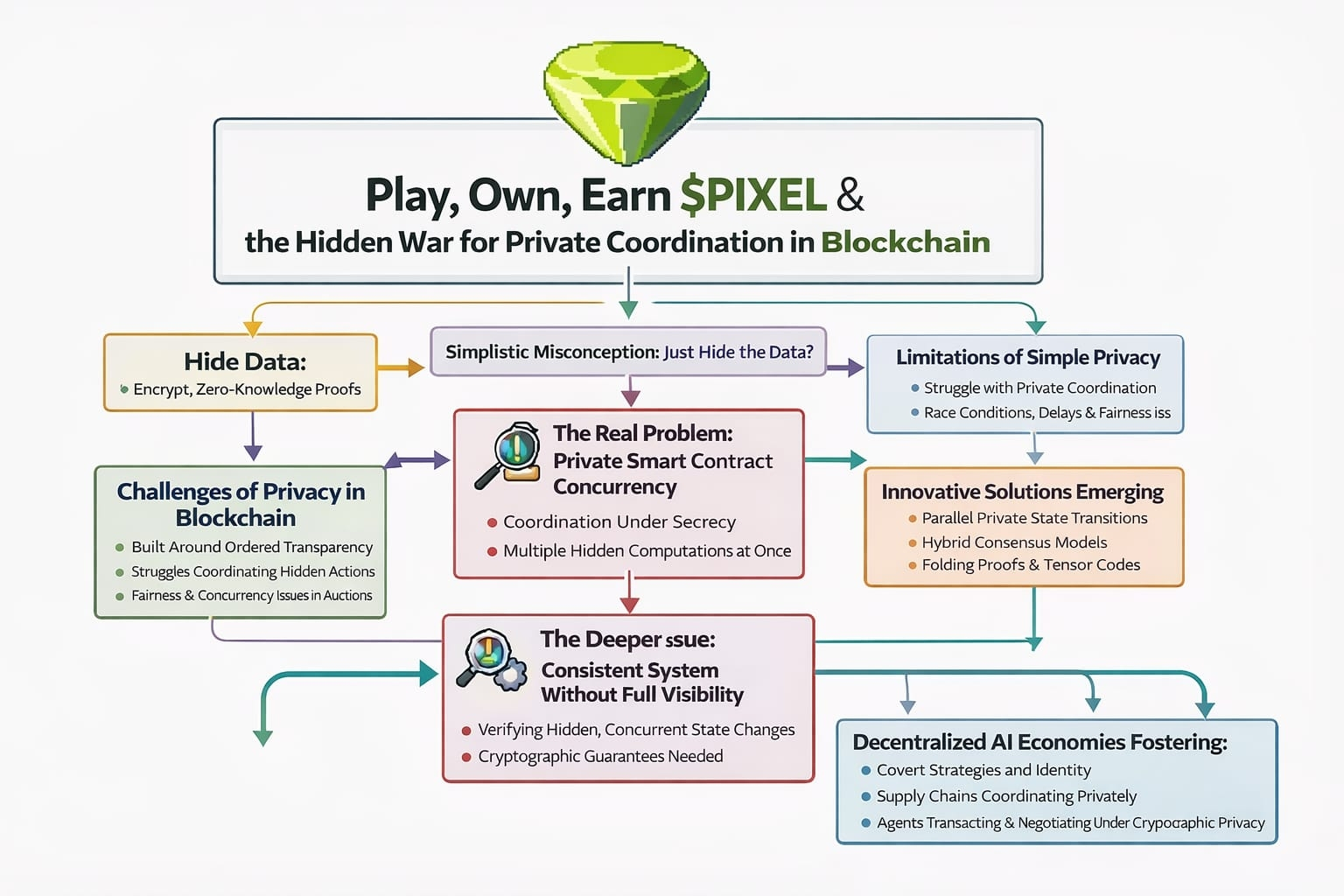

There’s this subtle misconception that keeps showing up whenever people talk about blockchain privacy. It sounds reasonable on the surface: just hide the data and you’re done. Encrypt it, wrap it in zero-knowledge proofs, shield the transactions, and the problem disappears. But if you sit with that idea long enough, it starts to feel incomplete. Privacy isn’t just about concealment. It’s about what happens after things are concealed, especially when multiple actors are moving at once.

That’s where the tension really begins.

Because the hardest problem isn’t secrecy. It’s coordination under secrecy. More specifically, it’s private smart contract concurrency: the ability for many independent, hidden computations to unfold at the same time without stepping on each other, leaking signals, or breaking the logic that holds everything together.

Blockchains, in their original form, were never meant to deal with this. They’re built around ordered transparency. Everything is sequenced, everything is visible, everything is agreed upon in a shared timeline. That’s what gives them strength. But it’s also what makes privacy feel like an awkward add-on. You’re trying to introduce opacity into a system that depends on clarity to function.

So what you get is a compromise. Systems that can hide individual actions, but struggle when those hidden actions need to interact. They can keep secrets, but they don’t coordinate secrets very well.

You can see this more clearly with something simple, like an auction. In a fully transparent system, the process is straightforward. Everyone sees every bid, the ordering is obvious, and the outcome is easy to verify. Now switch that to a private setup where bids are hidden using zero-knowledge proofs. At first glance, it feels like a clean upgrade. But then reality creeps in. What happens when hundreds of bids arrive at nearly the same moment?

If you process them one by one, you introduce delay and subtle unfairness. If you try to process them in parallel, you run into race conditions—situations where the final result depends on invisible timing rather than clear rules. And because everything is hidden, detecting and resolving those conflicts becomes much harder.
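One way to make the auction example concrete is a commit-reveal scheme, sketched below. This is a simplification: it uses plain hash commitments rather than zero-knowledge proofs, and the names `commit` and `settle` are hypothetical, not taken from any particular system. The point it illustrates is that the winner can be decided by a rule that ignores arrival order, so parallel submission doesn't turn into a race.

```python
import hashlib
import secrets
from typing import Dict, Optional, Tuple


def commit(bid: int, salt: bytes) -> str:
    """Hash commitment to a bid; the amount stays hidden until the salt is revealed."""
    return hashlib.sha256(salt + bid.to_bytes(8, "big")).hexdigest()


def settle(
    commitments: Dict[str, str],
    reveals: Dict[str, Tuple[int, bytes]],
) -> Optional[str]:
    """Return the winning bidder using only reveals that match their commitment.

    The rule depends on bid values and commitment hashes, never on the order
    in which bids or reveals arrived.
    """
    valid = [
        (bid, commitments[bidder], bidder)
        for bidder, (bid, salt) in reveals.items()
        if commitments.get(bidder) == commit(bid, salt)
    ]
    if not valid:
        return None
    # Highest bid wins; ties are broken by commitment hash, not by timing.
    return max(valid)[2]


# Usage: each bidder picks a random salt and publishes only the commitment.
salt = secrets.token_bytes(16)
published = {"alice": commit(50, salt)}
winner = settle(published, {"alice": (50, salt)})
```

The trade-off is that bids become public at reveal time. Keeping them hidden even after settlement is where zero-knowledge proofs would have to take over from the simple hash check used here.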

Now stretch that scenario out. Think about financial agreements executing in parallel, trading strategies reacting in real time, or AI agents negotiating access to resources without exposing their intent. The moment you have multiple private actors operating simultaneously, the system starts to strain in ways that aren’t immediately visible.

This is why newer approaches, like what’s emerging around Midnight’s architecture, feel less like upgrades and more like attempts to rethink the problem entirely. Instead of forcing private computation into a rigid, sequential pipeline, they start asking a different question: what if state itself didn’t have to be handled in a single, global order?

Ideas like Kachina suggest that private state transitions could exist in a more flexible form, almost like parallel threads that only reconcile when they actually need to. Nightstream pushes the idea further, treating data not as isolated events but as ongoing flows, something closer to a continuous process than a series of transactions. Then you have Tensor Codes and folding proofs, which try to make the heavy cryptography more manageable by compressing and combining proofs instead of handling each one in isolation.

Individually, these ideas are interesting. Together, they hint at something bigger: a shift away from thinking of blockchains as strictly linear machines.

But even with all of that, there’s still a deeper issue sitting underneath.

How do you keep a system consistent when the information needed to verify that consistency isn’t fully visible?

In traditional distributed systems, conflicts are resolved because everyone can see the same thing. If two processes try to update the same resource, the system detects the overlap and handles it. In a private system, that shared visibility disappears. You’re left relying on indirect signals (cryptographic commitments, dependency structures, partial proofs) to make sure things don’t contradict each other.
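To give a feel for what an "indirect signal" can look like, here is a minimal sketch of nullifier-style conflict detection, a pattern used in shielded-transaction designs. It is an illustration under assumptions, not a description of how Midnight or any specific chain works: `nullifier` and `Ledger` are hypothetical names, and a real system would also require a proof that each tag was derived correctly.

```python
import hashlib
from typing import Set


def nullifier(owner_secret: bytes, state_id: bytes) -> str:
    """Deterministic tag for 'this hidden state was consumed'.

    Observers cannot link the tag back to the state without the secret,
    but two attempts to consume the same state always produce the same tag.
    """
    prefix = len(owner_secret).to_bytes(4, "big")  # avoid ambiguous concatenation
    return hashlib.sha256(prefix + owner_secret + state_id).hexdigest()


class Ledger:
    """Tracks only opaque tags, never the underlying private state."""

    def __init__(self) -> None:
        self.spent: Set[str] = set()

    def apply(self, tag: str) -> bool:
        """Accept a transition unless its tag was already seen (a conflict)."""
        if tag in self.spent:
            return False
        self.spent.add(tag)
        return True


ledger = Ledger()
tag = nullifier(b"alice-secret", b"note-1")
first = ledger.apply(tag)   # accepted
second = ledger.apply(tag)  # rejected: same hidden state consumed twice
```

The ledger detects the overlap without learning what the resource was or who owned it. What it cannot do on its own is resolve more complex dependencies between hidden states, which is the harder part of the problem.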

Zero-knowledge proofs help, but they’re not a silver bullet. They’re expensive to compute, tricky to compose, and often asynchronous in ways that don’t align neatly with real-time interaction. Proving that a single action is valid is already non-trivial. Proving that a web of concurrent, interdependent actions is valid without revealing any of them is an entirely different challenge.

That’s where folding proofs start to feel like more than just an optimization. By allowing multiple proofs to be merged into one, they change how we think about verification itself. Instead of checking everything individually, you begin to treat computation as something that can be layered and compressed, almost like folding complexity into a smaller, more manageable shape.
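A toy version of the folding idea can be shown with linear constraints only. The sketch below assumes a claim of the form `A·w = b` over a prime field and folds two such claims into one using a challenge `r`. Real folding schemes handle non-linear constraints, add commitments, and derive `r` from a verifier or a hash of the transcript; none of that is modeled here, and all names are hypothetical.

```python
from typing import List, Tuple

P = 2**61 - 1  # a prime modulus; all arithmetic is done mod P

Vector = List[int]
Matrix = List[List[int]]


def mat_vec(A: Matrix, w: Vector) -> Vector:
    """Matrix-vector product mod P."""
    return [sum(a * x for a, x in zip(row, w)) % P for row in A]


def satisfies(A: Matrix, w: Vector, b: Vector) -> bool:
    """Check the linear claim A·w = b (mod P)."""
    return mat_vec(A, w) == [x % P for x in b]


def fold(w1: Vector, b1: Vector, w2: Vector, b2: Vector, r: int) -> Tuple[Vector, Vector]:
    """Combine two claims into one: w = w1 + r*w2, b = b1 + r*b2 (mod P)."""
    w = [(x + r * y) % P for x, y in zip(w1, w2)]
    b = [(x + r * y) % P for x, y in zip(b1, b2)]
    return w, b


A = [[1, 2], [3, 4]]
w1, b1 = [1, 1], [3, 7]   # A·w1 = b1 holds
w2, b2 = [2, 0], [2, 6]   # A·w2 = b2 holds

w, b = fold(w1, b1, w2, b2, r=5)
folded_ok = satisfies(A, w, b)  # one check now covers both claims
```

Because the constraint is linear, the folded claim holds whenever both originals do. If either original is false, the folded claim fails for all but at most one value of `r`, which is why the challenge has to be unpredictable in practice rather than fixed as it is in this example.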

But even that doesn’t fully solve coordination.

What’s still missing is a reliable way for private states to interact without collapsing into chaos. Something that lets independent actors move freely while still ensuring that their actions can be stitched back together into a coherent whole. Hybrid consensus models are beginning to explore this space, shifting away from the idea that every node needs to verify everything. Instead, smaller groups handle localized interactions, with cryptographic guarantees tying the system together at a higher level.

It starts to feel less like a rigid machine and more like an ecosystem. Not every interaction is visible, not every decision is global, but the structure holds.

Now, if you bring that lens into a world like Pixels, things get interesting quickly.

Pixels already operates on this idea that value isn’t just about what you hold, but how you behave over time. Your actions accumulate. Your presence matters. It’s less about static ownership and more about lived participation. When you introduce private coordination into that kind of environment, the dynamic shifts again.

In Pixels, actions could become layered instead of exposed. Players might coordinate quietly, form agreements that aren’t immediately visible, or deploy AI agents that act on their behalf without revealing strategy. The economy starts to take on a different texture: less predictable, more strategic, closer to how real-world systems actually behave.

But again, none of that works if concurrency isn’t handled properly.

If private actions can’t run in parallel without interfering with each other, the system either slows down or starts leaking information in subtle ways. Timing patterns, transaction failures, ordering quirks: these all become side channels that reveal more than they should. Privacy stops being a guarantee and becomes something fragile.

And then there’s the layer of AI.

Autonomous agents don’t just follow scripts. They adapt, react, and interact continuously. In a transparent system, you can observe and constrain them. In a private one, they become opaque participants operating at scale. Imagine thousands of these agents inside Pixels, transacting, negotiating, and reallocating resources, all at once, all under layers of cryptographic privacy.

Without a solution to private concurrency, that vision doesn’t hold. It either collapses under its own complexity or drifts toward centralization as a fallback for coordination.

But if it does hold, the implications are hard to ignore.

You get systems where strategies aren’t instantly exposed and exploited. Where identity can remain private without becoming unusable. Where supply chains can coordinate without revealing sensitive data. Where financial actors can move without broadcasting their entire position.

And beyond that, you start to see the outline of something bigger: decentralized AI economies where agents operate independently, transact privately, and still manage to coordinate in meaningful ways.

That’s what makes architectures like Midnight feel important. Not because they promise perfect privacy, but because they’re trying to make privacy work in motion. Not static, not isolated, but active and interconnected.

It’s easy to underestimate how difficult that is. Most systems behave well under simple conditions. It’s only when things scale, when interactions overlap and timing becomes messy, that the cracks appear. And with privacy, those cracks are harder to see until they matter.

But if this problem gets solved, even partially, it changes the role of privacy entirely.

It stops being a constraint and starts becoming infrastructure. Something systems like Pixels can build on, not work around. A layer that allows for more nuanced interaction, where not everything is visible, but everything still connects.

And maybe that’s the real shift hiding underneath all of this.

Not a move from transparency to privacy, but from simplicity to complexity that actually works. From systems where everything is exposed to systems where things can remain hidden without breaking the flow.

That’s not just a technical challenge. It’s a structural one.

And it’s far more difficult than just hiding the data.

But it’s also where things start to get genuinely interesting.