I didn’t start from rewards this time. I started from something more uncomfortable, inconsistency that didn’t match my inputs. Same time spent, similar actions, but the session didn’t scale the way I expected. Not lower in a clear sense, just less responsive, like part of what I was doing didn’t fully register.

So I changed nothing except repetition. I ran the same structure across a few days, same order, same pacing. The first run felt clean. The second was slightly weaker. By the third, it felt like the system was no longer reacting with the same sensitivity, even though nothing on my side had changed.

That pattern is hard to explain if you assume every action is evaluated equally.

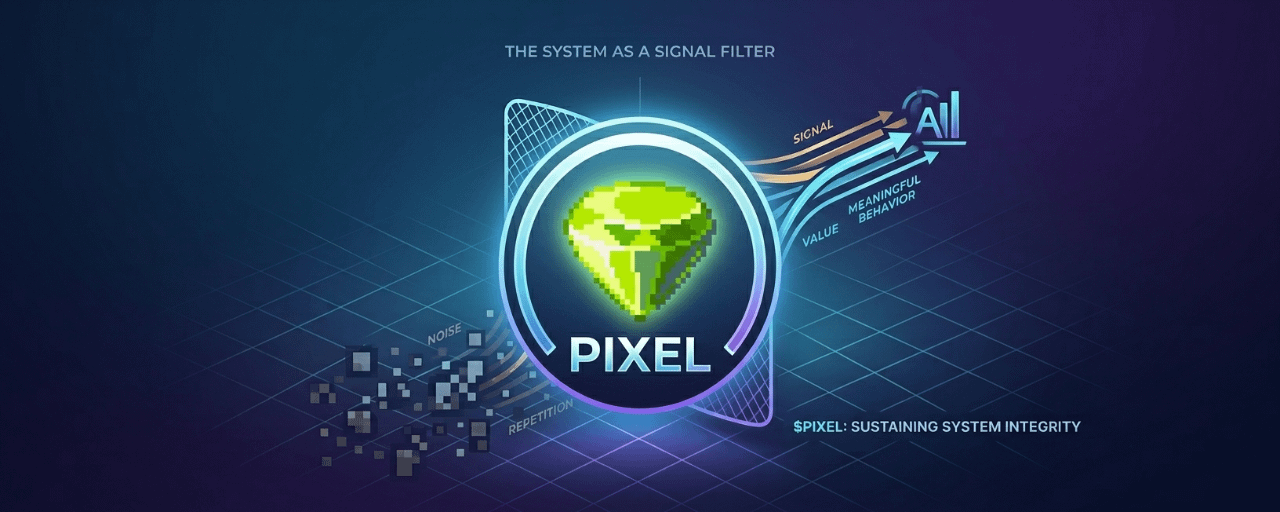

It becomes easier to explain if the system is not treating activity as value by default, but as signal that needs to be filtered. Not all behavior is equally useful to the system, even if it looks identical on the surface. Repetition without variation starts to feel like noise.

I tested this in a small way. Instead of repeating the same flow, I introduced minor changes in how I moved through the session. Not more effort, just less predictability. The response didn’t spike, but it stabilized again. The system felt “awake” in a way it wasn’t when everything was too consistent.

That’s where the perspective shifts. Pixels may not be trying to maximize output per action. It may be trying to evaluate whether the behavior behind those actions is meaningful enough to sustain.

From a technical angle, this suggests a layer that prioritizes behavioral quality over raw volume. Not in a moral sense, but in a structural one. Systems that scale to millions of interactions can’t treat every input equally without becoming exploitable. At some point, they need to decide what counts.

That decision doesn’t have to be visible to be effective. In fact, it usually isn’t. What you see is the outcome, not the filtering process behind it.

This is where Stacked fits in a more precise way than it first appears. If Pixels already needs to distinguish between noise and signal across player behavior, then an AI layer that analyzes cohorts, detects patterns, and adjusts how the system responds is not an add-on. It’s part of maintaining that filter at scale.

The idea of an AI game economist makes more sense here. It’s not about creating rewards, it’s about deciding where responses should actually be allocated so the system doesn’t degrade over time.

That also changes how $PIXEL sits inside the loop. It’s not just flowing through actions, it’s moving through a system that may already be deciding which behaviors deserve to carry weight. That makes it less about how much you do and more about how the system interprets what you do.

I’m still testing this, but the pattern keeps coming back. When everything becomes too repeatable, the system feels less responsive. When behavior carries some variation, even small, the response stabilizes again.

That doesn’t feel like a simple reward loop.

It feels like a system trying to protect itself from becoming predictable.