I’ve been around long enough to stop reacting to new systems the way I used to. There was a time when anything that promised “fair distribution” or “verifiable participation” felt like progress. Now I mostly just watch. Not because I’ve lost interest, but because I’ve seen how these things tend to unfold once the early optimism fades.

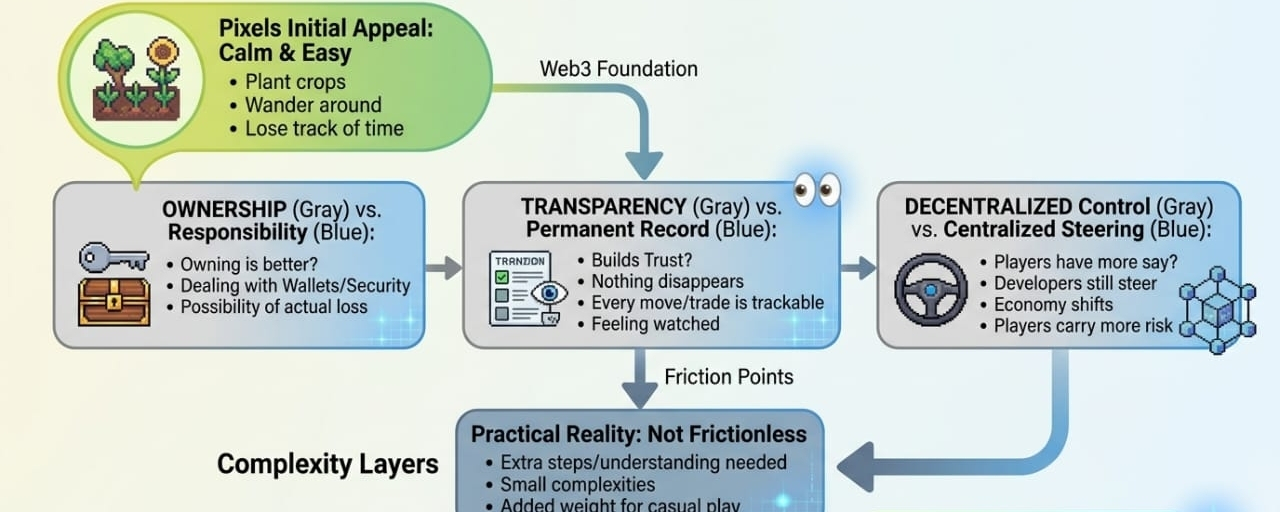

Pixels, at first glance, feels light. A farming game, open-world, a bit of creativity layered on top. It doesn’t take itself too seriously, which is probably part of the appeal. But underneath that simplicity, there’s a quieter structure forming—one that decides who gets rewarded, who gets noticed, and what kind of behavior is considered “real.”

That’s the part I pay attention to.

Any system that ties rewards to some form of credential—whether it’s activity, history, or reputation—eventually runs into the same tension. At the beginning, everything feels natural. People play because they want to explore. The rewards feel like a side effect, not the main objective. It’s loose, almost honest.

But that phase never lasts.

Give it time and people start to notice patterns. Which actions get rewarded more. Which behaviors are tracked. Which signals matter. And once that understanding sets in, things shift. Slowly at first, then all at once. Participation turns into optimization.

You can almost feel it happen.

The same actions that once meant curiosity start to mean intent. Players aren’t just farming crops anymore—they’re farming outcomes. They’re shaping their behavior to fit whatever the system is measuring. And at that point, credentials stop reflecting reality. They start reflecting strategy.

That’s where things get complicated.

Because now the system isn’t just asking “what did this user do?” It’s trying to answer “what does this mean?” And meaning is easy to manipulate when rewards are involved. A wallet might look active, consistent, engaged—but is that genuine, or just well-practiced?

It’s not always obvious. And systems don’t always admit that.

What I’ve noticed over the years is that these systems don’t usually break in dramatic ways. There’s no single moment where everything collapses. Instead, trust starts to thin out. Quietly. You see it in small reactions—people questioning distributions, pointing out patterns that feel off, wondering how certain accounts keep ending up ahead.

Nothing explosive. Just a slow shift in perception.

Developers tend to respond the same way every time. They tighten things. Add more rules, more filters, more criteria. It makes sense; they’re trying to protect the system. But every new layer creates new edges, and edges are where people learn to adapt.

It becomes a cycle.

Users figure out the rules, then figure out how to bend them. The system adjusts, then gets tested again. And somewhere in that loop, the original idea—fair, meaningful participation—gets harder to define.

Pixels is still moving through that process. It hasn’t hit the more uncomfortable phase yet, at least not fully. The incentives are still working in a way that feels mostly aligned. But you can already see the early signs of that shift: the moment when players stop just “playing” and start thinking in terms of return.

That’s not a failure. It’s just what incentives do.

What I find more interesting is how the system handles doubt when it eventually shows up. Because it will. It always does. Not as a crisis, but as a question: are these credentials actually telling the truth?

And maybe more importantly—who gets to decide that?

There’s also a layer people don’t talk about much, which is the credibility of the system itself. Not just whether it works, but whether people believe in how it works. Once users start to feel like outcomes are being shaped in ways they don’t fully understand, or worse, ways that feel uneven, the focus shifts.

It’s no longer about playing or earning. It’s about whether the system deserves trust at all.

That’s a harder thing to fix.

Because trust doesn’t really come from rules. It comes from consistency over time. And time is where most of these systems get tested the hardest. Not in the early days when everything is growing, but later—when growth slows, when rewards feel thinner, when the same participants keep showing up and patterns become easier to notice.

That’s when people start looking closer.

And when they do, they don’t just look at what’s working. They look at what doesn’t quite add up. The edge cases. The outliers. The accounts that seem just a little too perfect. And whether those are exceptions—or signs of something deeper.

I don’t think Pixels is trying to solve all of that. I’m not even sure it should. But if it’s going to rely on credentials and distribution as part of its core loop, then eventually it has to face those questions.

Not by proving that the system is flawless, but by allowing it to be questioned without falling apart.

That’s the part most projects underestimate. They try to design for trust from the start, when what actually matters is how that trust holds up once people begin to doubt it.

And they will.

They always do.

For now, Pixels feels like it’s still in that earlier phase where things are mostly aligned, where participation still looks like participation. But I’ve learned not to take that at face value. Not because I expect it to fail, but because I’ve seen how quickly behavior changes once incentives settle in.

So I watch.

Not with excitement, not with cynicism either. Just a kind of quiet attention. The kind that comes from knowing that trust, in systems like this, isn’t something you build once.

It’s something that gets worn down, tested, reshaped—over and over again.

And you don’t really know what’s left until enough time has passed.