Mira Network is one of those projects I initially overlooked because it does not try to compete with large AI models directly. Instead, it focuses on something less glamorous but more fundamental: whether we can actually trust AI outputs in autonomous systems.

After spending time reading through materials and technical discussions from @Mira - Trust Layer of AI, I realized the core idea is simple. AI models make mistakes. They hallucinate. They reflect bias. They produce confident answers that are sometimes wrong. As AI systems begin interacting with financial contracts, governance mechanisms, and automated agents on-chain, those mistakes stop being harmless.

Mira Network is designed as a decentralized verification layer. Not a model, not a chatbot, not another LLM. A verification protocol.

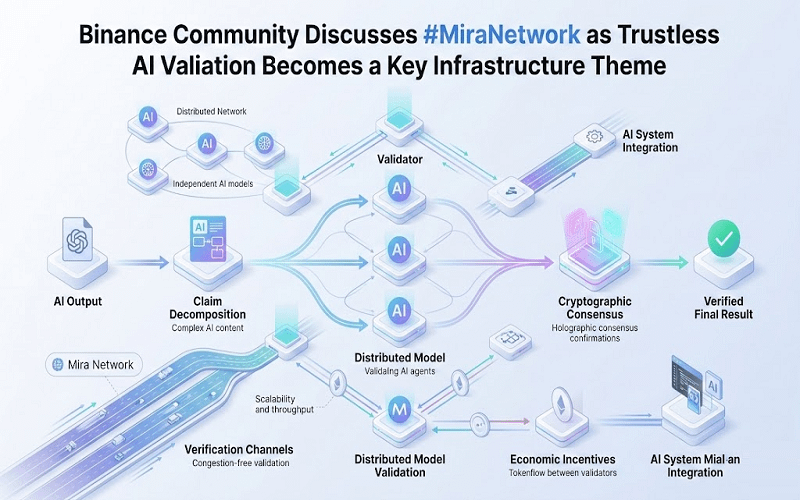

The idea is to treat every AI output as a set of claims rather than a single answer.

Instead of asking “Is this output correct?”, Mira breaks the output into smaller, testable statements. Each statement can then be independently evaluated by multiple AI models running across a distributed network. If enough independent validators reach consensus on the validity of those claims, the result is cryptographically recorded on-chain.
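To make the flow above concrete for myself, I wrote a minimal sketch. It is not Mira's implementation. The sentence-based `split_into_claims`, the `Validator` type, and the two-thirds threshold are all my own assumptions, chosen only to show the shape of "split, vote, accept".

```python
from dataclasses import dataclass
from fractions import Fraction
from typing import Callable, List

# Hypothetical: a validator is any function that judges a single claim.
Validator = Callable[[str], bool]


@dataclass
class ClaimResult:
    claim: str
    approvals: int
    total: int
    accepted: bool


def split_into_claims(output: str) -> List[str]:
    """Naive stand-in for claim extraction: one claim per sentence."""
    return [part.strip() for part in output.split(".") if part.strip()]


def verify_output(
    output: str,
    validators: List[Validator],
    threshold: Fraction = Fraction(2, 3),  # assumed threshold, not from the source
) -> List[ClaimResult]:
    """Ask every validator about every claim and apply the threshold."""
    if not validators:
        raise ValueError("at least one validator is required")
    results = []
    for claim in split_into_claims(output):
        approvals = sum(1 for validate in validators if validate(claim))
        accepted = Fraction(approvals, len(validators)) >= threshold
        results.append(ClaimResult(claim, approvals, len(validators), accepted))
    return results
```

In a real network each validator would be a separate model on a separate node. Here they are plain functions, so the sketch only demonstrates the counting logic.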

It reminds me of how fact-checking works in journalism. You do not trust one source. You cross-check. Except here, the cross-checking is automated, economically incentivized, and anchored to blockchain consensus.

Traditional AI validation systems are centralized. A company trains a model, tests it internally, maybe runs red-team exercises, and then publishes evaluation metrics. Trust depends on the company’s transparency and reputation. If the validation process fails, there is no external accountability layer.

Mira flips that structure.

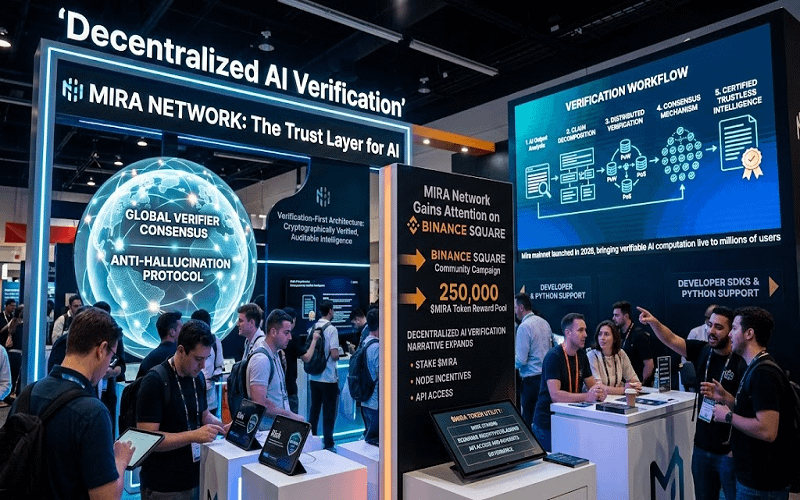

Validation is not owned by a single entity. Independent nodes participate in verifying AI claims. Economic incentives are built around honest validation, using $MIRA as the coordination mechanism. If validators behave dishonestly or carelessly, they can be penalized. If they provide reliable verification, they are rewarded.
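The materials I read do not spell out the exact reward and penalty rules, so the following is only a toy model of the incentive idea. I assume that agreeing with the majority is the proxy for "reliable" and that the `reward` and `slash_rate` values are arbitrary placeholders.

```python
from typing import Dict


def settle_round(
    stakes: Dict[str, float],
    votes: Dict[str, bool],
    reward: float = 1.0,      # hypothetical flat reward per round
    slash_rate: float = 0.1,  # hypothetical fraction of stake lost
) -> Dict[str, float]:
    """Return updated stakes after one verification round."""
    yes_votes = sum(1 for vote in votes.values() if vote)
    no_votes = len(votes) - yes_votes
    if yes_votes == no_votes:
        # No majority: leave every stake unchanged in this toy model.
        return dict(stakes)
    majority = yes_votes > no_votes
    updated = dict(stakes)
    for name, vote in votes.items():
        if vote == majority:
            updated[name] += reward
        else:
            updated[name] -= updated[name] * slash_rate
    return updated
```

A weakness of this simplification is that it punishes an honest minority when the majority is wrong, which is exactly why real incentive design is harder than a few lines of code.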

The trust model shifts from institutional reputation to cryptographic and economic alignment.

This is where blockchain becomes more than a buzzword. Consensus mechanisms ensure that no single validator can unilaterally approve questionable outputs. Cryptographic proofs ensure that verification steps are auditable. The record of what was verified, by whom, and under what consensus threshold becomes part of a transparent ledger.
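One way to picture the auditable record is a hash-chained log, where each entry commits to the one before it. This is a sketch of tamper evidence in general, not of Mira's on-chain format. The field names and the `GENESIS_HASH` constant are hypothetical.

```python
import hashlib
import json
from typing import Dict, List

GENESIS_HASH = "0" * 64  # hypothetical starting value for the chain


def _digest(body: dict) -> str:
    """Hash a record body using a stable JSON encoding."""
    encoded = json.dumps(body, sort_keys=True).encode("utf-8")
    return hashlib.sha256(encoded).hexdigest()


def make_record(
    prev_hash: str, claim: str, votes: Dict[str, bool], threshold: str
) -> dict:
    """Create a record of what was verified, by whom, and under what threshold."""
    body = {
        "prev_hash": prev_hash,
        "claim": claim,
        "votes": votes,
        "threshold": threshold,
    }
    return {**body, "hash": _digest(body)}


def chain_is_valid(records: List[dict]) -> bool:
    """Recompute every hash and check that each record links to the previous one."""
    prev_hash = GENESIS_HASH
    for record in records:
        body = {key: value for key, value in record.items() if key != "hash"}
        if record["prev_hash"] != prev_hash or record["hash"] != _digest(body):
            return False
        prev_hash = record["hash"]
    return True
```

Changing any past vote changes that record's hash, which breaks the check. A blockchain adds consensus on top of this, so no single party can quietly rewrite the log.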

For autonomous AI agents interacting with smart contracts, this matters.

Imagine an AI agent executing trades, allocating capital, or triggering governance proposals. A hallucinated data point could lead to real financial consequences. Mira’s approach introduces a verification checkpoint before execution. It is not about preventing all mistakes. It is about reducing blind trust.
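The checkpoint idea is easy to express as a guard around the action. This is my own illustration: `guarded_execute`, `VerificationFailed`, and the verdict dictionary are hypothetical names, and the verdicts are assumed to come from some earlier verification step.

```python
from typing import Callable, Dict


class VerificationFailed(Exception):
    """Raised when at least one supporting claim was not accepted."""


def guarded_execute(claim_verdicts: Dict[str, bool], action: Callable[[], str]) -> str:
    """Run the action only if every supporting claim passed verification."""
    rejected = [claim for claim, accepted in claim_verdicts.items() if not accepted]
    if rejected:
        raise VerificationFailed(f"unverified claims: {rejected}")
    return action()
```

The guard does not make the action correct. It only ensures that an agent cannot act on a claim that failed the agreed check.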

The interesting part is that Mira does not attempt to judge “truth” in an abstract sense. It validates consistency and agreement across independent models. That is a more practical goal. In distributed systems, perfect truth is less realistic than measurable consensus.

Of course, there are limitations.

Verification across multiple models increases computational costs. Coordinating distributed validators is not trivial. Latency can become an issue for real-time applications. And the broader decentralized AI infrastructure space is becoming crowded, with several protocols exploring similar reliability layers.

There is also the maturity question. Building a robust validator network takes time. Incentive design must be carefully balanced. Early-stage ecosystems often struggle with uneven participation or concentration of validators.

Still, I find the architectural choice thoughtful.

Instead of building a better AI model, Mira Network builds a system that assumes models will fail and designs around that assumption. That feels grounded in reality.

In discussions around #MiraNetwork on Binance, the conversation tends to focus on price or exchange exposure. But technically, the more meaningful question is whether decentralized verification becomes a necessary layer as AI agents move deeper into on-chain environments.

The token $MIRA plays a coordination role rather than being framed as a speculative centerpiece. Its function is tied to validator incentives and governance, which makes sense given the protocol’s purpose.

When I follow updates from #Mira and occasionally check @Mira - Trust Layer of AI, what stands out is not dramatic claims but structural ambition. It is trying to solve a systems-level problem: how to make AI outputs accountable in trustless environments.

Whether that approach scales efficiently remains to be seen.

But the idea that AI should be verified before it is trusted feels less like a trend and more like an inevitability.