I've been noticing something lately while exploring different AI tools and crypto infrastructure. The conversation around artificial intelligence often focuses on how powerful models are becoming — better reasoning, more automation, smarter agents. But the more time I spend experimenting with these systems, the more I realize that power isn’t really the biggest issue anymore. The real challenge is reliability.

AI models today can generate incredibly convincing answers. Sometimes they sound so confident that you almost forget you're talking to a machine. But then you double-check something a model said and realize part of the information is wrong. Maybe the model misunderstood a detail, maybe it mixed up facts, or maybe it simply invented something that sounds believable but isn’t true.

Most people who use AI regularly have experienced this at some point. It’s one of those strange quirks of modern AI — it can sound intelligent even when it’s making mistakes.

In casual situations, this isn't a huge problem. If an AI tool gives the wrong movie release date or mixes up a historical event, it’s easy to correct. But things become very different when AI systems start operating in more serious environments — financial systems, trading bots, research analysis, or automated decision-making.

In those situations, incorrect information can have real consequences.

This is something I started thinking about while exploring projects at the intersection of AI and Web3. Many teams are trying to build smarter models or more capable AI agents, but fewer projects seem to be asking a slightly different question: how do we make AI outputs trustworthy enough for systems that act autonomously?

During that search, I came across a project called Mira Network, and the idea behind it caught my attention.

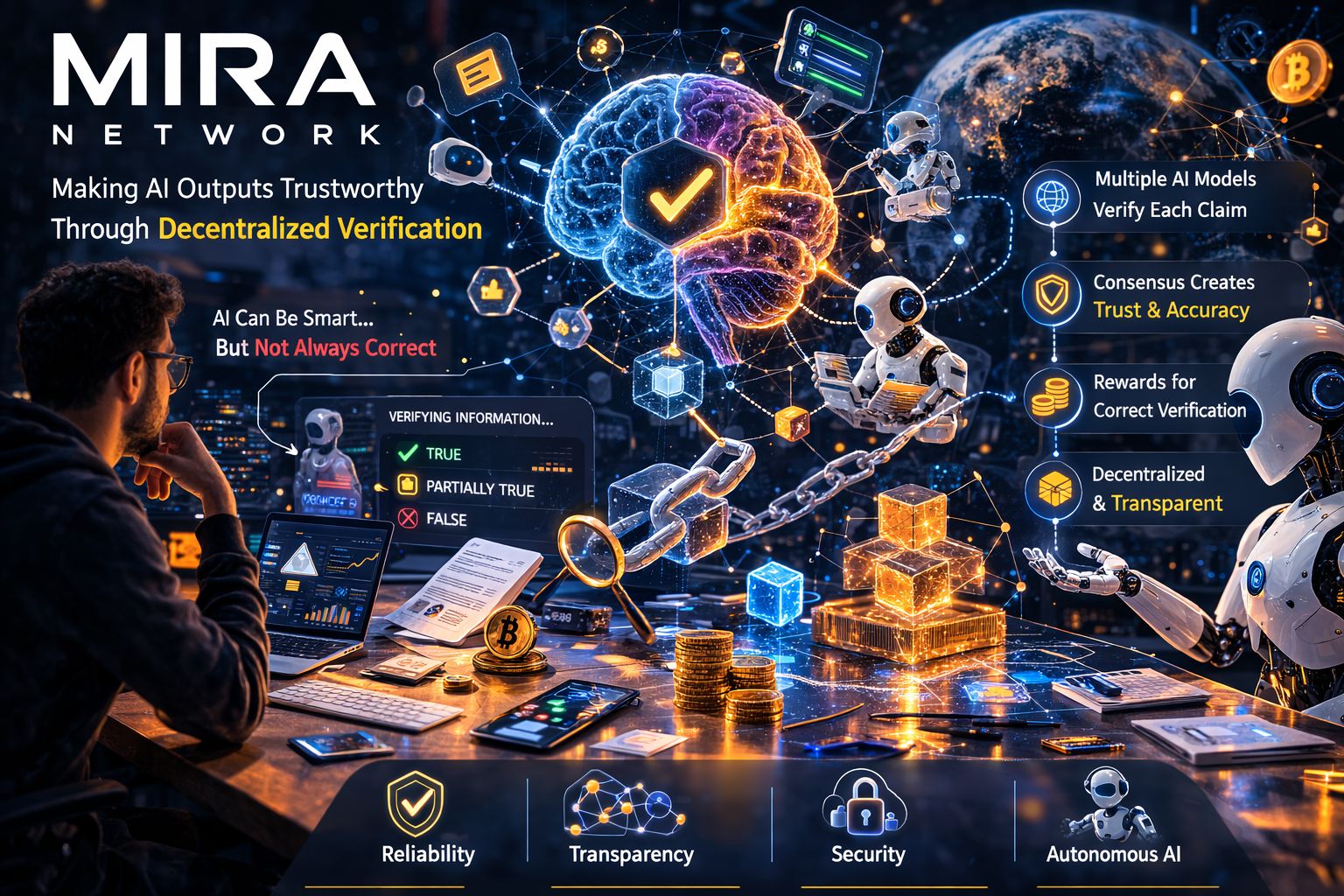

Instead of trying to create a single “perfect” AI model, Mira focuses on a different challenge — verifying whether AI-generated information is actually correct.

When I first read about the concept, it made a lot of sense. AI models don’t truly understand information in the way humans do. They generate responses based on patterns they learned during training. Most of the time those patterns lead to useful answers, but the system doesn’t have a built-in way to prove that what it’s saying is true.

That’s why hallucinations happen.

So if AI systems are going to power autonomous tools in crypto, finance, research, or decentralized governance, we probably need some way to check their outputs before those outputs trigger real actions.

Mira Network appears to be experimenting with exactly that.

The basic idea is actually quite intuitive. Instead of treating an AI response as the final answer, the system breaks the response down into smaller claims that can be examined individually. Those claims are then sent across a network of independent AI models that analyze whether the information seems accurate.

Rather than trusting a single AI model, multiple models review the same claims. When enough of them agree on the validity of a claim, it becomes verified through a kind of consensus process.
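To make the flow concrete for myself, I sketched it in Python. This is only my own toy illustration of the idea described above, not Mira's actual implementation: the sentence-splitting step, the `Verifier` type, the 0.6 approval threshold, and the three toy verifiers are all assumptions I made up for the example.

```python
from dataclasses import dataclass
from typing import Callable, List

# Hypothetical type: a verifier returns True when it judges a claim accurate.
Verifier = Callable[[str], bool]


@dataclass
class ClaimResult:
    claim: str
    approvals: int
    total: int
    verified: bool


def split_into_claims(response: str) -> List[str]:
    """Naive stand-in for claim extraction: treat each sentence as one claim."""
    return [part.strip() for part in response.split(".") if part.strip()]


def verify_response(response: str, verifiers: List[Verifier],
                    threshold: float = 0.6) -> List[ClaimResult]:
    """Mark a claim verified when at least `threshold` of verifiers approve it."""
    if not verifiers:
        raise ValueError("at least one verifier is required")
    results = []
    for claim in split_into_claims(response):
        approvals = sum(1 for verifier in verifiers if verifier(claim))
        verified = approvals / len(verifiers) >= threshold
        results.append(ClaimResult(claim, approvals, len(verifiers), verified))
    return results


# Toy verifiers standing in for independent models (assumption for illustration).
KNOWN_FACTS = {"Paris is the capital of France"}


def careful_a(claim: str) -> bool:
    return claim in KNOWN_FACTS


def careful_b(claim: str) -> bool:
    return claim in KNOWN_FACTS


def sloppy(claim: str) -> bool:
    return True  # approves everything, like a careless reviewer


results = verify_response(
    "Paris is the capital of France. The Moon is made of cheese.",
    [careful_a, careful_b, sloppy],
)
for r in results:
    print(f"{r.claim!r}: {r.approvals}/{r.total} -> verified={r.verified}")
```

In this toy run the first claim gets three of three approvals and passes, while the second gets only the sloppy verifier's vote and fails. A real system would need a far better claim extractor than splitting on periods.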

When I thought about it, the idea felt surprisingly similar to how blockchain systems validate transactions.

In networks like Bitcoin, no single computer decides whether a transaction is valid. Instead, many independent nodes check the same transaction against the same rules and come to an agreement. That distributed verification process is what allows the system to operate without a central authority.

Mira seems to apply a similar logic, but instead of verifying financial transactions, it focuses on verifying information generated by AI.

Another interesting part of the design is that the verification process is tied to economic incentives. Participants in the network who help verify claims can earn rewards for doing the job correctly. If they consistently validate incorrect information, they risk losing credibility or economic benefits.

The idea is that incentives encourage participants to focus on accuracy rather than simply approving whatever the majority says.
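Here is a rough sketch of how I picture that kind of incentive working. To be clear, I don't know Mira's actual reward rules. The stake balances, the fixed reward, the 10% slash rate, and the assumption that each claim eventually gets a resolved answer to score votes against are all hypothetical choices of mine.

```python
from typing import Dict


def settle_round(stakes: Dict[str, float], votes: Dict[str, bool],
                 resolved_truth: bool, reward: float = 1.0,
                 slash_rate: float = 0.1) -> Dict[str, float]:
    """Return new stake balances after one verification round.

    Hypothetical rule: a participant whose vote matches the resolved answer
    earns a fixed reward; one who voted the other way loses a share of stake.
    """
    updated = {}
    for node, stake in stakes.items():
        if votes[node] == resolved_truth:
            updated[node] = stake + reward
        else:
            updated[node] = stake * (1 - slash_rate)
    return updated


stakes = {"node_a": 100.0, "node_b": 100.0}
votes = {"node_a": True, "node_b": False}  # node_b approved the wrong answer
print(settle_round(stakes, votes, resolved_truth=True))
```

The hard part, which this sketch skips entirely, is where `resolved_truth` comes from. If it is just the majority vote, the rule rewards agreeing with the crowd rather than being right.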

What I find fascinating about this approach is that it introduces something AI currently lacks — a trust layer.

Right now, when we interact with AI systems, we usually rely on intuition. We either trust the answer or we manually fact-check it ourselves. There isn’t much infrastructure designed specifically to verify AI outputs in a decentralized way.

But if AI agents start interacting with blockchain systems, that gap becomes important.

Imagine an AI assistant managing parts of a DeFi strategy, analyzing on-chain data, or helping coordinate decentralized governance. If the AI misinterprets information or produces incorrect data, automated systems might execute actions based on those mistakes.

Even small errors could have financial consequences.

A verification network like Mira could potentially act as a buffer between AI-generated information and real-world execution. Instead of blindly trusting the output of a single model, applications could rely on information that has been validated by a distributed network.
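In code, that buffer could be as simple as a gate in front of whatever function performs the action. This is a minimal sketch under my own assumptions: `execute_if_verified`, the `(claim, verified)` tuples, and the `rebalance` action are hypothetical names, not part of any real API.

```python
from typing import Callable, Iterable, Tuple


def execute_if_verified(claims: Iterable[Tuple[str, bool]],
                        action: Callable[[], str]) -> str:
    """Run `action` only when every claim it depends on has been verified."""
    unverified = [text for text, ok in claims if not ok]
    if unverified:
        # Refuse to act and report which claims failed verification.
        return "blocked: " + "; ".join(unverified)
    return action()


def rebalance() -> str:
    # Placeholder for a real on-chain action (assumption for illustration).
    return "rebalanced"


print(execute_if_verified([("pool APY is 4%", True)], rebalance))
print(execute_if_verified([("pool APY is 4%", True),
                           ("token is audited", False)], rebalance))
```

The design choice here is to fail closed: a single unverified claim stops the action, which trades some responsiveness for safety.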

Of course, like many early Web3 ideas, this concept raises plenty of questions.

One challenge is that verifying knowledge isn’t always straightforward. If multiple AI models are checking each other’s work, they might still share similar biases or limitations from their training data. Agreement between models doesn’t necessarily guarantee perfect accuracy.
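A bit of arithmetic shows why shared biases matter so much. The sketch below compares two idealized extremes that I chose for illustration: verifiers whose mistakes are fully independent, and verifiers that all make the same mistakes. The 10% per-model error rate is an assumed number, not a measurement of any real model.

```python
from math import comb


def majority_error_independent(p: float, n: int) -> float:
    """Chance that a majority of n independent verifiers is wrong,
    when each one errs with probability p (n assumed odd)."""
    k_min = n // 2 + 1  # smallest number of wrong votes that forms a majority
    return sum(comb(n, k) * p**k * (1 - p) ** (n - k)
               for k in range(k_min, n + 1))


def majority_error_fully_correlated(p: float, n: int) -> float:
    """If all verifiers make identical mistakes, adding more of them
    does not help: the majority is wrong whenever any one is."""
    return p


for n in (3, 5):
    independent = majority_error_independent(0.1, n)
    correlated = majority_error_fully_correlated(0.1, n)
    print(f"n={n}: independent={independent:.5f}, correlated={correlated:.5f}")
```

With three independent verifiers the majority is wrong about 2.8% of the time, and with five about 0.86%. With fully correlated verifiers it stays at 10% no matter how many you add. Real models presumably sit somewhere between those two extremes.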

There’s also the issue of efficiency. AI outputs can contain a lot of information, and verifying every claim across a distributed network might require significant computing resources. For real-world applications, the system would need to remain fast and scalable.

Another complexity involves incentives. Designing economic systems that reward accurate verification without encouraging manipulation is not easy. Blockchain networks have spent years refining their incentive structures, and AI verification could introduce new layers of difficulty.

But even with those uncertainties, the direction itself feels meaningful.

For more than a decade, blockchain technology has been focused on solving one major problem: how to create trust in digital systems without relying on centralized authorities. At the same time, artificial intelligence has been expanding what machines can do with information.

The challenge now is that AI produces information probabilistically, while decentralized systems often require reliable, verifiable data, because automated logic acts on whatever input it is given.

Projects like Mira seem to be exploring ways to bridge that gap.

If systems like this work, they could support a future where autonomous AI agents operate safely within decentralized networks. Verified information could power automated trading strategies, decentralized research tools, or collaborative networks of AI agents that share trustworthy knowledge.

It also hints at a broader idea: instead of just building smarter AI, we might start building infrastructure that helps verify intelligence itself.

And in a world where AI is increasingly shaping decisions, markets, and digital systems, that kind of infrastructure might become just as important as the models generating the answers.

We’re still early in that journey, but projects exploring AI verification networks are starting to point toward a future where decentralized systems don’t just secure money — they also help secure information.