A verification worker stalled last Tuesday.

Not crashed.

Not failed.

Just… stalled.

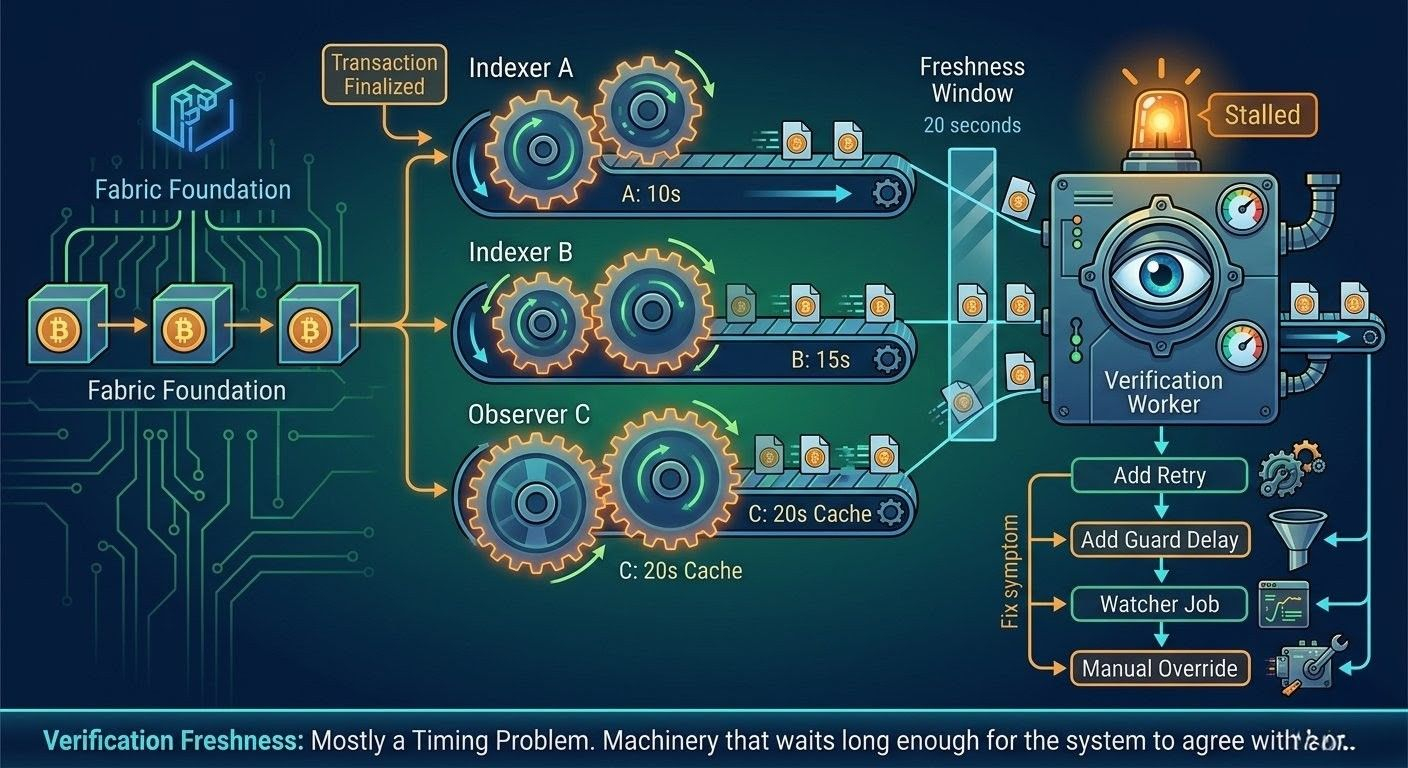

The worker is part of a settlement pipeline in the Fabric Foundation infrastructure, and that pipeline processes events related to the $ROBO token. Its job is simple: confirm that a state update has propagated across several observers before the system moves to the next step.

In theory it’s a short process.

Transaction finalizes.

Indexers observe it.

Verification passes.

The entire flow is supposed to finish in under thirty seconds.

That’s what the diagrams say.

But in production the worker sat there for almost four minutes waiting for the final confirmation.

Nothing looked broken. The transaction was finalized on-chain. Our primary node saw it immediately. One indexer updated. Another took longer. A third observer still reported the previous state.

So the verification task stayed open.

It waited because the rule says verification requires agreement across all observers within a freshness window.

That window was set to twenty seconds.

Which sounds reasonable until you run the system long enough.

Because “freshness” turns out to depend on several independent clocks.

The chain clock.

Node sync cycles.

Indexer polling intervals.

Cache expiration windows.

They are all slightly different.

One indexer refreshes every 10 seconds. Another every 15. One service caches RPC responses for 20 seconds to reduce load. Meanwhile the verification worker expects agreement inside a 20-second window.

On paper that seems aligned.

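In reality those timers overlap in strange ways. A response served from the 20-second cache can already be close to twenty seconds old, which leaves the 20-second window almost no margin.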

Sometimes the observers agree instantly.

Sometimes they drift just enough that verification misses the window even though the system is technically consistent.
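To see how the timers clash, here is a minimal sketch that models each observer as a periodic timer and measures how long after finalization the slowest one picks up the new state. The 10-, 15- and 20-second periods come from the setup above. The phase offsets, the names in `TIMERS`, and the assumption that the RPC cache refreshes on a fixed 20-second schedule are all hypothetical.

```python
import math

# Hypothetical timers: periods come from the post, phase offsets are made up.
# Each entry is (period_s, phase_s); a timer fires at phase + k * period.
TIMERS = {
    "indexer-a (10 s poll)": (10.0, 3.0),
    "indexer-b (15 s poll)": (15.0, 7.0),
    "rpc-cache (20 s TTL)": (20.0, 0.0),  # assumes a fixed refresh schedule
}

def next_tick(t: float, period: float, phase: float) -> float:
    """First tick of a timer firing at phase + k * period that is at or after t."""
    k = math.ceil((t - phase) / period)
    return phase + k * period

def agreement_lag(finalized_at: float) -> float:
    """Seconds from finalization until the slowest timer has picked up the new state."""
    last_pickup = max(next_tick(finalized_at, p, ph) for p, ph in TIMERS.values())
    return last_pickup - finalized_at

if __name__ == "__main__":
    # Sweep finalization times across one minute in half-second steps.
    lags = [agreement_lag(i * 0.5) for i in range(120)]
    print(f"best case:  {min(lags):.1f} s")
    print(f"worst case: {max(lags):.1f} s (window is 20 s)")
```

Under these assumptions the sweep's worst case is 19.5 seconds (the true bound approaches 20 seconds as the sampling gets finer), so any indexing or processing delay on top of the timers pushes agreement past the window.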

Nothing is wrong.

But the worker still waits.
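The rule the worker enforces can be sketched as a predicate. This is a minimal version, assuming that "agreement within a freshness window" means every observer reports the expected state and no report is older than the window at check time. The `Observation` type, its fields, and `verification_passes` are hypothetical names, not the pipeline's actual code.

```python
from dataclasses import dataclass

FRESHNESS_WINDOW_S = 20.0  # the window from the post

@dataclass(frozen=True)
class Observation:
    observer: str       # hypothetical observer name, e.g. "indexer-b"
    state_hash: str     # state the observer currently reports
    observed_at: float  # when that report was produced, in seconds

def verification_passes(observations: list[Observation], expected_hash: str,
                        now: float, window_s: float = FRESHNESS_WINDOW_S) -> bool:
    """True only if every observer reports the expected state with a fresh report."""
    if not observations:
        return False  # no observers means nothing has been confirmed
    for obs in observations:
        if obs.state_hash != expected_hash:
            return False  # this observer still reports an older state
        if now - obs.observed_at > window_s:
            return False  # the report is too old to count as agreement
    return True
```

With this shape, an observer that holds the correct state but was read through a stale cache fails the check exactly like one that reports the wrong state, which is how a consistent system ends up with an open verification task.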

At first we treated it like a transient issue.

Someone added a retry.

If verification fails freshness checks, wait thirty seconds and try again.
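That first fix amounts to a fixed-delay retry loop. A minimal sketch, assuming the check is a zero-argument callable and adding a `max_attempts` cap that the original fix may not have had. `verify_with_retry` and its parameters are hypothetical; `sleep` is injectable so the loop can be tested without actually waiting.

```python
import time
from typing import Callable

RETRY_DELAY_S = 30.0  # fixed wait between attempts, from the post

def verify_with_retry(check: Callable[[], bool],
                      max_attempts: int = 5,             # hypothetical cap
                      delay_s: float = RETRY_DELAY_S,
                      sleep: Callable[[float], None] = time.sleep) -> int:
    """Run check() until it passes; return the attempt number that passed."""
    for attempt in range(1, max_attempts + 1):
        if check():
            return attempt
        if attempt < max_attempts:
            sleep(delay_s)  # wait out the observers' refresh cycles, then try again
    raise TimeoutError(f"verification failed {max_attempts} times in a row")
```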

Simple.

And for a while that fixed the symptoms.

But then something else started happening.

Retry loops began to interact with queue pressure.

When verification tasks retried, they re-entered the queue behind newer tasks. Meanwhile, new transactions kept creating more verification jobs. The system didn’t collapse, but latency started spreading through the pipeline.
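A toy queue simulation shows why retries that re-enter at the back stretch latency. It assumes one verification per step, a steady arrival of new jobs, and tasks that fail exactly once; `simulate_requeue` and the task names are hypothetical.

```python
from collections import deque

def simulate_requeue(initial: list[str], arrivals_per_step: int,
                     fail_once: set[str], steps: int) -> dict[str, int]:
    """Return the step at which each task finished verifying.

    Simplifications (assumptions, not the real pipeline): one task is
    verified per step, tasks in `fail_once` fail their first attempt,
    and a failed task is re-appended to the back of the queue.
    """
    queue = deque(initial)
    attempts: dict[str, int] = {}
    finished: dict[str, int] = {}
    next_id = 0
    for step in range(steps):
        # New transactions keep producing verification jobs.
        for _ in range(arrivals_per_step):
            queue.append(f"new-{next_id}")
            next_id += 1
        if not queue:
            continue  # nothing to verify this step
        task = queue.popleft()
        attempts[task] = attempts.get(task, 0) + 1
        if task in fail_once and attempts[task] == 1:
            queue.append(task)  # the retry lands behind every newer job
        else:
            finished[task] = step
    return finished
```

With `initial=["t0", "t1", "t2"]`, one arrival per step and `fail_once={"t0"}`, task `t0` fails at step 0 and only finishes at step 4, after `new-0`, a job that arrived after it. The deeper the queue, the further back the retry lands.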

Verification sometimes completed two or three retries later.

Not because the system was incorrect.

Just because observers noticed the state at different times.

So we added a guard delay.

If the queue is deep, verification waits before retrying.
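The guard delay can be sketched as a retry delay that grows with queue depth. The 30-second base comes from the earlier retry; the threshold, per-task increment, and cap are hypothetical values chosen for illustration, and linear growth is just one possible policy, not necessarily the one the pipeline uses.

```python
BASE_RETRY_DELAY_S = 30.0     # the fixed retry delay from the first fix
DEEP_QUEUE_THRESHOLD = 100    # hypothetical: depth at which backing off starts
GUARD_DELAY_PER_TASK_S = 0.5  # hypothetical: extra wait per task above the threshold
MAX_GUARD_DELAY_S = 120.0     # hypothetical: cap so a retry is never parked forever

def retry_delay(queue_depth: int) -> float:
    """Seconds to wait before retrying a verification, given the current queue depth."""
    if queue_depth <= DEEP_QUEUE_THRESHOLD:
        return BASE_RETRY_DELAY_S
    extra = (queue_depth - DEEP_QUEUE_THRESHOLD) * GUARD_DELAY_PER_TASK_S
    return BASE_RETRY_DELAY_S + min(extra, MAX_GUARD_DELAY_S)
```

The cap matters: without it, a burst of traffic could push retries so far out that the watcher, not the retry, ends up finishing the task.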

That smoothed things out again.

Later we added a watcher job that scans for verification tasks stuck longer than five minutes. If it finds one, it refreshes the observer queries and restarts the check.
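The watcher job can be sketched as a periodic scan over open tasks. Only the five-minute threshold comes from the text; `VerificationTask`, `run_watcher`, and the two callbacks are hypothetical stand-ins for whatever refreshes observer queries and re-runs the check in the real system.

```python
from dataclasses import dataclass
from typing import Callable

STUCK_AFTER_S = 5 * 60  # five minutes, from the post

@dataclass
class VerificationTask:
    task_id: str
    started_at: float   # when the current verification attempt began, in seconds
    done: bool = False

def run_watcher(tasks: list[VerificationTask], now: float,
                refresh_observers: Callable[[str], None],
                restart_check: Callable[[VerificationTask], None]) -> list[str]:
    """Restart every open task older than STUCK_AFTER_S; return their ids."""
    restarted = []
    for task in tasks:
        if task.done or now - task.started_at <= STUCK_AFTER_S:
            continue  # finished, or still within its time budget
        refresh_observers(task.task_id)  # re-query observers instead of reusing old reads
        task.started_at = now            # the restarted check gets a fresh budget
        restart_check(task)
        restarted.append(task.task_id)
    return restarted
```

Resetting `started_at` gives each restarted check a fresh five-minute budget, so the watcher does not restart the same task on every scan.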

Then someone added an indexer refresh pipeline.

Then manual verification overrides for operators during high-load periods.

None of these were part of the original design.

But gradually they became necessary.

And over time it became obvious that these fixes were doing something important.

They were coordinating time.

The protocol coordinates state transitions.

But the operational system coordinates when different services notice those transitions.

Retries manage observation delay.

Watcher jobs manage attention.

Guard delays manage queue pressure.

Manual checks manage uncertainty.

The protocol still defines correctness.

But the infrastructure defines when correctness becomes visible.

That distinction matters more than we expected.

Because distributed systems rarely fail due to incorrect state.

They fail because parts of the system see the correct state at different times.

And verification logic is extremely sensitive to that difference.

After running the Fabric Foundation infrastructure around $ROBO for months, the verification layer started looking less like a security mechanism and more like a timing negotiation between services.

The blockchain finalizes quickly.

Observers eventually converge.

But everything in between is waiting.

Waiting for caches to expire.

Waiting for indexers to refresh.

Waiting for retries to line up.

Which leads to a slightly uncomfortable realization.

The protocol guarantees agreement.

But the operations layer decides when that agreement counts.

And most of the engineering effort isn’t spent defining the protocol.

It’s spent quietly building the machinery that waits long enough for the system to agree with itself.

#robo @Fabric Foundation $ROBO