I've noticed that most discussions about robotics in Web3 focus on intelligence: better models, smarter agents, more capable machines. But the bigger problem isn't intelligence. It's coordination.

We are heading toward a future in which machines do not work in isolation. Autonomous robots will share environments, exchange data, and rely on outputs from systems they do not control. In that setting, the real bottleneck is not capability but whether these systems can coordinate without being forced under centralized control.

This coordination problem is where I think Fabric Protocol is more interesting than it first appears.

Today, most robotic systems operate inside closed loops. A logistics company deploys robots, manages their software, and checks the quality of their output internally. Trust is implicit because everything sits under a single authority. But as soon as robots start working across organizational boundaries, with different owners, different data sources, and different goals, that model begins to break down.

What replaces it is not simply better software. It requires a shared foundation on which machines can agree on what is true.

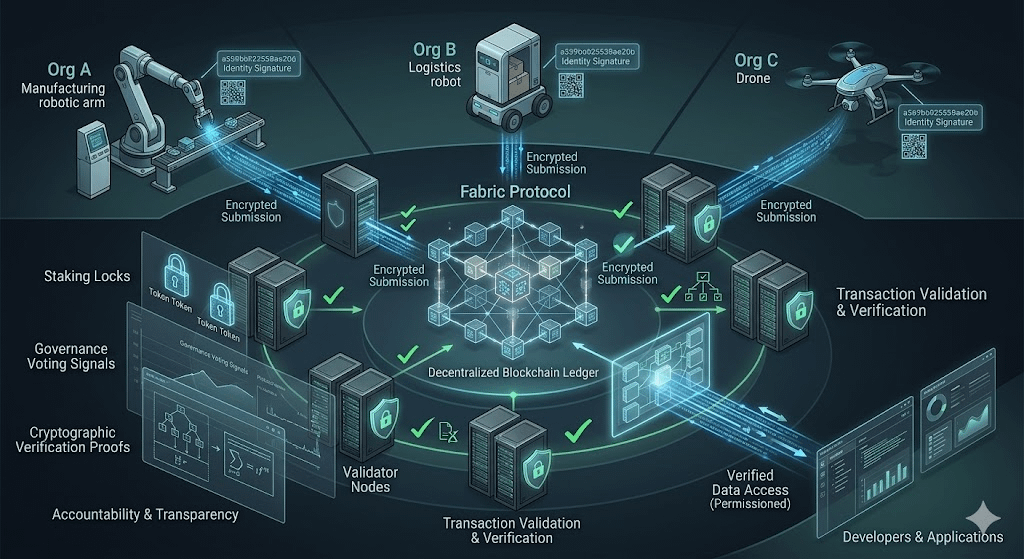

Fabric Protocol addresses this by establishing a public coordination layer built on verifiable computing. Instead of asking participants to trust what a robot reports, the system lets them check that the result is correct. A robot does not simply claim that it completed a task or produced some data; it produces a computation that others can verify independently.

I consider this shift subtle but significant. It redefines robotics from an execution system into a verifiable interaction system.

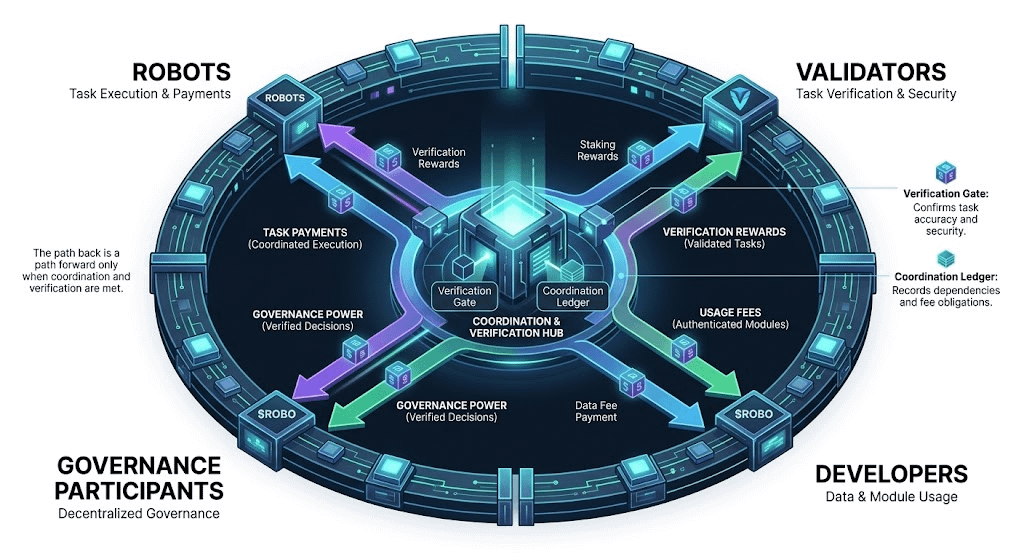

The protocol itself acts as neutral ground where robots, developers, and validators meet. Robots produce data and computation results. Validators check the accuracy of those results. Developers build applications on verified data rather than raw, unchecked data. All of this is anchored by a public ledger that forms a shared record of machine activity.
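To make those roles concrete, here is a minimal Python sketch. It assumes the simplest form of verification: a validator re-runs a deterministic task and compares the result with the robot's claim. Accepted claims are then appended to a hash-chained log that stands in for the public ledger. Every name here (`Claim`, `Ledger`, `validate`, and the `plan_route` task) is hypothetical and purely illustrative. It is not Fabric's API, and the protocol may well use other verification schemes, such as cryptographic proofs, instead of re-execution.

```python
import hashlib
import json
from dataclasses import dataclass, field

GENESIS = "0" * 64  # placeholder "previous hash" for the first ledger entry


def digest(obj: dict) -> str:
    """SHA-256 over a canonical (key-sorted) JSON encoding of obj."""
    return hashlib.sha256(json.dumps(obj, sort_keys=True).encode()).hexdigest()


def plan_route(waypoints: list[list[int]]) -> int:
    """Hypothetical deterministic task: Manhattan length of a grid route."""
    return sum(
        abs(x2 - x1) + abs(y2 - y1)
        for (x1, y1), (x2, y2) in zip(waypoints, waypoints[1:])
    )


@dataclass
class Claim:
    """What a robot reports: its id, the task inputs, and its claimed output."""
    robot_id: str
    inputs: list[list[int]]
    output: int


def validate(claim: Claim) -> bool:
    """A validator re-executes the task instead of trusting the robot's report."""
    return plan_route(claim.inputs) == claim.output


@dataclass
class Ledger:
    """Append-only log where each entry commits to the hash of the previous one."""
    entries: list[dict] = field(default_factory=list)

    def append(self, claim: Claim) -> str:
        prev = self.entries[-1]["hash"] if self.entries else GENESIS
        body = {"robot": claim.robot_id, "inputs": claim.inputs,
                "output": claim.output, "prev": prev}
        self.entries.append({**body, "hash": digest(body)})
        return self.entries[-1]["hash"]

    def is_intact(self) -> bool:
        """Recompute every hash; editing any past entry breaks the chain."""
        prev = GENESIS
        for entry in self.entries:
            body = {k: entry[k] for k in ("robot", "inputs", "output", "prev")}
            if entry["prev"] != prev or digest(body) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True


# Usage: only claims that survive re-execution reach the ledger.
ledger = Ledger()
honest = Claim("robot-a", [[0, 0], [3, 4], [6, 8]], 14)  # true length is 14
dishonest = Claim("robot-b", [[0, 0], [1, 1]], 5)        # true length is 2
for claim in (honest, dishonest):
    if validate(claim):
        ledger.append(claim)

print(len(ledger.entries), ledger.is_intact())  # 1 True
```

Re-execution only works for tasks that are deterministic and reproducible in software. A validator cannot simply re-run a claim about something a robot did in the physical world, so this sketch covers only the easy end of the verification problem.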

The $ROBO token ties these relationships together into an economic system. Rather than being a passive asset, $ROBO is the medium through which coordination is sustained. Validators are incentivized to check computations, developers are rewarded for building on trustworthy data, and governance participants shape the network's development as new applications emerge.

What interests me is that the token does not try to manufacture demand artificially. It becomes relevant only if the network actually coordinates useful machine activity. If robots are not producing verifiable work, the token has no meaningful role. That ties economic value to real infrastructure use more tightly than is typical of more abstract blockchain systems.

Fabric's recent ecosystem moves, especially the push to open the network to more developers and to encourage integration of agent-based applications, suggest it is trying to take this coordination layer beyond theory. The goal is not so much to bring in more users as to route more machine-generated interactions through the network. In that sense, it is less about scaling transactions than about scaling interoperable machine behavior.

Still, coordination at this scale brings its own challenges. Verification has to stay efficient enough to support real-time or near-real-time machine activity. The physical world is messy, and there is no easy way to turn real-world actions into verifiable digital evidence. There is also the question of adoption: whether developers and organizations will be willing to build on shared infrastructure rather than keep their own proprietary systems.

These aren't small obstacles. In fact, they may decide whether protocols like Fabric remain niche experiments or become real infrastructure.

What interests me is where this is heading. As AI agents become more autonomous and more integrated into physical systems, machine-to-machine interaction is likely to grow sharply. Without a coordination layer, those interactions will stay fragmented, confined to isolated ecosystems.

Fabric is essentially proposing that coordination itself can be decentralized: machines do not have to accept results simply because an authority issued them.

I don't see this as a short-term play. It looks more like early groundwork for a different kind of network, one where machines are not merely tools but agents participating in the same economic systems.

And if that future unfolds the way it is starting to, the question will not be how intelligent machines become.

It will be whether they can agree on what actually happened.