I did not expect the most real part of the ROBO whitepaper to feel weirdly close to office life 😂

I went back through the whitepaper again, and this time I kept thinking about something funny. Underneath all the robotics language and protocol design, this thing almost reads like HR for machines. I’m serious 😅 Just hear me out. Not the boring corporate kind. I mean the actual structure every work system ends up needing once performance starts to matter. Who did the job? Was the work good enough? Was it done consistently? Can someone challenge it? What happens if the output is bad? Who gets rewarded for a job well done, and who is held accountable if things go wrong??

At first, it seemed like a pretty basic concept. But the more I sat with it, the more I felt this might be one of the smartest parts of the whole ROBO idea.

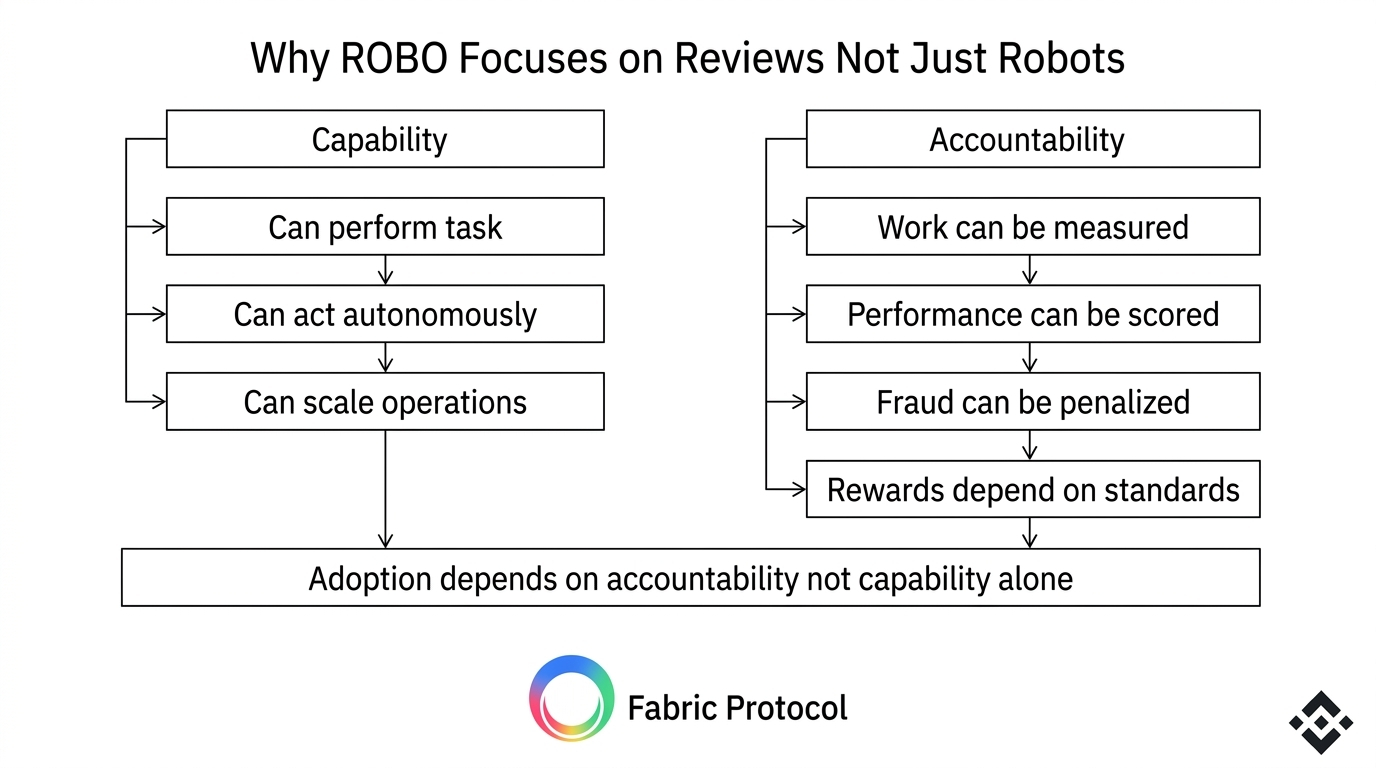

Most people still talk about AI and robotics as if capability is the only thing that matters. Can the system think better? Can it move better? Can it automate more tasks? Sure, that matters. But let's be real, that's not all that matters. In real life nobody pays for capability alone. People pay for reliable performance. If a machine is going to do useful work in warehouses, logistics or infrastructure, then the real question becomes brutally practical. How do you know the work was actually done well??🤐👀

That is where Fabric started to make sense to me.🥳

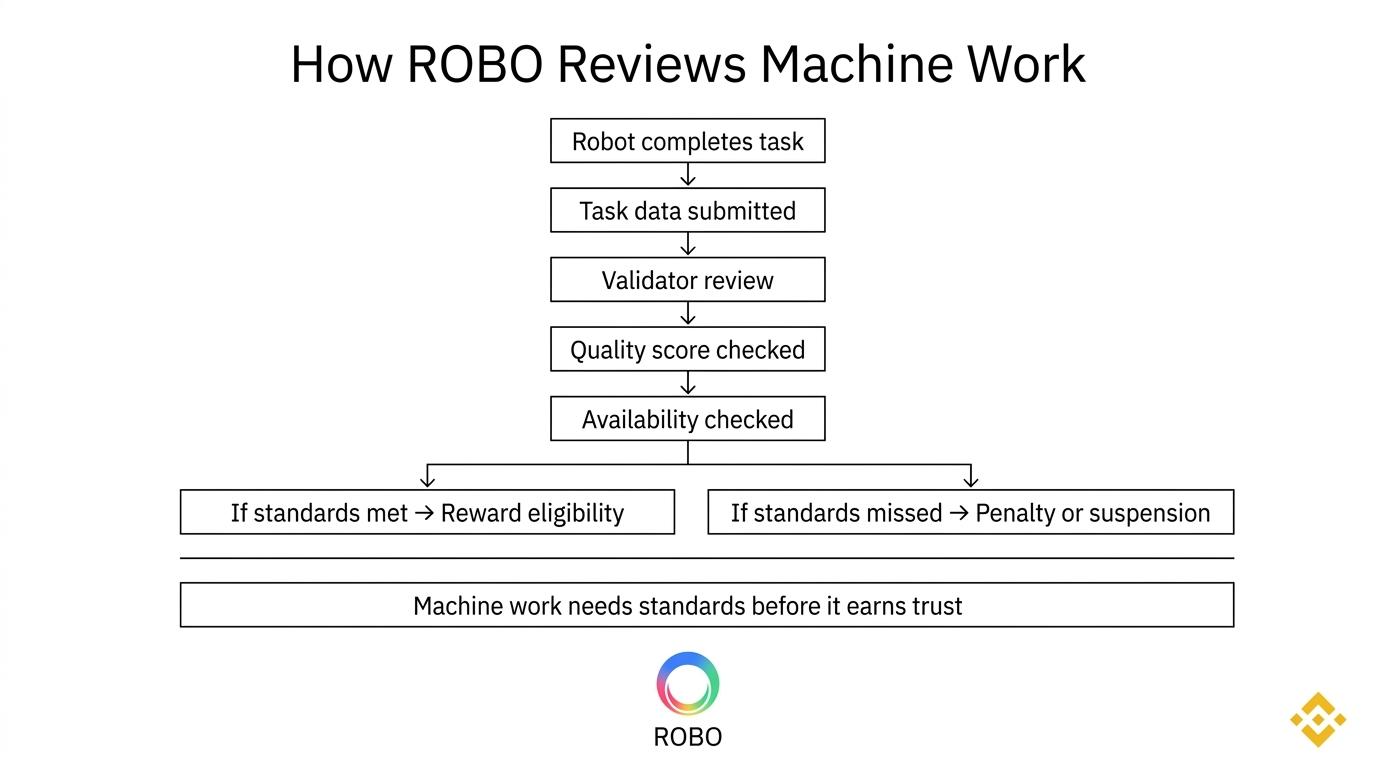

The whitepaper is not just building a story around robot participation. It is trying to build an accountability layer around robot labor. That means machine work is not treated like magic. It is treated like something that has to be measured, scored, challenged and enforced. Honestly, I’m a little surprised more people aren't focusing on this, because it feels much more grounded than the usual robot-future pitch.

And there is already real context for why this matters. According to the International Federation of Robotics, there are now more than 4 million industrial robots operating worldwide. That number keeps growing as automation moves deeper into manufacturing and logistics. So this is not some far-off sci-fi question anymore. Machines are already part of production systems. The gap is that most of them still do not live inside open economic frameworks that can evaluate their work the way human labor gets evaluated. That gap is exactly what ROBO is trying to fill.

The whitepaper stopped feeling like a token pitch to me and started feeling like a draft performance review system for machines.

That line kept bouncing around in my head.

The reason is simple. Fabric does not just say robots can do tasks and get paid. It adds standards. It sets operating requirements. It uses work bonds. It introduces validators. It defines contribution scores. It creates penalties for fraud and unreliability. The protocol is trying to answer the annoying but necessary questions that every real work system eventually faces.

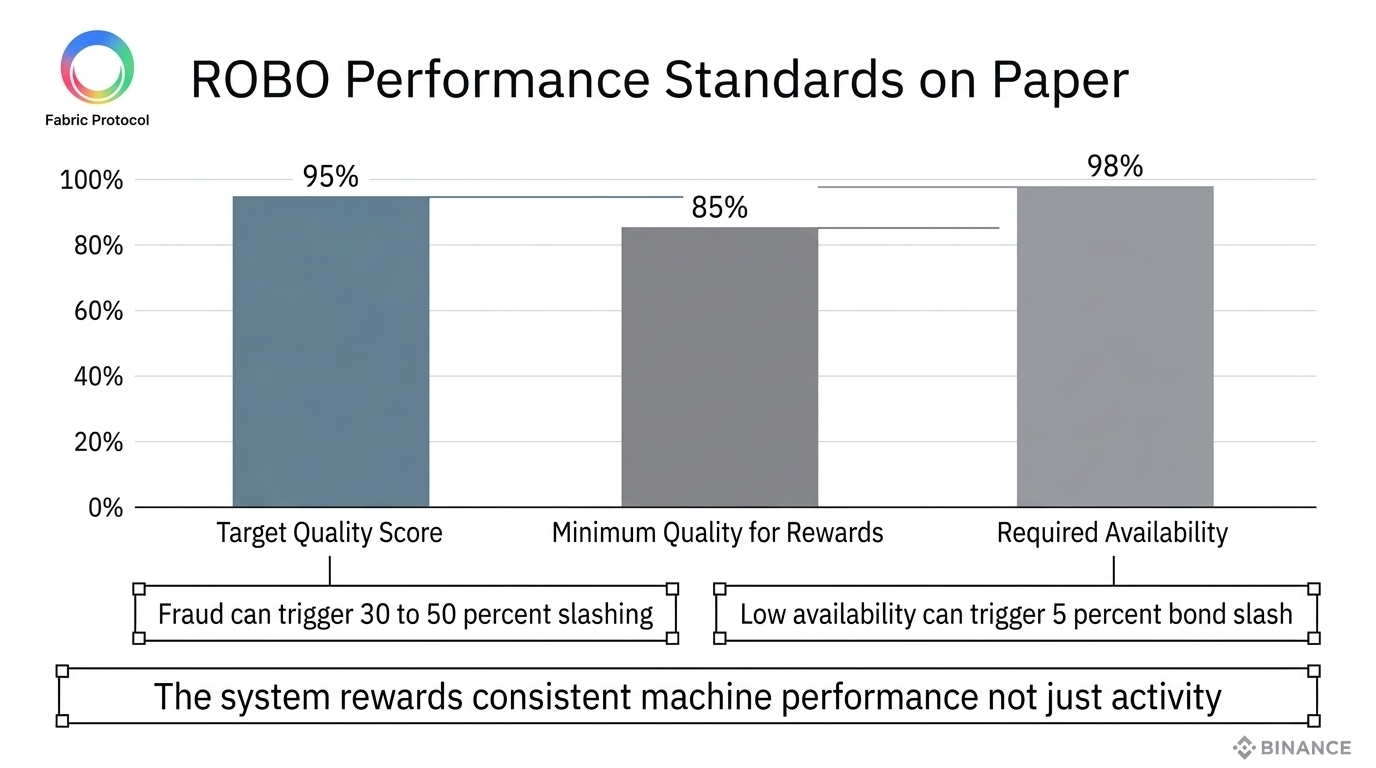

The details here actually matter. The whitepaper uses a target quality score of 0.95 in its adaptive emission design and limits adjustments to 5 percent per epoch as a kind of circuit breaker. It also sets a hard availability expectation of 98 percent over a 30-day epoch and an 85 percent quality floor for reward eligibility. If fraud is proven, the system can slash 30 to 50 percent of the earmarked task stake. That is not decorative tokenomics. That is a framework saying performance has consequences.
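
To make those numbers a bit more concrete, here is a minimal Python sketch. The thresholds are the ones quoted above. Everything else is my own assumption for illustration, not the whitepaper's actual formulas: the function names, the rule that emission moves by the relative gap between measured and target quality, and the hypothetical `severity` knob for picking a point inside the 30 to 50 percent slashing range.

```python
# Hypothetical sketch. Parameter values are the whitepaper numbers quoted above;
# function names and update rules are illustrative assumptions only.

TARGET_QUALITY = 0.95        # target quality score in the adaptive emission design
MAX_EPOCH_ADJUSTMENT = 0.05  # circuit breaker: emission moves at most 5% per epoch
AVAILABILITY_FLOOR = 0.98    # required availability over a 30-day epoch
QUALITY_FLOOR = 0.85         # minimum quality score for reward eligibility
SLASH_RANGE = (0.30, 0.50)   # share of earmarked task stake slashable on proven fraud


def next_emission(current: float, observed_quality: float) -> float:
    """Assumed rule: scale emission by the relative quality gap, clamped to +/-5%."""
    gap = (observed_quality - TARGET_QUALITY) / TARGET_QUALITY
    step = max(-MAX_EPOCH_ADJUSTMENT, min(MAX_EPOCH_ADJUSTMENT, gap))
    return current * (1 + step)


def reward_eligible(availability: float, quality: float) -> bool:
    """An operator must clear both floors in the epoch to earn rewards."""
    return availability >= AVAILABILITY_FLOOR and quality >= QUALITY_FLOOR


def slash_amount(earmarked_stake: float, severity: float) -> float:
    """Pick a slash inside the 30-50% range; `severity` in [0, 1] is hypothetical."""
    low, high = SLASH_RANGE
    severity = max(0.0, min(1.0, severity))  # keep severity inside [0, 1]
    return earmarked_stake * (low + (high - low) * severity)


if __name__ == "__main__":
    print(next_emission(1000.0, 0.50))  # a terrible epoch only pulls emission down 5%
    print(reward_eligible(0.97, 0.99))  # great quality, but availability below 98%
    print(slash_amount(100.0, 1.0))     # worst-case fraud slashes half the stake
```

The clamp is the interesting design choice: even a disastrous epoch (quality 0.50) only moves emission from 1000 to 950, so one noisy measurement can't swing the whole system.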

And yeah, I know how funny that sounds. We really might be heading toward a future where robots get performance-scored before some managers do. 😭 But jokes aside, this part of the paper made the whole project feel more serious to me. Not safer. Not guaranteed. Just more serious.

Because in real life every labor market runs on more than skill alone. It runs on verification. A person can have talent and still lose trust if results are inconsistent. The same logic applies to machines. A robot can look impressive in a demo and still be economically useless if it cannot deliver stable performance in messy conditions. That is where Fabric is trying to insert itself. Not at the moment of invention. At the moment of accountability.🤯

This is also where the project touches something bigger than robotics. It starts to look like a market design problem. If machine economies do emerge, they will need standards for what counts as good work. They will need systems for disputes. They will need ways to separate real contribution from fake activity. They will need incentives that reward quality, not just participation. The whitepaper understands that better than a lot of AI token projects do.

What I like here is the refusal to reward passivity. Fabric’s proof-of-contribution model is built around actual work: task completion, data, compute, validation and skill development. The paper goes out of its way to say identical token holders can end up with different outcomes, because rewards are tied to measurable contribution, not just token ownership. That is a healthier idea than the lazy hold-and-earn designs crypto loves recycling.
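
Here is a rough sketch of what "identical holders, different outcomes" means in practice. The category weights, the `Participant` type and the pro-rata split are all hypothetical and are not the whitepaper's scoring formula. The only point it demonstrates is that the reward rule reads contribution and never looks at `tokens_held`.

```python
from dataclasses import dataclass, field

# Hypothetical weights for the contribution categories named above.
# These numbers are made up for illustration; they are not from the whitepaper.
WEIGHTS = {
    "tasks": 0.40,
    "data": 0.15,
    "compute": 0.15,
    "validation": 0.20,
    "skills": 0.10,
}


@dataclass
class Participant:
    name: str
    tokens_held: float  # intentionally ignored by the reward rule below
    contributions: dict[str, float] = field(default_factory=dict)  # normalized activity


def contribution_score(p: Participant) -> float:
    """Weighted sum of measured contribution; holdings play no role."""
    return sum(weight * p.contributions.get(category, 0.0)
               for category, weight in WEIGHTS.items())


def distribute(pool: float, participants: list[Participant]) -> dict[str, float]:
    """Split a reward pool pro rata by contribution score."""
    scores = {p.name: contribution_score(p) for p in participants}
    total = sum(scores.values())
    if total == 0:
        return {name: 0.0 for name in scores}  # nobody did measurable work
    return {name: pool * score / total for name, score in scores.items()}


if __name__ == "__main__":
    alice = Participant("alice", 1000.0, {"tasks": 10, "validation": 5})
    bob = Participant("bob", 1000.0)  # same bag of tokens, zero work
    print(distribute(100.0, [alice, bob]))
```

Alice and Bob hold the same 1000 tokens, but Alice takes the entire pool because she is the only one who did measurable work.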

Still, I’m not blindly bullish here. The whole thing depends on whether machine work can actually be measured well in real environments. That is the hard part. Writing thresholds in a whitepaper is easy. Building fair and manipulation-resistant standards for physical-world performance is hard. Really hard. A robot can complete a task poorly. A validator can miss context. A user can misreport quality. Operators can optimize for the metric instead of the real outcome. We have seen this happen in human systems forever. Of course it can happen in machine systems too.💻

That risk matters because once measurement gets noisy the whole structure gets shaky. Rewards become less meaningful. Slashing becomes less fair. Contribution scores become easier to game. At that point the network stops behaving like an accountability system and starts behaving like a bureaucracy built on imperfect signals. That is probably the biggest execution risk in the entire ROBO model in my opinion.🤖

At the same time I respect that the paper does not duck the issue. It does not act like robotics trust can be solved by vibes. It tries to create incentives and penalties that make bad behavior expensive. That alone already puts it ahead of a lot of projects that throw around AI language without touching the harder economics underneath.

I also think this angle matters for the future. If robotics keeps expanding, then the real winners may not just be the teams that build capable machines. They may be the ones that build systems for evaluating machine performance in a way businesses, regulators and users can actually trust. That sounds less glamorous than robot demos. But markets usually reward reliability long before they reward narrative.

So yeah, I’m laughing a little, because this whole idea ended up sounding weirdly familiar. The most interesting part of the ROBO whitepaper may not be the robot future at all. It may be the quiet attempt to build the rules for who gets a good review and who gets written up.

Smart robots will get attention. The robots that keep passing review may be the ones that actually get adopted. Just like us creators: the ones who keep earning good points are the ones actually getting rewarded in CreatorPad campaigns. 😁🤪😂

@Fabric Foundation #ROBO $ROBO