It doesn’t matter if it’s finance, digital platforms, or government services. At small scale, trust is manageable. You can verify things manually. You can rely on institutions, processes, or reputation.

At large scale, that stops working.

The internet solved distribution. It made it possible to move information, value, and services across the world instantly. But it never really solved trust. It just abstracted it.

We trust platforms to store our data. We trust systems to verify identities. We trust intermediaries to validate transactions, approvals, and records.

And most of the time, it works.

Until it doesn’t.

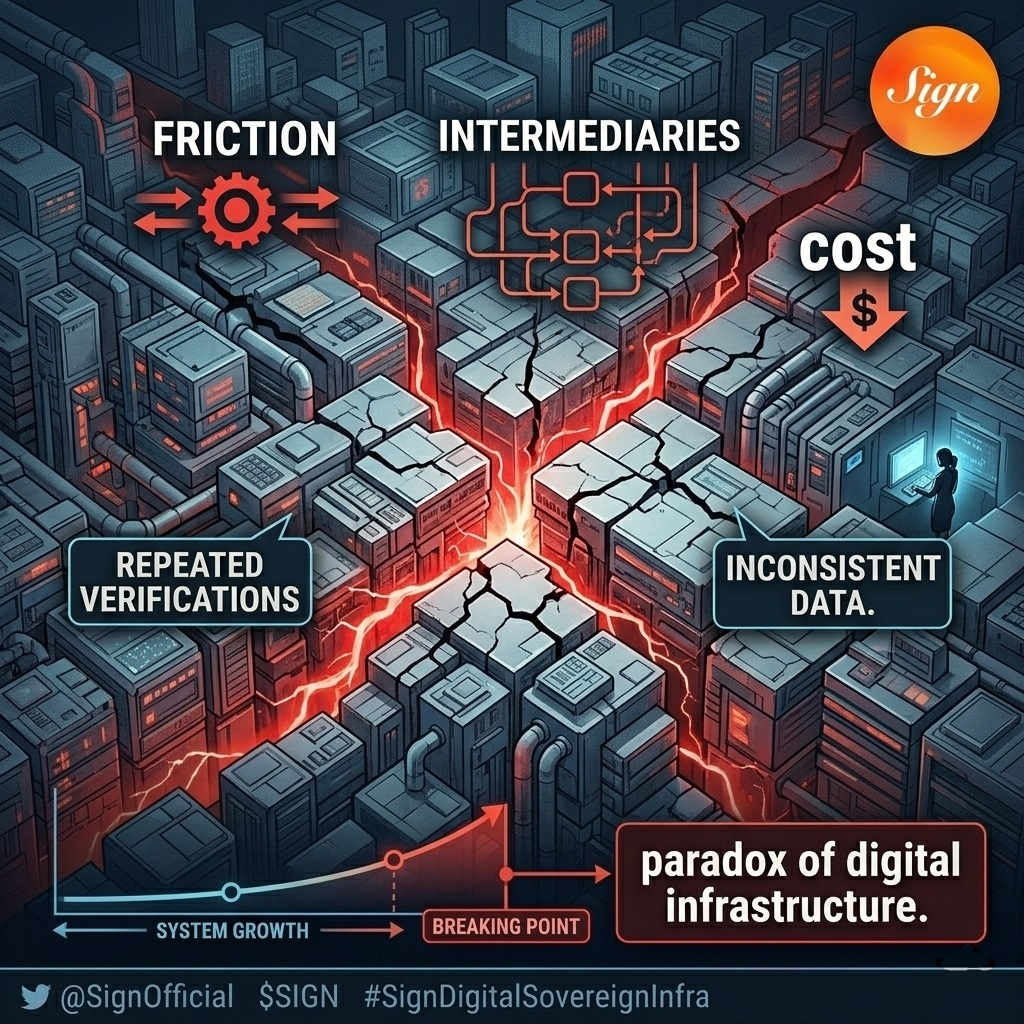

Because trust, as it exists today, does not scale cleanly. It creates bottlenecks. Every verification step adds friction. Every intermediary introduces cost. Every layer of control becomes a potential point of failure.

As systems grow, these inefficiencies compound.

A financial platform onboarding millions of users needs to verify identities repeatedly. A government digitizing public services needs to ensure records are accurate and auditable. A global application handling sensitive data must balance access with security.

Each of these problems leads to the same outcome: more checks, more processes, more overhead.

The system becomes heavier as it grows.

This is the paradox of digital infrastructure. The more we scale, the more we rely on trust mechanisms that were never designed for that scale in the first place.

And that is where things start to break.

Not in obvious ways, but in subtle ones. Delays in verification. Inconsistent data across systems. Increasing costs of compliance. Dependence on centralized entities that hold the “source of truth.”

These are not bugs. They are structural limitations.

The current model treats trust as something external to the system. Something that needs to be added on top through processes, audits, and intermediaries.

But what if trust were not external?

What if it were built directly into the system itself?

This is the direction in which infrastructure like Sign Protocol is moving.

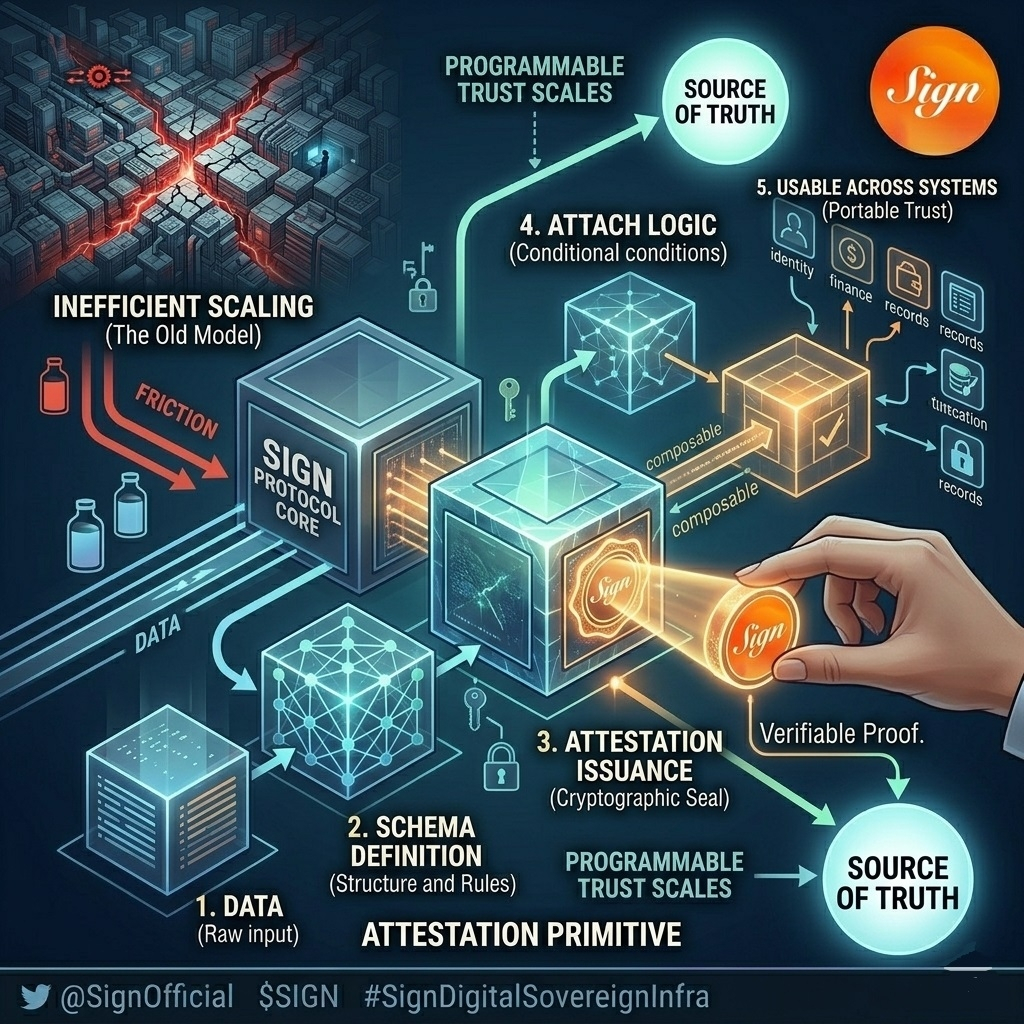

Instead of relying on repeated verification, it introduces a different primitive: attestations.

A way to encode claims about data in a structured, verifiable form. Not just statements, but proofs that can be referenced, reused, and validated across different systems.

At a basic level, an attestation is a signed claim. Its structure is defined by a schema, and it is issued and signed by a specific entity, so anyone relying on it can check who vouched for it and whether it has been altered since.
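To make the idea concrete, here is a minimal Python sketch of an attestation as a signed, structured claim. It assumes a simplified record with a schema ID, issuer, subject, and claim data; the field names and the `issue`/`verify` helpers are hypothetical and are not Sign Protocol's actual API. It uses HMAC-SHA256 from the standard library as a stand-in for a signature. Unlike a real public-key signature (such as ECDSA), HMAC requires the verifier to hold the issuer's secret key, so it only illustrates the tamper-evidence property.

```python
import hashlib
import hmac
import json
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Attestation:
    """Hypothetical attestation record; field names are illustrative only."""
    schema_id: str       # which schema defines the shape of `data`
    issuer: str          # entity vouching for the claim
    subject: str         # who or what the claim is about
    data: dict           # the claim itself, e.g. {"kyc_passed": True}
    signature: str = ""  # filled in by issue()


def _payload(att: Attestation) -> bytes:
    # Canonical JSON (sorted keys, no extra whitespace) so issuer and verifier
    # process exactly the same bytes. The signature field is excluded.
    body = {
        "schema_id": att.schema_id,
        "issuer": att.issuer,
        "subject": att.subject,
        "data": att.data,
    }
    return json.dumps(body, sort_keys=True, separators=(",", ":")).encode()


def issue(schema_id: str, issuer: str, subject: str, data: dict, key: bytes) -> Attestation:
    unsigned = Attestation(schema_id, issuer, subject, data)
    # ASSUMPTION: HMAC-SHA256 stands in for a real public-key signature
    sig = hmac.new(key, _payload(unsigned), hashlib.sha256).hexdigest()
    return replace(unsigned, signature=sig)


def verify(att: Attestation, key: bytes) -> bool:
    expected = hmac.new(key, _payload(att), hashlib.sha256).hexdigest()
    # Constant-time comparison avoids leaking how many characters matched
    return hmac.compare_digest(expected, att.signature)


if __name__ == "__main__":
    key = b"issuer-secret-key"  # hypothetical issuer key
    att = issue("kyc-v1", "acme-kyc", "user-42", {"kyc_passed": True}, key)
    print(verify(att, key))                                       # True
    print(verify(replace(att, data={"kyc_passed": False}), key))  # False: data changed after signing
```

Any change to the claim after issuance breaks verification, which is what lets other systems rely on the record without re-checking the underlying fact themselves.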

But the impact is not in the individual attestation. It is in what happens when they become composable.

Because once systems can rely on shared attestations, they no longer need to recreate trust from scratch every time.

A user does not need to prove the same fact repeatedly across platforms. A service does not need to store redundant data to verify a condition. A process does not need to rely on a single centralized authority to confirm validity.

Instead, they can reference an existing proof.
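Referencing an existing proof can be sketched as a shared registry keyed by a content hash. This is a minimal Python sketch under stated assumptions: the `AttestationRegistry` class, its methods, and the record fields are hypothetical; signatures are omitted to keep the focus on reuse; and a real deployment would add them along with access control. One KYC provider publishes a record once, and any number of services check it by ID against their own list of trusted issuers instead of repeating the verification.

```python
import hashlib
import json


def attestation_id(record: dict) -> str:
    # Content-addressed ID: SHA-256 of the canonical JSON form, so the ID
    # changes if any field of the record changes
    canonical = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode()).hexdigest()


class AttestationRegistry:
    """Hypothetical shared registry that many services can read from."""

    def __init__(self) -> None:
        self._records: dict[str, dict] = {}
        self._revoked: set[str] = set()

    def publish(self, record: dict) -> str:
        aid = attestation_id(record)
        self._records[aid] = dict(record)  # store a copy so callers cannot mutate it
        return aid

    def revoke(self, aid: str) -> None:
        self._revoked.add(aid)

    def check(self, aid: str, subject: str, claim: str, trusted_issuers: set[str]) -> bool:
        record = self._records.get(aid)
        if record is None or aid in self._revoked:
            return False
        if attestation_id(record) != aid:  # integrity check on the stored record
            return False
        # Each consumer applies its own trust policy to the same shared record
        return (
            record["issuer"] in trusted_issuers
            and record["subject"] == subject
            and record["claim"] == claim
        )


if __name__ == "__main__":
    registry = AttestationRegistry()
    aid = registry.publish({"issuer": "acme-kyc", "subject": "user-42", "claim": "kyc_passed"})

    # Two different services reuse the same proof; neither repeats the KYC check
    print(registry.check(aid, "user-42", "kyc_passed", {"acme-kyc"}))               # True
    print(registry.check(aid, "user-42", "kyc_passed", {"acme-kyc", "other-kyc"}))  # True

    registry.revoke(aid)
    print(registry.check(aid, "user-42", "kyc_passed", {"acme-kyc"}))               # False
```

The consuming services store only an ID, not a copy of the user's data, and each still decides for itself which issuers it trusts.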

That changes the structure of the system.

Verification becomes modular. Trust becomes portable. Processes become lighter.

The system stops accumulating friction as it scales.

This is not just a theoretical improvement. It addresses one of the core inefficiencies of modern digital infrastructure: redundancy.

Today, the same information is verified multiple times, stored in multiple places, and managed by multiple entities. Each instance introduces cost and risk.

With a shared layer of attestations, that redundancy can be reduced.

But there is another dimension that matters just as much: control.

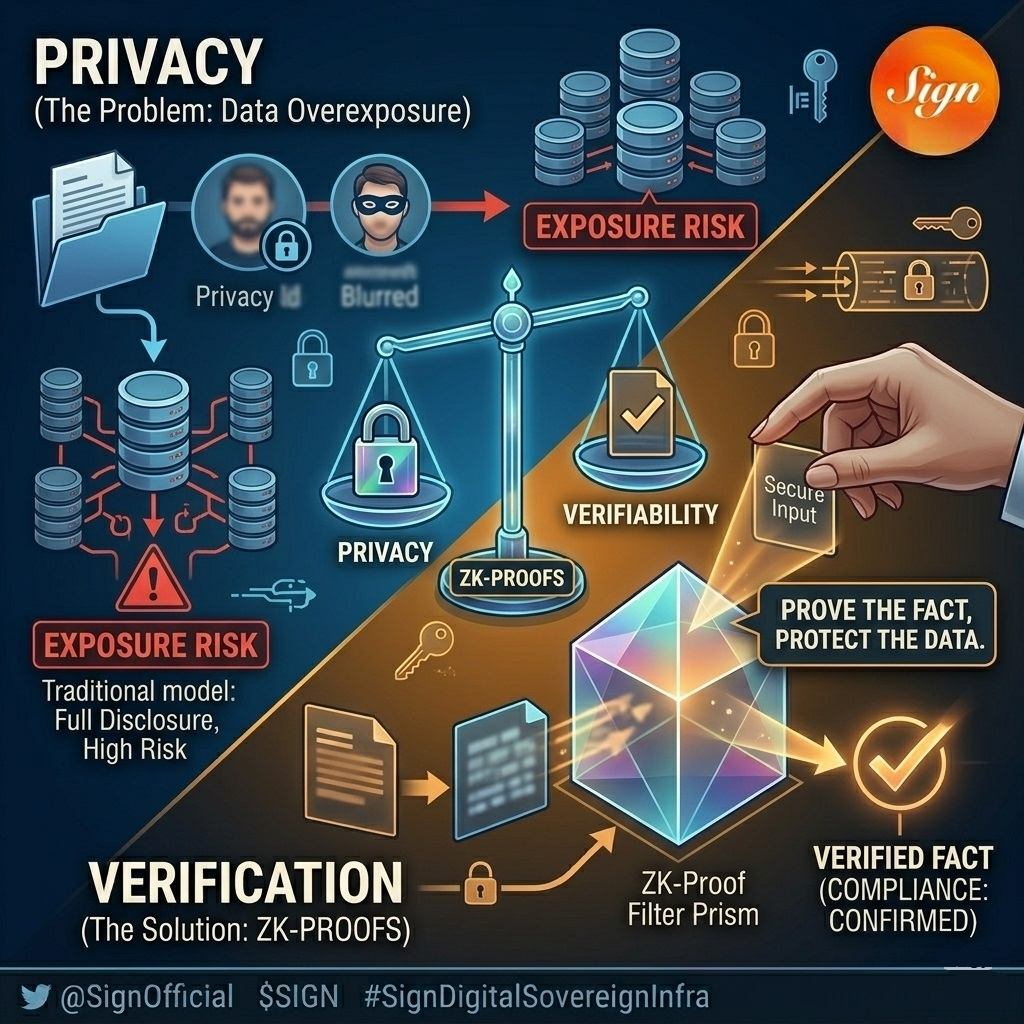

In many systems, verification requires exposure. To prove something, you often need to reveal the underlying data. This creates a trade-off between privacy and trust.

Either you expose data to gain verification, or you protect data and lose verifiability.

This trade-off becomes more problematic as systems scale and regulations become stricter.

This is where zero-knowledge proofs start to play a role.

They allow a system to confirm that a statement is true without revealing the underlying data: for example, that a user is over 18 without disclosing their date of birth. The proof establishes the condition, not the details.
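Production zero-knowledge systems prove rich statements about private data and are far beyond a short example; this is not a description of how Sign Protocol implements them. As a toy illustration of the core idea, here is a textbook Schnorr proof of knowledge, made non-interactive with the Fiat-Shamir heuristic. The prover convinces a verifier that it knows the secret `x` behind a public value `y = G^x mod P` without revealing `x`. The parameters are deliberately tiny and insecure, and the function names are hypothetical.

```python
import hashlib
import secrets

# TOY parameters, insecure by design: P = 2*Q + 1 is a safe prime, and
# G = 4 (a quadratic residue != 1) generates the subgroup of prime order Q.
P = 2039
Q = 1019
G = 4


def _challenge(y: int, t: int) -> int:
    # Fiat-Shamir: derive the verifier's challenge from a hash of the transcript
    digest = hashlib.sha256(f"{G}:{y}:{t}".encode()).digest()
    return int.from_bytes(digest, "big") % Q


def keygen() -> tuple[int, int]:
    x = secrets.randbelow(Q - 1) + 1  # secret in [1, Q-1]
    return x, pow(G, x, P)            # (secret, public value)


def prove(x: int, y: int) -> tuple[int, int]:
    r = secrets.randbelow(Q)  # fresh nonce; reusing it would leak x
    t = pow(G, r, P)          # commitment
    c = _challenge(y, t)
    s = (r + c * x) % Q       # response; the uniform r masks x
    return t, s


def verify(y: int, proof: tuple[int, int]) -> bool:
    t, s = proof
    if not (0 < t < P and 0 <= s < Q):
        return False
    c = _challenge(y, t)
    # G^s = G^(r + c*x) = t * y^c holds only if the prover knew x
    return pow(G, s, P) == (t * pow(y, c, P)) % P


if __name__ == "__main__":
    x, y = keygen()          # x stays with the prover; y can be published
    proof = prove(x, y)      # only (t, s) is sent to the verifier
    print(verify(y, proof))  # True
```

The verifier learns that the equation holds and nothing usable about `x`, which is the "condition, not the details" property in its simplest form.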

This shifts how trust operates.

Verification no longer requires full disclosure. Systems can validate claims while preserving privacy. Institutions can enforce rules without centralizing sensitive information.
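Zero-knowledge proofs are one way to avoid full disclosure. A simpler and weaker technique with a similar goal is selective disclosure with salted hash commitments, the approach used in the IETF SD-JWT (Selective Disclosure for JWTs) work: the issuer commits to salted digests of each field, and the holder later reveals only the fields a verifier needs. The Python sketch below illustrates that idea under stated assumptions: the helper names are hypothetical, and the issuer's signature over the digest set is omitted. Unlike a ZK proof, a disclosed field is revealed in full, so it cannot prove a predicate such as "born before 2006" without revealing the year.

```python
import hashlib
import json
import secrets


def _digest(salt: str, name: str, value: str) -> str:
    # JSON-encode the triple so values containing separators cannot be confused
    return hashlib.sha256(json.dumps([salt, name, value]).encode()).hexdigest()


def commit(fields: dict[str, str]) -> tuple[set[str], dict[str, tuple[str, str]]]:
    """Issuer side: one salted digest per field.

    ASSUMPTION: a real issuer would sign the digest set; here it is returned bare.
    The openings (salt, value) go privately to the holder.
    """
    digests: set[str] = set()
    openings: dict[str, tuple[str, str]] = {}
    for name, value in fields.items():
        salt = secrets.token_hex(16)  # random salt stops brute-forcing low-entropy values
        openings[name] = (salt, value)
        digests.add(_digest(salt, name, value))
    return digests, openings


def disclose(openings: dict[str, tuple[str, str]], name: str) -> tuple[str, str, str]:
    """Holder side: reveal exactly one field and its salt."""
    salt, value = openings[name]
    return name, value, salt


def check(digests: set[str], name: str, value: str, salt: str) -> bool:
    """Verifier side: the revealed field must match one of the committed digests."""
    return _digest(salt, name, value) in digests


if __name__ == "__main__":
    digests, openings = commit({"name": "Alice", "country": "AE", "birth_year": "1990"})
    name, value, salt = disclose(openings, "country")
    print(check(digests, name, value, salt))  # True: country verified
    print(check(digests, name, "US", salt))   # False: altered value rejected
    # "name" and "birth_year" are never sent to the verifier
```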

The result is a model where trust is both verifiable and controlled.

That combination is critical for systems operating at scale.

Because large-scale systems are not just about efficiency. They are about accountability. Records need to be auditable. Processes need to be transparent to the right parties. Data needs to remain protected.

Balancing these requirements is not trivial.

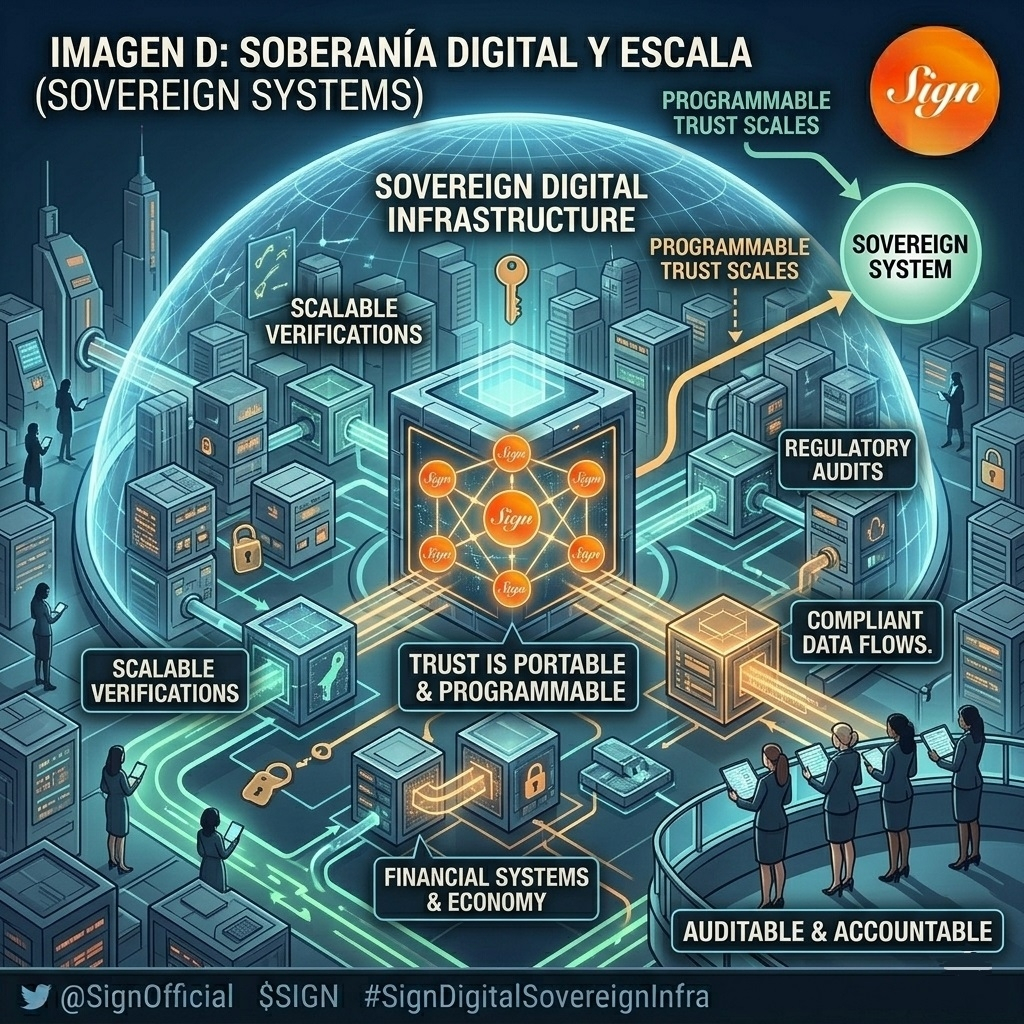

What Sign introduces is not just a tool, but a framework.

A way to structure trust as something programmable. Something that can be defined, issued, verified, and integrated into workflows without relying on constant manual intervention.
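As a rough illustration of trust that is defined, verified, and integrated into a workflow, here is a Python sketch in which a schema defines the expected fields and a workflow step (a loan approval) is expressed as a list of rules evaluated against an attestation instead of a manual review. The schema format, the `Attestation` class, the issuer name, and the rules are all hypothetical and do not reflect Sign Protocol's actual schema format; signature verification is assumed to have happened upstream.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical schema: field name -> required Python type
KYC_SCHEMA: dict[str, type] = {"kyc_passed": bool, "country": str, "tier": int}


@dataclass
class Attestation:
    schema: dict[str, type]
    issuer: str
    data: dict


def conforms(att: Attestation) -> bool:
    # Exact field set and exact types (type() rather than isinstance(),
    # because bool is a subclass of int in Python)
    return set(att.data) == set(att.schema) and all(
        type(att.data[field]) is expected for field, expected in att.schema.items()
    )


Rule = Callable[[Attestation], bool]

# A workflow policy written as data: every rule must pass
LOAN_RULES: list[Rule] = [
    lambda a: a.issuer in {"acme-kyc"},  # trusted issuer (assumed)
    lambda a: a.data["kyc_passed"],
    lambda a: a.data["country"] in {"AE"},  # illustrative allow-list
    lambda a: a.data["tier"] >= 2,
]


def evaluate(att: Attestation, rules: list[Rule]) -> bool:
    # Schema check first, so rules can safely assume every field exists
    return conforms(att) and all(rule(att) for rule in rules)


if __name__ == "__main__":
    ok = Attestation(KYC_SCHEMA, "acme-kyc", {"kyc_passed": True, "country": "AE", "tier": 2})
    missing = Attestation(KYC_SCHEMA, "acme-kyc", {"kyc_passed": True, "country": "AE"})
    print(evaluate(ok, LOAN_RULES))       # True
    print(evaluate(missing, LOAN_RULES))  # False: fails the schema check
```

Keeping the rules as a plain list means a policy can be changed or audited without touching the code that evaluates it.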

The token layer, $SIGN, supports this system by enabling verification processes, governance, and coordination between participants. But focusing only on the token misses the underlying shift.

The real shift is architectural.

Trust moves from being a layer on top of the system to being part of the system itself.

That distinction matters.

Because systems that rely on external trust mechanisms tend to become heavier over time. They require more oversight, more coordination, and more cost as they grow.

Systems that embed trust directly into their structure can scale differently.

They reduce redundancy. They streamline verification. They allow different components to interact without needing to re-establish trust at every step.

This does not eliminate complexity. But it changes where that complexity lives.

Instead of being distributed across processes and intermediaries, it is encoded into the infrastructure.

And once it is part of the infrastructure, it becomes something that other systems can build on top of.

That is how new layers of technology emerge.

Not by replacing everything that came before, but by changing the assumptions that systems are built on.

One of the clearest signals that this shift is already happening can be seen in regions where digital infrastructure is being treated as a strategic priority.

In parts of the Middle East, digital identity, financial systems, and public services are being redesigned with sovereignty in mind. The goal is not just digitization, but control, auditability, and long-term economic scalability.

Infrastructure that can verify data without exposing it, coordinate systems without relying on external platforms, and support compliant growth is becoming essential.

This is where Sign’s positioning becomes more concrete.

Not just as a protocol, but as a layer that can support sovereign digital systems at scale. Systems where trust is not outsourced, but built into the infrastructure that economies depend on.

If digital growth requires verifiable systems, then programmable trust is not an upgrade.

It is a requirement.

Which brings the conversation back to the core assumption.

Trust does not scale.

But programmable trust might.

If that holds true, then what we are seeing is not just an incremental improvement in how systems verify data.

It is the beginning of a shift in how digital systems are designed.

From systems that depend on trust…

to systems that construct it.