Like calling a knife a Swiss Army knife. Same category, just more versatile. But I think that undersells what the phrase actually implies. Because the gap between a robot that does one thing well and a robot that can adapt to many things is not an engineering gap. It's a civilizational one.

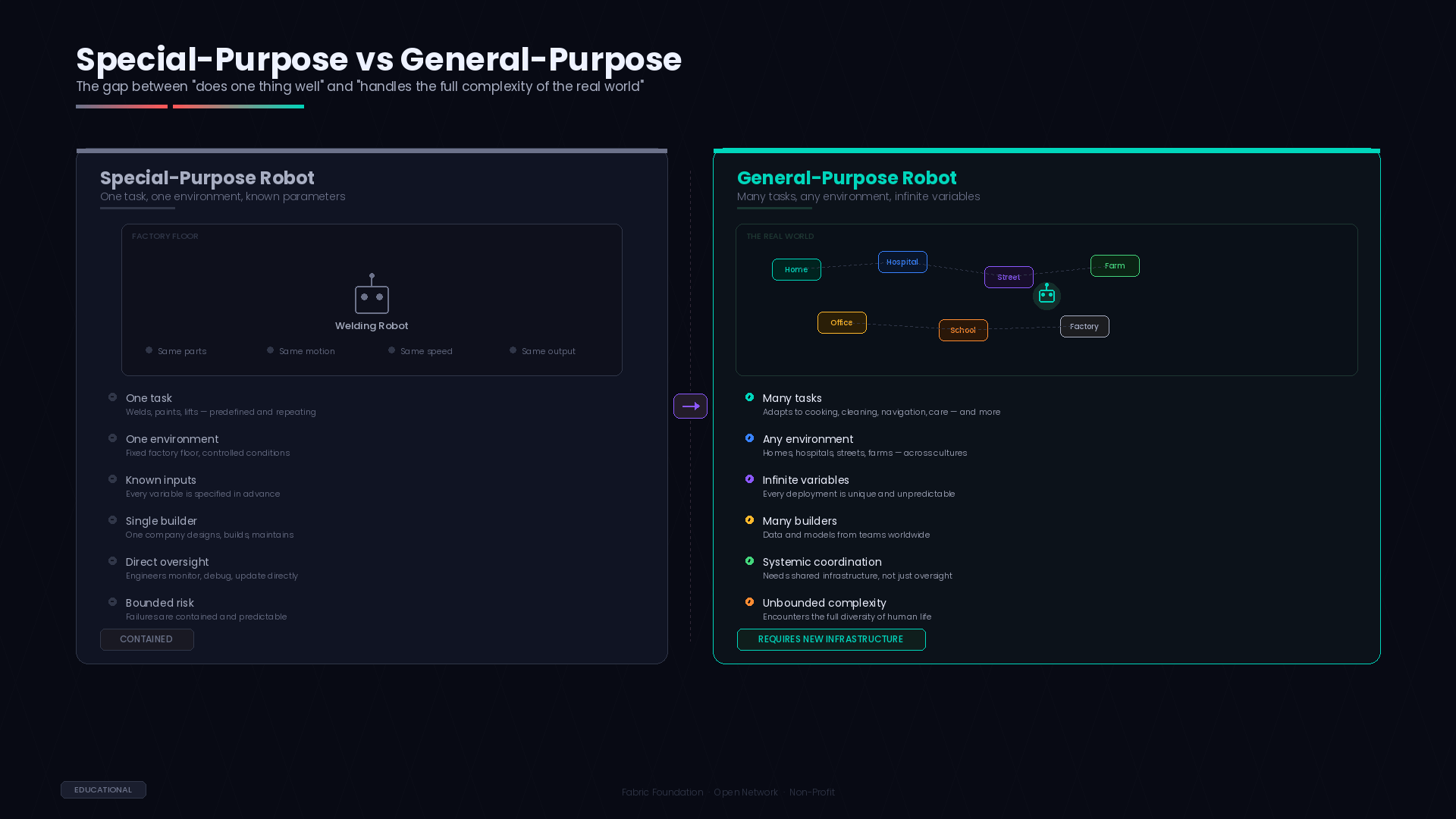

A robot that welds car frames is impressive. It's also contained. One task, one environment, one set of inputs. The engineers who built it understand every parameter. The company that deployed it controls every variable. When something goes wrong, someone knows why.

A general-purpose robot is none of those things. It operates across environments nobody fully predicted. It encounters situations its builders never tested for. It needs to adapt to contexts that vary by geography, culture, physical layout, social norms. The phrase "general-purpose" doesn't just mean "can do many tasks." It means "will encounter the full complexity of the real world."

And the real world doesn't come with a manual.

I've been thinking about this because it changes the nature of every problem downstream. When you're building a special-purpose robot, the challenges are mostly technical. Better sensors. Faster actuators. More precise models. Hard problems, sure, but bounded ones. You know what success looks like because you defined the task.

When you're building a general-purpose robot, the challenges become systemic. It's not just "can it pick up a cup?" It's "can it pick up any cup, in any kitchen, in any country, without breaking something or startling someone?" And that question opens onto an entirely different landscape of problems.

You need data from everywhere. Not a curated dataset, but a living, evolving body of knowledge that reflects the diversity of real human environments. You need computation you can trust: not just powerful, but verifiable, because you can't personally inspect every decision a machine makes in every context. And you need governance that keeps pace, with rules flexible enough to accommodate different cultures and use cases, but sturdy enough to prevent harm.

That's roughly the problem @Fabric Foundation Protocol is trying to address.

Fabric is a global open network run by the Fabric Foundation, a non-profit. It provides shared infrastructure for building, governing, and evolving general-purpose robots. The protocol coordinates three things through a public ledger: data, computation, and governance.

But I want to approach these from a different angle this time. Not as three separate layers. As three consequences of what "general-purpose" actually demands.

The data consequence is maybe the most intuitive. If a robot is meant to work anywhere, it needs to learn from everywhere. A kitchen in Hanoi is organized differently than one in Helsinki. The objects are different. The layouts are different. The social expectations around how a machine should behave in that space are different too. And these aren't edge cases. They're the norm. The world is overwhelmingly varied, and any robot that hasn't encountered that variety in its training will stumble the moment it leaves the lab.

No single company can capture this breadth. It's not a matter of resources. It's a matter of access and perspective. A company based in San Francisco, no matter how well-funded, will have blind spots about daily life in Dhaka. A research lab in Berlin will miss nuances about homes in Lagos. The data has to come from many sources. Which means there has to be a system for contributing, tracking, and verifying data across borders and institutions.

Fabric's public ledger handles this by recording every data contribution: its origin, its terms of use, its verification status. It becomes obvious after a while that this isn't just about building a database. It's about creating trust infrastructure for a global data commons. The kind of thing that doesn't exist yet for robotics, but probably needs to.
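To make the idea of a contribution record a bit more concrete, here is a minimal sketch in Python. It is not Fabric's actual data model or API, which I haven't seen specified at this level. The names `DataContribution`, `ContributionLedger`, and `entry_hash` are all hypothetical, and the only thing taken from the description above is the three recorded fields: origin, terms of use, and verification status. The hash chaining is my own assumption about how such a log could be made tamper-evident.

```python
import hashlib
import json
from dataclasses import dataclass

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


@dataclass(frozen=True)
class DataContribution:
    # Hypothetical record shape; field names are illustrative only.
    origin: str              # who or where the data came from
    terms_of_use: str        # license or usage terms attached to the data
    content_hash: str        # digest of the data, not the data itself
    status: str = "pending"  # e.g. "pending", "verified", "rejected"


def entry_hash(prev_hash: str, c: DataContribution) -> str:
    """Hash an entry together with the hash of the entry before it."""
    payload = json.dumps(
        {
            "prev": prev_hash,
            "origin": c.origin,
            "terms": c.terms_of_use,
            "content": c.content_hash,
            "status": c.status,
        },
        sort_keys=True,
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


class ContributionLedger:
    """Append-only log where each entry commits to its predecessor."""

    def __init__(self) -> None:
        self.entries = []  # list of (entry_hash, DataContribution) pairs

    def append(self, contribution: DataContribution) -> str:
        prev = self.entries[-1][0] if self.entries else GENESIS
        h = entry_hash(prev, contribution)
        self.entries.append((h, contribution))
        return h

    def is_consistent(self) -> bool:
        """Recompute every hash; any edited entry breaks the chain."""
        prev = GENESIS
        for stored_hash, contribution in self.entries:
            if entry_hash(prev, contribution) != stored_hash:
                return False
            prev = stored_hash
        return True
```

The point of the chaining is that nobody can quietly rewrite an old record: changing the origin or terms of an earlier contribution makes `is_consistent()` return `False`. A real public ledger would add signatures, consensus, and much more, none of which is modeled here.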

The computation consequence is less intuitive but maybe more important. When a model is trained on diverse data from many contributors, the question of trust gets complicated fast. How do you know the model was trained correctly? How do you know the data that was supposed to be used was actually used? How do you know that the version of the software running on a robot in a hospital is the same version that was reviewed and approved?

You can usually tell when a trust model is breaking down because people start relying more heavily on reputation. "Well, it's from Company X, so it's probably fine." That works for a while. It doesn't scale. And it creates a world where only big, established players can participate, because they're the only ones with reputations to trade on.

Verifiable computing is Fabric's answer to this. The idea is that every significant computation on the network generates a cryptographic proof, a mathematical guarantee that the computation was performed as specified. Not a promise. Not an audit report. A proof that anyone can check, independently, without trusting the person who generated it.

That's where things get interesting for the "general-purpose" problem specifically. Because general-purpose robots, by definition, will be built by many teams, trained on many datasets, deployed in many contexts. The chain of trust is long and distributed. Verifiable computing compresses that chain into something checkable. You don't need to trust every contributor in the chain. You just need to verify their proofs.
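One of the earlier questions, whether the software running on a robot is the same version that was reviewed and approved, can be illustrated with something much simpler than a full proof of computation. The sketch below is a plain hash comparison, not the proof system Fabric describes, and the function names `digest` and `matches_approved` are my own. It assumes the approved digest was published somewhere trustworthy (for example, on a ledger) at review time.

```python
import hashlib
import hmac


def digest(artifact: bytes) -> str:
    """SHA-256 digest of a software artifact, as a hex string."""
    return hashlib.sha256(artifact).hexdigest()


def matches_approved(artifact: bytes, approved_digest: str) -> bool:
    """Check a deployed artifact against the digest recorded at review.

    Anyone holding the artifact and the published digest can run this
    check without trusting whoever deployed the software.
    """
    # compare_digest avoids leaking information through comparison timing
    return hmac.compare_digest(digest(artifact), approved_digest)
```

This only shows that two byte strings are identical. Proving that a training run or an inference was performed as specified is a much harder problem, and it is the part that actual verifiable computing has to solve.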

The governance consequence is the hardest one. And I think it's where "general-purpose" creates genuinely new problems, not just bigger versions of old ones.

A single-purpose robot in a factory operates under one jurisdiction, one set of regulations, one cultural context. The rules are clear, even if they're not perfect. A general-purpose robot that moves between a home, a hospital, and a street might cross multiple regulatory frameworks in a single afternoon. Different rules about privacy. Different standards for safety. Different expectations about what a machine should and shouldn't do.

How do you govern that? Not with a single set of rules imposed from one center. The world is too varied for that. But also not with no rules at all, because autonomous machines operating among people need guardrails.

Fabric's approach is participatory governance recorded on a public ledger. Stakeholders from different regions and domains propose, debate, and ratify standards. The entire process is transparent and traceable. Different communities can adapt rules to their context while still operating within a shared framework.
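Here is one way to picture "adapt rules to their context while still operating within a shared framework." It is a toy sketch under my own assumptions, not Fabric's governance mechanism: the shared framework sets a ceiling, and a community may choose a stricter value but never a looser one. `SharedRule`, `effective_limit`, and the example rule name are all hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class SharedRule:
    # Hypothetical rule shape: a named limit ratified at the shared level.
    name: str
    ceiling: float  # the loosest value any community is allowed to use


def effective_limit(rule: SharedRule, local_value: Optional[float]) -> float:
    """Return the limit that applies in a given community.

    With no local adaptation, the shared ceiling applies. A local value
    is accepted only if it stays at or below that ceiling.
    """
    if local_value is None:
        return rule.ceiling
    if local_value > rule.ceiling:
        raise ValueError(
            f"local value {local_value} exceeds shared ceiling "
            f"{rule.ceiling} for rule '{rule.name}'"
        )
    return local_value
```

So a hospital could set a lower speed limit for robots near patients than the shared default, while an attempt to exceed the shared ceiling is rejected outright. Real governance would involve proposals, debate, and ratification, which a single function obviously doesn't capture.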

Whether this works in practice, whether participatory governance can actually produce coherent rules for machines that cross cultural and regulatory boundaries, is a genuinely open question. It's the kind of question that doesn't have a theoretical answer. It only has an empirical one. You try it, see what happens, and adjust.

There's one more thing about "general-purpose" that I keep noticing. It implies that the robots themselves will eventually be actors in the system, not just products within it. A general-purpose robot isn't a tool you pick up and put down. It's an agent that makes decisions, navigates environments, and interacts with other agents — both human and machine.

That's why Fabric is designed to be agent-native. The infrastructure assumes that autonomous software agents are primary participants. They request data. They negotiate resources. They submit proofs. They interact with governance systems. This isn't a design flourish. It's a structural necessity. Because general-purpose robots will inevitably operate in situations where no human is in the loop. The infrastructure has to work without a person supervising every exchange.

The question changes from "how do we control the robot" to "how do we build a system where robots coordinate safely among themselves, under rules that humans set and can audit." That's a fundamentally different design challenge. And it's one that only becomes visible when you take "general-purpose" seriously, not as a marketing phrase, but as a description of what's actually being attempted.

I think the reason this matters is that most conversations about robots still operate within the mental model of special-purpose machines. Better tools. Smarter appliances. That framework is comfortable, and it works for the robots that exist today. But it doesn't prepare us for what "general-purpose" actually requires.

General-purpose means the full world. All its variety. All its complexity. All its conflicting rules and expectations. Building machines that can handle that isn't just an engineering challenge. It's a coordination challenge, a governance challenge, and a trust challenge all at once.

Fabric Protocol is one attempt at building the infrastructure to meet that challenge. Whether it's the right attempt is impossible to know yet. But the challenge itself is real. And it's arriving faster than most people expect.

The thought keeps unfolding. There's always another layer beneath the one you just noticed. Which seems fitting, somehow, for a problem this big.

#ROBO $ROBO