I didn’t notice the trade-off at first. It felt normal—almost expected—that every interaction on a blockchain came with a kind of quiet exposure. Wallets, balances, transaction history… all sitting there, permanently visible. The system worked, no doubt. But the more I used it, the more a small question started bothering me: why does proving something always require revealing everything?

That question stayed vague until I tried to do something simple—interact without being fully seen. That’s when the friction became obvious. It wasn’t just about privacy as a feature; it was about the absence of choice. You could participate, or you could stay private. Rarely both.

So I started looking for systems that didn’t force that trade.

That’s where zero-knowledge-based blockchains began to make sense—not as a technological upgrade, but as a response to a very specific limitation. The idea wasn’t to hide data completely, but to change what “verification” actually means. Instead of asking, “Can I see your data?” the system asks, “Can you prove your data satisfies the rules?”
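To make that shift concrete, here is a toy sketch of the oldest form of the idea: a Schnorr proof of knowledge, made non-interactive with the Fiat-Shamir heuristic. The "rule" being proven is only "I know the secret x behind the public value y = G^x mod P". The verifier checks that claim without ever seeing x. This is not how Midnight or any production ZK chain works. Real systems typically encode their rules as circuits for general-purpose proving systems. The function names and the tiny group parameters below are my own assumptions for illustration, and the parameters are far too small to be secure.

```python
import hashlib
import secrets

# Toy group parameters (INSECURE, illustration only, chosen for this sketch):
# P is a safe prime (P = 2*Q + 1 with Q prime), and G = 4 = 2^2 is a
# quadratic residue, so it generates the subgroup of prime order Q.
P = 2039
Q = 1019
G = 4


def challenge(*values: int) -> int:
    """Fiat-Shamir challenge: hash the public transcript into Z_Q."""
    data = b"|".join(str(v).encode() for v in values)
    return int.from_bytes(hashlib.sha256(data).digest(), "big") % Q


def keygen() -> tuple[int, int]:
    """Return (secret x, public y = G^x mod P), with x in [1, Q-1]."""
    x = secrets.randbelow(Q - 1) + 1
    return x, pow(G, x, P)


def prove(x: int, y: int) -> tuple[int, int]:
    """Prove knowledge of x such that y = G^x mod P, without sending x."""
    k = secrets.randbelow(Q - 1) + 1  # fresh one-time nonce; never reuse
    t = pow(G, k, P)                  # commitment to the nonce
    c = challenge(G, y, t)            # challenge derived from public values
    s = (k + c * x) % Q               # response: x is masked by k
    return t, s


def verify(y: int, proof: tuple[int, int]) -> bool:
    """Accept iff G^s == t * y^c (mod P). The verifier never learns x."""
    t, s = proof
    c = challenge(G, y, t)
    return pow(G, s, P) == (t * pow(y, c, P)) % P
```

The check works because G has order Q, so G^s = G^k · (G^x)^c = t · y^c. Only someone who knows x can produce a response s that satisfies it for a challenge they cannot choose. In a group large enough to make discrete logarithms infeasible, the pair (t, s) reveals nothing useful about x. Replacing "I know x" with a statement like "my balance covers this payment" requires a full circuit-based proving system, and that is where much of the proving cost comes from.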

At first, that sounded like abstraction for the sake of it. But when I looked closer, it answered something practical: how do you enable coordination without turning every participant into a transparent node?

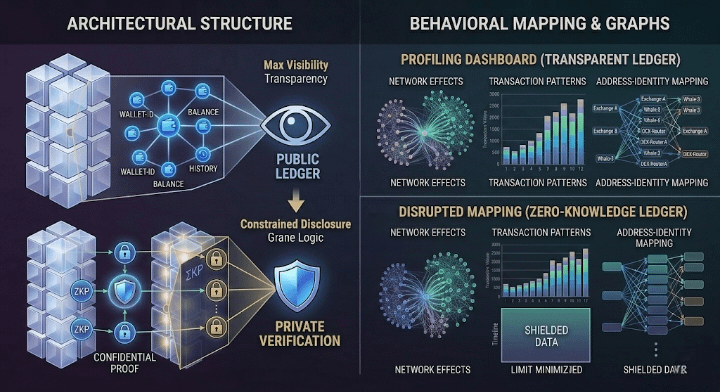

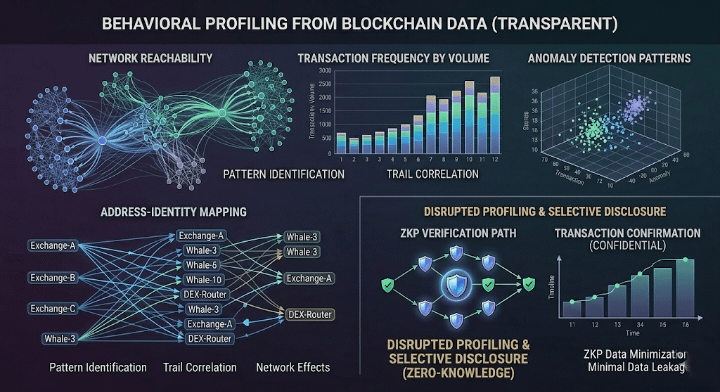

Because that’s what traditional blockchains optimize for—maximum visibility. It simplifies validation, makes auditing easier, and aligns with the idea of trustlessness. But it also creates a new kind of friction: behavioral exposure. Users don’t just transact; they leave trails. Over time, those trails become patterns, and patterns become profiles.

A zero-knowledge system seems to optimize for something else entirely.

It deprioritizes raw transparency in favor of constrained disclosure. You don’t reveal your balance, only that it’s sufficient. You don’t reveal your identity, only that you meet certain conditions. The chain doesn’t store your data; it checks a proof that your data fits within predefined boundaries.

That shift changes what people can actually do.

It removes a specific kind of hesitation: the kind that comes from knowing that every action is permanently observable. If that friction disappears, participation might increase, not because the system is easier, but because it feels less invasive. And that raises a second-order question: what happens when more people are willing to interact because they don’t feel watched?

It likely changes behavior at scale.

For one, it could normalize selective disclosure as a default. Instead of over-sharing by necessity, users would share by intent. That might reduce the informational advantage of those who specialize in analyzing on-chain data. Entire categories of analytics, surveillance, and even compliance tooling would need to adapt: not disappear, but evolve.

But this also introduces new constraints.

Zero-knowledge proofs are not free. They shift the burden away from publishing data and into computation. Generating a proof can be resource-intensive, and verifying one, while typically far cheaper than generating it, still adds overhead. So the system is making a trade: less data exposure in exchange for more computational work.

That trade shapes who finds the system comfortable.

Developers who value privacy-first design might lean in, even if it means dealing with more complex tooling. Users who are sensitive to surveillance might feel more at ease. But others—especially those who rely on open data for analysis, compliance, or strategy—might find it limiting. The system isn’t trying to satisfy everyone; it’s optimizing for a different baseline.

Then there’s the question of incentives.

If the system minimizes visible data, how do fees, rewards, or token dynamics evolve? In transparent systems, behavior can be analyzed and optimized externally. In a zero-knowledge system, much of that visibility disappears. That could reduce certain forms of exploitation, such as trading against other users’ visible pending transactions, but it also makes it harder to coordinate around shared information.

Over time, governance becomes part of this equation.

Because when you can’t see everything, you start relying more on the rules themselves. What gets encoded into circuits, what conditions are provable, what policies are enforced at the protocol level—these decisions stop being purely technical. They become social. Whoever defines the rules of what can be proven is, in a way, shaping the boundaries of interaction.

And that’s where uncertainty starts to creep in.

It’s still not clear how these systems behave under pressure. What happens when regulators demand visibility that the system is designed not to provide? What happens when users need to debug or audit something that isn’t directly observable? What new forms of centralization might emerge around proof generation or specialized infrastructure?

I find myself coming back to the same tension, just at a deeper level.

If a system can function without requiring you to reveal everything, it changes the nature of participation. But it also shifts where trust, complexity, and power live. It doesn’t remove trade-offs—it rearranges them.

So instead of asking whether this kind of blockchain is better, I’ve started asking different questions.

What kinds of interactions become possible when disclosure is optional?

What new dependencies are introduced when verification relies on computation instead of visibility?

Who controls the rules that define what can be proven—and how often can those rules change?

What behaviors emerge when users know they are no longer being constantly observed?

And maybe most importantly: what evidence would show that this model holds up—not just in theory, but under real-world pressure?

I don’t have answers yet. But those questions feel like the right place to keep looking.

$NIGHT @MidnightNetwork #night