It was one of those quiet late-night moments — around 11:15 PM — when everything feels slower, and you start noticing details you’d usually ignore. I was organizing some of my professional files, preparing to apply for access to a closed development opportunity. Nothing unusual at first… until I reached the verification step.

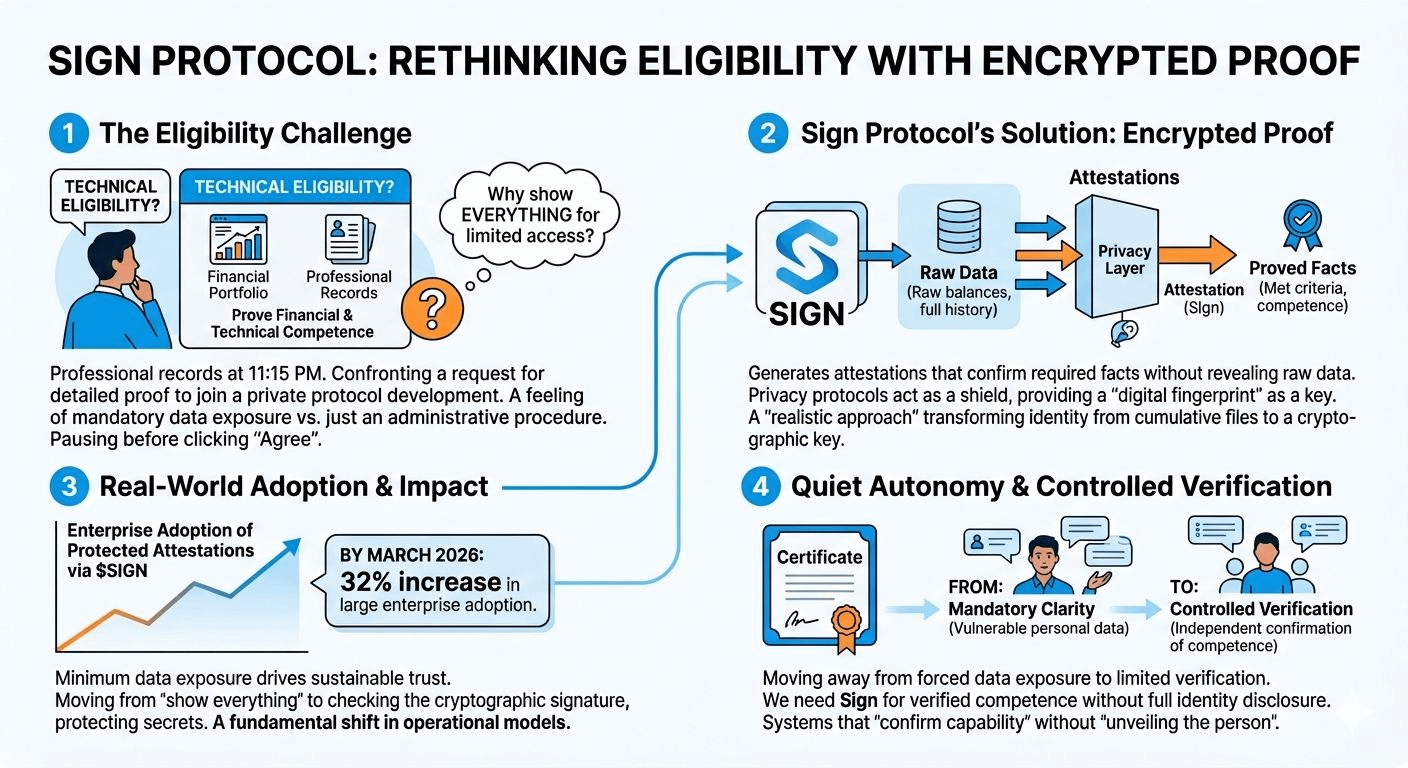

The screen asked for detailed proof of my financial and technical background. Not just a simple confirmation — it wanted depth. Portfolio exposure, financial standing, technical history. I paused longer than I expected. Not because I couldn’t provide it, but because of what it implied.

Why does proving I’m capable still require revealing so much?

That moment didn’t feel like a routine step. It felt like a quiet trade: access in exchange for exposure. And honestly, I wasn’t sure where that data would end up, how long it would exist, or who would eventually be able to see it. That uncertainty is something most of us have simply learned to accept over time.

But that’s exactly why @SignOfficial started making a lot more sense to me.

What the team behind $SIGN is building feels like a shift in how we think about trust. Instead of asking users to hand over complete information, Sign introduces a system where you can prove something is true without revealing everything behind it. It’s not about hiding; it’s about limiting unnecessary exposure.

Think of it like this: instead of submitting your entire professional and financial history, you present a verified attestation, a cryptographically signed statement confirming that you meet the requirements. The verifier checks the claim, not the raw data behind it. That small change has a big impact.
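To make the idea concrete, here is a minimal sketch of one generic way "prove one fact, hide the rest" can work: salted hash commitments, similar in spirit to the salted-digest approach used by SD-JWT. This is not Sign Protocol's actual API, schema, or proof format; every name below (`issue`, `disclose`, `verify`, `ISSUER_KEY`, the claim fields) is hypothetical, and HMAC stands in for the asymmetric issuer signature a real system would use.

```python
import hashlib
import hmac
import json
import secrets

# Hypothetical issuer key. A real system would use an asymmetric signature
# (e.g. Ed25519) so verifiers never hold the signing key; HMAC is a stdlib stand-in.
ISSUER_KEY = b"demo-issuer-key"


def digest(name: str, value, salt: str) -> str:
    """Salted hash of one claim; the salt stops verifiers from guessing values."""
    payload = json.dumps([salt, name, value]).encode()
    return hashlib.sha256(payload).hexdigest()


def issue(claims: dict) -> tuple[dict, dict]:
    """Issuer: commit to every claim, then 'sign' only the sorted digests."""
    salts = {name: secrets.token_hex(16) for name in claims}
    digests = sorted(digest(n, v, salts[n]) for n, v in claims.items())
    body = json.dumps(digests).encode()
    signature = hmac.new(ISSUER_KEY, body, hashlib.sha256).hexdigest()
    attestation = {"digests": digests, "signature": signature}
    return attestation, salts  # the holder keeps the salts private


def disclose(claims: dict, salts: dict, name: str) -> dict:
    """Holder: reveal a single claim and its salt, nothing else."""
    return {"name": name, "value": claims[name], "salt": salts[name]}


def verify(attestation: dict, disclosure: dict) -> bool:
    """Verifier: check the issuer signature, then that the claim was committed."""
    body = json.dumps(attestation["digests"]).encode()
    expected = hmac.new(ISSUER_KEY, body, hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected, attestation["signature"]):
        return False
    d = digest(disclosure["name"], disclosure["value"], disclosure["salt"])
    return d in attestation["digests"]


# Usage: hypothetical sensitive fields stay hidden; only one claim is shown.
claims = {
    "meets_requirements": True,
    "portfolio_value_usd": 250_000,
    "years_experience": 8,
}
attestation, salts = issue(claims)
proof = disclose(claims, salts, "meets_requirements")
print(verify(attestation, proof))  # True
```

The verifier sees only opaque digests for the portfolio and experience fields, yet can confirm that the disclosed claim was part of what the issuer signed. Tampering with the disclosed value (for example, flipping it to `False`) changes its digest, so verification fails.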

What I find interesting is how realistic this approach feels. In the current digital environment, we’ve normalized over-sharing as the price of participation. Whether it’s applying for jobs, joining platforms, or accessing opportunities, the default expectation has always been: “show everything, then we’ll trust you.”

Sign flips that model.

It leans into the idea that trust doesn’t need full transparency — it needs reliable verification. There’s a difference. One exposes, the other confirms.

And this is where the concept of digital sovereignty becomes more than just a buzzword. It becomes practical. Having control over what you share, how much you share, and when you share it — that’s a form of ownership we’ve been missing for a long time.

What also stands out is how this aligns with where things are heading. More companies and systems are starting to realize that collecting excessive data isn’t just inefficient — it’s risky. The more you store, the more you’re responsible for protecting. Minimal data isn’t a limitation anymore; it’s becoming a smarter strategy.

To me, Sign represents that shift toward “bounded verification.” You’re not invisible, but you’re not fully exposed either. You exist in a space where your qualifications can be confirmed without turning your identity into an open file.

Of course, I’m still observing carefully. Changing how systems think about data won’t happen overnight. There’s a deeply rooted habit of equating transparency with trust, even when that transparency comes at the cost of privacy.

But maybe the real evolution is learning that trust and privacy don’t have to compete.

Sometimes, trust is stronger when less is revealed, not more.

And if that idea continues to take shape, then $SIGN isn’t just solving a technical problem. It’s quietly redefining how we interact with systems, opportunities, and each other in a digital world.