For years, “real-world adoption” has been one of the most overused phrases in crypto. Every cycle, new projects claim they’re bridging Web3 with reality, onboarding institutions, or powering the next generation of digital systems. The narrative is always the same: big promises, future integrations, and a roadmap that suggests adoption is just around the corner. But if you look closely, very few of those systems are actually deployed in environments where reliability, auditability, and scale are non-negotiable. There’s a difference between something being available… and something being used.

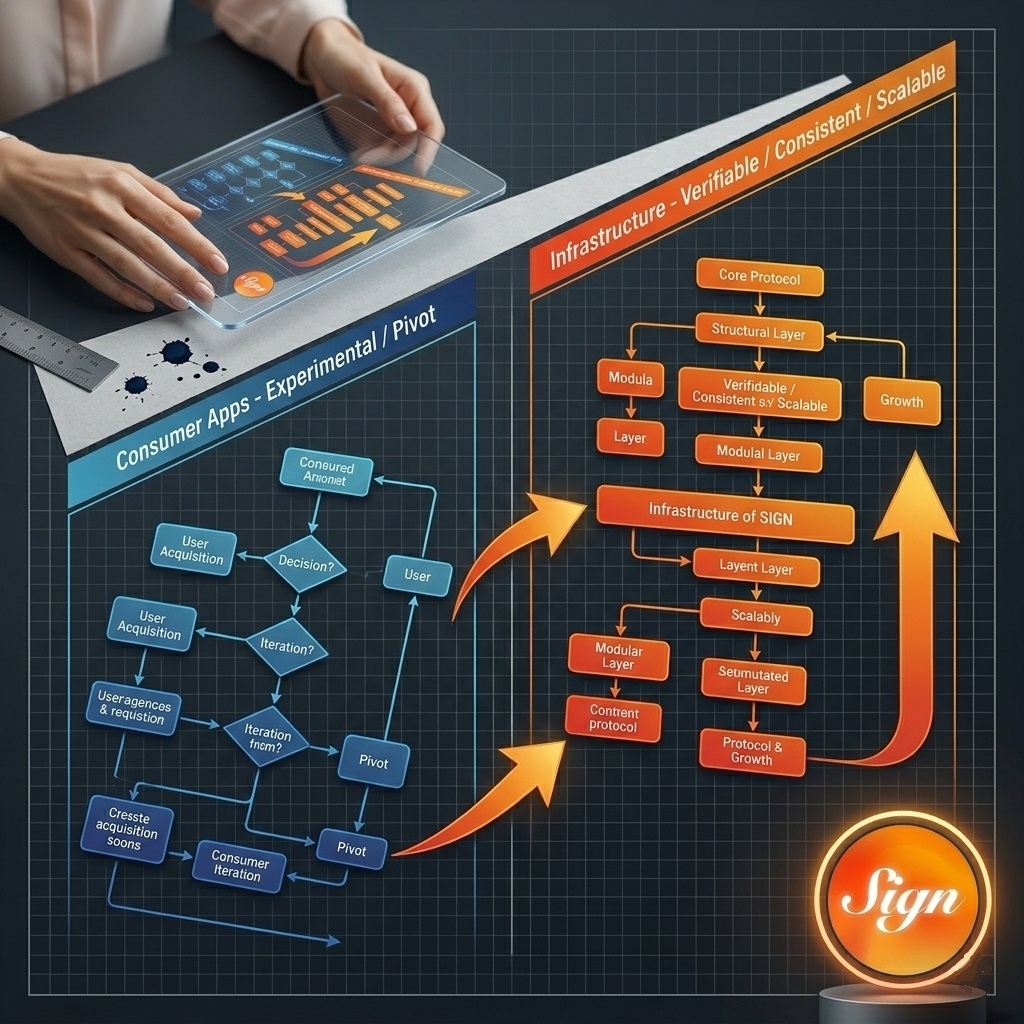

That difference becomes obvious when you move from consumer apps to infrastructure. At the application level, experimentation is acceptable. Products can iterate, fail, and pivot. But infrastructure doesn’t work like that. Once a system is integrated into public services, financial rails, or identity frameworks, it has to operate consistently. It has to be verifiable. And it has to align with regulatory and institutional constraints that most crypto projects never even reach.

This is where the conversation around Sign starts to diverge from the typical narrative.

Instead of positioning itself as a future solution, Sign is already being deployed in systems that operate at a national level. In countries such as the United Arab Emirates, Thailand, and Sierra Leone, the protocol is being used to issue verifiable credentials, manage records, and support digital processes that require both trust and control. These are not experimental use cases designed for visibility. They are functional systems embedded in environments where failure is not an option.

That context changes how you evaluate the technology.

Because when a system is used in production, the standard is different. It’s not about potential. It’s about whether the infrastructure can handle real constraints. Data needs to be structured. Verification needs to be consistent. Records need to be auditable. And the system itself needs to remain adaptable as requirements evolve.

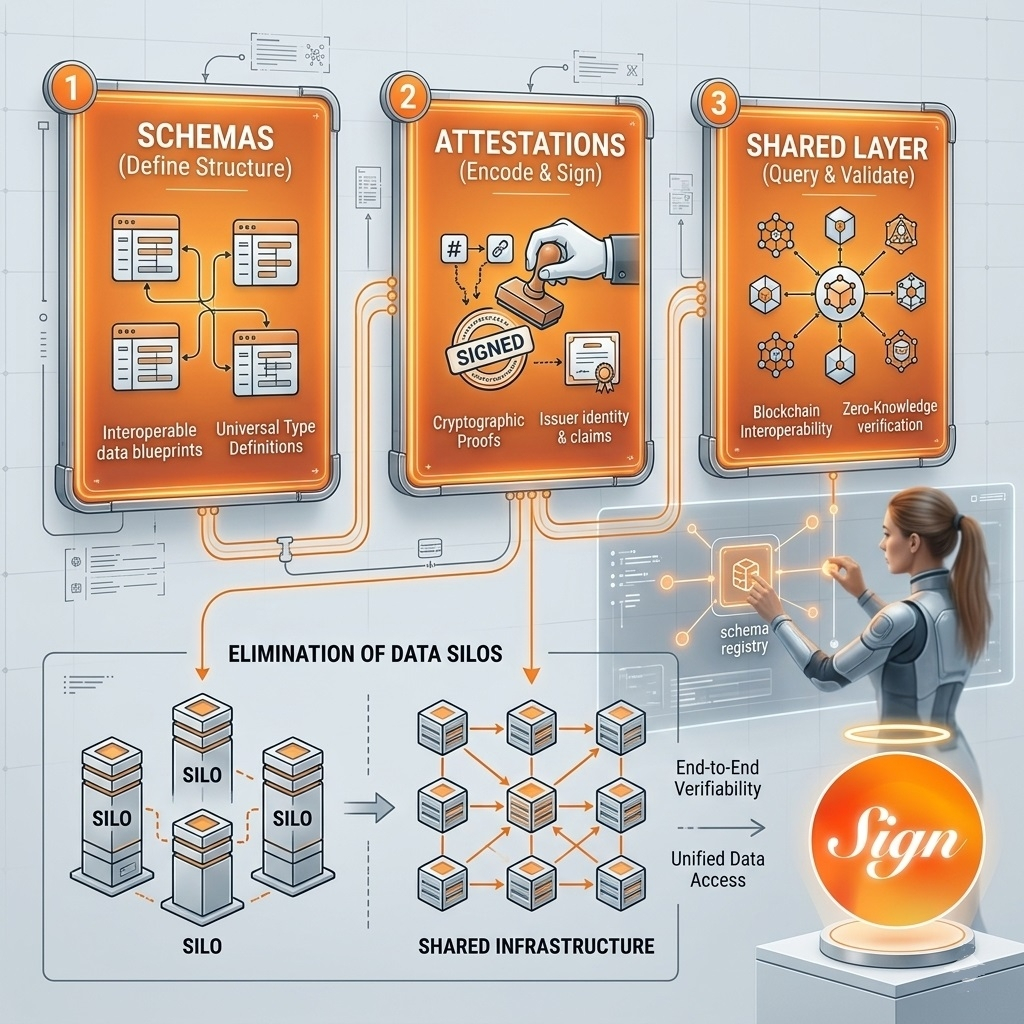

At the core of Sign’s approach is a simple idea: claims should not just exist, they should be provable. Through schemas and attestations, the protocol turns statements into structured, verifiable records that can be issued, referenced, and reused across systems. This is not just a design choice. It is a requirement for any infrastructure that aims to operate beyond isolated applications.

Because without a shared way to express and verify data, every system becomes a silo.

One platform verifies identity one way. Another platform uses a different standard. A third system stores the same information again, slightly modified, and expects it to be trusted without context. Over time, this fragmentation creates inefficiencies that scale with usage. More duplication. More verification steps. More reliance on intermediaries to reconcile differences.

What Sign introduces is a layer where those claims can be standardized.

Schemas define the structure of data. Attestations encode and sign that data. And once those elements exist, they can be queried and validated across different systems without needing to be recreated each time. That reduces redundancy and allows systems to interact without constantly re-establishing trust.
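To make the schema and attestation split concrete, here is a minimal sketch in Python. It is not the Sign Protocol SDK: the `Schema` and `Attestation` types, the `attest` and `verify` functions, and the field names are all hypothetical, and an HMAC with a shared key stands in for the public-key signature a real issuer would use.

```python
import hashlib
import hmac
import json
from dataclasses import dataclass


# Hypothetical types for illustration only; not the Sign Protocol API.
@dataclass(frozen=True)
class Schema:
    schema_id: str
    fields: dict  # field name -> expected Python type

    def validate(self, data: dict) -> None:
        """Raise ValueError if data does not match the declared structure."""
        if set(data) != set(self.fields):
            raise ValueError("fields do not match schema")
        for name, expected in self.fields.items():
            if not isinstance(data[name], expected):
                raise ValueError(f"{name} must be {expected.__name__}")


@dataclass(frozen=True)
class Attestation:
    schema_id: str
    data: dict
    signature: str


def _payload(schema_id: str, data: dict) -> bytes:
    # Canonical JSON so issuer and verifier hash exactly the same bytes.
    body = {"schema": schema_id, "data": data}
    return json.dumps(body, sort_keys=True, separators=(",", ":")).encode()


def attest(schema: Schema, data: dict, key: bytes) -> Attestation:
    """Validate data against the schema, then sign it.

    Assumption: HMAC-SHA256 stands in for a real digital signature.
    """
    schema.validate(data)
    sig = hmac.new(key, _payload(schema.schema_id, data), hashlib.sha256)
    return Attestation(schema.schema_id, dict(data), sig.hexdigest())


def verify(att: Attestation, schema: Schema, key: bytes) -> bool:
    """Check the schema reference, the structure, and the signature."""
    if att.schema_id != schema.schema_id:
        return False
    try:
        schema.validate(att.data)
    except ValueError:
        return False
    expected = hmac.new(key, _payload(att.schema_id, att.data), hashlib.sha256)
    return hmac.compare_digest(expected.hexdigest(), att.signature)
```

Any system that knows the schema and can check the signature can validate the record without asking the issuer to recreate it. Changing a single field after issuance makes `verify` return `False`.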

The scale of this is starting to become visible.

The ecosystem has already passed millions of on-chain attestations, with hundreds of thousands of schemas created by developers. These are not theoretical metrics. They represent actual usage, where data is being structured, issued, and verified in live environments. And as more systems integrate with this layer, the value of those attestations increases, because they become part of a shared infrastructure rather than isolated records.

This is where most discussions about adoption fall short.

They focus on user numbers, transaction counts, or short-term activity. But infrastructure adoption looks different. It is slower at the beginning, harder to achieve, and significantly more impactful once it happens. Because when infrastructure is adopted, it becomes embedded. It stops being optional and starts becoming part of how systems operate.

That is a very different level of integration.

It also explains why flexibility matters. Sign is not locked into a single approach to storage or verification. It can anchor data on-chain for global verifiability or use off-chain solutions when privacy, cost, or scale require it. This hybrid approach is not just a technical feature. It is what allows the system to function in real-world environments where constraints are not theoretical.
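One common way to get that hybrid behavior is to anchor only a hash on-chain and keep the full record elsewhere. The sketch below assumes that pattern. The `HybridStore` class and its two dictionaries are hypothetical stand-ins for a ledger and an external store, not a description of how Sign itself is implemented.

```python
import hashlib
import json


def digest(record: dict) -> str:
    """SHA-256 over canonical JSON, so the same record always hashes the same."""
    raw = json.dumps(record, sort_keys=True, separators=(",", ":")).encode()
    return hashlib.sha256(raw).hexdigest()


class HybridStore:
    """Toy model: one dict plays the ledger, the other plays off-chain storage."""

    def __init__(self) -> None:
        self.on_chain: dict = {}   # publicly visible entries
        self.off_chain: dict = {}  # private or bulky payloads

    def put(self, record_id: str, record: dict, private: bool = False) -> None:
        entry = {"digest": digest(record)}
        if private:
            # Only the digest is anchored; the payload stays off-chain.
            self.off_chain[record_id] = dict(record)
        else:
            # Public data can live on-chain in full.
            entry["record"] = dict(record)
        self.on_chain[record_id] = entry

    def verify(self, record_id: str, presented: dict) -> bool:
        """Anyone holding a copy of the record can check it against the anchor."""
        entry = self.on_chain.get(record_id)
        return entry is not None and digest(presented) == entry["digest"]
```

The trade-off is visible in `put`: a private record costs one small digest on-chain, yet a verifier who is handed the record later can still confirm it matches what was anchored.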

Because real systems don’t operate under ideal conditions.

They require trade-offs. They require adaptability. And they require infrastructure that can support those decisions without breaking interoperability or trust.

Another layer that reinforces this is privacy.

Verification at scale cannot depend on exposing sensitive data. Systems need to confirm that something is true without necessarily revealing the underlying information. This is where zero-knowledge proofs become critical: they let a verifier confirm a claim without ever seeing the sensitive data behind it. It is one of the key pieces that enables infrastructure like Sign to operate in environments where both compliance and privacy are required.
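For a feel of what "confirming without revealing" means in practice, here is a toy Schnorr-style proof of knowledge made non-interactive with the Fiat-Shamir heuristic. It is a textbook construction, not the proof system Sign uses, which the text above does not specify. The group parameters are chosen for brevity rather than security, and the function names are hypothetical. Production systems rely on standardized prime-order groups and audited libraries.

```python
import hashlib
import secrets

# Toy parameters, an assumption for illustration only and not secure.
P = 2**255 - 19  # a well-known prime modulus
G = 2            # base element
N = P - 1        # exponents can be reduced mod P - 1 (Fermat's little theorem)


def public_value(secret: int) -> int:
    """The value everyone may see: G^secret mod P."""
    return pow(G, secret, P)


def _challenge(public: int, commitment: int) -> int:
    # Fiat-Shamir: derive the challenge by hashing the public transcript.
    raw = f"{G}|{public}|{commitment}".encode()
    return int.from_bytes(hashlib.sha256(raw).digest(), "big") % N


def prove(secret: int) -> tuple[int, int]:
    """Prove knowledge of `secret` without sending it."""
    r = secrets.randbelow(N)          # one-time random nonce
    commitment = pow(G, r, P)
    c = _challenge(public_value(secret), commitment)
    response = (r + c * secret) % N   # the nonce masks the secret
    return commitment, response


def verify(public: int, proof: tuple[int, int]) -> bool:
    """Check G^response == commitment * public^c (mod P)."""
    commitment, response = proof
    c = _challenge(public, commitment)
    return pow(G, response, P) == (commitment * pow(public, c, P)) % P
```

The verifier only ever handles `public` and the proof pair. The secret stays with the prover, yet a proof built without it will not satisfy the check except with negligible probability.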

All of this leads to a different conclusion than the one most narratives push.

Adoption is not a future milestone. It is something that can already be observed in systems that are functioning today.

And when a protocol is being used to issue credentials, manage records, and support digital processes at a national level, it moves out of the category of “emerging technology” and into something else.

Infrastructure.

Not speculative. Not experimental. But operational.

That distinction matters more than most people realize.

Because once a system reaches that stage, the conversation changes. It is no longer about whether it will be used. It is about how far that usage can expand, and how many other systems can build on top of it.

And that is where the real impact starts to compound.