I’ve been thinking about SIGN in a pretty unstructured way, just letting it sit in my head instead of trying to fully break it down. At first, it felt like one of those ideas that sounds familiar: credentials, tokens, infrastructure, things I’ve come across before. But the more I revisited it, the quieter it felt. Less like something trying to stand out, and more like something trying to sit underneath everything else.

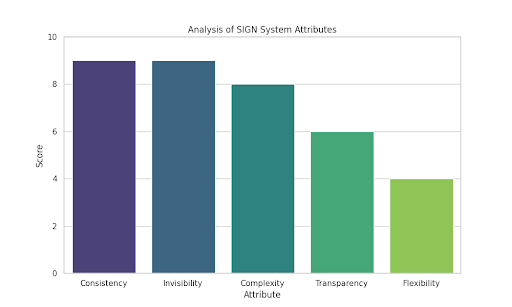

What keeps me coming back is this idea of infrastructure that isn’t meant to be noticed. The kind of system people eventually rely on without even thinking about it. That’s a strange goal if you think about it, because it’s not about attention or visibility. It’s about becoming so consistent that people stop questioning it. And honestly, that feels harder to build than anything that tries to impress you upfront.

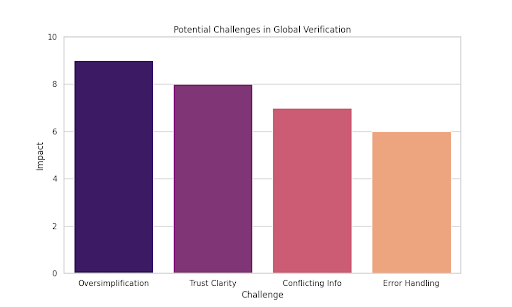

At the same time, anything that deals with “verification” makes me pause a bit. It sounds clean, but it’s never just technical. There’s always a layer where something — or someone — decides what counts and what doesn’t. I find myself wondering how SIGN handles that part. Not in theory, but in real situations where things aren’t clear or neatly defined.

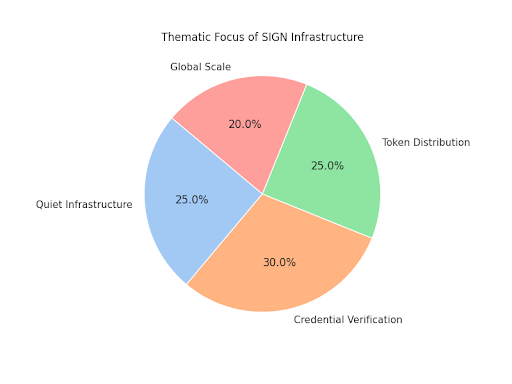

The token distribution side feels more straightforward at first, but the more I think about it, the less simple it becomes. Tokens aren’t just numbers moving around. They usually represent access, value, or some form of participation. And once you’re distributing those things at a global level, it starts shaping behavior in ways that aren’t always obvious right away.

I notice I care more about the small details than the big vision here. Things like whether the system feels transparent or hides too much behind convenience. Whether it allows for mistakes or assumes everything will go smoothly. Those quiet design choices tend to say more about a system than any big explanation ever could.

At the same time, I’m very aware that I’m only seeing part of the picture. It’s easy for something like this to look solid from the outside. But the real questions show up when things don’t go as expected. What happens when information conflicts, or when trust isn’t clear, or when someone falls outside the system’s assumptions?

The idea of this being “global” also stays in the back of my mind. It sounds ambitious, but it also means simplifying a lot of different realities into one structure. I’m not sure how well that trade-off can be handled, or whether the system adapts to differences or quietly ignores them. That part feels important, even if it’s not obvious at first glance.

I do find myself appreciating when a system doesn’t try to claim too much. When it seems aware of its own limits. Verification isn’t the same as truth, and distribution doesn’t automatically mean fairness. If SIGN leans into that kind of thinking, it changes how I see it. It feels less rigid, more like something that supports rather than controls.

I keep thinking about incentives too, even if I can’t fully map them yet. Systems like this don’t just exist — they influence how people act over time. They make certain behaviors easier and others harder, sometimes without people even noticing. That’s something I’d want to understand better, but I know it only becomes clear with time.

So for now, I’m just sitting with it. Not trying to fully figure it out or take a strong position. There’s something about it that feels quietly important, even if I can’t completely explain why yet. It’s more like a thought that stays in the background, slowly connecting with other things I’ve seen. And for some reason, that’s enough to keep me paying attention.