A ranking can run exactly as designed and still look rotten from the outside.

That's where Midnight ($NIGHT) starts getting annoying to me... for real.

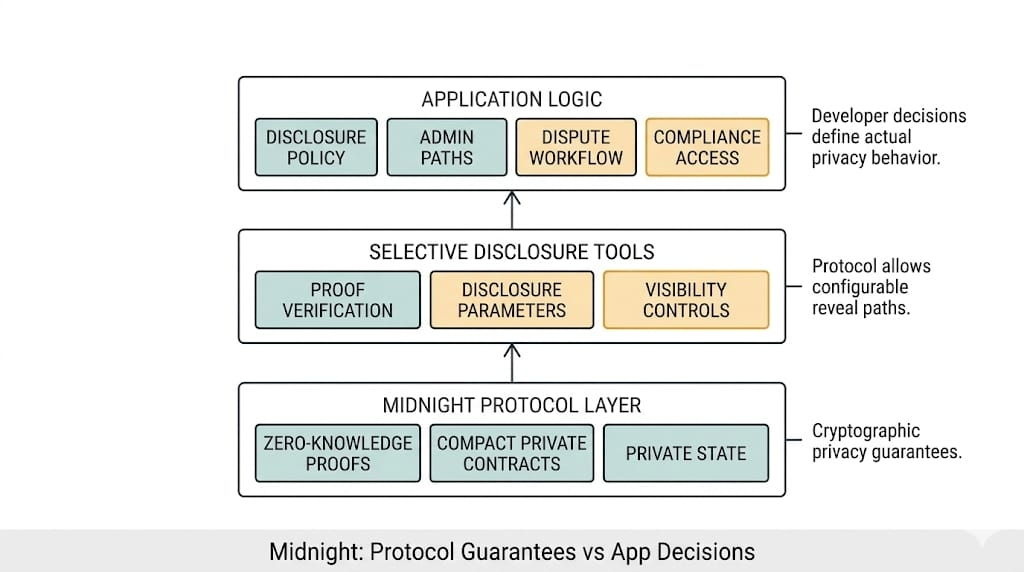

Not the easy version where privacy protects sensitive inputs and everybody acts like the hard part is done because the proof checked out. That part is useful. Midnight should be useful there. If some allocation, eligibility, or access system needs to evaluate private facts without dumping the whole criteria set into public view, fine. That is a real use case. Public-by-default systems are a terrible place to run anything where scoring logic touches personal data, internal thresholds, counterparty risk, or the kind of business rules people do not want turned into public entertainment.

Good.

The problem starts one layer later.

Because the minute the Midnight network gets used for ranking, prioritization, allocation, gated access, any of that, the proof only tells you one thing... the hidden rule ran the way it was written.

That is not the same thing as people trusting the rule.

Take some private allocation flow on @MidnightNetwork. Maybe access to a product gets prioritized based on a hidden score. Maybe a pool opens in tranches and some users get through first because they cleared a private threshold. Maybe a private credit workflow ranks counterparties without revealing the whole scoring model underneath. The system can prove the criteria were applied. Nice. Very modern. Very privacy-preserving. Very adult.

Then the outcomes hit users.

One person gets in.

Another gets delayed.

Another gets nothing.

Everybody gets told the process followed the rule.

Then support gets the ticket from the user who landed just below threshold and wants to know whether they lost because of risk score, timing, anti-gaming logic, or some other hidden weight nobody can explain without sounding evasive.

I’ve seen systems lose more trust from one opaque queue than from an actual outage. I’ve seen people accept a bad outage faster than a clean rejection they can’t inspect.

That’s where support gets ugly.

Because trust in ranking systems does not come only from procedural correctness. It comes from whether people think the criteria were fair, sane, relevant, non-gamed, non-political, not quietly tilted toward whoever wrote them. Midnight can prove the system followed the hidden rule. It cannot make the hidden rule feel legitimate to the people living under it.

And yeah, that matters more than people like admitting.

Especially because hidden criteria always sound cleaner from the inside than they look from the outside. The team running the system sees sensitive inputs, fraud concerns, anti-gaming logic, internal risk factors, all the reasons they do not want to expose the full model. Fair enough. Maybe they’re right. But the user who got screened out just sees an outcome with no inspectable path behind it, plus some polite line about the rule being applied as intended.

That is exactly how you get procedural trust turning into social distrust.

And Midnight makes that problem sharper, not softer, because it can make these systems viable in places they used to be too invasive or too public to run at all. Private eligibility. Private prioritization. Private scoring. Good. Useful.

Still means somebody built a hidden ranking machine and now wants the output to inherit legitimacy from the proof.

That’s asking a lot from a proof.

Because once the criteria stay private, the argument changes shape. It is no longer "did the system cheat in the obvious sense?" It becomes:

did the system encode a dumb rule?

did it overweight the wrong thing?

did it rank one class of user differently in a way nobody outside can really challenge?

did the anti-gaming logic quietly become anti-user logic?

did the internal risk model become the product without anyone saying that out loud?

A hidden score can overweight timing, internal risk posture, anti-sybil logic, relationship quality, whatever else the operator thinks is prudent, and still produce a result the proof will happily certify as procedurally correct.

Midnight can prove the score was computed against the hidden inputs it was given. It cannot prove the weighting deserved trust.
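
To make that gap concrete, here is a minimal Python sketch. Nothing in it is Midnight's API or a real zero-knowledge proof: the SHA-256 commitment is a plain stand-in, and every name (`commit`, `score`, `rule_was_followed`, the weights, the threshold of 50) is made up for illustration. All it shows is that a "rule ran as written" check passes just as happily for a lopsided weighting as for a sensible one.

```python
import hashlib
import json

THRESHOLD = 50  # hypothetical cutoff, purely illustrative


def commit(weights: dict) -> str:
    """Hash commitment to the hidden weights (stand-in for a real commitment scheme)."""
    blob = json.dumps(weights, sort_keys=True).encode()
    return hashlib.sha256(blob).hexdigest()


def score(weights: dict, inputs: dict) -> int:
    """Weighted sum of the user's private inputs under the hidden weights."""
    return sum(weights[key] * inputs[key] for key in weights)


def rule_was_followed(commitment: str, weights: dict, inputs: dict, claimed: int) -> bool:
    """Procedural check: the committed weights are the ones used, and the score matches.

    A real proof system would let a verifier confirm this without seeing the
    weights or inputs; this sketch checks them directly to keep things short.
    """
    return commit(weights) == commitment and score(weights, inputs) == claimed


# One user, two hypothetical operators with different hidden weightings.
user = {"risk_ok": 9, "signup_order": 2, "history": 8}
sensible = {"risk_ok": 5, "signup_order": 1, "history": 4}
lopsided = {"risk_ok": 1, "signup_order": 8, "history": 1}  # overweights timing

results = {}
for name, weights in (("sensible", sensible), ("lopsided", lopsided)):
    commitment = commit(weights)
    claimed = score(weights, user)
    results[name] = {
        "score": claimed,
        "admitted": claimed >= THRESHOLD,
        "rule_followed": rule_was_followed(commitment, weights, user, claimed),
    }

for name, outcome in results.items():
    print(name, outcome)
```

Same user, same inputs. Under the sensible weights they clear the threshold; under the lopsided ones they do not. Both runs pass the procedural check, and nothing in that check says which weighting deserved to exist.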

The proof was never going to settle that. It just wasn’t built for that fight.

It can settle whether the system followed the hidden criteria as written. Useful. Important even. But if the real discomfort is that nobody outside the system trusts the hidden criteria themselves, then the proof is solving a narrower problem than the operators probably wish it were.

That’s the fight, really.

Midnight is strong exactly where it can compute over sensitive inputs without exposing them. But the second those private computations start deciding rank, access, allocation, or sequence, the system stops being just a privacy story.

Now the whole thing starts smelling like legitimacy, not privacy.

Legitimacy is the nastier part.

A user gets told they were below threshold.

A counterparty gets told they were not prioritized.

An applicant gets told the criteria were satisfied for someone else first.

A product team says the hidden model worked exactly as designed.

Fine. Maybe it did.

The design can still be the thing people don’t trust.

That part keeps hanging inside my head.

Because "the system followed the rule" sounds strong right up until the real fight is whether the hidden rule deserved to be running the room in the first place.

And if Midnight gets real traction in private scoring or allocation systems, that fight is coming. Not because the proof failed. Because the proof worked, the queue stood, the allocation stood, and people still walked away convinced the hidden score was the real power in the room the whole time.