Imagine a transaction. You meet every criterion, satisfy every rule, and pass every check. The underlying code confirms that the proof you’ve presented is immaculate. Technologically, the matter is settled. The verification process is complete. But is the story really over?

Not quite. Not in the complex, nuanced world of human digital interaction. When we pull back the layers of technology, a fundamental and deeply unsettling question emerges: "Is technical verification sufficient?"

This is the central dilemma facing systems like the Midnight Protocol, which uses zero-knowledge proofs (ZK proofs) to achieve what seems like magic: proving compliance without disclosing the underlying data. The proof demonstrates that you are eligible, but it never shows who you are. It’s an elegant engineering feat, and arguably one of the most ambitious privacy-preserving designs of the blockchain era.

Yet, we must ask: what happens when the perfect proof lands on the desk of an imperfect human?

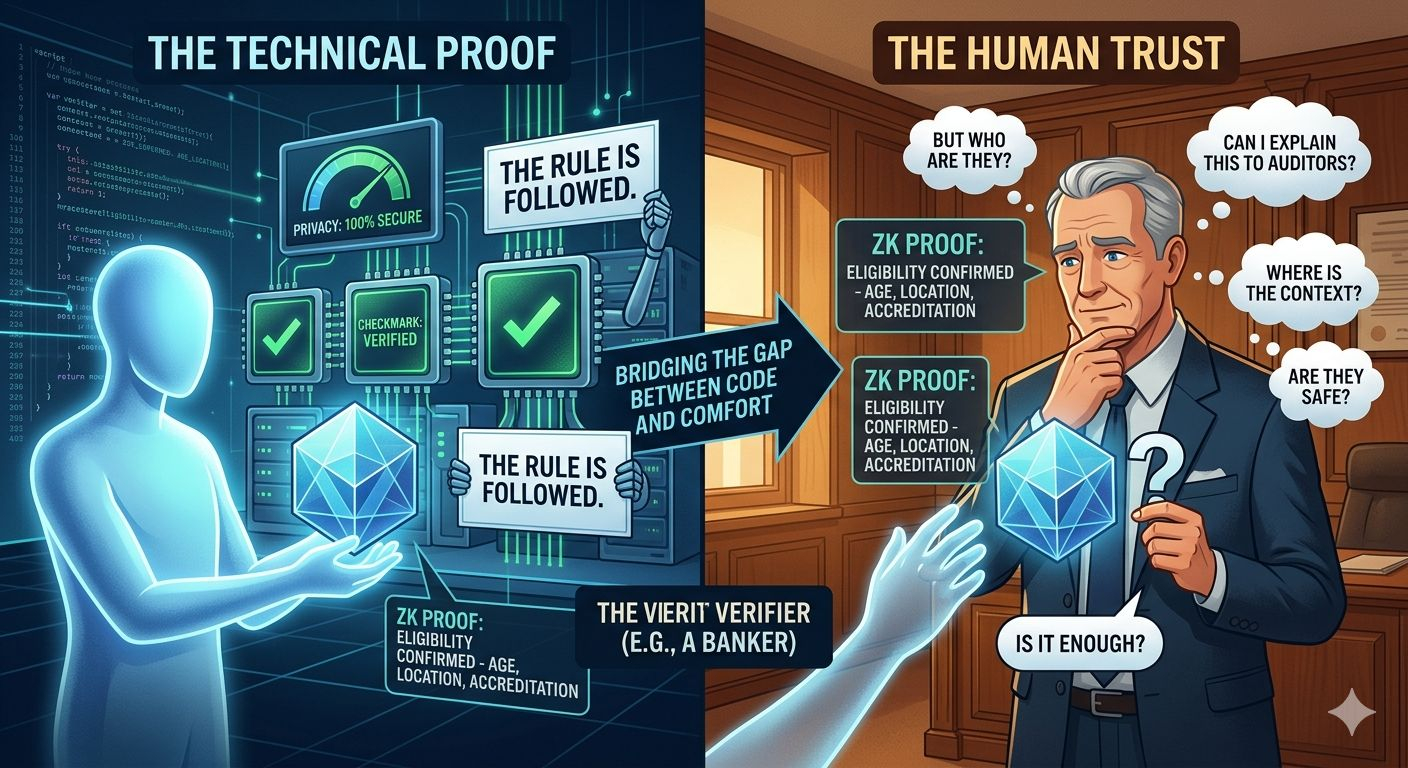

The failure here is not with the technology. The ZK-Proof operates flawlessly. The computation is verified. The conditions are met. But when the verifier—be it a bank, a regulatory body, or a cautious business partner—sees the perfect proof, they don’t always feel secure. Instead, the automatic response often shifts from "Verify" to "Can I see more?"

This single question doesn’t signal a failure of the protocol; it signals a gap between what code can guarantee and what a person requires in order to trust.

This shifts the debate from machines to people. The proof only provides confirmation: "The criteria were met." It doesn’t provide assurance: "You are safe to proceed." It doesn’t ask, "Do you feel comfortable?" Real trust, after all, is not merely the outcome of a mathematical proof; it is a psychological state built on understanding, context, and comfort.

Every participant defines "sufficiency" differently. The "Prover" (the individual) wants to expose as little data as possible, maximizing privacy. The "Verifier" (the institution) needs to be able to justify its decision to auditors, partners, and its own compliance teams. This is not simple curiosity; it is organizational responsibility, legal liability, and systematic caution.

Consequently, "selective disclosure" becomes a dynamic, shifting, and deeply human negotiation. What is enough today might not be enough tomorrow. The threshold of sufficiency is not a fixed line but a moving target. Every context, every relationship, and every moment redefines what is necessary.

Here lies the tension: whenever you try to satisfy this human need for "more context" by disclosing extra details, you undermine the very privacy the protocol was designed to protect. It’s a delicate trade-off with no easy answer.
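To make the trade-off concrete, here is a minimal sketch of selective disclosure using salted hash commitments. This is a deliberately simpler technique than zero-knowledge proofs, and it is not how Midnight works; every function and attribute name below is hypothetical and chosen only for illustration. It shows the shape of the negotiation: each extra field the verifier asks for still checks out, but it shrinks what stays private.

```python
import hashlib
import secrets


def commit(name: str, value: str, salt: bytes) -> str:
    """Salted SHA-256 commitment to a single attribute (illustrative only)."""
    return hashlib.sha256(salt + f"{name}={value}".encode()).hexdigest()


def issue(attributes: dict) -> tuple:
    """Commit to every attribute. Commitments can be shared; salts stay with the prover."""
    salts = {name: secrets.token_bytes(16) for name in attributes}
    commitments = {
        name: commit(name, value, salts[name]) for name, value in attributes.items()
    }
    return commitments, salts


def disclose(attributes: dict, salts: dict, names: list) -> dict:
    """The prover reveals only the chosen attributes, each with its salt."""
    return {name: (attributes[name], salts[name]) for name in names}


def verify(commitments: dict, disclosed: dict) -> bool:
    """The verifier checks each revealed value against its commitment."""
    return all(
        name in commitments and commit(name, value, salt) == commitments[name]
        for name, (value, salt) in disclosed.items()
    )


# Hypothetical attributes, invented for this example.
attributes = {"name": "Alice Example", "country": "DE", "age_over_18": "true"}
commitments, salts = issue(attributes)

# First request: a single fact is enough.
first = disclose(attributes, salts, ["age_over_18"])
hidden_after_first = set(attributes) - set(first)

# "Can I see more?": the check still passes, but less remains private.
second = disclose(attributes, salts, ["age_over_18", "country"])
hidden_after_second = set(attributes) - set(second)
```

Both disclosures verify equally well. What changes between them is not the validity of the check but how much the prover has given up, and nothing in the code decides which of the two was "enough".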

Even more interesting, this boundary can shift during the conversation itself. Information that was perfectly sufficient ten minutes ago can become insufficient when a new question changes the context. The proof hasn’t changed, but the emotional state of the recipient has. This reveals a fundamental truth: "sufficiency" is not a property of the code; it is a judgment made by the receiver.

The Midnight Protocol offers a robust technical assurance of validity. It provides a mathematical certainty. But it cannot force someone to feel safe. Defining what is "enough" happens outside the system, in the messiness of human negotiation, case by case.

This is the paradox of our age: we have achieved the capability to prove everything without revealing anything. And yet, the human element still demands more than just a proof. We need narrative. We need context. We need that subtle, indefinable sense of assurance that transforms a technical certainty into genuine, durable trust.

Yes, a valid proof always verifies.

But the question "Is it enough?" remains open.

#night $NIGHT @MidnightNetwork