People tend to think the most important moment for a blockchain is the day it launches: the day liquidity appears, exchanges flip the switch, a token suddenly has a price, and the entire narrative collapses into charts, candles, and short-term excitement. But that moment is almost irrelevant for infrastructure networks, especially the ones built around zero-knowledge cryptography, because those systems aren’t really competing for attention the way consumer applications do. They’re competing with something much quieter and much older: the invisible layers of the internet itself.

And that’s a strange thing to realize if you’ve spent enough time around crypto markets, because markets are loud. They reward immediacy. They reward momentum. They reward the feeling that something dramatic just happened. Yet the real story of a zero-knowledge blockchain begins long after that noise fades, when the token price stops being the headline and engineers start asking a more uncomfortable question that traders rarely care about: can this network actually function as a verification layer for real systems?

That’s where things get interesting. Or difficult. Sometimes both.

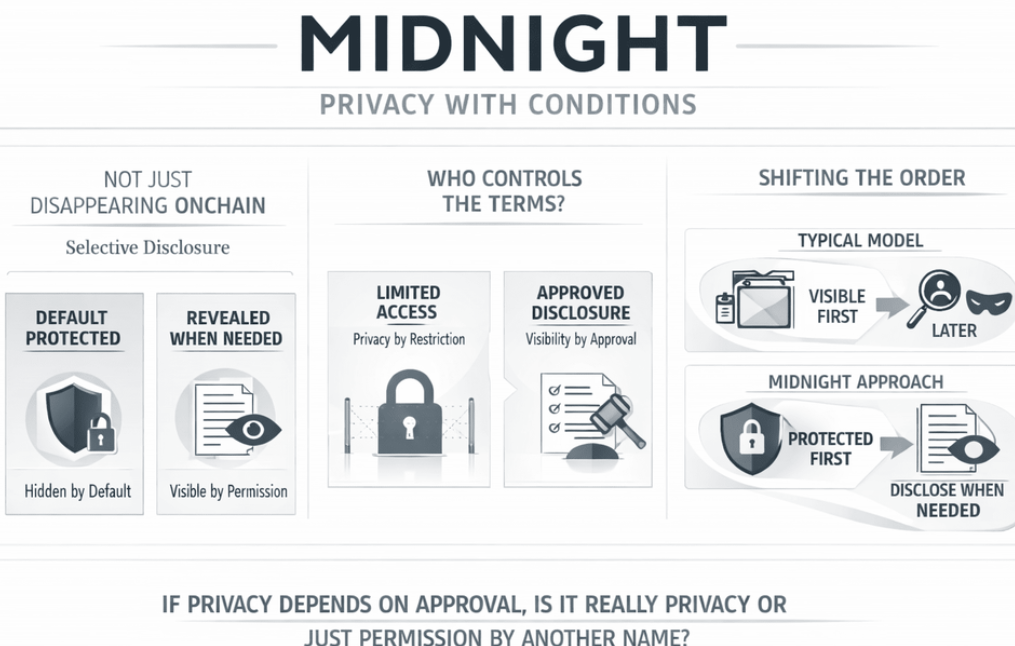

Zero-knowledge proofs are one of those ideas that sound abstract until you sit with them for a while and start realizing how strange they actually are. The basic premise is almost philosophical. You can prove something is true without revealing the thing itself. Not a hint. Not a fragment. Just the proof. The result is a kind of cryptographic paradox where the network can confirm a statement without seeing the data behind it, which, if you think about it long enough, starts to feel like a small but meaningful shift in how digital systems understand truth.

For decades the internet has operated on a very blunt model of verification. If you want to prove something about yourself, you reveal information. If a system needs to confirm your age, your income, your identity, your eligibility for some service, the usual solution is to hand over the raw data. Documents get uploaded. Databases get queried. Somewhere along the way someone stores information that probably didn’t need to be stored in the first place.

It works. Mostly.

But it’s clumsy, and occasionally dangerous, because every time information is revealed it creates another place where that information might be copied, leaked, or quietly repurposed.

Zero-knowledge systems approach the problem differently. They ask a more subtle question: what if verification didn’t require exposure at all? What if the system could simply confirm that a condition was satisfied, nothing more, nothing less?
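To make the idea a little less abstract, here is a minimal sketch of a Schnorr-style identification protocol in Python, one of the oldest ways to prove you know a secret without revealing it. It is not the proof system any particular ZK blockchain uses. The group parameters (`P`, `Q`, `G`) and the function names are illustrative assumptions, and the numbers are far too small to be secure. What it shows is the shape of the exchange: the prover convinces the verifier that it knows the secret `x` behind a public value `y`, and `x` itself is never sent.

```python
import secrets

# Toy group parameters, assumed for illustration only (much too small to be secure).
P = 23  # prime modulus
Q = 11  # prime order of the subgroup generated by G
G = 2   # generator of the order-Q subgroup of the integers mod P


def keygen():
    """Prover picks a secret x and publishes y = G^x mod P."""
    x = secrets.randbelow(Q - 1) + 1  # secret in [1, Q-1]
    return x, pow(G, x, P)


def commit():
    """Prover picks a fresh random nonce r and sends t = G^r mod P."""
    r = secrets.randbelow(Q)
    return r, pow(G, r, P)


def challenge():
    """Verifier replies with a random challenge c."""
    return secrets.randbelow(Q)


def respond(x, r, c):
    """Prover answers with s = r + c*x mod Q; the nonce r masks x."""
    return (r + c * x) % Q


def verify(y, t, c, s):
    """Verifier checks G^s == t * y^c mod P using only public values."""
    return pow(G, s, P) == (t * pow(y, c, P)) % P


if __name__ == "__main__":
    x, y = keygen()          # x stays with the prover, y is public
    r, t = commit()
    c = challenge()
    s = respond(x, r, c)
    print(verify(y, t, c, s))  # the verifier never sees x
```

The verifier only ever handles `y`, `t`, `c`, and `s`. Because `r` is fresh and random each round, the response `s` on its own reveals nothing about `x`, yet the check only passes reliably for someone who actually knows it.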

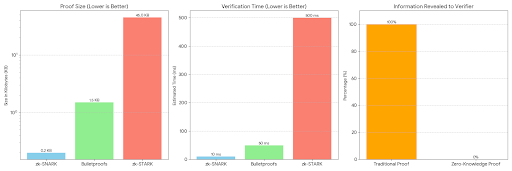

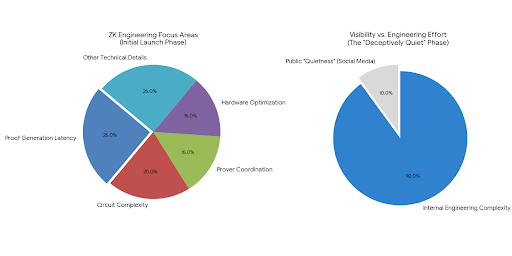

That idea sounds simple in theory, but the engineering behind it is anything but, which is why the early months after a ZK blockchain launches tend to look deceptively quiet from the outside. There are no dramatic fireworks. Instead there are engineers staring at proof generation latency, circuit complexity, prover coordination, hardware optimization, and a dozen other technical details that rarely make it into social media threads.

The reason is straightforward. Generating a zero-knowledge proof is computationally heavy. Much heavier than validating a normal transaction. In the early life of a network the proving infrastructure has to prove itself first. Nodes must coordinate efficiently. Proof pipelines must avoid bottlenecks. Latency must stay low enough that applications don’t feel like they’re waiting for cryptography to catch up with them.

If those mechanical layers fail, the grand vision of private verification collapses very quickly.

So the first months are about stability. Quiet engineering. Sometimes frustrating engineering. Circuits get rewritten. Provers get optimized. Throughput gradually improves. It’s slow work. Necessary work.

And yet even if that layer stabilizes, another challenge appears immediately after, one that has less to do with cryptography and more to do with human behavior. Developers have to actually use the system.

This is where the story of many advanced blockchain technologies becomes complicated, because building applications that rely on zero-knowledge proofs requires a very different mindset from ordinary smart contract development. Instead of writing logic that executes directly on-chain, developers often have to design circuits: sets of arithmetic constraints that encode a logical statement so it can later be proven cryptographically. It’s almost like translating software into mathematical arguments.
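A toy example gives a feel for what that translation involves. Assume the statement to be proven is "I know a secret x such that x^3 + x + 5 = 35". The Python sketch below flattens that statement into three multiplication constraints, in the rank-1 style that many proof systems consume, and checks whether a candidate witness satisfies them. The modulus, the witness layout, and every name here are assumptions made for illustration. The code only checks constraints. It does not generate a proof, and it hides nothing; a real proving system would take constraints like these as input and produce the proof.

```python
# Small prime field for readability (an assumption; real systems use far larger primes).
MOD = 97

# Hypothetical witness layout: w = [1, x, out, v1, v2]
#   v1  = x * x
#   v2  = v1 * x
#   out = v2 + x + 5
# Each constraint (a, b, c) means: (a . w) * (b . w) == (c . w)  (mod MOD)
CONSTRAINTS = [
    ([0, 1, 0, 0, 0], [0, 1, 0, 0, 0], [0, 0, 0, 1, 0]),  # x * x = v1
    ([0, 0, 0, 1, 0], [0, 1, 0, 0, 0], [0, 0, 0, 0, 1]),  # v1 * x = v2
    ([5, 1, 0, 0, 1], [1, 0, 0, 0, 0], [0, 0, 1, 0, 0]),  # (5 + x + v2) * 1 = out
]


def dot(row, w):
    """Inner product of a constraint row with the witness, reduced mod MOD."""
    return sum(r * v for r, v in zip(row, w)) % MOD


def build_witness(x):
    """Compute every intermediate value the constraints refer to."""
    v1 = (x * x) % MOD
    v2 = (v1 * x) % MOD
    out = (v2 + x + 5) % MOD
    return [1, x, out, v1, v2]


def satisfied(w):
    """True if the witness satisfies every constraint."""
    return all((dot(a, w) * dot(b, w)) % MOD == dot(c, w) for a, b, c in CONSTRAINTS)


if __name__ == "__main__":
    w = build_witness(3)
    print(w[2], satisfied(w))  # output value and whether the constraints hold
```

Notice that ordinary program logic (cube a number, add to it, compare) has turned into a fixed list of equations over a finite field. That change of representation is a large part of the learning curve.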

That barrier matters. It means adoption rarely happens overnight.

Tools improve over time, of course. SDKs appear. Libraries simplify certain proof constructions. Abstractions hide some of the complexity. But there’s still a learning curve, and that learning curve quietly determines whether a ZK network becomes infrastructure or remains an elegant technical demonstration admired mostly by cryptographers.

Because here’s the uncomfortable truth: infrastructure only matters if people build on top of it.

The interesting moment arrives when developers start realizing that a zero-knowledge chain doesn’t just record transactions. It verifies statements about reality. That difference sounds subtle, but it changes everything about how the network can be used.

Imagine a digital identity system that needs to confirm a person meets certain criteria without exposing their personal details. Instead of uploading documents to dozens of services, the system could generate a proof confirming that the criteria are satisfied. The blockchain verifies the proof. The service accepts the result. No private data ever leaves the user’s control.

Or think about financial compliance. Institutions often need to demonstrate that transactions follow regulatory rules, but sharing the raw data behind those transactions can create privacy issues or legal complications. A zero-knowledge proof could confirm that the compliance conditions were met without revealing the underlying financial information.

In that scenario the blockchain becomes something quite different from the typical public ledger. It becomes a neutral verification machine.

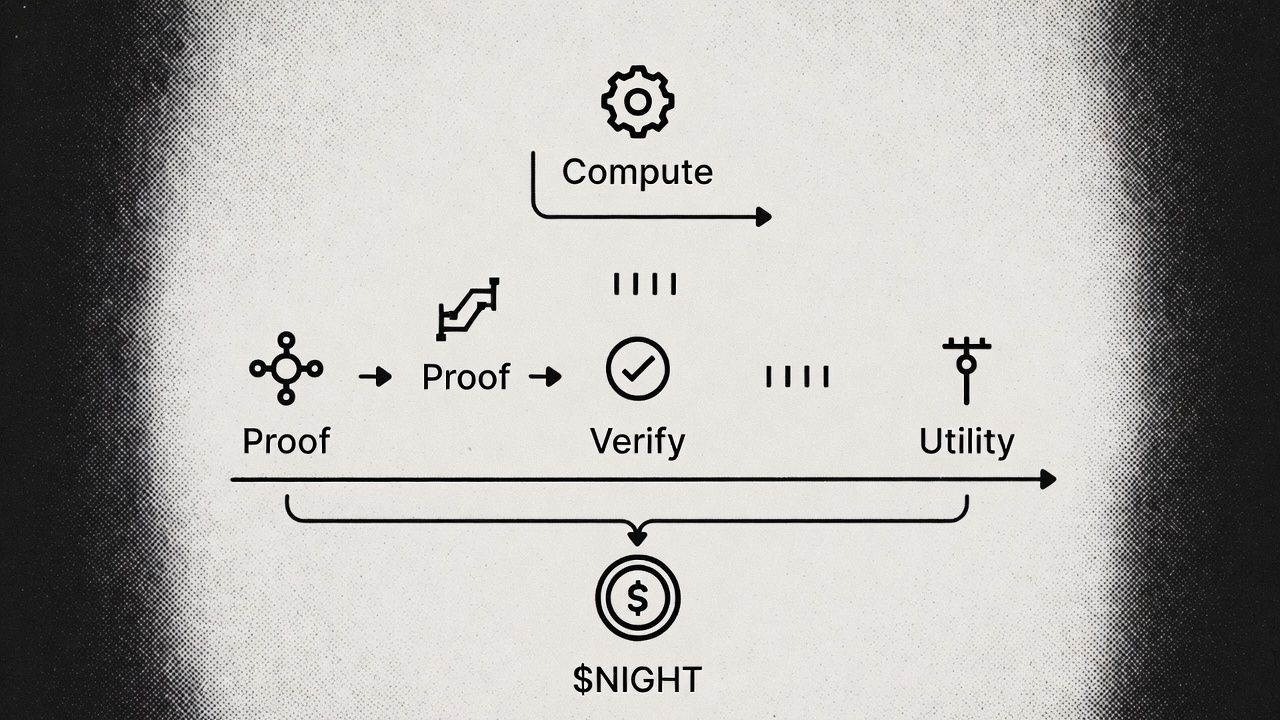

And once you start thinking about it that way, the economic design of these networks begins to make more sense as well. The token isn’t merely a speculative asset floating around exchanges. In principle it represents access to verification capacity. Applications pay to generate and verify proofs. Node operators supply the computational power needed to produce those proofs. The token flows between those two sides of the system as a kind of coordination mechanism.

If applications appear, demand grows. If demand grows, more computational resources enter the network. If capacity expands, costs decrease, which attracts more developers.

A flywheel. Slow at first. Potentially powerful later.

Of course none of that is guaranteed. In the early life of a network supply often arrives before demand does. Validators and infrastructure providers are incentivized to participate through token emissions, but real applications take longer to build. For a while the system can feel slightly imbalanced, like a factory that has installed all its machines before customers start placing orders.

That imbalance sometimes confuses observers who expect immediate utilization, but infrastructure rarely grows in perfect synchrony with adoption. Roads often appear before the cities that rely on them. Fiber cables get laid years before the data traffic fully arrives. The same pattern tends to repeat itself with cryptographic infrastructure.

Still, there’s another dimension to this story that deserves attention, and it involves institutions rather than developers. Large financial organizations have been experimenting with blockchain technology for years, but their interest usually centers on reliability and compliance rather than open speculation. What attracts them to zero-knowledge systems is not the token economy. It’s the possibility of verifying information without exposing sensitive data.

A remittance network, for instance, might need to prove that anti-money-laundering checks were performed correctly before settling a transaction. Today that process often involves transmitting confidential information across multiple intermediaries. With ZK infrastructure, a system could theoretically generate a proof confirming that all regulatory checks passed while keeping the underlying data private.

Companies such as MoneyGram have explored blockchain integrations that hint at this direction, not because they want to speculate on digital assets but because they need infrastructure capable of handling verification at global scale. For them the blockchain is not a marketplace. It’s a coordination layer.

And this is where the philosophical part of the story quietly sneaks in.

If zero-knowledge systems work the way their designers hope they will, they change a fundamental assumption about digital life. Historically the internet has required people to surrender pieces of their identity in order to interact with systems. Accounts, credentials, financial details, documents: fragments of personal data scattered across countless databases.

ZK technology suggests a different future where verification replaces exposure.

Instead of showing who you are, you prove what is true about you.

It’s a subtle shift. But an important one.

Of course there are still plenty of uncertainties ahead. Developer adoption could take longer than expected. Proof generation costs might remain high for complex applications. Token emissions in the early stages could create market pressure before real demand appears. None of these risks is merely theoretical; they’re practical realities that every infrastructure protocol eventually faces.

And yet there’s something oddly compelling about the idea that the most important blockchain systems of the next decade might be the ones people rarely notice. Networks quietly verifying credentials, transactions, identities, supply chain events, compliance checks, and countless other claims, all without forcing users to reveal the data behind those claims.

Invisible infrastructure.

The kind that only becomes obvious when it fails.

Which leads to a question that feels more interesting than any discussion about short-term market movements. If zero-knowledge development tools continue improving, if proof generation becomes easier and cheaper and more accessible to ordinary developers, what happens when verification itself becomes programmable?

Because at that point the blockchain stops being just a ledger.

It becomes something closer to a universal engine for proving truth in a world that increasingly struggles to agree on what truth looks like.

@MidnightNetwork #night $NIGHT