$SIGN #SignDigitalSovereignInfra @SignOfficial

I used to think transparency was something you add at the end.

You build the system first. Then you publish reports, dashboards, maybe an explorer. That’s what accountability usually looks like: something layered on top after decisions are already made.

But the more I looked at public systems, the more that model felt backwards.

Because by the time transparency is added, most of the important decisions have already disappeared into process.

That’s where the idea of a public rail started to feel different to me.

Not as a feature.

But as the system itself.

In SIGN, the public rail isn’t just about making data visible.

It’s about making claims verifiable by default.

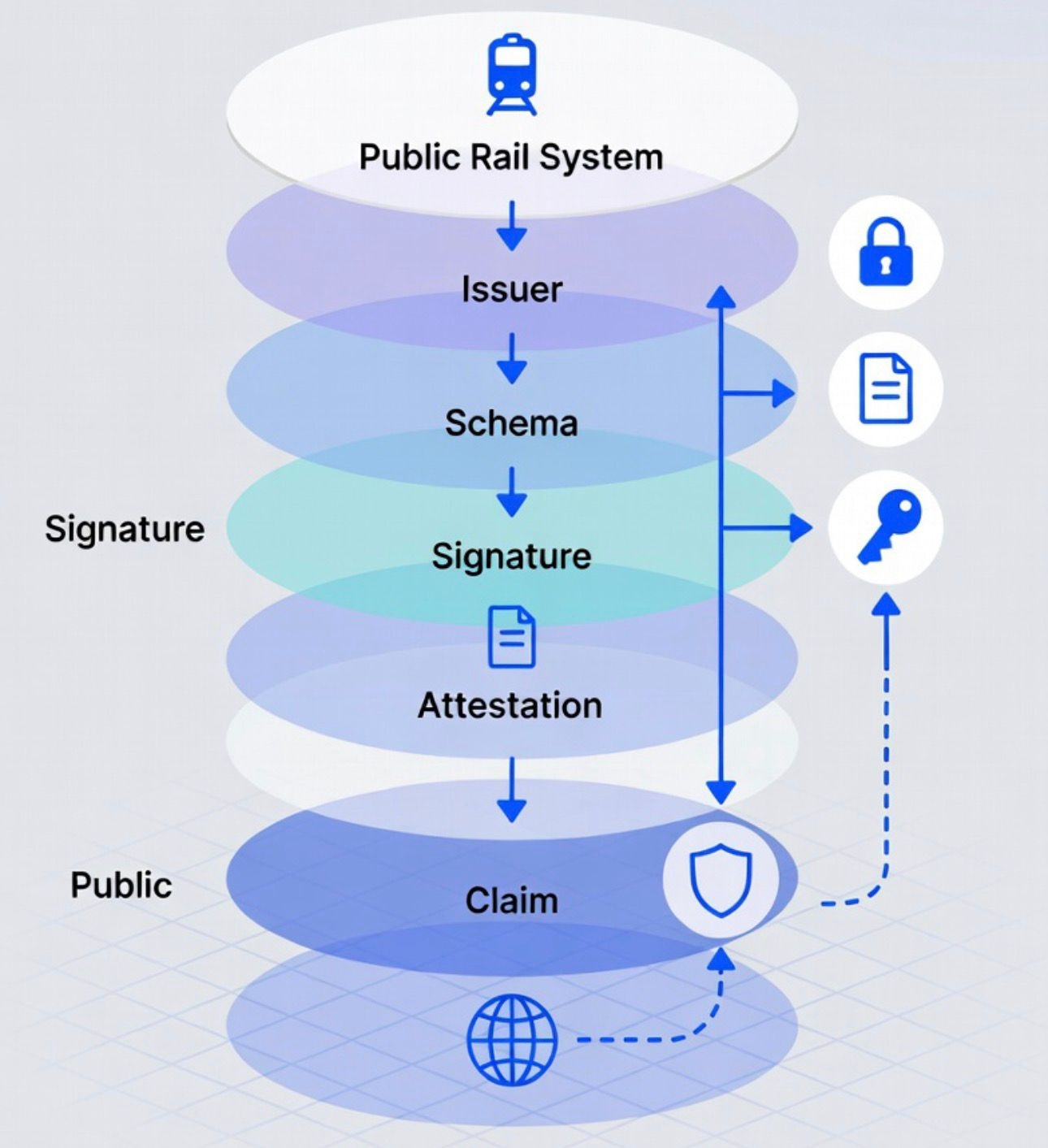

Every public action (spending, allocation, issuance) is represented as an attestation.

Not a report. Not a summary.

A claim that is signed, structured, and anchored so anyone can verify it.

That shift matters more than it sounds.

Because once something becomes an attestation, it stops being a statement you have to trust.

It becomes something you can check.

Think about public spending.

Right now, most systems show you where money went after the fact.

Budgets get published. Reports get released. Sometimes there are dashboards.

But those are static views.

They don’t let you verify the actual flow of decisions.

They show outcomes, not the underlying claims.

On a public rail, spending doesn’t show up as a report.

It shows up as a sequence of attestations.

An allocation is an attestation.

A disbursement is an attestation.

A completion milestone can also be an attestation.

Each one tied to:

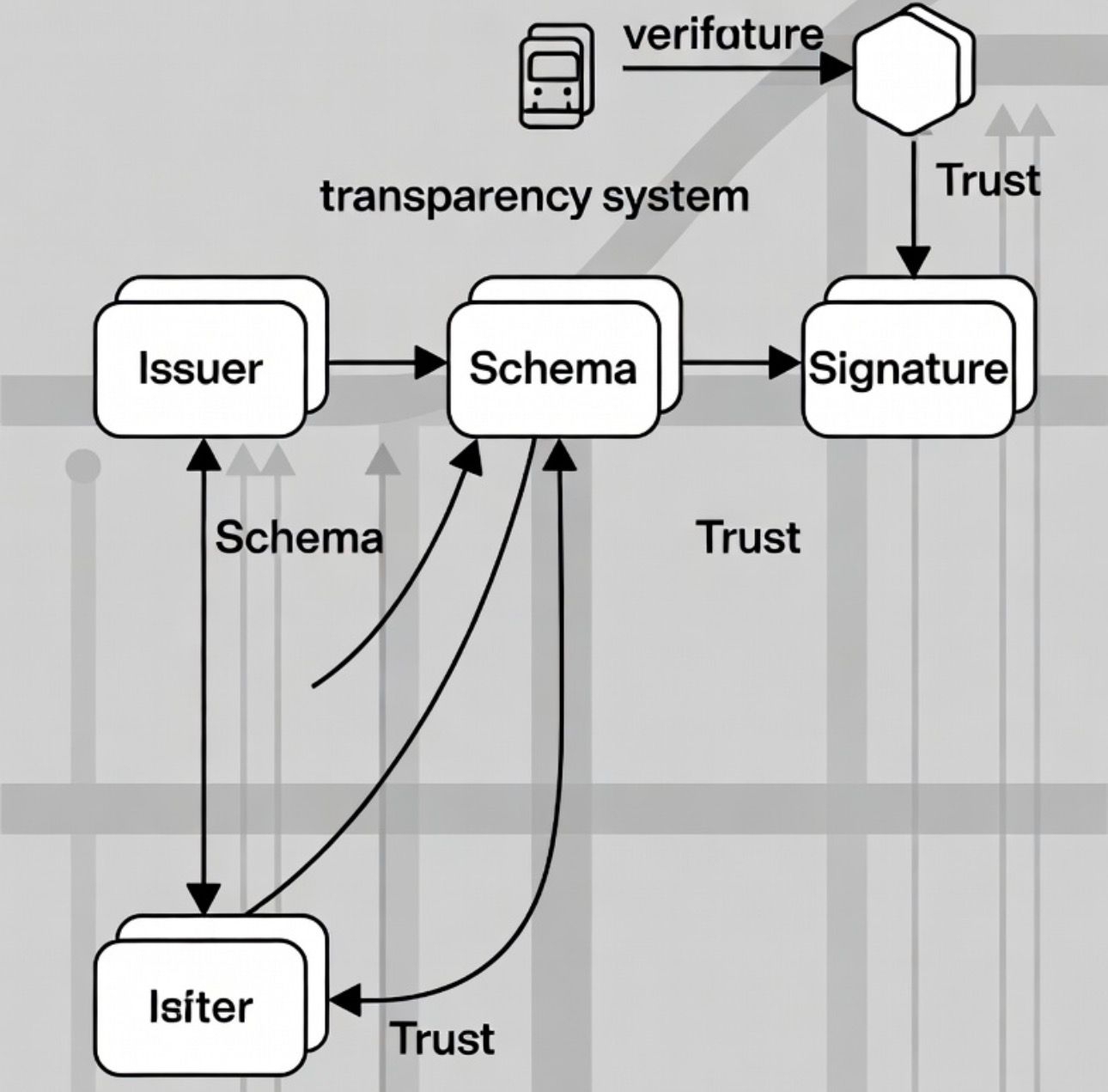

an issuer (who approved it)

a schema (what type of action it represents)

and a signature (so it can be verified independently)
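To make the issuer / schema / signature structure concrete, here is a minimal Python sketch. Everything in it is hypothetical and not SIGN’s actual API or data format: the `Attestation` fields, the schema names, and especially the signing. Python’s standard library has no public-key signatures, so HMAC-SHA256 is used as a STAND-IN. A real public rail would use public-key signatures, so that verifiers never need the issuer’s secret.

```python
import hashlib
import hmac
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class Attestation:
    issuer: str     # who approved the action (hypothetical identifier)
    schema: str     # what type of action it represents
    data: dict      # the structured claim itself
    signature: str  # hex signature over issuer + schema + data


def canonical_bytes(issuer: str, schema: str, data: dict) -> bytes:
    # Deterministic serialization so signer and verifier hash identical bytes.
    payload = {"issuer": issuer, "schema": schema, "data": data}
    return json.dumps(payload, sort_keys=True, separators=(",", ":")).encode()


def sign(issuer: str, schema: str, data: dict, key: bytes) -> Attestation:
    # STAND-IN: HMAC-SHA256 instead of a real public-key signature scheme.
    msg = canonical_bytes(issuer, schema, data)
    sig = hmac.new(key, msg, hashlib.sha256).hexdigest()
    return Attestation(issuer, schema, data, sig)


def verify(att: Attestation, key: bytes) -> bool:
    msg = canonical_bytes(att.issuer, att.schema, att.data)
    expected = hmac.new(key, msg, hashlib.sha256).hexdigest()
    # Constant-time comparison of recomputed vs. stored signature.
    return hmac.compare_digest(expected, att.signature)


key = b"city-treasury-demo-key"  # hypothetical issuer secret
alloc = sign("city-treasury", "allocation.v1",
             {"project": "bridge-42", "amount": 1_000_000}, key)
print(verify(alloc, key))  # True

# Editing any field after signing breaks verification.
tampered = Attestation(alloc.issuer, alloc.schema,
                       {**alloc.data, "amount": 2_000_000}, alloc.signature)
print(verify(tampered, key))  # False
```

The point of the sketch is the property, not the crypto: once a claim is signed over a canonical form, changing the amount, the issuer, or the schema name after the fact is detectable.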

That structure means you’re not reading what happened.

You’re verifying that each step actually occurred under defined rules.

What I didn’t expect is how this changes accountability.

In most systems, accountability depends on interpretation.

You read a report, you trust the source, or you question it.

Here, accountability becomes mechanical.

Because the system doesn’t ask: “Do you believe this?”

It allows you to check: “Does this claim match the rules it was supposed to follow?”
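“Does this claim match the rules it was supposed to follow?” can be framed as a purely mechanical check. The sketch below assumes a schema is just a set of required fields with expected types plus a few simple rules; the schema name, field names, and the positive-amount rule are illustrative, not SIGN’s schema format.

```python
from typing import Any, Dict, List

# Hypothetical schema: required fields, their types, and extra rules.
SCHEMA = {
    "name": "disbursement.v1",
    "fields": {"project": str, "amount": int, "allocation_id": str},
    "rules": [
        ("amount must be positive", lambda d: d["amount"] > 0),
    ],
}


def check(claim: Dict[str, Any], schema: dict) -> List[str]:
    """Return a list of violations; an empty list means the claim conforms."""
    problems = []
    for field, ftype in schema["fields"].items():
        if field not in claim:
            problems.append(f"missing field: {field}")
        elif not isinstance(claim[field], ftype):
            problems.append(f"wrong type for {field}: expected {ftype.__name__}")
    # Only evaluate rules once the structure is known to be sound.
    if not problems:
        for description, rule in schema["rules"]:
            if not rule(claim):
                problems.append(f"rule failed: {description}")
    return problems


print(check({"project": "bridge-42", "amount": 250_000, "allocation_id": "a1"}, SCHEMA))  # []
print(check({"project": "bridge-42", "amount": -5, "allocation_id": "a1"}, SCHEMA))
# ['rule failed: amount must be positive']
```

Nothing here asks the checker to believe the issuer; it only compares the claim against rules that anyone can read.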

That’s a different level of trust.

Technically, this works because the public rail enforces visibility at the attestation level.

Every claim is:

indexed

queryable

and tied to a schema that defines its meaning
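Indexing by schema and issuer is what turns a pile of signed claims into something you can query. Here is a minimal in-memory sketch, assuming each attestation is a plain dict with hypothetical `id`, `issuer`, `schema`, and `data` keys. A real rail would back this with a persistent indexer rather than Python dicts.

```python
from collections import defaultdict
from typing import List, Optional


class AttestationIndex:
    """Hypothetical in-memory index over attestations."""

    def __init__(self):
        self.by_id = {}
        self.by_schema = defaultdict(list)
        self.by_issuer = defaultdict(list)

    def add(self, att: dict) -> None:
        self.by_id[att["id"]] = att
        self.by_schema[att["schema"]].append(att)
        self.by_issuer[att["issuer"]].append(att)

    def query(self, schema: Optional[str] = None,
              issuer: Optional[str] = None) -> List[dict]:
        # Start from one index, then filter on the other key if both are given.
        if schema is not None:
            candidates = self.by_schema.get(schema, [])
        elif issuer is not None:
            candidates = self.by_issuer.get(issuer, [])
        else:
            candidates = list(self.by_id.values())
        return [a for a in candidates if issuer is None or a["issuer"] == issuer]


idx = AttestationIndex()
idx.add({"id": "a1", "issuer": "city-council", "schema": "allocation.v1", "data": {"amount": 1_000_000}})
idx.add({"id": "d1", "issuer": "city-treasury", "schema": "disbursement.v1", "data": {"amount": 250_000}})
idx.add({"id": "d2", "issuer": "city-treasury", "schema": "disbursement.v1", "data": {"amount": 300_000}})

print([a["id"] for a in idx.query(schema="disbursement.v1")])  # ['d1', 'd2']
print([a["id"] for a in idx.query(issuer="city-council")])     # ['a1']
```

Because the schema is part of every record, “show me every disbursement” is a lookup, not a reading exercise.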

So if a city allocates funds for infrastructure, that allocation isn’t just recorded.

It’s structured in a way that anyone can:

trace its origin

verify the issuer

and follow how it moves through subsequent actions
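Tracing an origin and following later actions both work if each attestation can point at the one it builds on. This sketch assumes a hypothetical `ref` field holding the parent attestation’s id, an acyclic set of links, and a hypothetical allow-list of issuers per schema; none of these are taken from SIGN’s documentation.

```python
from typing import List, Optional

# Hypothetical attestations; "ref" points at the attestation each one builds on.
ATTESTATIONS = {
    "a1": {"issuer": "city-council", "schema": "allocation.v1", "ref": None},
    "d1": {"issuer": "city-treasury", "schema": "disbursement.v1", "ref": "a1"},
    "d2": {"issuer": "city-treasury", "schema": "disbursement.v1", "ref": "a1"},
    "m1": {"issuer": "site-inspector", "schema": "milestone.v1", "ref": "d1"},
}

# Which issuers may issue under which schema (hypothetical).
AUTHORIZED = {
    "allocation.v1": {"city-council"},
    "disbursement.v1": {"city-treasury"},
    "milestone.v1": {"site-inspector"},
}


def trace_origin(att_id: str) -> List[str]:
    """Walk ref links back to the root (assumes links are acyclic)."""
    path = []
    current: Optional[str] = att_id
    while current is not None:
        path.append(current)
        current = ATTESTATIONS[current]["ref"]
    return path


def follow(att_id: str) -> List[str]:
    """Depth-first list of every later attestation that builds on att_id."""
    result = []
    for child, att in ATTESTATIONS.items():
        if att["ref"] == att_id:
            result.append(child)
            result.extend(follow(child))
    return result


def issuer_ok(att_id: str) -> bool:
    att = ATTESTATIONS[att_id]
    return att["issuer"] in AUTHORIZED.get(att["schema"], set())


print(trace_origin("m1"))                         # ['m1', 'd1', 'a1']
print(follow("a1"))                               # ['d1', 'm1', 'd2']
print(all(issuer_ok(i) for i in ATTESTATIONS))    # True
```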

The rail doesn’t summarize activity.

It exposes the logic behind it.

A simple example makes this more concrete.

Imagine a public infrastructure project.

In a traditional system, you might see:

budget approved

contractor assigned

project completed

But you can’t easily verify how each step connects.

On SIGN’s public rail, each step is an attestation.

The budget approval is issued under a governance schema.

The contractor assignment is issued under a procurement schema.

The payment release is tied to a milestone schema.

Now these aren’t just entries.

They are linked claims.

And anyone can follow that chain, verifying each step against its schema.
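Following that chain and checking each step against its schema can be sketched as a walk over linked claims. Assumptions, all hypothetical: each step has a `schema`, a `prev` link, and a `data` payload; schemas are reduced to required-field sets; and the expected order (governance, then procurement, then milestone) mirrors the infrastructure example rather than any published SIGN rule.

```python
REQUIRED_FIELDS = {
    "governance.v1": {"budget", "approved_by"},
    "procurement.v1": {"contractor", "budget_ref"},
    "milestone.v1": {"milestone", "payment"},
}
EXPECTED_ORDER = ["governance.v1", "procurement.v1", "milestone.v1"]


def verify_chain(steps: dict, tip: str) -> list:
    """Walk prev links from tip back to the start; return every problem found."""
    chain, seen, current = [], set(), tip
    while current is not None:
        if current in seen:
            return [f"cycle detected at {current}"]
        seen.add(current)
        step = steps.get(current)
        if step is None:
            return [f"broken link: {current} not found"]
        chain.append(step)
        current = step.get("prev")
    chain.reverse()  # oldest step first

    problems = []
    schemas = [s["schema"] for s in chain]
    if schemas != EXPECTED_ORDER:
        problems.append(f"unexpected sequence: {schemas}")
    for s in chain:
        missing = REQUIRED_FIELDS.get(s["schema"], set()) - set(s["data"])
        if missing:
            problems.append(f"{s['schema']} missing {sorted(missing)}")
    return problems


steps = {
    "g1": {"schema": "governance.v1", "prev": None,
           "data": {"budget": 5_000_000, "approved_by": "council"}},
    "p1": {"schema": "procurement.v1", "prev": "g1",
           "data": {"contractor": "acme-build", "budget_ref": "g1"}},
    "m1": {"schema": "milestone.v1", "prev": "p1",
           "data": {"milestone": "foundation", "payment": 1_200_000}},
}
print(verify_chain(steps, "m1"))  # []

orphan = {**steps, "m2": {"schema": "milestone.v1", "prev": "p9", "data": {"milestone": "deck"}}}
print(verify_chain(orphan, "m2"))  # ['broken link: p9 not found']
```

The check answers both questions from the text at once: whether the chain exists, and whether each link is the kind of claim it says it is.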

Not just what happened, but whether it happened correctly.

Another place this becomes powerful is open verification.

Most systems give access to data.

But access alone doesn’t guarantee understanding or trust.

SIGN’s public rail changes that by standardizing how claims are structured.

Because each attestation follows a schema, different systems can read and verify them consistently.

That means:

auditors don’t need custom integrations

citizens don’t need to rely on summaries

third-party tools can build directly on top of the data

Verification becomes portable.

Not locked inside one platform.

What stands out to me is that transparency here isn’t passive.

It’s active.

The system doesn’t just show information.

It makes that information usable.

Because every claim carries enough structure to be verified independently.

There’s also a subtle shift in how trust works.

In traditional systems, trust accumulates around institutions.

You trust the ministry, the agency, the report.

On a public rail, trust shifts toward the claims themselves.

If the attestation is valid, signed, and follows its schema, it stands on its own.

The system reduces how much you need to trust the narrator.

And this is where the idea clicked for me.

Transparency is no longer just about visibility.

It becomes the product.

Because what the system is really offering is not data.

It’s verifiable public truth.

That also means failure becomes visible in a different way.

If a step is missing, it’s not hidden in a report.

It’s absent from the chain.

If a claim doesn’t meet its schema, it can be flagged immediately.

The system doesn’t wait for audits to catch inconsistencies later.

It exposes them as part of normal operation.
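Flagging problems “as part of normal operation” could look like checks that run the moment an attestation is submitted, instead of in a later audit pass. In this hypothetical sketch, the `Rail` class, the schema names, and the rule that a disbursement must reference an allocation already on the rail are all illustrative assumptions, not SIGN’s behavior.

```python
class Rail:
    """Hypothetical intake that checks every attestation as it arrives."""

    REQUIRED = {
        "allocation.v1": {"project", "amount"},
        "disbursement.v1": {"project", "amount", "allocation_id"},
    }

    def __init__(self):
        self.accepted = {}  # id -> attestation that passed all checks
        self.flags = []     # (id, reason) for everything that did not

    def ingest(self, att_id: str, att: dict) -> bool:
        schema = att.get("schema")
        required = self.REQUIRED.get(schema)
        if required is None:
            self.flags.append((att_id, f"unknown schema {schema!r}"))
            return False
        missing = required - set(att.get("data", {}))
        if missing:
            self.flags.append((att_id, f"missing fields {sorted(missing)}"))
            return False
        # A missing step shows up as a reference to something that is not there.
        if schema == "disbursement.v1":
            ref = att["data"]["allocation_id"]
            parent = self.accepted.get(ref)
            if parent is None or parent["schema"] != "allocation.v1":
                self.flags.append((att_id, f"no prior allocation {ref!r}"))
                return False
        self.accepted[att_id] = att
        return True


rail = Rail()
rail.ingest("a1", {"schema": "allocation.v1",
                   "data": {"project": "bridge-42", "amount": 1_000_000}})          # True
rail.ingest("d1", {"schema": "disbursement.v1",
                   "data": {"project": "bridge-42", "amount": 250_000, "allocation_id": "a1"}})  # True
rail.ingest("d2", {"schema": "disbursement.v1",
                   "data": {"project": "bridge-42", "amount": 90_000, "allocation_id": "a7"}})   # False
rail.ingest("d3", {"schema": "disbursement.v1",
                   "data": {"project": "bridge-42"}})                                # False
print(rail.flags)
```

The flags exist the instant the bad claim is submitted, which is the contrast with waiting for an audit to surface the same inconsistency months later.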

What I find interesting is how this scales.

Most public systems struggle as they grow because:

reporting becomes heavier

audits become slower

trust becomes harder to maintain

A public rail flips that.

The more activity there is, the more attestations exist.

And the more material there is to verify.

Scale doesn’t reduce transparency.

It increases the surface area of verification.

This doesn’t mean everything should be public.

Some data still needs to stay confidential.

But what belongs on the public rail becomes clear.

Not raw data.

Not private details.

But claims that affect public outcomes.

So when I think about public infrastructure now, I don’t think about dashboards or reports.

I think about whether the system exposes its claims in a way that anyone can verify.

Because if it doesn’t, transparency is still just a layer.

Not the foundation.

SIGN’s public rail feels like it treats transparency as something you build from the start.

Not something you add later.

And once you see it that way, it’s hard to go back to systems where visibility depends on permission or timing.

Because those systems aren’t really transparent.

They’re just selective about what they show.