I have been thinking about the application discovery problem on Midnight for the past few days, and I cannot shake the feeling that it sits so far outside the normal blockchain conversation that the people building the ecosystem have not fully confronted what it means for user adoption. I think when it finally becomes visible it is going to feel like a wall that nobody saw coming 😂

let me explain why this one caught my attention because it starts from a place that sounds almost too simple to be interesting and then gets genuinely complicated very quickly.

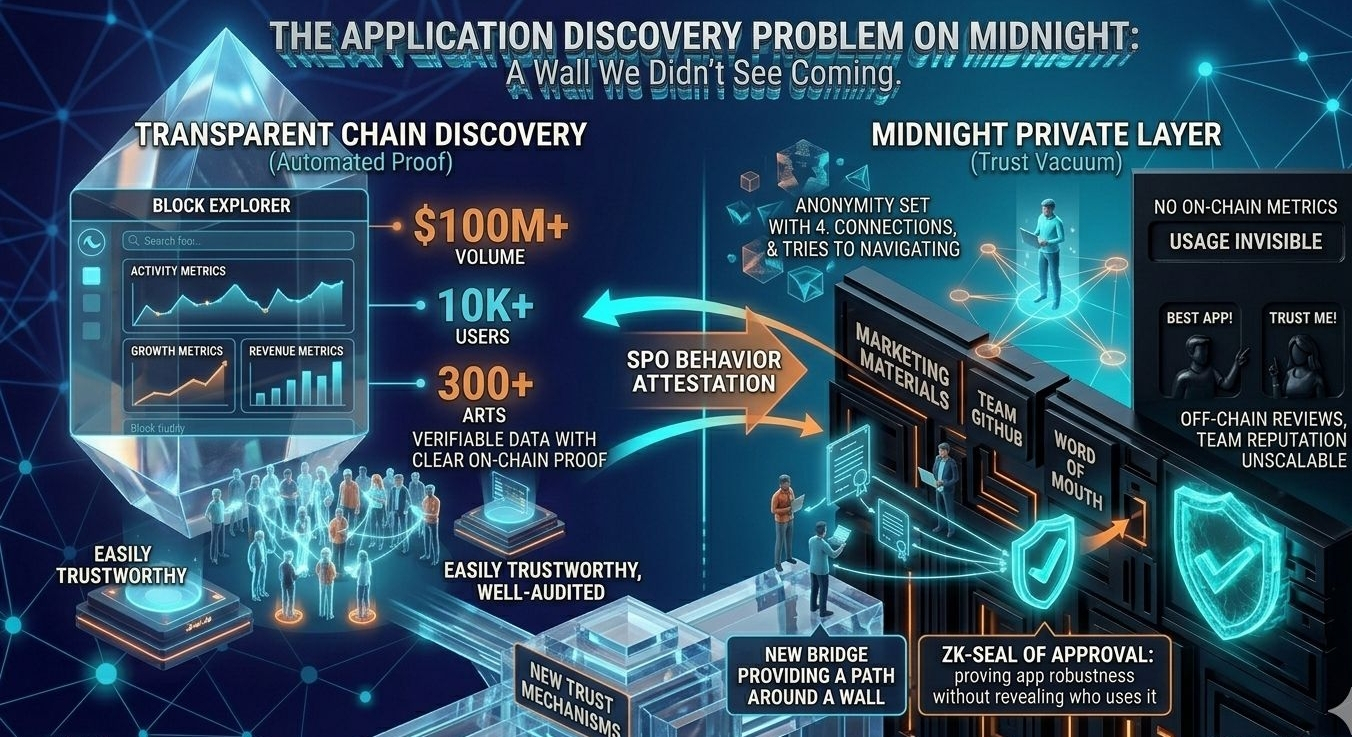

on a transparent blockchain discovering applications is straightforward. you go to a block explorer. you see which contracts are getting the most transactions. you see which applications are growing. you see on-chain activity metrics that tell you which protocols are live, which are being used, which are attracting capital, which are dying. the entire competitive landscape of the application ecosystem is visible to anyone who knows how to read the data.

that transparency serves a function that nobody explicitly designed it for but that turns out to be enormously valuable. it creates a discovery layer. users looking for applications can see which ones are actually being used by real people. developers evaluating whether to build can see which categories of application are underserved. investors looking for opportunities can see which protocols are gaining traction. journalists and researchers can report on ecosystem growth. the transparency of the chain is itself a distribution mechanism for applications.

Midnight's private layer destroys that discovery layer completely.

and I mean completely. not partially. not for some categories of information but not others. completely.

an application operating on Midnight's shielded layer produces almost no observable on-chain signal of its activity. the number of users it has is invisible. the volume of transactions it processes is invisible. the growth rate of its user base is invisible. whether it is being used by one person or ten thousand is invisible. whether it launched last week or has been running for two years is essentially indistinguishable from the outside.

the private application exists. the evidence that it is being used does not.

now think about what that means from a user's perspective trying to navigate the Midnight application ecosystem.

how do you decide which private application to trust with your sensitive data?

on a transparent chain you look at the on-chain metrics. this protocol has processed five billion dollars in volume over two years with no security incidents. that track record is visible and verifiable. the chain itself is the trust signal.

on Midnight the chain cannot provide that signal. the application's usage history is private. its transaction volume is private. its user retention is private. the evidence that would normally build trust in a financial or privacy-sensitive application is exactly the evidence that the privacy model is designed to suppress.

so what does trust building actually look like for a Midnight application?

the first answer most people reach for is reputation. the development team has a public reputation. they have published their code. they have a history in the ecosystem. you trust the application because you trust the people who built it.

that works for early adopters in the technical community who know the developers personally or by reputation. it does not scale. the vast majority of users who will eventually use Midnight applications are not going to research the development team's GitHub history before deciding whether to trust an application with their private medical data or their private financial records. they need trust signals that are accessible without technical expertise and legible without industry context.

on a transparent chain those accessible legible trust signals come from on-chain metrics. usage volume. longevity. absence of exploits. these are things that non-technical users can understand and act on even without knowing anything about the technical design of the application.

on Midnight none of those signals exist for private applications.

the second answer people reach for is audits. third party security audits of the application code. if a reputable auditing firm has reviewed the code and found no critical issues that is a meaningful trust signal that does not depend on on-chain transparency.

audits are real and they matter. but they have important limitations as a primary trust mechanism for a broad user base.

first, audits are expensive. not every legitimate application can afford a comprehensive audit from a top-tier firm, especially in the early stages of development. the barrier to getting a credible audit creates a cost advantage for well-capitalized applications and a disadvantage for smaller developers with good ideas but limited resources.

second, audits certify code at a point in time. they do not provide ongoing assurance that the application is behaving as intended. an application that passed an audit two years ago and has since been updated multiple times is not the same as an application that passed an audit last week.

third, and most importantly, audits assess whether the code does what it claims to do. they do not assess whether what the code claims to do is actually what users need it to do. an application can be technically correct and still subtly undermine the privacy guarantee it claims to offer, through architectural choices that no audit would flag as incorrect.

the third answer is community reputation. word of mouth within the ecosystem. social proof from trusted voices who have evaluated and recommended specific applications.

community reputation works in tight-knit communities where trust networks are dense and information flows quickly. it breaks down as the community grows and as the signal-to-noise ratio in community channels degrades. in a large ecosystem with many applications and many voices community reputation becomes difficult to aggregate reliably and easy to manipulate through coordinated promotion.

and here is the specific failure mode I am most worried about.

the absence of on-chain discovery signals creates a vacuum. and vacuums get filled. the vacuum that Midnight's private layer creates in the application discovery space will get filled by off-chain marketing. by the applications that can spend the most on visibility rather than the ones with the most genuine usage. by influencer promotion and paid content and manufactured social proof that mimics organic trust signals without the underlying substance.

on a transparent chain marketing has to compete with on-chain reality. you can spend heavily on promotion but users can check the metrics and see whether the usage matches the hype. the chain is an accountability mechanism for claims about application quality and adoption.

on Midnight's private layer there is no on-chain accountability mechanism for marketing claims about private application usage. an application's claim to have thousands of active users cannot be verified or falsified by looking at the chain. the privacy design that protects legitimate users also protects misleading claims about application adoption.

that asymmetry between marketing claims and verifiable reality is not a small problem. it is a fundamental feature of how information works in private systems and it has serious consequences for how the competitive dynamics of the application ecosystem develop.

the applications that win market share in the early Midnight ecosystem will be the ones with the best marketing and the most trusted brand presence, not necessarily the ones with the best design or the most genuine usage. that is true to some extent in every market, but it is much more true in markets where on-chain verification of usage claims is impossible.

I keep thinking about what legitimate infrastructure for private application discovery could actually look like.

one direction is voluntary transparency proofs. applications that want to build trust publish ZK proofs of their aggregate usage metrics. not the individual transaction data. not the private user information. just provable aggregate facts. this application has served more than ten thousand unique users. this application has been running continuously for more than eighteen months. this application's transaction volume has grown at more than twenty percent month over month for the past six months.

those aggregate facts can be proven with ZK proofs without revealing anything about individual users. an application that publishes credible aggregate proofs of its usage is providing trust signals that users can act on without compromising the privacy of any individual.
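to make the shape of that concrete, here is a rough Python sketch of what an application would publish versus what it would keep private. everything in it is my own illustration: `AggregateClaim`, `claim_holds` and `public_view` are hypothetical names, not Midnight APIs, and the predicate runs in the clear here, where a real deployment would enforce it inside a ZK circuit and attach a proof.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass(frozen=True)
class AggregateClaim:
    """Public statement of the form 'metric > threshold' (hypothetical shape)."""
    app_id: str
    metric: str      # e.g. "unique_users" or "months_live"
    threshold: int   # the claim is strictly "more than" this number


def claim_holds(claim: AggregateClaim, private_metrics: Dict[str, int]) -> bool:
    """The predicate a ZK circuit would enforce.

    Assumption: in this sketch it is evaluated in plain Python on the
    application's side. No proof is generated here.
    """
    return private_metrics[claim.metric] > claim.threshold


def public_view(claim: AggregateClaim, holds: bool) -> Dict[str, object]:
    """What an outside user sees: the statement and whether it holds,
    never the exact private value behind it."""
    return {
        "app_id": claim.app_id,
        "statement": f"{claim.metric} > {claim.threshold}",
        "holds": holds,
    }


if __name__ == "__main__":
    # Private numbers that never leave the application (made-up values).
    private_metrics = {"unique_users": 12_480, "months_live": 19}

    claim = AggregateClaim("example-app", "unique_users", 10_000)
    print(public_view(claim, claim_holds(claim, private_metrics)))
```

the point of the split is that the exact count stays inside `private_metrics` and only the threshold statement is published.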

the complication is that voluntary transparency proofs are voluntary. applications with strong genuine metrics have every incentive to publish them. applications with weak metrics have no incentive to publish them and may prefer to remain opaque. so the applications that publish voluntary proofs are not a random sample of all applications. they are a selection of the applications with the best metrics. that looks like a useful signal, and the absence of a voluntary proof is itself informative. but a proof only shows that a metric crosses a threshold, not that the activity behind it is genuine, and sophisticated bad actors will find ways to generate plausible-looking proofs of metrics that do not reflect genuine usage.

another direction is ecosystem-level reputation infrastructure. a decentralized system where users can attest to their experience with specific applications in a way that is aggregated and made public without revealing individual identities. a privacy-preserving review system where the aggregate quality signal is public even though each individual reviewer is anonymous.

that is technically achievable. it is also a governance challenge and an incentive design challenge. who runs the reputation infrastructure? how are gaming attempts detected and filtered? how do you prevent coordinated manipulation of reputation scores? these are not unsolvable problems, but they require investment and careful design that goes beyond what any individual application developer can provide on their own.
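a minimal sketch of the anonymous-review idea, assuming each reviewer derives a per-application tag (often called a nullifier) from a secret only they hold. the aggregator sees tags and ratings, never identities, and counts each tag once. the function names and the 1 to 5 rating scale are assumptions of mine, and the sketch skips the hard part: a real system would also need a proof that the tag belongs to someone who actually used the application.

```python
import hashlib
from statistics import mean
from typing import Dict, List, Tuple


def nullifier(reviewer_secret: bytes, app_id: str) -> str:
    """Deterministic anonymous tag: same reviewer + same app -> same tag.

    Assumption: the secret never leaves the reviewer, so the tag cannot be
    linked back to them, but a second review from them is detectable.
    """
    return hashlib.sha256(reviewer_secret + b"|" + app_id.encode()).hexdigest()


def aggregate(reviews: List[Tuple[str, int]]) -> Dict[str, float]:
    """Publish only the count and mean rating.

    Keeps the first valid rating per tag and ignores repeats, so one
    reviewer cannot stuff the score for a given application.
    """
    first_rating: Dict[str, int] = {}
    for tag, rating in reviews:
        if tag not in first_rating and 1 <= rating <= 5:
            first_rating[tag] = rating
    if not first_rating:
        return {"count": 0, "mean": 0.0}
    return {"count": len(first_rating), "mean": float(mean(first_rating.values()))}


if __name__ == "__main__":
    app = "example-app"
    alice = nullifier(b"alice-secret", app)
    bob = nullifier(b"bob-secret", app)

    # Alice submits twice; only her first rating is counted.
    print(aggregate([(alice, 5), (bob, 3), (alice, 1)]))
```

this only handles double voting by one reviewer. it does nothing about one person holding many secrets, which is exactly the coordinated manipulation problem described above.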

the third direction is something I find genuinely interesting and that I have not seen discussed anywhere. it is the idea of application behavior attestation through stake pool operators (SPOs).

SPOs process transactions. they can observe certain things about transaction patterns at the block level without seeing transaction content. they know how many transactions were processed in each block. they know the timing distribution. they know the fee distribution. they know which applications received transactions and when.

an SPO that has been processing Midnight blocks for two years has observed the behavioral history of every application on the network at the block level. not the content. but the pattern. that behavioral observation could potentially be aggregated into a trust signal that is rooted in the direct observation of network participants rather than in self-reported metrics or third-party audits.

SPO-attested application behavior metrics would be hard to fake because faking them would require convincing multiple independent SPOs to attest to false information. the decentralized nature of the attestation base is itself a trustworthiness property.
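a small sketch of why that property holds, assuming each SPO independently reports a transaction count for one application over some period. the report format, the `attested_value` name and the quorum rule are all hypothetical. I am not describing an existing Midnight feature. taking the median means a minority of false reports cannot move the published number.

```python
from statistics import median
from typing import Dict, Optional


def attested_value(reports: Dict[str, int], quorum: int) -> Optional[int]:
    """Combine per-SPO reports into one published figure.

    reports maps a (hypothetical) SPO id to the transaction count it
    observed for one application. Returns None when fewer than `quorum`
    SPOs reported, otherwise the median of the reports. With an even
    number of reports the median averages the two middle values, so the
    result is truncated to an int.
    """
    if len(reports) < quorum:
        return None
    return int(median(reports.values()))


if __name__ == "__main__":
    reports = {
        "spo_a": 1200,
        "spo_b": 1200,
        "spo_c": 1200,
        "spo_d": 900_000,  # colluding with the application
        "spo_e": 900_000,  # colluding with the application
    }
    # Two of five SPOs inflate the number; the median ignores them.
    print(attested_value(reports, quorum=4))
```

the number only moves once a majority of attesting SPOs report the false value, which is the sense in which the decentralized base is doing the work.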

the complication is that SPO-level observation of application transaction patterns is the same metadata that I was worried about as a privacy attack vector in previous analyses. information that is useful for building application trust signals is also information that is useful for inference attacks against private state. the same data serving two completely different purposes with completely different implications depending on who is using it and why.

that tension does not have a clean resolution. every mechanism for building application trust in a private ecosystem creates some information that could theoretically be used to reduce privacy. the design question is whether the trust benefit is worth the inference cost and how to structure the mechanism to maximize the trust value while minimizing the privacy leak.

I want to be direct about something here because I think it is the most practically urgent point in all of this.

the application discovery problem is not a problem that solves itself as the ecosystem matures. in transparent chain ecosystems discovery infrastructure emerged organically because the underlying data was publicly available and entrepreneurs built tools on top of it. block explorers, analytics platforms, portfolio trackers, yield aggregators that compare returns across protocols — all of this infrastructure built itself because the data was there to build on.

on Midnight's private layer the data is not there. the infrastructure cannot build itself from available data because the relevant data is exactly what the privacy design suppresses. discovery infrastructure for the private layer has to be deliberately built by people who understand both the privacy constraints and the user trust requirements and who are willing to invest in a problem that is not as exciting as the cryptographic architecture but is just as important for whether ordinary users can actually navigate the ecosystem safely.

without that deliberate investment the Midnight application ecosystem faces a trust vacuum that will be filled by marketing rather than merit. the applications that win will be the ones with the most visibility not the ones with the most genuine value. and the users who most need the privacy guarantee — the ones trusting private applications with their most sensitive information — will have no reliable way to distinguish the applications worth trusting from the ones that are not.

the zero knowledge proofs protect the data inside the applications.

they cannot protect users from choosing the wrong application in the first place.

and right now the Midnight ecosystem does not have the infrastructure to help users make that choice well. 🤔