Practical privacy is one of the most important pieces of digital infrastructure.

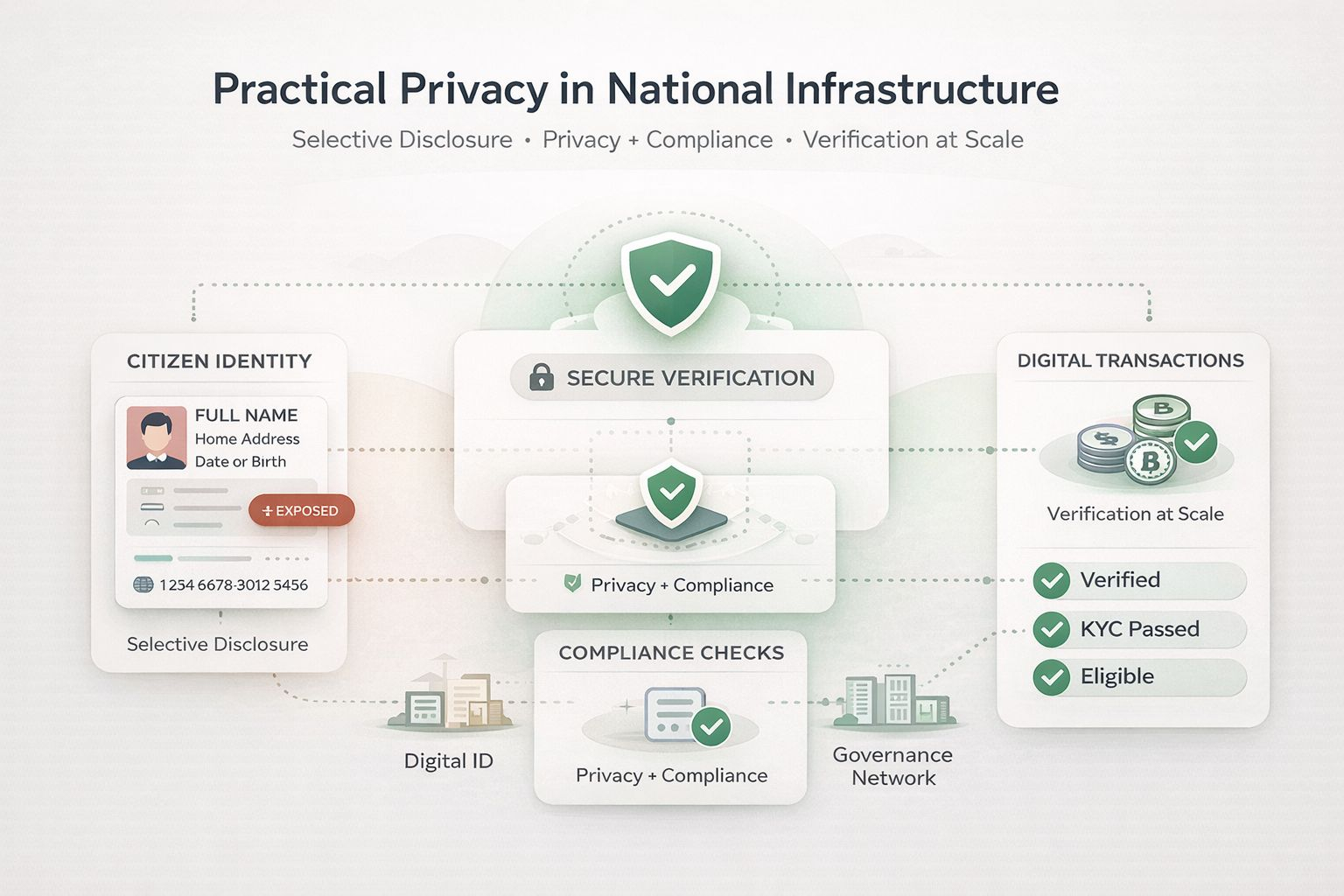

Many verification systems still work in a clumsy way. To prove one thing, such as being over 18, people often end up revealing much more than necessary, like a full ID document. That creates friction for users and weakens trust in systems that are supposed to serve people at scale.

That is why $SIGN feels interesting. The core idea is simple: verification should not require full exposure. A person should be able to prove identity, eligibility, or compliance without revealing every extra detail associated with their profile.

That matters even more when the conversation moves beyond apps and into national-scale digital systems. If digital identity, regulated transactions, and compliance checks are going to become part of daily infrastructure, then privacy cannot be treated like an afterthought. It has to be built into the verification layer itself.

What makes this compelling is the balance. Not privacy instead of compliance, but privacy with compliance. Not opacity, but selective disclosure with trust, usability, and accountability still intact.
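
To make selective disclosure concrete, here is a minimal Python sketch using salted hashes. It illustrates the general technique only and is not a description of $SIGN's actual protocol: the function names (`issue`, `present`, `verify`), the sample claims, and the shared `ISSUER_KEY` are all assumptions made up for this example. A real system would most likely use an asymmetric issuer signature, and possibly zero-knowledge proofs, instead of a shared HMAC key.

```python
import hashlib
import hmac
import json
import secrets

# Hypothetical issuer secret. Assumption for brevity: a shared HMAC key stands in
# for the asymmetric signature a real issuer would use.
ISSUER_KEY = secrets.token_bytes(32)


def _digest(salt, name, value):
    """Salted hash of one claim; the random salt prevents guessing hidden values."""
    payload = json.dumps([salt, name, value], separators=(",", ":")).encode()
    return hashlib.sha256(payload).hexdigest()


def _sign(digests):
    """Issuer tag over the full list of claim digests."""
    return hmac.new(ISSUER_KEY, json.dumps(digests).encode(), hashlib.sha256).hexdigest()


def issue(claims):
    """Issuer: commit to every claim, sign the commitments, give salts to the holder."""
    salts = {name: secrets.token_hex(16) for name in claims}
    # Sorting hides which digest belongs to which claim.
    digests = sorted(_digest(salts[n], n, v) for n, v in claims.items())
    credential = {"digests": digests, "signature": _sign(digests)}
    return credential, salts


def present(credential, claims, salts, reveal):
    """Holder: disclose only the chosen claims, each with its salt."""
    disclosures = [{"salt": salts[n], "name": n, "value": claims[n]} for n in reveal]
    return {"credential": credential, "disclosures": disclosures}


def verify(presentation):
    """Verifier: check the issuer tag, then check each disclosed claim against it.

    Returns the verified claims, or None if anything fails to match.
    """
    cred = presentation["credential"]
    if not hmac.compare_digest(_sign(cred["digests"]), cred["signature"]):
        return None
    verified = {}
    for d in presentation["disclosures"]:
        if _digest(d["salt"], d["name"], d["value"]) not in cred["digests"]:
            return None
        verified[d["name"]] = d["value"]
    return verified


# Sample data, invented for illustration.
claims = {"name": "Alice Example", "birth_year": 1990, "over_18": True, "country": "NL"}
credential, salts = issue(claims)
presentation = present(credential, claims, salts, reveal=["over_18"])
print(verify(presentation))  # {'over_18': True}
```

In this sketch the verifier learns that `over_18` is true and that the issuer vouched for it, while the name, birth year, and country stay hidden behind salted digests. It does still reveal how many claims the credential contains, which is one of the trade-offs a production design would have to weigh.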

To me, that is what practical infrastructure looks like.

How much personal data should a verifier actually need?