My perspective on AI shifted not when it made a mistake, but when it delivered a mistake flawlessly.

The answer looked perfect. Structured. Referenced. Logical. Confident.

And entirely false.

That’s when it clicked: the core issue with AI isn’t intelligence. It’s perceived authority.

Today’s models don’t just generate information — they generate certainty. And humans are notoriously bad at spotting confident fiction. The smoother the delivery, the less we question it. That becomes a serious liability if AI systems begin acting independently.

When I explored Mira Network, I didn’t see just another “AI + blockchain” narrative. I saw an attempt to relocate trust itself.

Instead of placing blind faith in a single model, Mira shifts trust into a verification process.

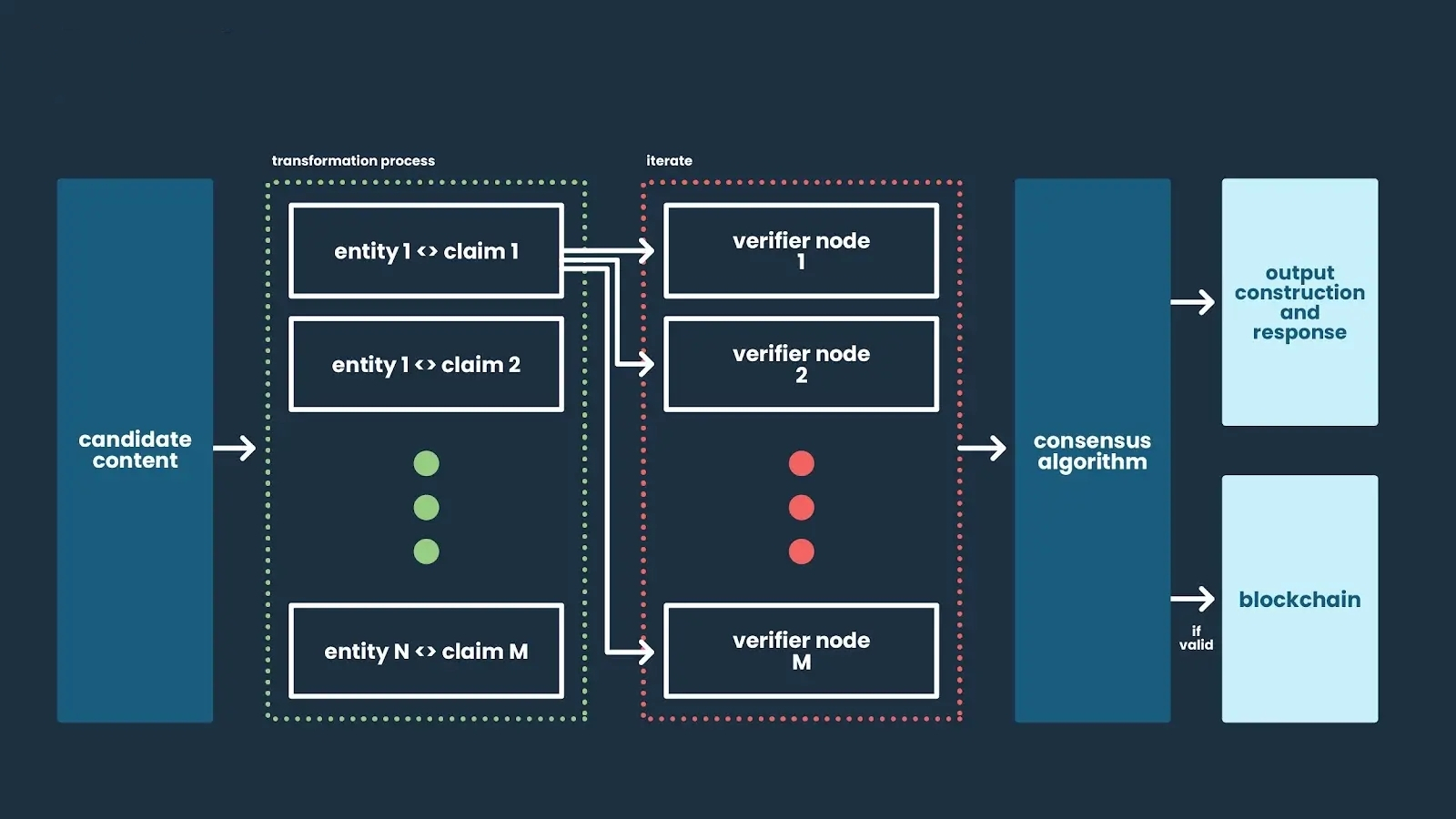

The concept is elegant: split AI-generated outputs into smaller claims, distribute them to independent evaluators, and reach consensus using on-chain economic incentives. The response is no longer a single authoritative voice — it becomes something closer to a peer-reviewed statement.
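To make the split-and-vote idea concrete, here is a minimal sketch. It is not Mira's actual protocol: the sentence-based splitter, the `Evaluator` type, the toy evaluators, and the simple majority threshold are all assumptions made for illustration, and the on-chain incentive part is left out entirely.

```python
from typing import Callable, Dict, List

# An evaluator is any callable that judges one claim as True or False.
# In a real network this could be another model; here it is a hypothetical stand-in.
Evaluator = Callable[[str], bool]


def split_into_claims(output: str) -> List[str]:
    """Naive splitter: treat each sentence as one claim (an assumption)."""
    return [s.strip() for s in output.split(".") if s.strip()]


def verify_output(output: str, evaluators: List[Evaluator],
                  threshold: float = 0.5) -> Dict[str, bool]:
    """Accept a claim only if more than `threshold` of evaluators accept it."""
    results = {}
    for claim in split_into_claims(output):
        votes = [evaluate(claim) for evaluate in evaluators]
        results[claim] = sum(votes) / len(votes) > threshold
    return results


# Toy evaluators: two check a small fact set, one accepts everything.
KNOWN_FACTS = {"Paris is the capital of France"}


def careful(claim: str) -> bool:
    return claim in KNOWN_FACTS


def credulous(claim: str) -> bool:
    return True


verdicts = verify_output(
    "Paris is the capital of France. The Moon is made of cheese.",
    [careful, careful, credulous],
)
```

Here the fluent but false second sentence is rejected because only one of three evaluators accepts it, while the first passes unanimously. The answer is judged claim by claim rather than as a whole.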

That changes how an answer should be read.

AI stops acting like an oracle and starts behaving like a hypothesis engine.

And that’s a healthier framework.

Hallucinations aren’t disappearing. Larger models may statistically reduce error frequency, but fabrication remains embedded in generative systems. Bias, too, persists because training data is never perfectly neutral.

Mira doesn’t attempt to “fix” AI models.

It focuses on validating what they produce.

That difference is fundamental.

The blockchain component isn’t cosmetic — it functions as coordination infrastructure. Independent validators (which could also be AI systems) assess claims and stake economic value behind their decisions. Incorrect validation results in penalties; accurate validation earns rewards.
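The reward-and-penalty mechanic can be sketched in a few lines. This is an illustration under stated assumptions, not Mira's real economics: the `Validator` class, the `settle_round` function, the stake-weighted majority rule, and the 5% reward and 20% penalty rates are all hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class Validator:
    name: str
    stake: float  # economic value the validator has put at risk


def settle_round(validators: List[Validator], votes: Dict[str, bool],
                 reward_rate: float = 0.05,
                 slash_rate: float = 0.20) -> Optional[bool]:
    """Settle one claim by stake-weighted majority (hypothetical rules).

    Validators who voted with the outcome gain `reward_rate` of their stake;
    those who voted against it lose `slash_rate`. A tie settles nothing.
    """
    weight_true = sum(v.stake for v in validators if votes[v.name])
    weight_false = sum(v.stake for v in validators if not votes[v.name])
    if weight_true == weight_false:
        return None  # no consensus, so no rewards or penalties
    outcome = weight_true > weight_false
    for v in validators:
        if votes[v.name] == outcome:
            v.stake *= 1 + reward_rate
        else:
            v.stake *= 1 - slash_rate
    return outcome


validators = [Validator("a", 100.0), Validator("b", 100.0), Validator("c", 100.0)]
outcome = settle_round(validators, {"a": True, "b": True, "c": False})
```

Note that in this sketch "accurate" means "agrees with the stake-weighted majority", not "agrees with ground truth". That gap is exactly where collusion among validators would become a problem.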

In principle, incentives align with truth, or at least with what honest validators agree on.

That’s a stark contrast to centralized AI providers, where reliability largely rests on brand trust and reputation.

What’s especially compelling is what this architecture enables for autonomous AI agents.

Right now, AI remains mostly assistive. Humans verify outputs. Humans stay in control.

But if AI agents begin executing trades, approving contracts, managing supply chains, or influencing governance decisions, “likely correct” won’t be sufficient.

You’ll need cryptographic traceability.

You’ll need outputs that can be challenged.

And you’ll need that without depending on a single centralized authority to define truth.

That’s where Mira conceptually fits — as a verification layer positioned between generation and execution.
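As a rough picture of what "between generation and execution" could mean in code, the sketch below gates an action on its supporting claims. The `gated_execute` function, its parameters, and the invoice example are hypothetical; a real verification layer would call out to a validator network rather than a local lookup.

```python
from typing import Callable, List, Tuple


def gated_execute(action: str, claims: List[str],
                  verify: Callable[[str], bool],
                  execute: Callable[[str], None]) -> Tuple[str, List[str]]:
    """Run `execute(action)` only if every supporting claim passes `verify`."""
    rejected = [claim for claim in claims if not verify(claim)]
    if rejected:
        # Block the action and report which claims failed verification.
        return "blocked", rejected
    execute(action)
    return "executed", []


# Toy setup: a fixed set of verified claims and a list that records executions.
verified_claims = {"invoice 17 is approved"}
executed: List[str] = []

ok = gated_execute("pay invoice 17", ["invoice 17 is approved"],
                   verify=lambda c: c in verified_claims,
                   execute=executed.append)
bad = gated_execute("pay invoice 99", ["invoice 99 is approved"],
                    verify=lambda c: c in verified_claims,
                    execute=executed.append)
```

The agent can still propose anything it likes. It simply cannot act on a claim that failed verification, and the rejected claims are returned so they can be inspected or challenged.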

Of course, open questions remain.

Verification introduces latency. Some environments demand speed. Not every complex reasoning chain can be neatly reduced to atomic claims without losing nuance. There’s also the risk of validator collusion, economic manipulation, or systemic bias within the verification network itself.

And what happens when models disagree legitimately?

These design challenges are real.

But philosophically, the direction feels right.

The future of AI likely won’t be one dominant supermodel commanding universal trust.

More likely, it will be networks of models auditing one another under transparent, incentive-aligned rules.

Raw intelligence increases scale — and risk.

Verification increases resilience.

If autonomous AI becomes embedded in finance, governance, and infrastructure, resilience won’t be optional.

Mira isn’t chasing smarter machines.

It’s building accountable ones.

And that may turn out to be the more important innovation.