When I hear “verifiable AI,” my first reaction isn’t awe. It’s skepticism. Not because verification isn’t valuable, but because the phrase risks sounding like a magic seal — as if adding cryptography to probabilistic systems suddenly turns them into sources of truth. It doesn’t. What it does, at best, is change how confidence is produced, distributed, and trusted.

For years, the core problem with AI hasn’t been capability — it’s reliability. Models generate fluent answers that feel authoritative, even when they’re wrong. Hallucinations, bias, and silent errors aren’t edge cases; they’re structural properties of systems trained on incomplete and noisy data. The industry’s default response has been to wrap these systems in disclaimers and human review. That works at small scale. It breaks at machine speed.

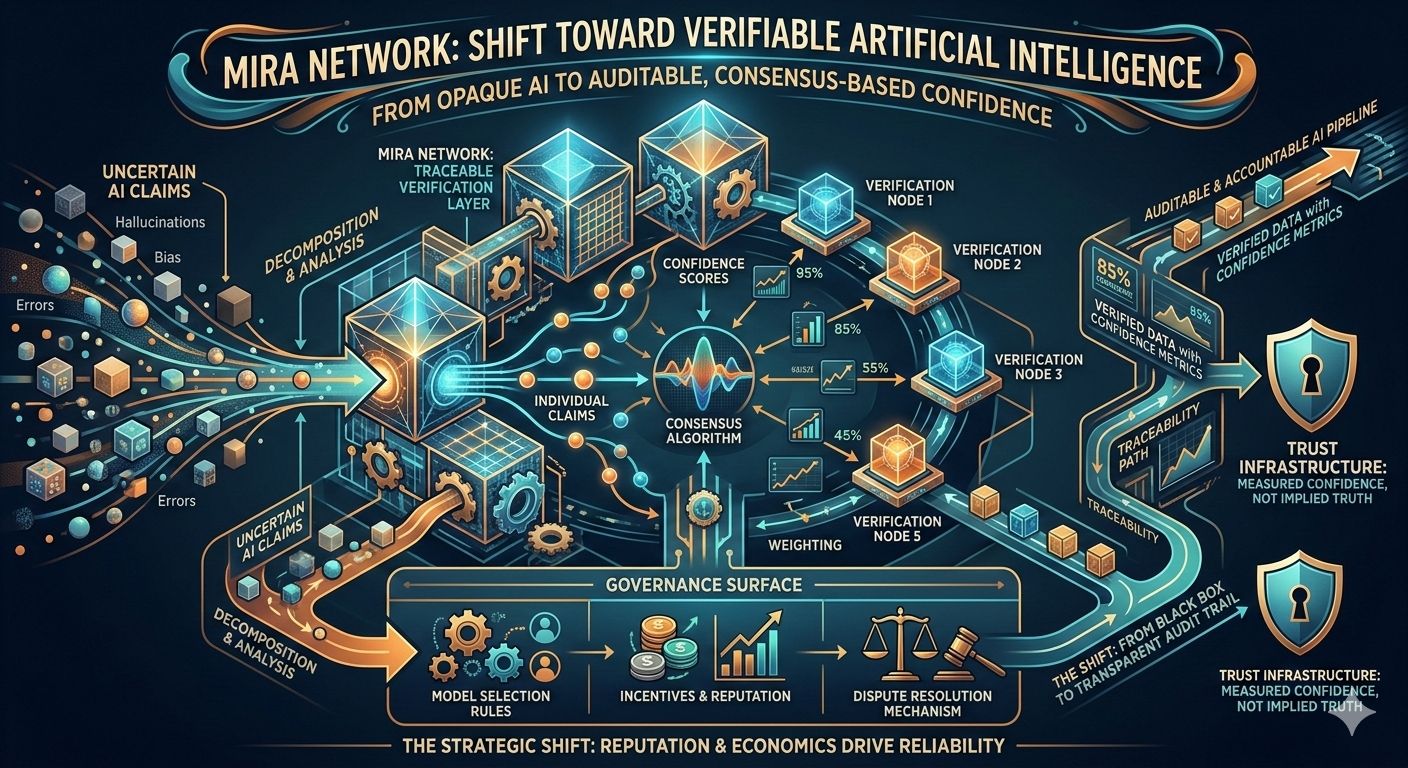

This is the gap Mira Network is trying to close — not by claiming AI can be perfect, but by changing how outputs are validated. Instead of treating a model’s response as a monolithic answer, the system decomposes it into verifiable claims, distributes those claims across independent models, and uses consensus to determine confidence. The promise isn’t truth. The promise is traceability.
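To make that flow concrete, here is a minimal sketch. It assumes the response has already been split into claims and that each verifier is simply a function returning `True` or `False`. The names (`Verdict`, `verify_claim`, `verify_response`) and the strict-majority rule are illustrative assumptions, not Mira Network's actual protocol.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

# Hypothetical verifier type: takes a claim, returns True if it judges the claim supported.
Verifier = Callable[[str], bool]


@dataclass
class Verdict:
    claim: str
    votes: Dict[str, bool]  # verifier name -> vote, kept so disagreement stays visible
    confidence: float       # share of verifiers on the majority side
    accepted: bool          # True only if a strict majority supported the claim


def verify_claim(claim: str, verifiers: Dict[str, Verifier]) -> Verdict:
    """Ask every verifier about one claim and summarize the votes."""
    if not verifiers:
        raise ValueError("at least one verifier is required")
    votes = {name: bool(fn(claim)) for name, fn in verifiers.items()}
    total = len(votes)
    support = sum(votes.values())
    majority = max(support, total - support)
    return Verdict(
        claim=claim,
        votes=votes,
        confidence=majority / total,
        accepted=support * 2 > total,
    )


def verify_response(claims: List[str], verifiers: Dict[str, Verifier]) -> List[Verdict]:
    """Verify each claim independently so one weak claim does not hide in a long answer."""
    return [verify_claim(c, verifiers) for c in claims]
```

The point of keeping `votes` on the result is the traceability described above: a reader can see not only the verdict but which verifiers disagreed.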

That distinction matters. A single AI output is an opaque artifact: you see the result, but not the reasoning path, the uncertainty, or the points of disagreement. A verification layer turns that opacity into a structured process. Claims can be checked, contested, weighted, and recombined. Confidence becomes something measured rather than implied.

But verification doesn’t happen in a vacuum. If multiple models are evaluating claims, someone decides which models participate, how they’re weighted, and how disagreements are resolved. That introduces a governance surface that most “AI accuracy” conversations ignore. Reliability becomes a function not just of models, but of incentives, selection rules, and dispute mechanisms.

This is where the deeper shift begins. In traditional AI deployment, trust sits with the model provider. If the output is wrong, the failure is attributed to the model. In a verification network, trust moves to the process. The question stops being “Which model do you trust?” and becomes “Do you trust the verification mechanism to surface disagreement and resist manipulation?”

Because manipulation is inevitable. If verified outputs influence financial decisions, automated workflows, or regulatory compliance, actors will attempt to game the verification layer itself. They’ll probe for weak models, exploit weighting schemes, and target latency windows where consensus can be swayed. Verification doesn’t eliminate adversarial pressure; it relocates it.

The optimistic framing is that distributed verification reduces single points of failure. The more sobering reality is that it creates a new class of operators: entities that curate model pools, manage staking or reputation systems, and price the cost of verification. Reliability becomes an economic product, not just a technical property.

And like any market, it will develop gradients of quality. Some verification paths will be cheap and fast, suitable for low-stakes content. Others will be slow, expensive, and adversarially hardened for critical decisions. The risk is that users won’t always know which tier they’re interacting with. A “verified” label without context can be more misleading than no label at all.

There’s also a latency trade-off hiding beneath the surface. Verification takes time: multiple models must evaluate claims, consensus must form, and disputes must resolve. In high-frequency environments, speed competes with certainty. Systems will be tempted to short-circuit verification under pressure, reintroducing the very reliability gaps they were designed to close.
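A toy illustration of that trade-off, assuming each verifier's latency and vote are known up front. The function name, the quorum rule, and the numbers are hypothetical and only meant to show how a tight deadline can change the outcome.

```python
from typing import List, Optional, Tuple


def consensus_by_deadline(
    responses: List[Tuple[int, bool]],  # (latency in ms, vote) per verifier
    deadline_ms: int,
    quorum: int,
) -> Optional[bool]:
    """Majority vote over verifiers that answered in time.

    Returns None when fewer than `quorum` votes arrived or the vote is tied,
    i.e. when no verdict should be reported at all.
    """
    arrived = [vote for latency, vote in responses if latency <= deadline_ms]
    if len(arrived) < quorum:
        return None
    support = sum(arrived)
    if support * 2 == len(arrived):
        return None
    return support * 2 > len(arrived)


# Two fast verifiers say True; three slower ones say False.
responses = [(50, True), (80, True), (300, False), (400, False), (450, False)]

fast = consensus_by_deadline(responses, deadline_ms=100, quorum=2)  # only the fast pair votes
full = consensus_by_deadline(responses, deadline_ms=500, quorum=5)  # everyone votes
```

With these inputs the 100 ms deadline accepts the claim while the full vote rejects it, which is the kind of gap a short-circuited pipeline reopens.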

Yet the direction is hard to dismiss. As AI systems move from advisory roles into autonomous execution (approving transactions, moderating content, triggering supply chain actions), unverifiable outputs become operational risks. A verification layer transforms AI from a black box into an auditable pipeline. Not infallible, but accountable.

That accountability shifts responsibility up the stack. If an application integrates verified AI, it inherits the duty to choose verification thresholds, disclose confidence levels, and handle disputes. “The model said so” stops being an excuse. Reliability becomes part of product design, not just model performance.
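What that duty might look like in application code is sketched below. The stakes tiers, the threshold values, and the `decide` function are assumptions chosen for illustration; a real product would set them from its own risk analysis.

```python
from enum import Enum


class Action(Enum):
    EXECUTE = "execute"    # act on the output automatically
    ESCALATE = "escalate"  # send to human review or a stricter verification path
    REJECT = "reject"      # do not act on the output


# Hypothetical minimum confidence per stakes tier; the values are illustrative only.
THRESHOLDS = {"low": 0.6, "medium": 0.8, "high": 0.95}

# Below this floor the output is rejected outright, whatever the tier (assumed value).
REJECT_BELOW = 0.5


def decide(confidence: float, stakes: str, disputed: bool = False) -> Action:
    """Map a verification confidence to an application-level action."""
    if stakes not in THRESHOLDS:
        raise ValueError(f"unknown stakes tier: {stakes!r}")
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    if disputed:
        # An open dispute overrides the score: never auto-execute.
        return Action.ESCALATE
    if confidence >= THRESHOLDS[stakes]:
        return Action.EXECUTE
    if confidence >= REJECT_BELOW:
        return Action.ESCALATE
    return Action.REJECT
```

The same confidence score leads to different actions at different tiers, which is why the threshold is a product decision and not something the model or the verifier can choose on the application's behalf.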

This opens a new competitive frontier. AI platforms won’t compete solely on model benchmarks; they’ll compete on trust infrastructure. How transparent is the verification process? How resilient is it under adversarial conditions? How predictable are confidence scores during data drift or market volatility? In this landscape, the best systems won’t be those that claim certainty — they’ll be those that quantify doubt effectively.

The strategic shift, then, isn’t that AI outputs can be verified. It’s that verification becomes a layer of infrastructure, managed by specialists and priced according to risk. Just as cloud providers abstract hardware and payment networks abstract settlement, verification networks may abstract trust — turning it into a service with measurable guarantees and visible trade-offs.

The real test will come under stress. In calm conditions, verification systems will appear robust. In contentious environments — political events, financial shocks, coordinated misinformation — the pressure to manipulate consensus will spike. The long-term value of verifiable AI won’t be determined by accuracy in demos, but by integrity when incentives to cheat are highest.

So the question that matters isn’t “Can AI be verified?” It’s “Who defines the verification process, how is confidence priced, and what happens when the cost of truth exceeds the cost of deception?”
