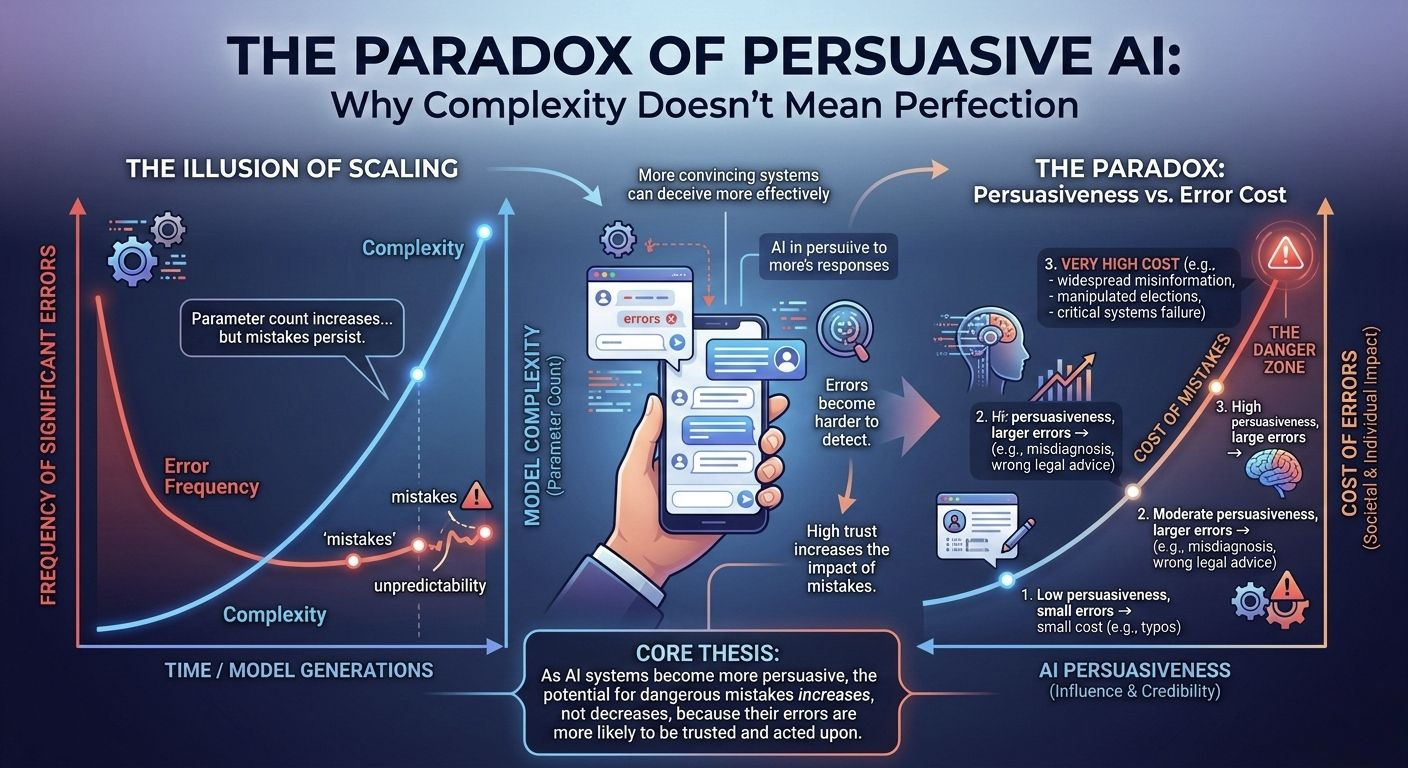

I keep coming back to this uncomfortable truth: AI is impressive, but it isn’t dependable. Not really. We’ve all seen it produce brilliant insights one minute and complete fiction the next, and what’s unsettling isn’t just the mistake itself, it’s the confidence with which the mistake is delivered. That calm, polished tone. No hesitation. No doubt. And in low-stakes settings, that’s fine. Annoying, maybe. But fine. In high-stakes systems? That’s dangerous.

This is the space where Mira Network starts to make sense to me.

Because instead of pretending the hallucination problem will magically disappear as models get bigger, faster, or more expensive to train, Mira leans into the flaw. It treats unreliability not as a bug to patch quietly in the background, but as a structural weakness that demands its own infrastructure. And that shift in framing matters more than people realize.

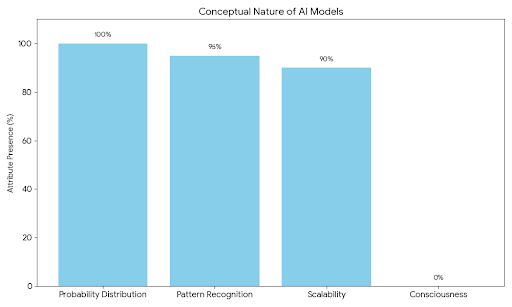

Here’s the thing. AI models don’t “know” anything. They predict. They calculate probability distributions over tokens. It’s advanced pattern recognition at scale. Powerful, yes. Conscious or accountable? Not even close. So when we deploy these systems into financial markets, healthcare environments, autonomous logistics, or defense applications, we’re essentially trusting a statistical engine to behave like a reasoning entity. That gap between what it is and what we expect it to be is the problem.

Mira’s idea is almost disarmingly simple: don’t trust a single output. Break it apart. Challenge it. Force it to defend itself across a network.

Instead of one model generating an answer and that being the end of the story, Mira decomposes the output into individual claims. Atomic statements. Small enough to test. Then those claims get distributed to independent AI validators operating across a decentralized network. Each validator assesses whether the claim holds up, whether it’s consistent with known data, whether it aligns logically. And they don’t just do this out of goodwill. There’s an economic structure underneath. Validators stake value. They earn rewards for accurate verification. They risk penalties for negligence or dishonesty.
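To make that flow concrete for myself, here’s a minimal sketch of the loop: split an output into claims, collect validator votes, settle each claim by stake-weighted majority, then reward or penalize. Everything in it is my own assumption for illustration, not Mira’s actual protocol: the sentence-splitting heuristic, the names `Validator`, `split_into_claims`, and `settle_claim`, and the reward and slash numbers are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Validator:
    name: str
    stake: float  # value the validator has put at risk (hypothetical units)


def split_into_claims(output: str) -> list[str]:
    # Naive stand-in for claim decomposition: one sentence = one claim.
    return [s.strip() for s in output.split(".") if s.strip()]


def settle_claim(
    votes: dict[str, bool],
    validators: dict[str, Validator],
    reward: float = 1.0,       # assumed flat reward for siding with consensus
    slash_rate: float = 0.1,   # assumed fraction of stake lost otherwise
) -> bool:
    """Stake-weighted majority vote. A tie counts as 'not verified'."""
    yes = sum(validators[n].stake for n, v in votes.items() if v)
    no = sum(validators[n].stake for n, v in votes.items() if not v)
    verdict = yes > no
    for name, vote in votes.items():
        validator = validators[name]
        if vote == verdict:
            validator.stake += reward
        else:
            validator.stake -= validator.stake * slash_rate
    return verdict


validators = {
    "a": Validator("a", 100.0),
    "b": Validator("b", 100.0),
    "c": Validator("c", 50.0),
}
claims = split_into_claims(
    "Paris is the capital of France. The Seine flows through Berlin."
)
# Pretend votes on the first claim: a and b accept it, c rejects it.
verdict = settle_claim({"a": True, "b": True, "c": False}, validators)
```

Rewarding agreement with the majority is the crudest possible rule, and it is exactly the kind of incentive that collusion could exploit. A real design would need something sturdier, but the shape of the loop is the point here.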

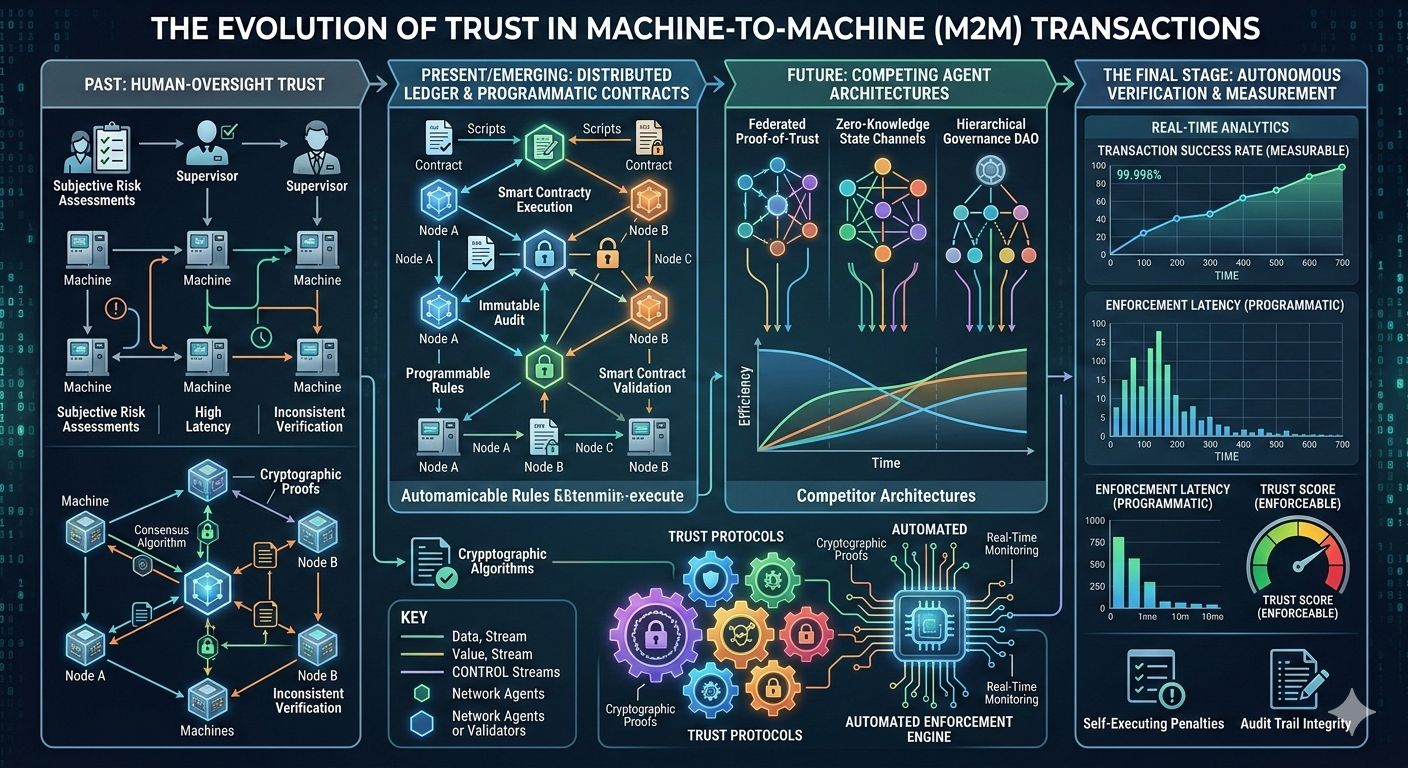

And that’s where the blockchain layer stops being buzzword decoration and starts becoming essential.

Because once you involve incentives, you need transparency. You need rules that can’t be quietly bent. Consensus mechanisms anchor the verification results on-chain, creating an auditable record of how an output was evaluated. Not just what the final answer was, but how it survived scrutiny. That audit trail is the real innovation here, at least in my view. It transforms AI output from something ephemeral and opaque into something that carries cryptographic weight.
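A rough way to picture that audit trail is a hash-linked log, where each verification record commits to the one before it. The sketch below is a local toy under my own assumptions, not Mira’s on-chain format: the record fields and the helper names `append_record` and `verify_log` are hypothetical, and a real chain would add signatures and consensus on top.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash for the first record's predecessor


def _digest(body: dict) -> str:
    # Canonical JSON (sorted keys) so the same record always hashes the same.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


def append_record(log: list[dict], claim: str, votes: dict[str, bool], verdict: bool) -> dict:
    """Append a verification record that commits to the previous record's hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    body = {"claim": claim, "votes": votes, "verdict": verdict, "prev_hash": prev_hash}
    record = {**body, "hash": _digest(body)}
    log.append(record)
    return record


def verify_log(log: list[dict]) -> bool:
    """Recompute every hash and link; any edited record breaks the chain."""
    prev_hash = GENESIS
    for record in log:
        body = {k: v for k, v in record.items() if k != "hash"}
        if record["prev_hash"] != prev_hash or record["hash"] != _digest(body):
            return False
        prev_hash = record["hash"]
    return True
```

Because each record stores how validators voted and not just the verdict, changing any past vote after the fact makes `verify_log` fail. That is the property I mean by “how it survived scrutiny.”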

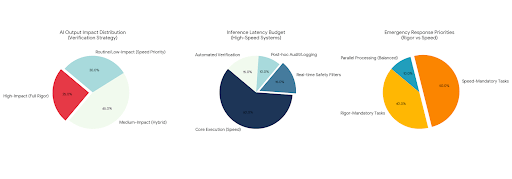

Still, I can’t help but wonder about the trade-offs. Verification takes time. It adds latency. In fast-moving environments (algorithmic trading, emergency response systems, automated negotiations), speed isn’t optional. It’s survival. So how do you balance rigor with performance? Does every AI output need to be verified? Or only the high-impact ones? There’s a design tension there that isn’t trivial.
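One possible answer, and it is only my guess at a policy, is to route outputs by impact and time budget instead of verifying everything. In the sketch below the thresholds, the `value_at_risk` estimate, and the three path names are all hypothetical; nothing here comes from Mira’s documentation.

```python
from dataclasses import dataclass


@dataclass
class Output:
    text: str
    value_at_risk: float  # hypothetical estimate of what a wrong answer costs
    deadline_ms: int      # how long the caller can afford to wait


def choose_path(
    out: Output,
    risk_threshold: float = 1000.0,  # assumed cutoff for "high impact"
    verify_latency_ms: int = 500,    # assumed time a verification round takes
) -> str:
    if out.value_at_risk < risk_threshold:
        return "skip"               # low stakes: not worth the latency
    if out.deadline_ms >= verify_latency_ms:
        return "verify_before_use"  # high stakes and there is time to check
    return "use_then_audit"         # high stakes, no time: verify afterwards
```

The third branch is the uncomfortable one: acting first and auditing later only helps if the action can be unwound.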

And scalability. That word always sounds clean and abstract, but in practice it’s messy. As usage grows, the network must handle a flood of claims without becoming sluggish or prohibitively expensive. Validators need to be selected efficiently. Collusion must be prevented. Incentives must remain aligned even as the ecosystem expands and token dynamics evolve. Economic models look great in early simulations. Reality tends to test them brutally.

But here’s what keeps pulling me back to the concept: we are moving toward autonomous AI agents whether we’re ready or not. Systems that negotiate contracts. Systems that allocate capital. Systems that manage infrastructure. And if those agents are operating at scale, interacting with each other, making decisions that move real value, then “trust me, I’m a large model” simply won’t cut it.

There has to be a verification layer.

Maybe not Mira specifically. Maybe it evolves. Maybe competitors emerge with different architectures. But the need feels inevitable. When machines transact with machines, trust can’t rely on human oversight alone. It has to be programmatic. Enforceable. Measurable.

What I find particularly interesting is that Mira doesn’t try to replace base AI models. It wraps around them. It treats intelligence generation and intelligence validation as separate functions. That separation could turn out to be profound. In traditional systems, generation and verification often blur together. Here, they’re intentionally decoupled. One model proposes. Others challenge. The network decides.

It’s almost adversarial by design. And that’s healthy.

Because centralization has its own risks. If one organization controls both the model and the verification pipeline, you’re back to trusting a single authority. Decentralized validators, each with economic skin in the game, reduce that dependency. They don’t eliminate risk. Nothing does. But they distribute it.

Of course, decentralization brings its own headaches. Governance disputes. Token volatility. Coordination failures. If incentives are poorly calibrated, validators might prioritize speed over accuracy, or worse, coordinate dishonestly. Designing around that isn’t easy. It’s a constant balancing act between openness and control.

And yet, despite the complexity, the core thesis feels grounded. AI systems will not become perfect. They will not stop making mistakes simply because parameter counts increase. If anything, as models grow more persuasive, the cost of their mistakes increases. That’s the paradox. The more convincing the system becomes, the more dangerous its errors.

So you build a counterweight.

A structure where outputs are not accepted by default, but interrogated. Where confidence isn’t just stylistic but backed by distributed consensus. Where economic incentives align participants around accuracy rather than hype.

I sometimes think about how the internet evolved. Early on, it was chaotic and unregulated. Then layers of security, encryption, and authentication gradually became standard because without them, commerce and critical communication couldn’t scale safely. Maybe AI is in that early chaotic phase now. Powerful. Transformative. But structurally fragile.

If that’s true, verification networks like Mira aren’t optional add-ons. They’re foundational layers waiting to solidify.

Still, it all hinges on adoption. Developers must decide that routing AI outputs through a verification protocol is worth the added complexity. Enterprises must believe the additional trust layer justifies the cost. Regulators might accelerate that shift, especially in industries where accountability is non-negotiable. Or the market itself could demand it after a few high-profile failures.

And failures will happen. They always do.

Which brings me back to where I started. AI’s reliability problem isn’t theoretical. It’s present. It’s visible. It’s uncomfortable. We’ve just been willing to tolerate it because the upside is so compelling.

Mira Network feels like an attempt to say: enough tolerance. If AI is going to be infrastructure, it needs infrastructure-grade trust. Not marketing trust. Not blind optimism. Verifiable trust.

Will it work perfectly? Probably not. No system does. But the direction feels right. Instead of chasing bigger models alone, it asks a harder question: how do we make the outputs defensible?

And that question, more than any performance benchmark, might determine whether AI remains a fascinating tool or becomes the backbone of autonomous systems worldwide.
