There’s something slightly unsettling about how fast robotics is advancing, and I don’t mean in the dramatic, science-fiction way people usually imagine. I mean in the quiet way. Warehouses already rely on autonomous systems. Delivery bots roll down sidewalks. Humanoid prototypes are learning balance and dexterity at a pace that would’ve sounded absurd ten years ago. And yet, most of the conversation still circles around performance specs and viral demo videos. Faster arms. Smarter vision. Better reinforcement learning. Very little attention goes to the underlying structure of control. Who owns these machines? Who verifies what they do? Who decides how they evolve?

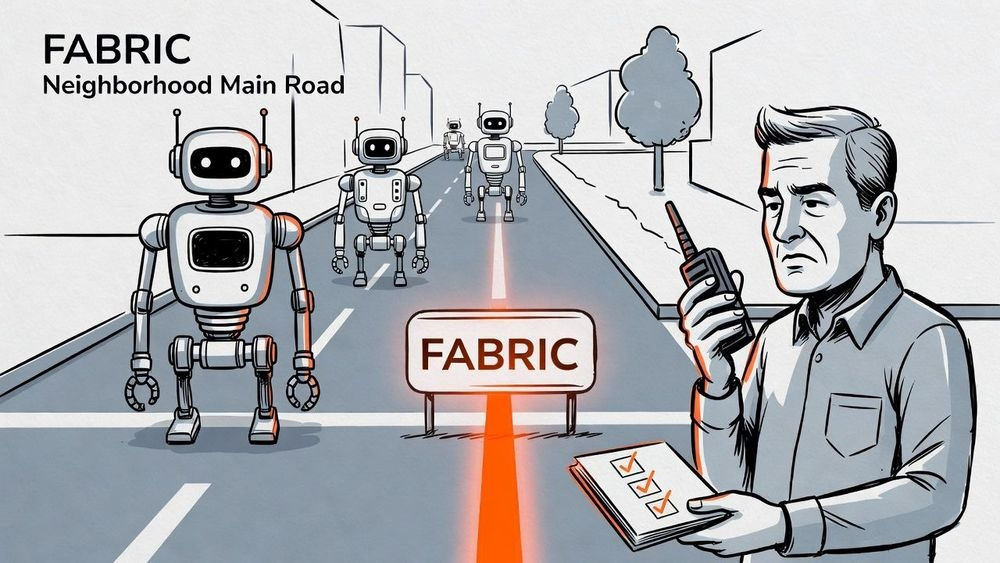

That’s where Fabric Protocol steps in, and the more I think about it, the more I realize it’s less about robots themselves and more about power. Because general-purpose robots (real ones, not just single-task industrial units) are going to be agents in our environment. They’ll make decisions in dynamic spaces. They’ll interact with people. They’ll adapt. And once you accept that premise, the next question becomes unavoidable: on whose terms?

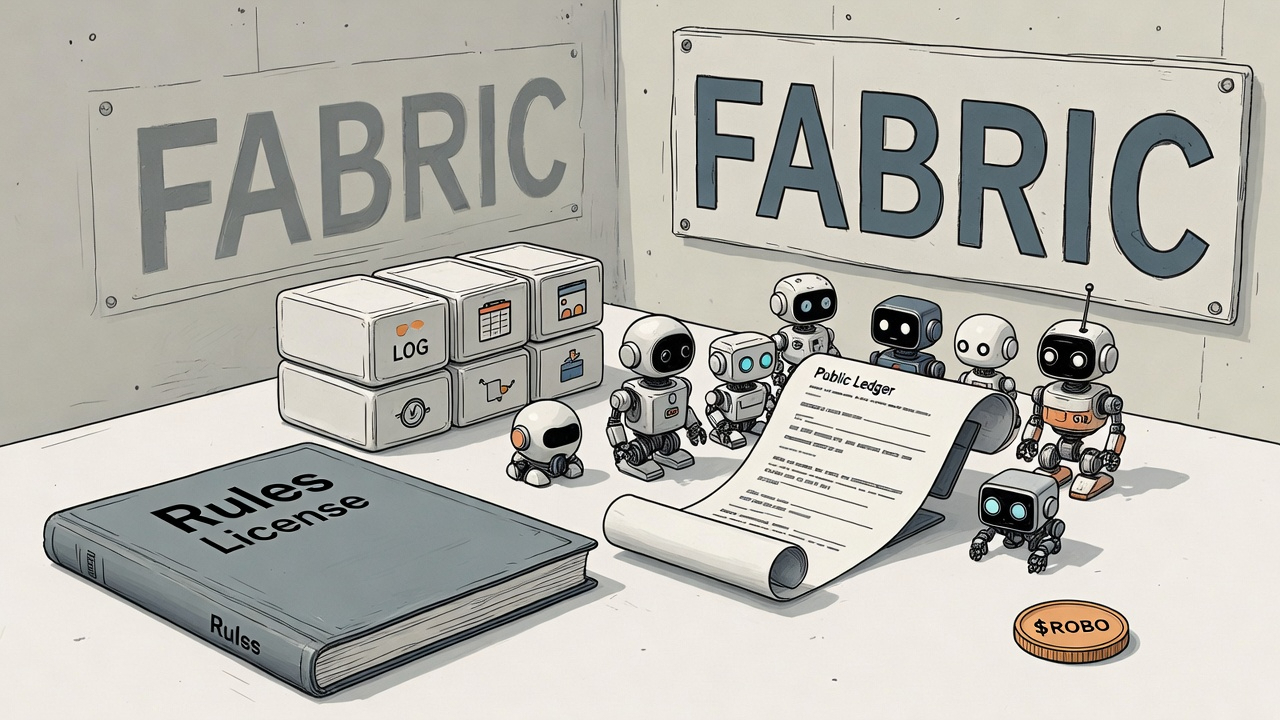

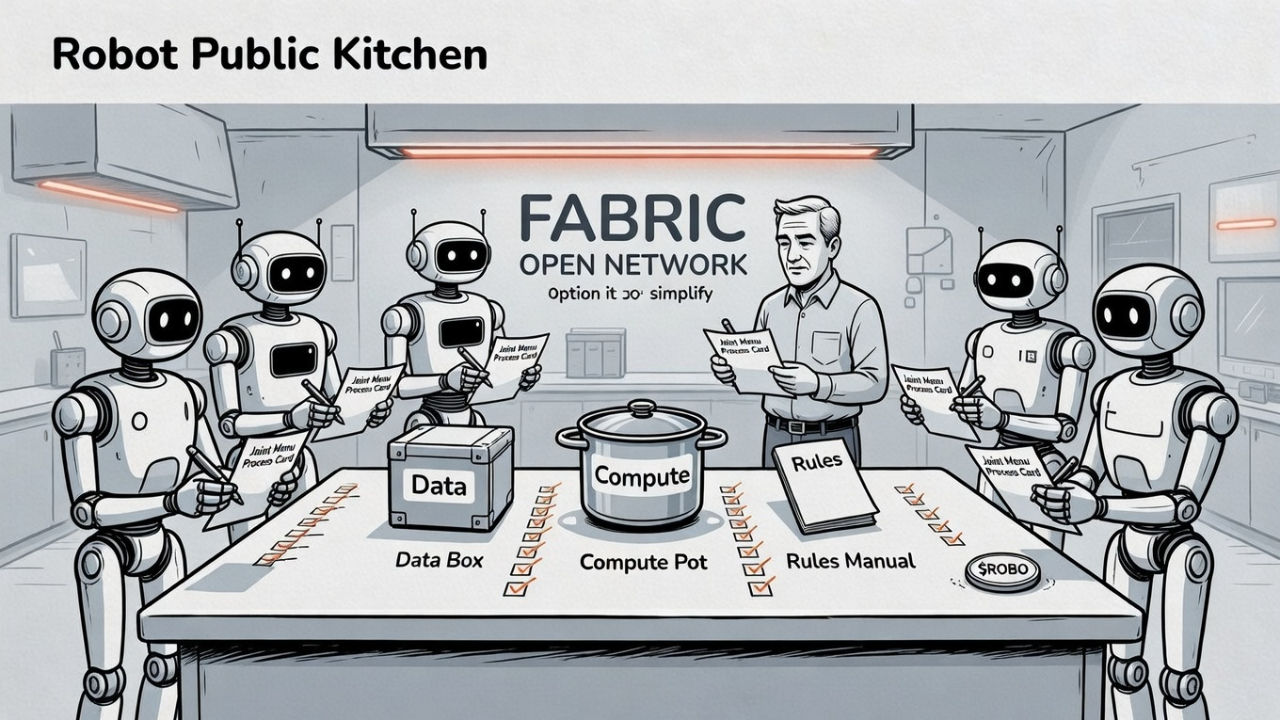

Fabric Protocol proposes something deceptively simple and wildly ambitious at the same time. Build an open, global network where robots aren’t just hardware products but participants in a verifiable system. Not owned outright by one corporation’s black box. Not governed entirely behind closed doors. Instead, coordinated through a public ledger that records data flows, computational proofs, governance rules. It sounds technical. It is technical. But the heart of it is philosophical.

Because here’s the thing: robots are no longer just tools. They’re becoming semi-autonomous actors. And actors without accountability structures are dangerous, even if unintentionally so. We’ve already seen what opaque algorithms can do in purely digital spaces: bias amplification, unpredictable failures, hidden manipulation. Now imagine that opacity moving into physical environments. Machines lifting heavy objects, navigating crowded areas, handling logistics chains, maybe even assisting in healthcare settings. The margin for error shrinks dramatically.

Fabric’s idea of verifiable computing hits differently when you frame it that way. It’s not about crypto hype or ledger maximalism. It’s about being able to prove what happened. If a robot made a decision, there’s a traceable computational record. If software was updated, it wasn’t a silent patch slipped into production without oversight. If governance rules change, those changes are transparent and collectively validated. In theory, anyway. Implementation is another beast entirely.
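To make "being able to prove what happened" a little more concrete, here is a minimal sketch of a tamper-evident record, assuming a simple SHA-256 hash chain. This is my own illustration, not Fabric's actual mechanism: the function names (`entry_hash`, `append`, `verify`) and the event fields are hypothetical, and a real system would add signatures and proofs of the computation itself.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry (assumption)

def entry_hash(prev_hash: str, payload: dict) -> str:
    """Hash the payload together with the previous entry's hash."""
    body = json.dumps(payload, sort_keys=True).encode()
    return hashlib.sha256(prev_hash.encode() + body).hexdigest()

def append(log: list, payload: dict) -> None:
    """Add a record that commits to everything recorded before it."""
    prev = log[-1]["hash"] if log else GENESIS
    log.append({"payload": payload, "prev": prev, "hash": entry_hash(prev, payload)})

def verify(log: list) -> bool:
    """Recompute every hash; any edited or reordered entry breaks the chain."""
    prev = GENESIS
    for entry in log:
        if entry["prev"] != prev or entry["hash"] != entry_hash(prev, entry["payload"]):
            return False
        prev = entry["hash"]
    return True

# Hypothetical events: one robot decision and one software update.
log = []
append(log, {"event": "decision", "action": "stop", "reason": "obstacle"})
append(log, {"event": "software_update", "version": "1.0.1"})
```

Calling `verify(log)` succeeds on the untouched log and fails if any earlier payload is edited afterwards, which is the "no silent patch" property in miniature.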

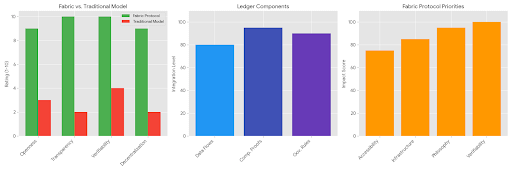

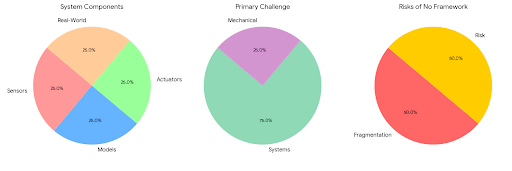

And I keep coming back to that word: coordination. Because general-purpose robotics isn’t just a mechanical challenge. It’s a systems challenge. Sensors feed data into models. Models output decisions. Decisions trigger actuators. Actuators interact with the messy unpredictability of the real world. Now multiply that across thousands or millions of machines, each operating in slightly different contexts. Without a shared framework, you get fragmentation. Without accountability, you get risk.

But here’s where it gets uncomfortable. Public ledgers and verifiable systems aren’t lightweight. They introduce friction. Robotics often demands real-time responsiveness. Milliseconds matter. Cryptographic proofs and distributed consensus can’t always keep up with physical immediacy. So Fabric has to navigate a tension that feels almost paradoxical: how do you preserve speed while embedding verification? How do you decentralize coordination without paralyzing the machine?

Maybe the answer lies in layered architecture. Immediate decisions happen locally, close to the hardware, where latency is minimal. Proofs and records anchor asynchronously to the ledger. Governance rules update in structured cycles. That sounds reasonable. But engineering at that scale is brutal. Hardware constraints don’t politely step aside for elegant protocol design. Processing power, battery limits, network reliability: these are hard boundaries.
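Here is a rough sketch of that layering, under heavy assumptions: the fast path decides locally and only drops a record into a queue, while a background worker batches records and "anchors" one digest per batch. The `anchored` list is a stand-in for a ledger, and `decide`, `anchor_worker`, the 0.5 m threshold, and the batch size are all hypothetical choices for illustration, not anything Fabric specifies.

```python
import hashlib
import json
import queue
import threading

records = queue.Queue()  # hand-off between the fast path and the slow path
anchored = []            # stand-in for the public ledger (assumption)

def digest(batch: list) -> str:
    """One hash that commits to a whole batch of decision records."""
    return hashlib.sha256(json.dumps(batch, sort_keys=True).encode()).hexdigest()

def decide(distance_m: float) -> str:
    """Fast path: runs locally and never waits on the network."""
    action = "stop" if distance_m < 0.5 else "proceed"
    records.put({"distance_m": distance_m, "action": action})
    return action

def anchor_worker(batch_size: int) -> None:
    """Slow path: batch records and anchor one digest per batch."""
    batch = []
    while True:
        item = records.get()
        if item is None:  # sentinel: flush what is left, then exit
            break
        batch.append(item)
        if len(batch) == batch_size:
            anchored.append(digest(batch))
            batch = []
    if batch:
        anchored.append(digest(batch))

worker = threading.Thread(target=anchor_worker, args=(2,))
worker.start()
actions = [decide(d) for d in (2.0, 0.3, 1.1)]  # decisions return immediately
records.put(None)
worker.join()
```

The point of the split is that `decide` costs a queue insert, not a consensus round. The trade-off is that records are only provable after the fact, once their batch has been anchored.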

Still, the alternative model feels brittle. Centralized robotics ecosystems concentrate authority. A single entity controls updates, data aggregation, and evolution pathways. Efficient, yes. Profitable, often. But fragile in a way we rarely admit. One security breach. One unethical policy shift. One regulatory clash. Suddenly, entire fleets of machines operate under questionable constraints. The larger the deployment, the greater the systemic risk.

Fabric Protocol, supported by a non-profit foundation rather than a purely profit-driven corporate structure, tries to anchor itself in longer time horizons. That doesn’t make it immune to politics or internal conflict. Foundations are human institutions, after all. They require funding, transparency, and trust. But there’s something fundamentally different about building robotic infrastructure as public goods rather than proprietary stacks.

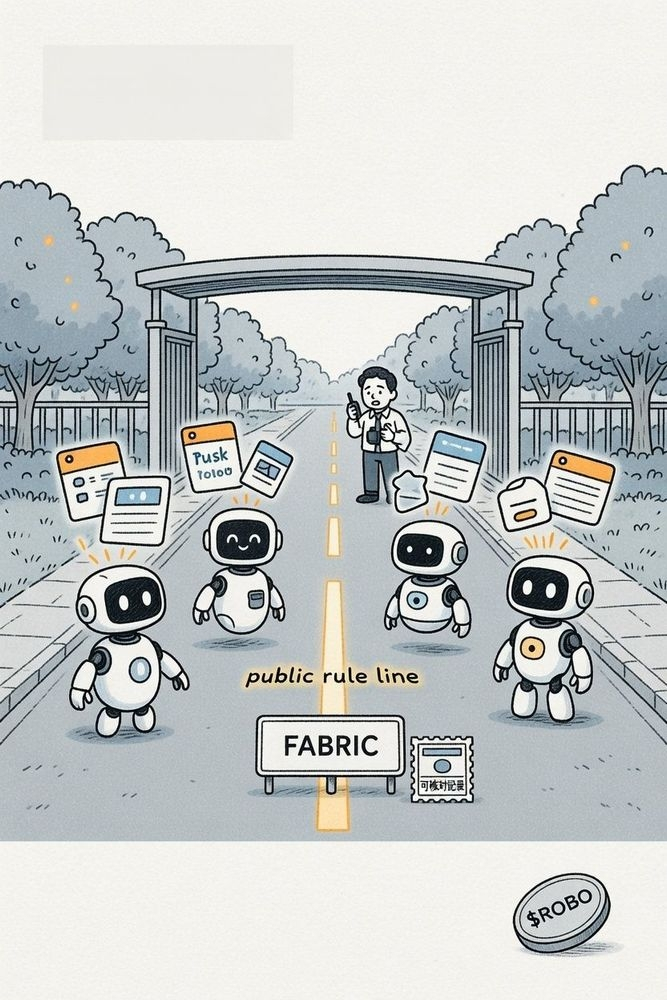

And then there’s governance. Collaborative evolution sounds beautiful until you ask who actually gets a say. Developers? Hardware manufacturers? Operators? End users? Token holders, if economic mechanisms are involved? Governance in decentralized networks has a history of idealism colliding with reality. Voter apathy. Power concentration. Coordination fatigue. Fabric will face those same pressures. There’s no magical escape hatch from human dynamics.

Yet I can’t shake the feeling that ignoring governance entirely would be worse. If robots are going to operate in shared spaces, the rules shaping their behavior should not be invisible. They should be inspectable. Debatable. Upgradable in a transparent way. That’s messy. Democracy is messy. But opaque control structures are riskier in the long run.

The modular infrastructure Fabric promotes also intrigues me. Instead of monolithic robotic systems that can’t interoperate, imagine composable components—vision modules, navigation agents, manipulation frameworks—that plug into a shared verification layer. Improvements in one domain don’t remain siloed. They propagate. Carefully. Governed. Verified. That’s powerful. It accelerates evolution without surrendering oversight.

But evolution itself is tricky. General-purpose robots must adapt to new data, new environments, new regulations. Fabric’s networked approach allows that adaptation to be coordinated rather than chaotic. And yet, every upgrade introduces new attack surfaces, new failure modes. Continuous evolution is a double-edged sword. Move too slowly, and the system stagnates. Move too fast, and you outrun your safety guarantees.

Economics complicate everything further. Open networks don’t sustain themselves on goodwill alone. Incentives must align. Developers need reasons to build modules. Validators need reasons to secure computation. Operators need economic justification for integrating with the protocol. Designing those incentive layers without distorting priorities is delicate work. Over-reward growth, and safety corners get cut. Over-penalize risk, and innovation suffocates.

And maybe that’s the central tension Fabric embodies: ambition versus restraint. The ambition to coordinate a global ecosystem of general-purpose robots. The restraint to embed verification and governance deeply enough that autonomy doesn’t spiral out of control. It’s a high-wire act.

Sometimes I wonder if society is even ready for this conversation. We’re still arguing about data privacy in social media platforms, and here we are contemplating fleets of intelligent machines embedded in daily life. The infrastructure choices we make now will quietly shape decades of interaction between humans and machines. Once deployed at scale, these systems are hard to unwind.

So Fabric Protocol feels less like a product and more like a preemptive architecture decision. Instead of letting robotics evolve purely under corporate silos and retrofitting accountability later, it attempts to bake accountability into the foundation. That’s bold. Risky. Possibly necessary.

Will it succeed? I don’t know. Adoption is unpredictable. Engineering hurdles are real. Governance experiments can fracture communities. But the premise feels directionally right: robots should be verifiable participants in an open, collectively governed network rather than opaque extensions of private power.

And maybe that’s enough for now. Not certainty. Just direction. Because if robots are going to share our physical world, the systems guiding them can’t be an afterthought. They have to be intentional. Transparent. Accountable. Even if that makes everything slower, harder, more complicated. Especially then.
