When I think about Fabric Protocol and the ROBO token, I try not to begin with market charts or price movements. Those things change quickly and often say more about sentiment than about systems.

What interests me more is the structural question Fabric is trying to explore.

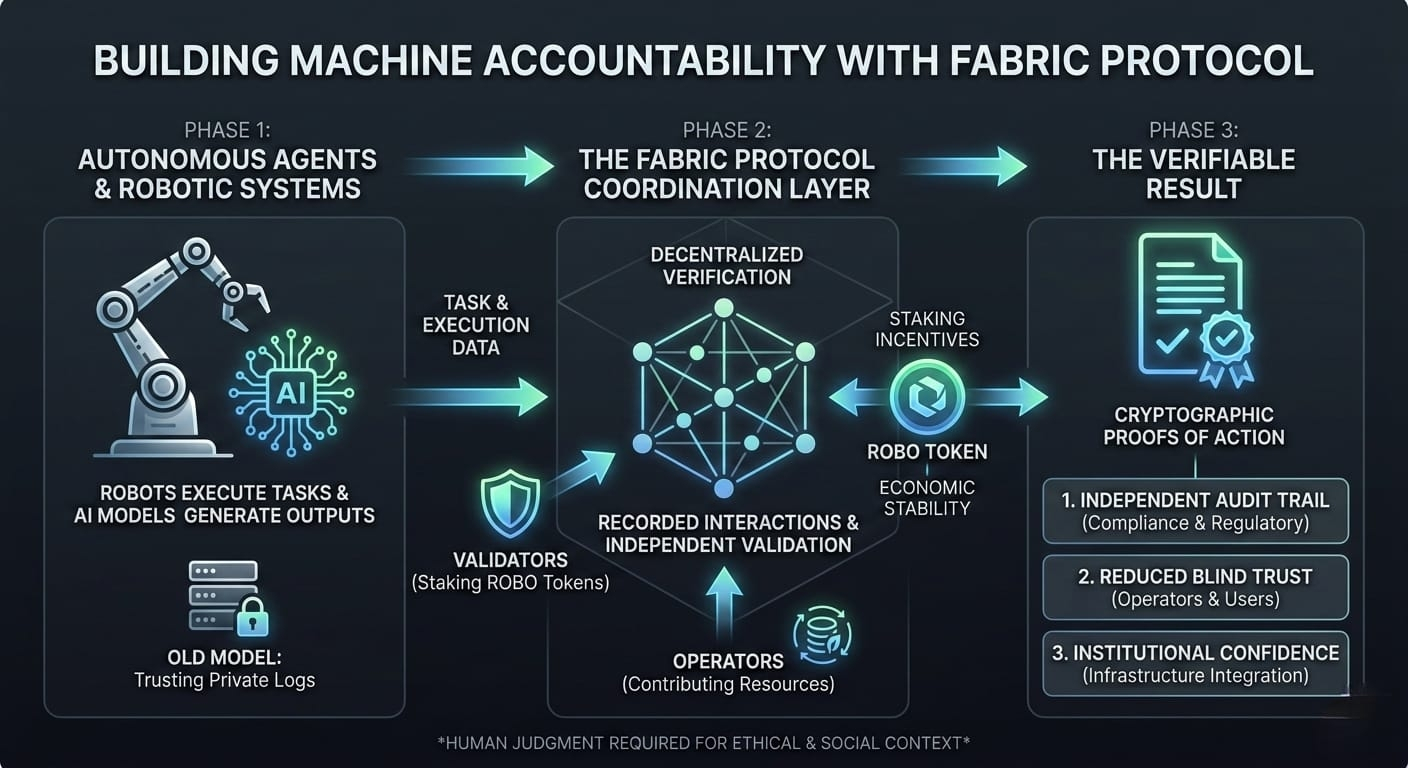

If machines are going to act autonomously in the future, who verifies what those machines actually did?

Right now, most AI and robotic systems operate inside private infrastructure. When a model produces an output or a robot executes a task, the evidence of that action usually lives in internal logs controlled by the company running the system. From the outside, users are expected to trust that the system behaved correctly.

This arrangement works as long as trust in operators remains high.

But as artificial intelligence systems begin performing more complex tasks—analyzing data, coordinating services, executing automated decisions—the gap between trust and verification becomes harder to ignore.

Fabric Protocol approaches this gap with a simple idea: machine actions should be verifiable, not just assumed.

The protocol proposes a coordination layer where AI agents and robotic systems interact through recorded tasks that can be validated through a decentralized network. Instead of relying entirely on internal records, the interaction itself becomes something that can be independently verified.

The ROBO token supports this mechanism by introducing economic participation into the process. Validators and operators commit resources to verifying actions within the system, and the token's incentives are meant to reward honest validation while discouraging manipulation.
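To make the idea of a recorded task that can be independently verified more concrete, here is a minimal Python sketch under stated assumptions. It does not reflect Fabric's actual data format or consensus rules. The `TaskRecord` fields, the SHA-256 digest over canonical JSON, and the 2/3 quorum in `VerificationRound` are all hypothetical choices made for illustration. Each validator recomputes the digest from its own copy of the record, and the claim is accepted only if enough of them agree.

```python
import hashlib
import json
from dataclasses import dataclass, field
from fractions import Fraction


@dataclass
class TaskRecord:
    """Hypothetical record of one machine action; field names are illustrative."""
    agent_id: str
    task: str
    params: dict
    result: str

    def digest(self) -> str:
        # Canonical JSON (sorted keys, fixed separators) so every validator
        # hashes byte-identical input for the same logical record.
        payload = json.dumps(
            {"agent_id": self.agent_id, "task": self.task,
             "params": self.params, "result": self.result},
            sort_keys=True, separators=(",", ":"),
        )
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()


@dataclass
class VerificationRound:
    """Collects independent validator checks of one claimed digest."""
    claimed_digest: str
    quorum: Fraction = Fraction(2, 3)  # assumed threshold, not a Fabric parameter
    votes: dict = field(default_factory=dict)

    def submit(self, validator_id: str, observed: TaskRecord) -> None:
        # Each validator recomputes the digest from its own copy of the record.
        self.votes[validator_id] = observed.digest() == self.claimed_digest

    def accepted(self) -> bool:
        if not self.votes:
            return False
        approvals = sum(self.votes.values())
        return Fraction(approvals, len(self.votes)) >= self.quorum


record = TaskRecord("robot-7", "move_pallet", {"from": "A1", "to": "B4"}, "done")
round_ = VerificationRound(claimed_digest=record.digest())
round_.submit("v1", record)
round_.submit("v2", record)
# v3 holds an altered copy, so its recomputed digest does not match.
round_.submit("v3", TaskRecord("robot-7", "move_pallet", {"from": "A1", "to": "C9"}, "done"))
print(round_.accepted())  # True: 2 of 3 match, meeting the assumed 2/3 quorum
```

Note what the check actually establishes: that a quorum of validators saw the same record the agent claimed. It says nothing about whether moving the pallet to B4 was the right decision, which is exactly the limit of verification raised later in this piece.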

In theory, this structure aligns with a broader goal of decentralized infrastructure: reducing the need for blind trust.

However, verification alone does not automatically solve every risk surrounding AI systems.

A cryptographic proof can confirm that a process happened in a certain way. It can verify that a task was executed according to defined parameters. What it cannot easily determine is whether the outcome of that process was ethically sound, contextually appropriate, or socially responsible.

Technology can verify actions.

Judgment still belongs to humans.

Another question that often arises in decentralized systems is validator concentration. If verification power becomes concentrated in a small group of participants, the system may remain decentralized on paper while in practice being controlled by a few.

For Fabric Protocol, maintaining broad participation among validators will likely be an important factor in preserving the credibility of its verification model.
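One common way to put a number on validator concentration is the Nakamoto coefficient: the smallest number of validators whose combined stake crosses a control threshold. The sketch below assumes stake-weighted validation and a 1/3 threshold (typical for BFT-style finality). Neither is confirmed as Fabric's design, and both stake distributions are invented for illustration.

```python
from fractions import Fraction


def nakamoto_coefficient(stakes: dict[str, int],
                         threshold: Fraction = Fraction(1, 3)) -> int:
    """Smallest number of validators whose combined stake exceeds `threshold`."""
    total = sum(stakes.values())
    if total <= 0:
        raise ValueError("total stake must be positive")
    running = 0
    # Taking the largest holders first gives the minimum possible count.
    for count, stake in enumerate(sorted(stakes.values(), reverse=True), start=1):
        running += stake
        if Fraction(running, total) > threshold:
            return count
    return len(stakes)  # only reached if threshold >= 1


# Invented distributions with the same total stake (100).
concentrated = {"a": 40, "b": 30, "c": 10, "d": 10, "e": 10}
spread = {f"v{i}": 10 for i in range(10)}
print(nakamoto_coefficient(concentrated))  # 1: one validator already holds 40%
print(nakamoto_coefficient(spread))        # 4: 30% is not enough, 40% is
```

Both networks list several validators, but in the first a single participant could stall a BFT-style network on its own. That is the gap between technical and practical decentralization.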

Economic sustainability is another layer of the conversation.

Networks that rely on token incentives must balance participation rewards with long-term economic stability. If incentives become too weak, participants may not remain engaged. If they become too aggressive, token inflation can outpace real network usage.

The long-term strength of ROBO will depend not only on market interest but also on whether real activity within the Fabric ecosystem creates consistent demand for the token’s role in verification and coordination.
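The balance between participation rewards and real usage can be sketched with simple per-epoch arithmetic. Everything here is hypothetical: the `EpochEconomics` class, the emission rate, the fee per task, and the assumption that validators are paid from both newly minted rewards and usage fees. The ratio compares what real activity pays in against what is minted.

```python
from dataclasses import dataclass


@dataclass
class EpochEconomics:
    """Hypothetical per-epoch token flows; none of these are published ROBO figures."""
    circulating_supply: float
    emission_rate: float   # newly minted rewards per epoch, as a fraction of supply
    tasks_verified: int    # verification requests from real network activity
    fee_per_task: float    # tokens paid per verification request

    def rewards_issued(self) -> float:
        # Validator income funded by minting new tokens (dilution).
        return self.circulating_supply * self.emission_rate

    def fee_revenue(self) -> float:
        # Validator income funded by users actually paying for verification.
        return self.tasks_verified * self.fee_per_task

    def usage_to_issuance(self) -> float:
        """Below 1.0, new issuance outweighs what real usage pays in."""
        rewards = self.rewards_issued()
        if rewards == 0:
            return float("inf")
        return self.fee_revenue() / rewards


early = EpochEconomics(circulating_supply=1_000_000_000, emission_rate=0.001,
                       tasks_verified=200_000, fee_per_task=0.5)
mature = EpochEconomics(circulating_supply=1_000_000_000, emission_rate=0.001,
                        tasks_verified=4_000_000, fee_per_task=0.5)
print(round(early.usage_to_issuance(), 3))   # 0.1
print(round(mature.usage_to_issuance(), 3))  # 2.0
```

A ratio well below 1.0 means most validator income comes from dilution rather than from demand for verification, which is the situation where inflation outpaces real network usage.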

There is also a regulatory dimension that cannot be ignored.

Governments and institutions around the world are beginning to develop frameworks for AI accountability. Systems that claim to verify AI activity may eventually need to demonstrate that their records can support auditing, compliance, and oversight requirements.

For Fabric Protocol, the clarity of its audit trails and governance processes could become as important as its technical design.

Infrastructure projects are often evaluated by the elegance of their architecture, but their real test comes later.

The systems that succeed are usually the ones that remain open enough for participation, reliable enough for operators, and transparent enough for institutions to trust their records.

Fabric Protocol is attempting to place itself at the intersection of those three conditions.

The question is not simply whether decentralized verification is technically possible.

The deeper question is whether a network like Fabric can maintain the openness, validator diversity, and economic stability necessary to make machine accountability something that operators and institutions are willing to rely on.

That answer will not come from theory.

It will come from how the system behaves once real autonomous agents begin interacting through it—and whether participants trust the verification layer enough to treat it as part of the infrastructure itself.