@MidnightNetwork #night $NIGHT

I remember the exact reply — the one I would have scrolled past if I were in a hurry. It was a short, almost apologetic line under a thread about a new privacy-aware wallet: “I’ll share a bit later — need to be careful what I post.” No fanfare, no grand claims, just a tiny pause in public sharing. The thread kept going; memes, a token-price joke, someone asking for a tutorial. A day later the same person asked a different kind of question in a different place: not “how do I get into this?” but “how would you prove this without showing the data?” The phrasing was clinical, almost architectural.

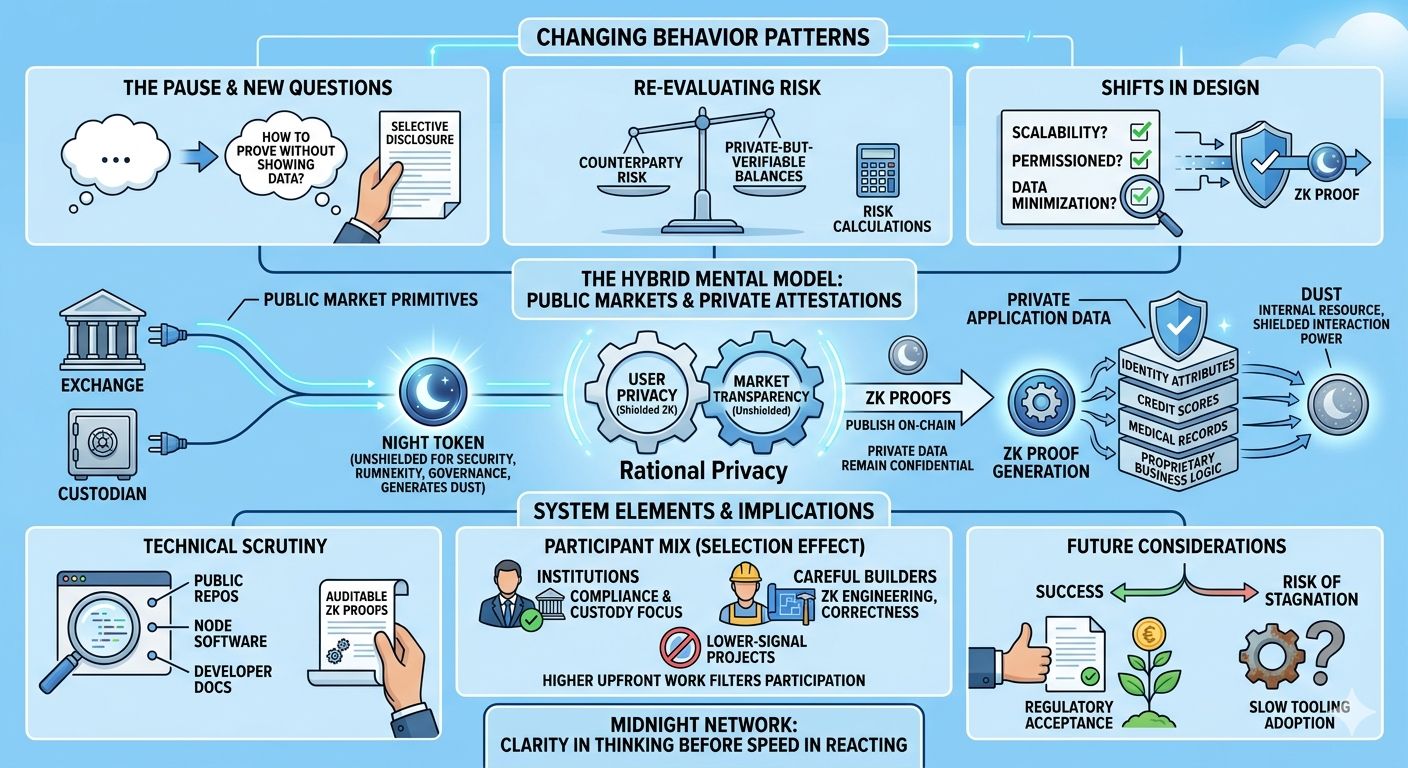

I’m not starting there to dramatize it. I’m starting there because, lately, the small hesitation and the slightly different grammar of questions have been the clearest sign that something is shifting — not in a protocol spec or a headline, but in how people orient decisions around privacy and utility. Over weeks I watched the same pattern crop up in different corners: builders asking about “selective disclosure”; traders wondering how counterparty risk changes when counterparty balances are private-but-verifiable; compliance people asking whether proofs could replace data exchanges they used to demand. These are behavioural signals, not product specs. They matter because behaviour is what ultimately drives whether a system lives or dies.

What people are circling toward — often with the kind of half-formed curiosity you see before real conviction — is a class of designs that try to make privacy feel like a lever you can use, rather than an immovable wall. That design language is what the Midnight team has been calling “rational privacy”: building tools so you can prove things without revealing underlying sensitive information. The project’s documentation and public materials make that explicit: the network is engineered so apps can verify correctness without exposing private data, using zero-knowledge proofs as the core primitive.
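To make "prove things without revealing the underlying information" concrete, here is a minimal sketch of a classic zero-knowledge building block: a Schnorr proof of knowledge, made non-interactive with the Fiat-Shamir heuristic. This is not Midnight's proof system or API. The group parameters are deliberately tiny toy values I picked for readability, and the function names are hypothetical.

```python
import hashlib
import secrets

# Toy group parameters (assumption, far too small for real use):
# P is a safe prime, Q = (P - 1) // 2 is prime, G generates the subgroup of order Q.
P, Q, G = 23, 11, 2


def challenge(y: int, t: int) -> int:
    """Fiat-Shamir challenge: hash the public values down to an exponent."""
    digest = hashlib.sha256(f"{G}|{P}|{y}|{t}".encode()).digest()
    return int.from_bytes(digest, "big") % Q


def prove(x: int) -> tuple[int, tuple[int, int]]:
    """Return the public value y = G^x and a proof (t, s) of knowing x."""
    y = pow(G, x, P)
    r = secrets.randbelow(Q)      # one-time random nonce
    t = pow(G, r, P)              # commitment to the nonce
    c = challenge(y, t)
    s = (r + c * x) % Q           # response; the nonce r masks x
    return y, (t, s)


def verify(y: int, proof: tuple[int, int]) -> bool:
    """Check G^s == t * y^c (mod P) without ever seeing x."""
    t, s = proof
    c = challenge(y, t)
    return pow(G, s, P) == (t * pow(y, c, P)) % P


secret_x = 7                      # stays with the prover
public_y, proof = prove(secret_x)
print(verify(public_y, proof))
```

The verifier only ever handles `public_y` and the pair `(t, s)`. The secret is checked, never shown, which is the same shape of interaction the "rational privacy" language describes, at a much smaller scale.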

Saying the idea out loud makes it sound neat and tidy. Seeing it filter through people’s questions is messier, and far more interesting. Builders are changing their mental checklist: not only “does this scale?” or “is this permissioned?” but “what data do I have to give up to ship?” Traders and ops teams are folding privacy into routine risk calculations, a small change, but one that seems to alter the cadence of decisions. Instead of “launch now and iterate later,” there’s a longer pause to map what needs to be provable and what must remain private. The pause is not universal. It’s selective: institutions, compliance-focused teams, and some infrastructure outfits are lengthening their decision-making, while other actors are moving faster, willing to accept more exposure for immediate liquidity or reach.

A concrete signal arrived in public announcements and developer repositories. The project that carries this language, not for the first time but with renewed urgency, has been rolling out building blocks: public repos, developer docs, and a staged token distribution. The NIGHT token, described on the project’s token page, plays a specific and visible role: it’s intentionally “unshielded,” used for network security and governance, and it generates an internal resource called DUST that powers shielded interactions. That design choice is notable because it separates the public economic rails from the confidential data-handling layer. It feels like a deliberate nudge to keep some market primitives transparent while letting application data remain private.
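A toy model helps me keep the two layers apart in my head. The sketch below only captures the relationship described above: a public NIGHT balance generates DUST, and DUST is what gets spent on shielded interactions. The generation rate, the per-transaction cost, and every name in the code are hypothetical placeholders, not values or interfaces from the project.

```python
from dataclasses import dataclass

# Hypothetical parameters, chosen only for illustration.
DUST_PER_NIGHT_PER_BLOCK = 0.5
SHIELDED_TX_COST = 2.0


@dataclass
class Account:
    night: float          # public (unshielded) token balance
    dust: float = 0.0     # resource spent on shielded interactions

    def accrue(self, blocks: int) -> None:
        """Generate DUST in proportion to NIGHT held; NIGHT is not consumed."""
        self.dust += self.night * DUST_PER_NIGHT_PER_BLOCK * blocks

    def shielded_tx(self) -> bool:
        """Pay for one shielded interaction with DUST only."""
        if self.dust < SHIELDED_TX_COST:
            return False
        self.dust -= SHIELDED_TX_COST
        return True


acct = Account(night=4.0)
acct.accrue(blocks=2)             # 4 * 0.5 * 2 = 4.0 DUST
results = [acct.shielded_tx() for _ in range(3)]
print(results, acct.night, acct.dust)   # [True, True, False] 4.0 0.0
```

The point of the model is the invariant: the NIGHT balance never moves when private activity happens, so the market-facing asset stays legible while usage is metered by a separate resource.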

There’s a behavioural logic behind that nudge. If the native token is transparent, exchanges, custodians, and market-makers can plug into familiar mechanics without having to invent new models for blind liquidity. Meanwhile, developers can build flows where user data — identity attributes, credit scores, medical attestations, proprietary business logic — never leaves the privacy layer except as a proof. The result is a hybrid mental model: public markets overlaid on private attestations. For some participants that reduces friction; for others it raises an eyebrow. Who wants to be a market-maker on assets whose underlying economic drivers you cannot audit easily? Who wants to be the builder who must now design cryptographic proofs for every new on-chain conditional?

The early market signals were predictable and small. Announcements about mainnet readiness (including confirmations made onstage and in press) produced the usual uptick in attention: threads lit up, testnets saw new activity, and tooling repos received PRs. But the quieter, second-order reactions are what felt different. Custodians and infrastructure providers started mentioning custody readiness and integration planning in ways that sound more like operational checklisting than marketing rhetoric. Partnerships that emphasize custody and compliance cropped up alongside developer grants and hackathons. These are not contradictory; they're complementary — one set of actors is trying to make the system usable for institutions, the other set is trying to lower the entry cost for builders. The two together shape a different ecosystem than a purely retail-privacy play.

It’s tempting to flatten these moves into a single narrative: privacy for users, permissionless for markets. But reality is knotty. I keep circling back to a small tension I noticed in conversations: privacy that is programmable requires more upfront work — constraint modelling, proof engineering, and careful UX. That extra work filters out certain types of participation. Junior builders and speculative projects with minimal budgets may find the cognitive and resource tax too high. Institutions, conversely, may like the trade-off because the model maps more closely to compliance needs: proofs instead of data dumps, attestations instead of full access. So the system seems to invite a different mix of participants: those who can pay for correctness, and those who prefer lightweight exposure.

That selection effect matters. Protocol design choices act like invisible bouncers. A protocol that requires ZK proofs for certain operations subtly biases the participant set toward teams that value long-term correctness and can either build or pay for the primitives. That’s not inherently good or bad; it just shapes culture. I’ve seen communities where a small set of careful builders produce high-signal applications and, over time, attract serious partners. I’ve also seen ecosystems ossify when the entry cost prevents new ideas from percolating. The open question is whether the incentives and tooling around this network are lowering the friction quickly enough to reach the former outcome and avoid the exclusionary risk of the latter.

Technically, the project has been deliberate about openness: repositories, node software, and developer docs have been made public as part of a staged rollout. The public code and documentation don’t just reassure builders; they create behavioural scaffolding. When you can read the ledger rules, view node RPCs, and inspect example contracts, the mental overhead of adopting a new architecture drops. That’s a long-term trust play: transparency in code combined with confidentiality in data. For many practitioners, that balance feels intuitively right, yet it also depends on whether the proofs are auditable and whether the tooling is ergonomic enough to integrate into existing pipelines.

I want to be careful here. There are uncertainties baked into every one of these observations. For example, the promise of “rational privacy” depends on how well the ZK primitives compose with real-world compliance demands. Proving “I’m KYC’d” in zero-knowledge is an elegant thought, but the real question is the governance and legal recognition of such proofs. Will regulators accept a proof as equivalent to a data exchange? Or will they insist on logs and records that defeat the privacy intent? The project’s public-facing materials suggest a conscious effort to accommodate compliance workflows, but the legal and institutional acceptance of these primitives will be a slow process, not a switch you can flip overnight.

Another wrinkle is timing. The behavioural shift I notice — the pause before sharing, the different grammar of questions — is happening in parallel with market cycles, tooling maturation, and an ongoing industry conversation about where privacy belongs in Web3. Timing matters because early adopters define the norms. If institutions push in early with custody and market integrations, they can normalize certain operational practices. If builders tepidly adopt the model due to engineering friction, that will push norms in a different direction. These aren’t determinative outcomes; they’re tendencies. The neat thing about watching this unfold in real time is that small choices compound. A custody integration here, a developer grant there, a clean SDK shipped — over months they rearrange the topology of who builds what and why.

So far, the ecosystem feels like an experiment in calibration: maintaining enough transparency in economic rails to keep markets functioning, while creating privacy lanes for sensitive application logic. That hybrid is the behavioural story I keep returning to. It explains the patterns I’ve noticed: the altered conversation tone, the kinds of actors that are showing up, and the careful operational language in partnership announcements. It doesn’t, however, answer whether this will become the dominant model for privacy-aware apps, or whether it will remain a viable niche for compliance-oriented projects.

As an observer who has spent too many late nights parsing commit logs and rereading documentation, I find the slow cadence of adoption comforting. Systems that require careful design tend to attract careful participants. That conservatism reduces some forms of risk but introduces others — chiefly, the risk of stagnation if tooling and incentives don’t broaden. The practical takeaway is modest: if you’re building in this space, thinking like an architect matters. Map the proofs you need before you write the user flow. If you’re building for institutions, tie the proofs to attestation models that can be surfaced to compliance teams without leaking secrets. If you’re a market participant, consider how public rails and private attestations shift counterparty models and settlement assumptions.
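One way to "map the proofs you need" before touching the user flow is to write the inventory down as data and check it mechanically. The sketch below is a planning aid I made up for illustration, not part of any Midnight SDK; the field names and the two example requirements are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ProofRequirement:
    claim: str                      # statement the verifier must be convinced of
    private_inputs: frozenset[str]  # data that must stay with the user
    public_outputs: frozenset[str]  # what the verifier actually sees


def leaked_fields(req: ProofRequirement) -> frozenset[str]:
    """Fields listed as both a private input and a public output."""
    return req.private_inputs & req.public_outputs


requirements = [
    ProofRequirement(
        "user passed KYC",
        frozenset({"passport_number", "name"}),
        frozenset({"kyc_passed"}),
    ),
    ProofRequirement(
        "credit score above threshold",
        frozenset({"credit_score"}),
        frozenset({"credit_score", "above_threshold"}),  # deliberate mistake
    ),
]

for req in requirements:
    leaks = leaked_fields(req)
    print(req.claim, "->", "OK" if not leaks else f"leaks {sorted(leaks)}")
```

The second entry is flagged because the raw score appears on both sides. Catching that at the design stage is cheaper than discovering it after the flow has shipped, and the same table is something a compliance team can read without seeing any user data.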

I don’t have a neat conclusion — and I don’t think the system calls for one yet. What matters, practically, is learning to notice the small behavioural cues that precede larger shifts: the pauses, the new question formats, the institutional checklists. Those are the data points that tell you something is changing, often before the headlines catch up.

In the meantime, the project’s trajectory is worth watching because it’s one of the clearer attempts to operationalize a middle path: privacy without isolation, transparency without exposure. Whether it succeeds will depend on legal interpretations, developer ergonomics, and whether the hybrid incentives can attract a diverse set of builders rather than a narrow cohort. For long-term participants, the lesson is familiar and quiet: clarity in thinking matters more than speed in reacting. Small shifts in how people talk and how teams make decisions often presage the meaningful architecture of the next wave — and noticing those shifts, slowly and without theatrical certainty, is the most useful posture you can cultivate.