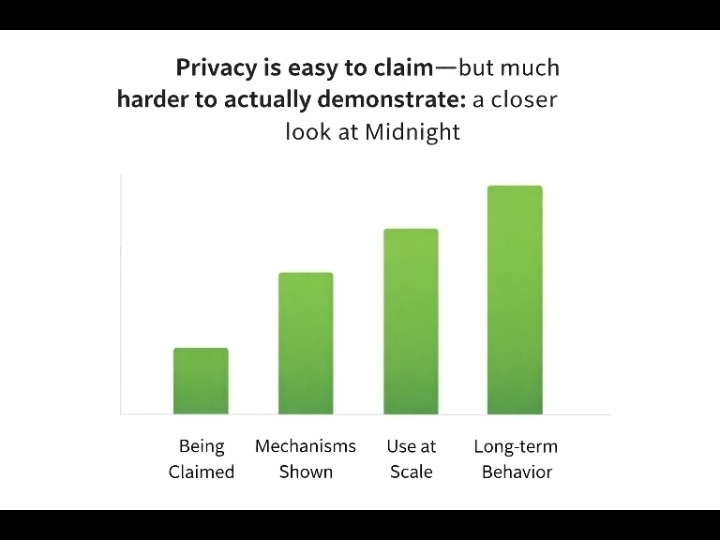

Lately I have been noticing how often privacy is treated as a headline feature rather than something that has to be continuously proven. In a lot of emerging decentralized infrastructure, privacy is presented almost as a checkbox: something implied by design choices rather than demonstrated through real-world behavior.

When I look closely at these systems, what is usually emphasized publicly is the mechanism: zero-knowledge proofs, encryption layers, selective disclosure. These are important, no doubt. What feels under-discussed is how those mechanisms actually hold up once systems are used at scale: when users make mistakes, when governance changes, or when incentives start to pull in different directions.

I have been looking at projects like Midnight, and I find myself pausing on that gap between promise and proof. The idea of privacy in which users can reveal information when needed sounds practical and even necessary. It also introduces a layer of complexity that is not always obvious. Who defines the conditions under which data is revealed? How rigid or flexible are those rules over time? And, perhaps more importantly, who has the authority to change them?

I do not think these are flaws so much as they are tradeoffs. Absolute privacy can limit usability or compliance. Full transparency can erode user protection. Most real systems end up somewhere in between, and that middle ground is where things get harder to reason about. It is also where trust becomes less about cryptography alone and more about governance, incentives, and long-term alignment.

In my research I have seen that the systems which age well are not necessarily the ones with the strongest initial claims, but the ones that make their constraints visible early. Some approaches lean toward minimizing trust, pushing complexity into the math. Others accept that trust exists and try to structure it, making responsibilities explicit. Neither path is clean or complete.

What keeps standing out to me is how little attention is given to how these systems evolve after deployment. Privacy is not static. It changes as networks upgrade, as participants shift, and as pressures, regulatory or economic, start to shape decisions. A design that feels robust today might behave differently under those conditions.

So I have become a bit more cautious when I hear claims about privacy. Not skeptical, exactly, but more interested in how those claims are tested over time. For me the real signal is not whether a system can hide information, but whether it can do so consistently, predictably, and without quietly shifting control elsewhere.

In the end, privacy is not a feature you implement. It is a responsibility you maintain. The confidence people place in privacy does not come from what is promised upfront, but from how reliably it holds together when it actually matters.