I didn’t pay attention to SIGN at first. It didn’t feel urgent, and I’ve learned not to chase every new piece of infrastructure that appears with clean language and confident framing. Most of them sound reasonable in the beginning. Some even look necessary. But over time, the cracks usually show up in places no one highlighted early on.

So I let it sit in the background.

It wasn’t until later, almost by accident, that I started looking at it more closely. Not because of what it claims to do, but because of what it seems to be circling around. And that’s usually where things get more interesting—not in the features themselves, but in the problems they quietly point toward.

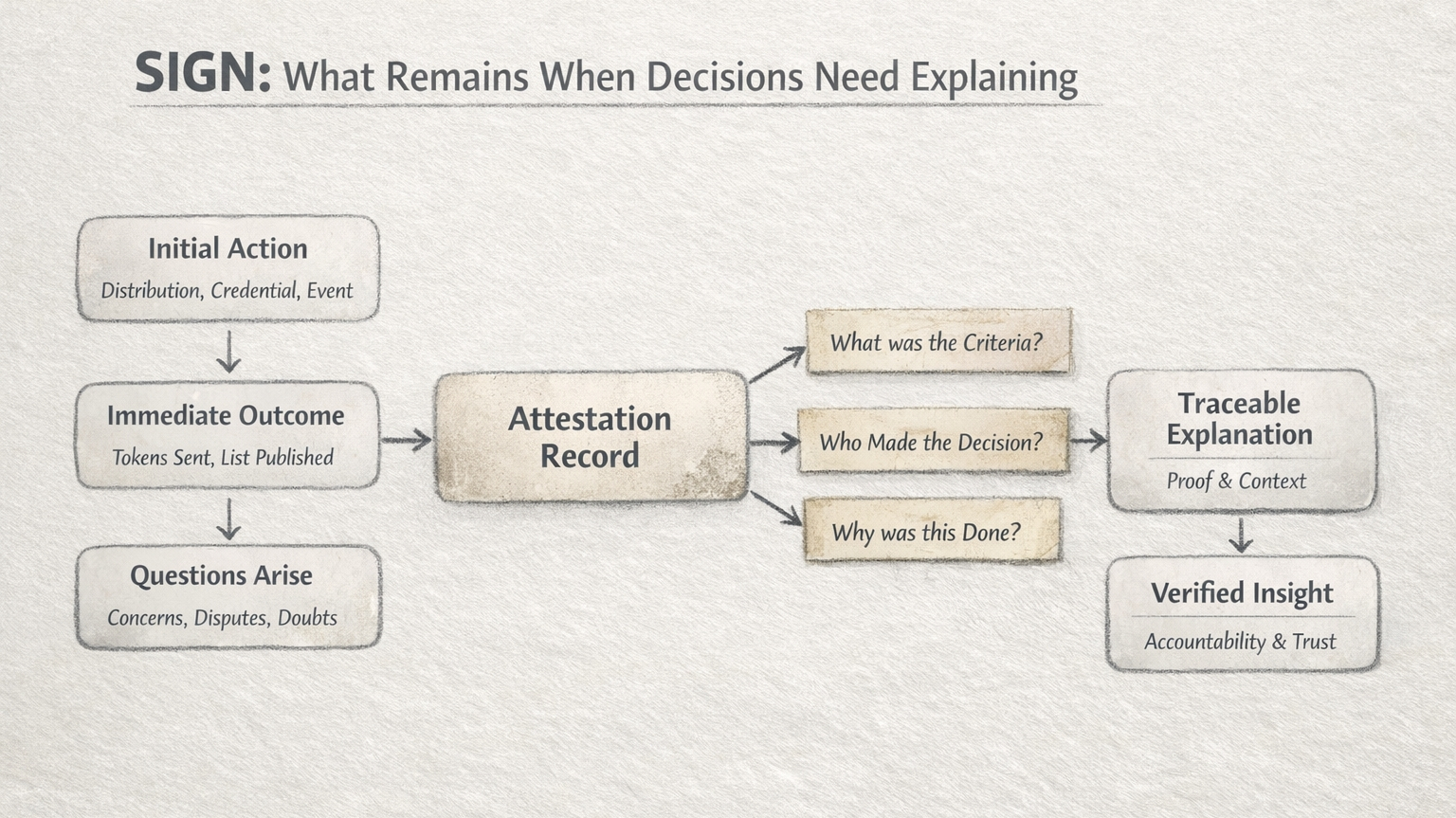

What stood out to me wasn’t token distribution or credentials on their own. Those are familiar ideas now. We’ve seen enough variations of them. What felt different, or at least worth pausing on, was the layer underneath—what happens when decisions need to be explained after they’ve already been made.

That’s a part of the system most people don’t think about until something goes wrong.

I’ve seen it happen more times than I can count. A distribution goes live, everything looks fine at a glance, and then questions start to surface. Someone didn’t receive what they expected. Someone else claims the criteria weren’t applied fairly. And suddenly, the conversation shifts. It’s no longer about what happened, but about whether it can be justified.

That’s where things usually fall apart.

Not because the system failed to execute, but because it can’t clearly explain itself. The reasoning isn’t visible. The decisions aren’t easy to trace. And in the absence of that, everything falls back on trust—trust that the people behind it made the right calls.

But trust like that doesn’t scale very well, especially when the stakes are real.

What SIGN seems to be doing, at least from where I’m standing, is paying attention to that moment. Not the clean execution, but the messy aftermath. It feels less focused on the act of distributing and more focused on leaving behind something that can be revisited later—a kind of memory that doesn’t rely on people remembering things the same way.

I keep thinking about that.

Because storing information isn’t the hard part anymore. We’ve solved that in a dozen different ways. The harder part is making sure that information still makes sense when you come back to it. That it carries enough context to answer questions, not just record events.

SIGN leans into attestations to do that. Not as a flashy concept, but as a way to say, “this is what was believed to be true at this point in time.” It’s a small shift, but it changes how you look at things. Instead of assuming outcomes speak for themselves, it tries to preserve the reasoning behind them.

At least, that’s how it appears.
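
One way to picture that idea is a small record that keeps the claim, the moment it was believed, and the reasoning together. The sketch below is purely illustrative: the `Attestation` class, its field names, the sample values, and the `digest` helper are all assumptions made up for this example, not SIGN’s actual schema or API.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, replace
from datetime import datetime, timezone


@dataclass(frozen=True)
class Attestation:
    """Hypothetical record: what an attester believed, when, and why."""

    subject: str    # who or what the claim is about
    claim: str      # the statement believed to be true
    attester: str   # who is vouching for the claim
    reasoning: str  # context that lets the claim be re-read later
    issued_at: str  # ISO 8601 time the belief was recorded

    def digest(self) -> str:
        # Hash a canonical JSON form so any later change to the record,
        # including its reasoning, produces a different fingerprint.
        payload = json.dumps(asdict(self), sort_keys=True).encode("utf-8")
        return hashlib.sha256(payload).hexdigest()


# Illustrative values only; none of these come from a real distribution.
record = Attestation(
    subject="wallet-123",
    claim="eligible for distribution",
    attester="review-committee",
    reasoning="met the activity threshold in the published criteria",
    issued_at=datetime(2024, 1, 1, tzinfo=timezone.utc).isoformat(),
)
fingerprint = record.digest()

# Rewriting the reasoning after the fact yields a different fingerprint.
revised = replace(record, reasoning="added manually after a complaint")
```

The hash only shows that a record hasn’t changed. It is the stored `reasoning` that lets someone come back later and ask whether the decision made sense.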

And I’m careful with that assumption, because this is also where things can get complicated.

Who decides what an attestation should include?

Who determines whether it’s enough?

And when two different records don’t align, what then?

These aren’t edge cases. They’re the situations that define whether a system actually holds up. It’s easy to build for agreement. It’s much harder to build for disagreement, where multiple perspectives exist and none of them can be dismissed outright.

That’s where I find myself returning again and again.

Not to what SIGN promises, but to how it might behave under pressure. Because that’s the part you can’t simulate fully. You only see it when something breaks, or when people start questioning outcomes in ways the system didn’t expect.

There’s a quiet acknowledgment within SIGN that verification isn’t just technical. It carries human weight—context, judgment, interpretation. And those things don’t fit neatly into rigid structures, no matter how well designed they are.

I don’t think SIGN ignores that. If anything, it seems to accept it, even if only partially.

And that’s what makes me stay with it a little longer than I normally would.

Not because I think it solves the problem, but because it doesn’t pretend the problem is simple. It feels like an attempt to give shape to something that usually gets overlooked until it becomes unavoidable.

Still, I don’t see it as settled.

There are too many points where things could drift, too many assumptions that haven’t been fully tested yet. And experience has taught me that those are the places where systems either mature or quietly fall apart.

So I keep watching it in that way—without urgency, without dismissal.

Just trying to understand what it becomes when it’s no longer being explained and starts being used in situations where explanations are no longer optional.

I’m not sure where it lands yet.

For now, it just sits there in my mind, not fully formed, but not easy to ignore either.