I want to share something with you. Last night, at maybe 2:17 AM, just after the TokenTable snapshot window quietly closed, I found myself still staring at a stream of addresses resolving into final states. There was no announcement, no surge of excitement, just a quiet finality. Allocations had settled, claims had been verified, and somewhere beneath the surface, attestations had already been written into a layer that doesn’t negotiate with time. That moment did not feel like watching a product in action. It felt like observing a system that is trying to remove doubt itself from the equation.

What stayed with me was not the interface but the evidence underneath it. I noticed contract traces referencing something like 0x8f..., likely tied to an attestation registry, alongside a brief but noticeable spike in gas, hovering between 38 and 52 gwei as claim validations peaked. There were clusters of wallet interactions that didn’t resemble speculative behavior at all. They looked procedural, almost administrative, like records being finalized rather than value being chased. The entire flow felt less like crypto and more like infrastructure quietly doing its job, and that subtle shift in tone is what made it stand out.

I was still staring when, at one point during testing, I initiated a simple attestation. Nothing complex, just a straightforward schema interaction. The transaction did not fail, but it did not move either. It sat there in a pending state longer than expected, long enough to make me pause. In that stillness I realized the nature of what was happening. This wasn’t a system optimized purely for speed or convenience. It was a system designed for permanence. When it finally confirmed, there was no sense of relief, just a quiet awareness that something had been recorded in a way that couldn’t be casually undone. That feeling is very different from what most digital interactions have conditioned us to expect.

The more I sat with it, the clearer it became that @SignOfficial is not built in isolated layers but in a loop where each part reinforces the other. The economic aspect doesn’t just distribute tokens. It transforms distribution into something provable, something that carries a narrative that cannot be rewritten later. TokenTable, in that sense, is less about efficiency and more about accountability. It removes the ambiguity that has long surrounded who receives what and under what conditions, and replaces it with a structure where those answers are no longer negotiable.

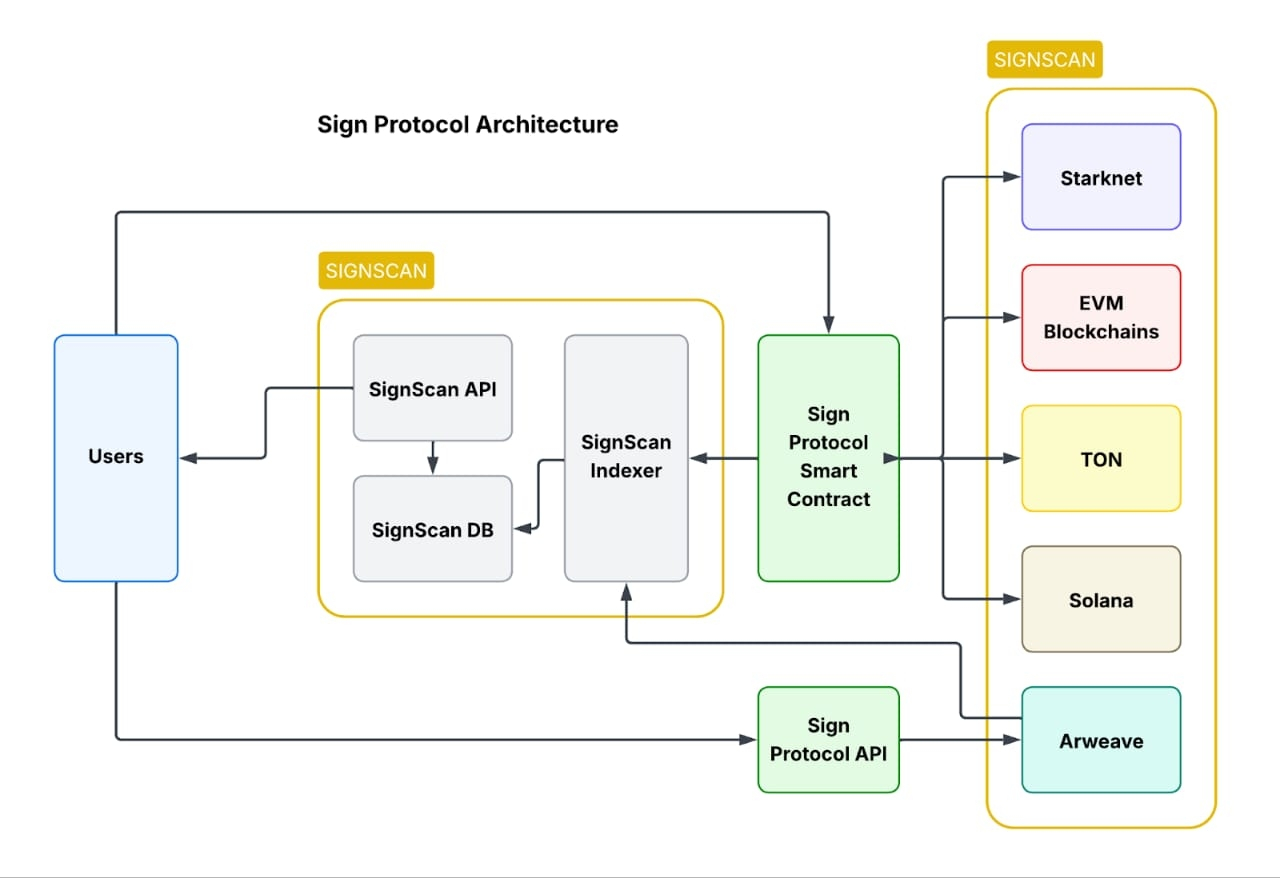

Later that night, as I tried to work out what comes next, the ambition felt even more complex. It’s not just about operating across multiple chains, but about maintaining consistency of truth between them. That’s where the real challenge emerges. Coordinating state across different environments, ensuring verification doesn’t lag or fragment, and managing throughput without creating bottlenecks is far from trivial. What I observed suggests that this is still an evolving process, not a solved problem. The system is being tested in real time, and it carries the tension of something that is both functional and unfinished.

Where things become more complicated, at least for me, is at the identity and governance level. Attestations are not neutral. They represent claims, validations, and decisions about what is considered true. And those decisions don’t emerge from nowhere. Someone defines the schemas, someone sets the standards, and over time those frameworks begin to shape how reality is recorded. The system may be transparent, but influence doesn’t disappear. It just becomes more structured and, in some ways, more subtle.

When I briefly compared this to networks like Fetch.ai or Bittensor, the difference became sharper. Those systems are focused on intelligence, on generating or coordinating knowledge. Sign Protocol is doing something almost opposite. It is focused on memory, on ensuring that once something is declared and verified, it remains intact. Intelligence can evolve and correct itself, but memory at this level doesn’t have that flexibility. It preserves everything with equal weight, whether it was perfect or flawed at the moment it was recorded.

The one part I keep returning to, and for me the most important, is that this kind of system doesn’t eliminate power, it redistributes it. Early participants, major attesters, and those who define the initial schemas will inevitably carry more influence in shaping what becomes accepted truth. Even in a transparent environment, interpretation still exists, and with it comes a new kind of asymmetry. There is also a deeper psychological layer that feels unresolved. We often say we want truth and transparency, but we are used to systems that allow for quiet corrections, for things to fade or be adjusted over time.

I keep thinking about how this translates to everyday life. Not in grand, abstract terms, but in something simple. A person signs a document online, maybe under pressure, maybe with incomplete understanding. Today, there are ways to revisit that moment, to amend or dispute it. In a system built on immutable attestations, that moment doesn’t soften with time. It stays exactly as it was, preserved in a way that doesn’t account for context or change.

So I find myself sitting with a question that feels less technical and more human. If Sign Protocol succeeds in making authenticity unquestionable, if it quietly becomes part of the infrastructure we rely on without even noticing it, what happens to the space we currently have for error, for revision, for being imperfect? Because the future it points toward isn’t just one where we can finally trust what we see. It’s one where that trust comes at the cost of never being able to look away from what has already been recorded.