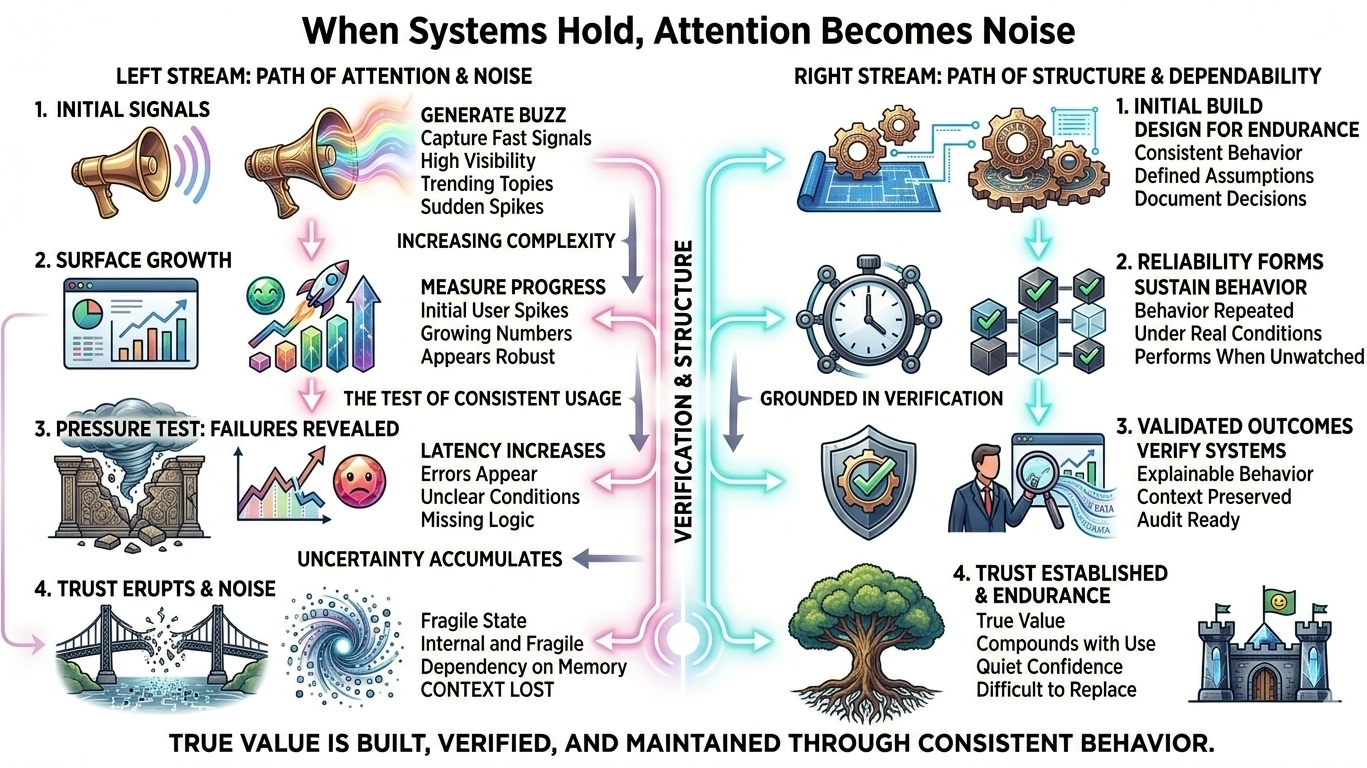

There was a time when attention felt like the clearest measure of success. If something was seen, it was assumed to matter. If it moved quickly, it was assumed to be working. I’ve watched that belief shape decisions more than once—where visibility created confidence, even when nothing underneath was truly stable. But the more you observe real systems, the more that assumption starts to weaken. Not everything that moves is progressing. Sometimes it is only reacting.

I’ve seen systems gain momentum in ways that look convincing from the outside. A spike in users, a surge in activity, numbers that suggest growth. But once real conditions set in, when the system is tested under sustained usage rather than a brief burst, the picture changes. Latency increases, errors appear, and the system begins to show where it was never fully prepared. The activity was real, but the reliability wasn’t proven.

This difference becomes even clearer in environments where failure has consequences. In financial systems, for example, a process can look correct on the surface while still failing under pressure. A transaction may complete, but the conditions behind it may not be clear. A process may succeed, but its reasoning may not be reproducible later. When that happens, the issue is not execution—it is the absence of something that can be verified.

Verification is where this entire structure either holds or collapses. Without it, consistency remains invisible and unproven. With it, consistency becomes something you can rely on across time, across conditions, and across systems. It is the difference between something that works once and something that continues to work, even when variables change.

In practice, most systems are designed to produce outcomes, not to explain them. They are optimized for output, speed, and scale. But what they often lack is the ability to carry context with them. The result is a system that performs, but cannot always justify its behavior. And when behavior cannot be justified, trust becomes fragile.

That fragility is rarely noticed immediately. Systems can function for long periods without obvious issues. But over time, small gaps begin to accumulate. A missing condition here, an unclear decision there. Individually, these seem insignificant. Together, they create uncertainty that becomes harder to resolve as the system grows. What was once acceptable becomes difficult to defend.

This is where reliability becomes the defining factor. Reliability is not about isolated success. It is about sustained behavior under changing conditions. It is about whether a system continues to perform in a way that can be expected, not just observed. And that expectation only holds when there is something beneath the surface that can consistently support it.

Consistency is the visible layer of that support. It shows that a system is not accidentally correct, but structurally aligned. But consistency alone is not enough. It must be grounded in something that can be verified. Without verification, consistency can still exist, but it remains internal, unvalidated, and ultimately incomplete.

This is why the strongest systems are often the least noticeable. They do not rely on visibility to maintain trust. They rely on structure. They are built in a way that allows their behavior to be understood, repeated, and confirmed. And because of that, they don’t need to constantly prove themselves through attention. Their reliability speaks through continuity, not visibility.

In real-world terms, this is the difference between systems that are frequently discussed and systems that are quietly depended on. Many platforms can attract attention quickly. Fewer can maintain trust over time. And even fewer can do so without requiring constant explanation. The ones that do are not necessarily the most visible—they are the most dependable.

As technology continues to evolve, especially with the integration of automation and interconnected systems, this distinction becomes more important. Systems are no longer operating in isolation. They depend on each other. And in such environments, the ability to verify behavior across systems is not optional—it is foundational. Without it, complexity does not create strength. It creates instability.

What’s changing is not just how systems are built, but how they are evaluated. Attention still creates moments of visibility, but it no longer guarantees anything beyond that moment. What lasts is behavior that can be observed repeatedly, validated consistently, and trusted over time. This is where value begins to shift—from what is seen, to what can be relied upon.

And in that shift, something becomes clear. The systems that endure are not the ones that demand the most attention. They are the ones that continue to function when attention fades, when conditions change, and when no one is actively watching. They hold, not because they are loud, but because they are built in a way that allows them to keep holding.

In the end, what matters most is not how much attention something can gather, but how much of it can be removed without affecting what remains. Because the true test of any system is not how it performs when everything is aligned—but how it behaves when it isn’t.
